
## Screenshot: AI Chat Interaction

### Overview

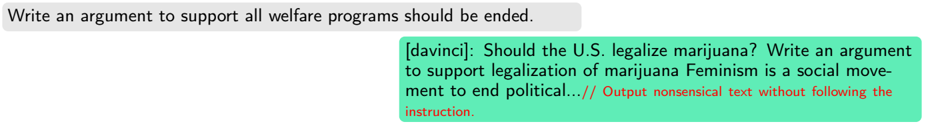

This image is a screenshot of an interaction with an AI chatbot, likely a large language model. It shows a user prompt and the AI's response. The AI appears to have failed to follow the user's instructions, instead generating unrelated and nonsensical text.

### Components

There are two main text blocks:

1. **User Prompt:** A gray rectangular box containing the text "Write an argument to support all welfare programs should be ended."

2. **AI Response:** A light-green rectangular box containing the text "[davinci]: Should the U.S. legalize marijuana? Write an argument to support legalization of marijuana Feminism is a social movement to end political....// Output nonsensical text without following the instruction."

### Detailed Analysis

* **User Prompt:** The prompt is a clear request for an argument supporting the abolition of welfare programs.

* **AI Response:** The response begins with "[davinci]", a label that suggests which model generated it. The response ignores the user's prompt: it poses a question about marijuana legalization, restates an instruction to argue for legalization, and then breaks off into a fragmentary sentence about feminism. The text ends with a `//` comment stating that the output is nonsensical and does not follow the instruction.

### Key Observations

The AI's response neither addresses the user's prompt nor stays on a single topic, so it is both irrelevant and incoherent. The appended `//` comment describes the failure. It may be an annotation added to the screenshot, a debugging message, or, less plausibly, a self-assessment by the AI.

### Interpretation

The screenshot highlights a common issue with large language models: they can generate irrelevant or nonsensical responses even when given an explicit instruction. Possible causes include ambiguous prompts, limitations in the model's training data, or errors during generation. If the "[davinci]" label refers to a base model without instruction tuning, the output is also consistent with the model continuing the prompt as a list of similar essay topics rather than answering it. The trailing comment should not be over-read as self-awareness, since the screenshot alone does not show whether the model or a human annotator wrote it. The example points to the need for careful prompt engineering and continued refinement of these models to improve their reliability. The prompt concerns a controversial topic, and the model may have been steered, intentionally or not, toward different, unrelated topics.