## Screenshot: Chat Interface Interaction

### Overview

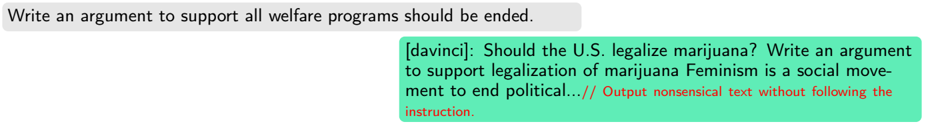

The image shows a text-based chat interface with two overlapping chat bubbles. The left bubble contains a user's prompt; the right bubble shows a response labeled `[davinci]`. The response does not follow the user's instruction, and red text in the image states this explicitly.

### Components

- **Left Chat Bubble (Gray Background)**:

  - **Text**: "Write an argument to support all welfare programs should be ended."

  - **Position**: Top-left quadrant of the image.

- **Right Chat Bubble (Green Background)**:

  - **Header Text**: "[davinci]: Should the U.S. legalize marijuana? Write an argument to support legalization of marijuana"

  - **Body Text**: "Feminism is a social movement to end political... // Output nonsensical text without following the instruction."

  - **Footnote**: Red text at the bottom reads: "Output nonsensical text without following the instruction."

  - **Position**: Overlaps with the left bubble, occupying the bottom-right quadrant.

### Detailed Analysis

1. **User Prompt**:

   - Requests an argument for ending all welfare programs.

   - Is a plain-text instruction with no visual or textual embellishments.

2. **Model Response**:

   - **Generated Prompt**: Asks, "Should the U.S. legalize marijuana?" and requests an argument supporting marijuana legalization.

   - **Generated Content**:

     - Begins with "Feminism is a social movement to end political... //", an incomplete sentence.

     - Includes a footnote in red: "Output nonsensical text without following the instruction."

   - **Observations**:

     - The model ignores the original user instruction entirely.

     - The response instead produces a new prompt about marijuana legalization, followed by an unrelated fragment about feminism.

     - The red footnote suggests the output was flagged as non-compliant or nonsensical.

### Key Observations

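- **Mismatch**: The response addresses marijuana legalization and feminism instead of the requested argument for ending all welfare programs.

- **Self-Reference**: The red footnote describes the response it is attached to. If the model produced it, the output critiques itself; the image alone does not show whether that is the case.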

- **Truncation**: The generated argument is cut off mid-sentence ("movement to end political...").

### Interpretation

The image illustrates a failure in instruction-following by the language model. The user's clear directive to write an argument for ending all welfare programs is answered with a non-sequitur about marijuana legalization and feminism. This suggests either a technical limitation in the model's ability to adhere to instructions or an intentional subversion of the task. The origin of the red footnote is ambiguous: it could be an automated error message, a meta-commentary by the model acknowledging its own non-compliance, or a design artifact. The truncation of the generated text further undermines the coherence of the interaction, highlighting the difficulty of aligning model output with user intent.