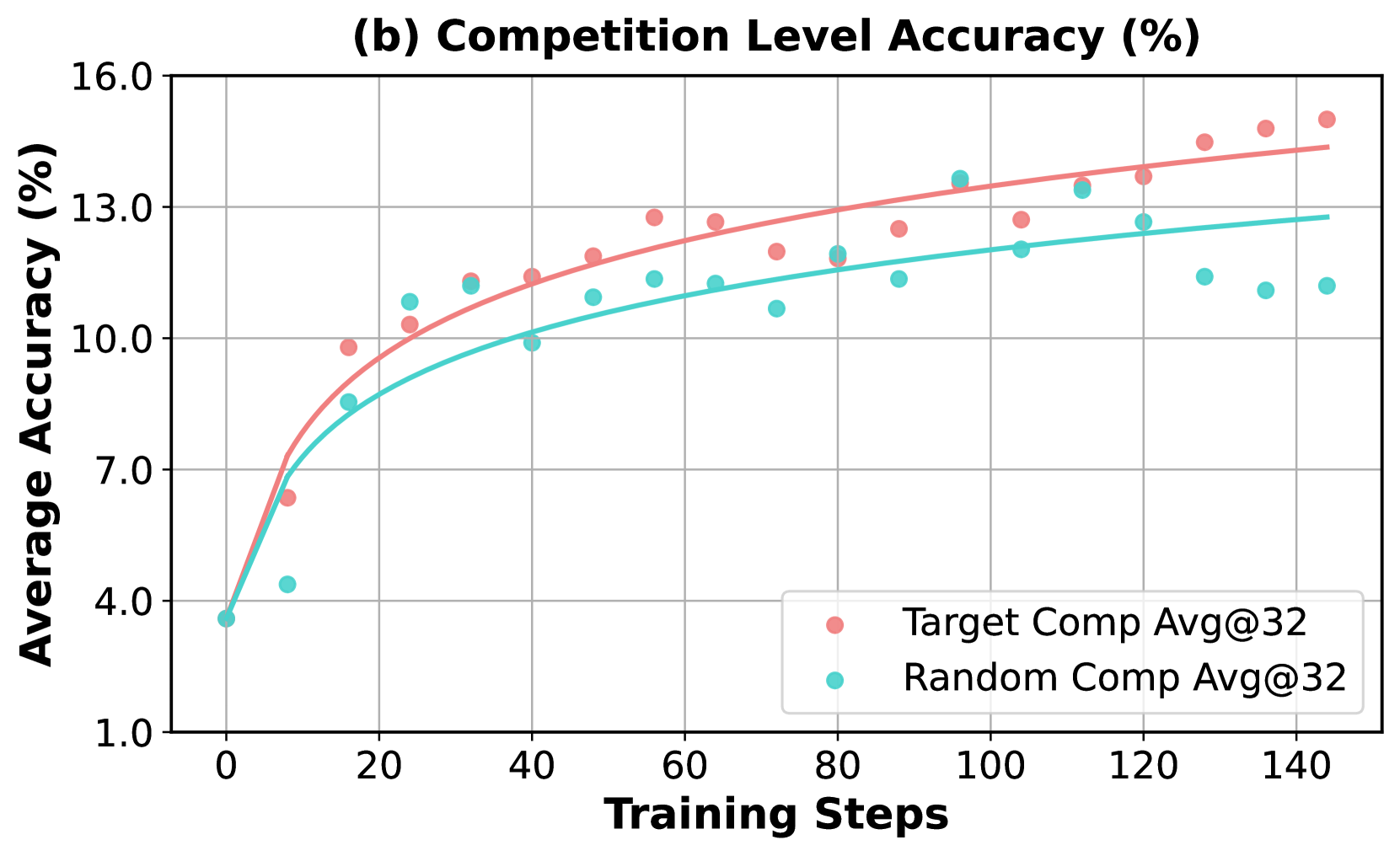

## Line Chart: Competition Level Accuracy (%)

### Overview

The chart plots average accuracy against training steps for two series: "Target Comp Avg@32" (red) and "Random Comp Avg@32" (teal). Both start at about 3.8% and trend upward overall, and "Target Comp" stays above "Random Comp" at every listed step after 0.

### Components/Axes

- **X-axis**: Training Steps (0 to 140, increments of 20)

- **Y-axis**: Average Accuracy (%) (1.0 to 16.0, increments of 2.0)

- **Legend**:

  - Red: Target Comp Avg@32

  - Teal: Random Comp Avg@32

- **Data Points**:

  - Red dots (Target Comp) and teal dots (Random Comp) plotted along the curve

  - Smooth lines connect the dots for each series

### Detailed Analysis

1. **Target Comp Avg@32 (Red)**:

   - Starts at ~3.8% accuracy at 0 steps.

   - Rises sharply to ~10% by 20 steps.

   - Gradually increases to ~15% at 140 steps.

   - Key data points:

     - 40 steps: ~11%

     - 80 steps: ~12.5%

     - 120 steps: ~14%

2. **Random Comp Avg@32 (Teal)**:

   - Starts at ~3.8% accuracy at 0 steps.

   - Slower growth, reaching ~12% at 140 steps.

   - Key data points:

     - 40 steps: ~10%

     - 80 steps: ~11.5%

     - 120 steps: ~12.5%
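The "sharp" versus "gradual" phases described above can be checked with a small calculation. The sketch below is only illustrative: the values are the approximate readings listed in this section (not exact measurements), and the names `target`, `random_comp`, and `slopes` are hypothetical.

```python
# Approximate values read off the chart (assumed, not exact measurements).
target = {0: 3.8, 20: 10.0, 40: 11.0, 80: 12.5, 120: 14.0, 140: 15.0}
random_comp = {0: 3.8, 40: 10.0, 80: 11.5, 120: 12.5, 140: 12.0}


def slopes(series):
    """Average change in accuracy (percentage points per step)
    between consecutive listed readings."""
    steps = sorted(series)
    return {
        (a, b): round((series[b] - series[a]) / (b - a), 4)
        for a, b in zip(steps, steps[1:])
    }


target_slopes = slopes(target)
random_slopes = slopes(random_comp)

for name, result in (("Target", target_slopes), ("Random", random_slopes)):
    for (a, b), value in result.items():
        print(f"{name} {a:>3}-{b:<3}: {value:+.3f} points/step")
```

Under these readings, Target Comp's rate falls from roughly 0.31 points per step over the first 20 steps to about 0.04 to 0.05 afterwards, while Random Comp's rate turns slightly negative between 120 and 140 steps.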

### Key Observations

- **Performance Gap**: Target Comp exceeds Random Comp by roughly 1 to 3 percentage points at the listed steps beyond 20 (about 1 point at 40 and 80 steps, 1.5 at 120, and 3 at 140).

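- **Saturation**: Growth slows for both series after about 100 steps. Random Comp levels off near 12% to 12.5%, while Target Comp is still rising slowly (~14% at 120 steps, ~15% at 140 steps).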

- **Initial Growth**: Target Comp gains ~6 percentage points in the first 20 steps, while Random Comp takes 40 steps to gain the same ~6 points.
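The size of the gap can be worked out directly from the approximate values listed under Detailed Analysis. This is a minimal sketch under that assumption: the numbers are visual readings, only steps listed for both series are compared, and the variable names are hypothetical.

```python
# Approximate chart readings at the steps listed for both series (assumed values).
target = {0: 3.8, 40: 11.0, 80: 12.5, 120: 14.0, 140: 15.0}
random_comp = {0: 3.8, 40: 10.0, 80: 11.5, 120: 12.5, 140: 12.0}

# Gap in percentage points (Target minus Random) at each shared step.
gaps = {
    step: round(target[step] - random_comp[step], 2)
    for step in sorted(target)
}

for step, gap in gaps.items():
    print(f"step {step:>3}: gap = {gap:.1f} percentage points")
```

With these readings the gap is 0 at step 0, about 1 point at 40 and 80 steps, 1.5 points at 120 steps, and 3 points at 140 steps.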

### Interpretation

The readings show "Target Comp Avg@32" reaching higher accuracy than "Random Comp Avg@32" as training progresses, with the widest gap at 140 steps (~15% versus ~12%). Target Comp’s steeper initial rise (about 6 percentage points in 20 steps, versus 40 steps for Random Comp) suggests it improves faster early in training. After about 100 steps Random Comp levels off near 12%, while Target Comp keeps improving at a much slower rate than at the start, which is consistent with diminishing returns. These trends are relevant when comparing the two settings, but the chart does not report computational cost or explain why the series differ, so it cannot by itself support claims about efficiency.