## Line Chart: Performance Comparison of SymDQN(AF) vs. Baseline Over Training Epochs

### Overview

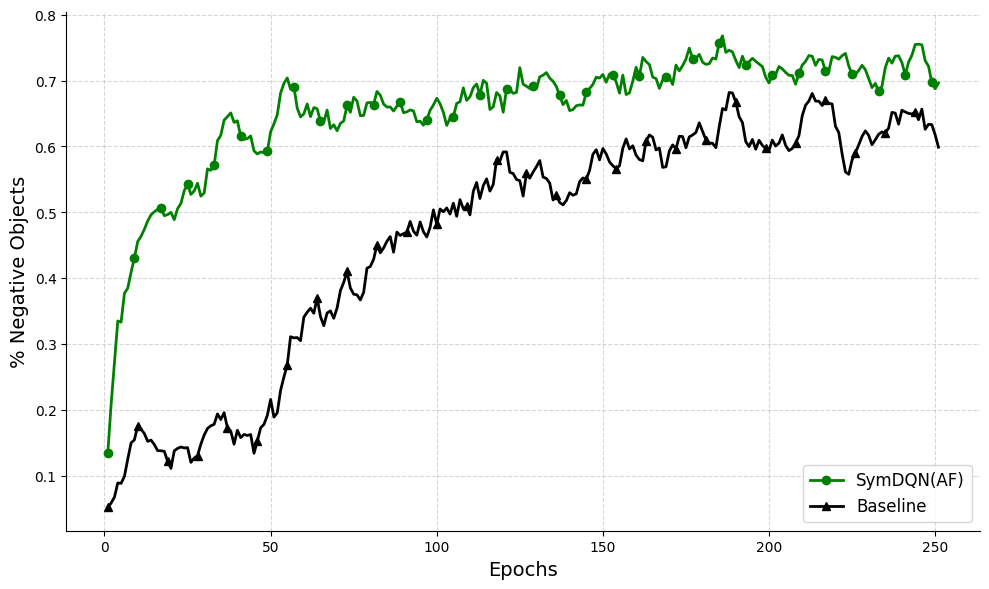

The image is a line chart comparing two models, "SymDQN(AF)" and "Baseline," over 250 training epochs. The metric is labeled "% Negative Objects," although the y-axis values (0.1 to 0.8) are fractions rather than percentages; a higher value appears to indicate better performance. SymDQN(AF) stays above the Baseline throughout training, with the widest gap in the early epochs.

### Components/Axes

* **X-Axis (Horizontal):**

    * **Label:** "Epochs"

    * **Scale:** Linear scale from 0 to 250, with major tick marks at intervals of 50 (0, 50, 100, 150, 200, 250).

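* **Y-Axis (Vertical):**

    * **Label:** "% Negative Objects"

    * **Scale:** Linear scale with major tick marks at intervals of 0.1, labeled from 0.1 to 0.8 (0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8). The Baseline's starting value (~0.05) falls below the lowest labeled tick, so the plotted range appears to extend slightly below 0.1.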

* **Legend:**

    * **Position:** Bottom-right corner of the chart area.

    * **Entry 1:** "SymDQN(AF)" - Represented by a solid green line with circular markers (●).

    * **Entry 2:** "Baseline" - Represented by a solid black line with triangular markers (▲).

* **Grid:** A light gray, dashed grid is present in the background, aligned with the major tick marks on both axes.

### Detailed Analysis

**1. SymDQN(AF) (Green Line with Circles):**

* **Trend:** The line shows a very rapid initial increase, followed by a sustained high level with moderate fluctuations.

* **Data Points (Approximate):**

    * Epoch 0: ~0.14

    * Epoch 50: ~0.65

    * Epoch 100: ~0.68

    * Epoch 150: ~0.72

    * Epoch 200: ~0.71

    * Epoch 250: ~0.70

* **Visual Trend:** The line ascends steeply from epoch 0 to approximately epoch 25, reaching ~0.5. It continues a strong upward trend until around epoch 50. From epoch 50 to 250, the line plateaus in the 0.65-0.75 range, exhibiting noticeable volatility but no significant long-term upward or downward drift.

**2. Baseline (Black Line with Triangles):**

* **Trend:** The line rises more gradually than SymDQN(AF): slowly at first, faster through mid-training, then leveling off with oscillations and a dip at the end.

* **Data Points (Approximate):**

    * Epoch 0: ~0.05

    * Epoch 50: ~0.20

    * Epoch 100: ~0.50

    * Epoch 150: ~0.60

    * Epoch 200: ~0.65

    * Epoch 250: ~0.60

* **Visual Trend:** The line starts very low and climbs slowly until around epoch 50. The rate of increase accelerates between epochs 50 and 125. From epoch 125 onward, the line continues to trend upward but with significant oscillations, peaking near 0.68 around epoch 190 before dipping again.

### Key Observations

1. **Performance Gap:** The SymDQN(AF) model outperforms the Baseline model at every sampled epoch. Based on the approximate readings, the gap is small at epoch 0 (~0.09), widest around epoch 50 (~0.45), and narrows to roughly 0.06-0.12 from epoch 150 onward, but never closes.

2. **Learning Speed:** SymDQN(AF) learns much faster early on, reaching roughly 0.5 by about epoch 20-25, a level the Baseline model does not reach until approximately epoch 95-100.

3. **Volatility:** Both models show performance fluctuations (noise) after the initial learning phase, which is typical in reinforcement learning training curves. The fluctuations for SymDQN(AF) appear slightly more pronounced in absolute terms during its plateau phase.

4. **Convergence:** Both curves are still oscillating at epoch 250, so neither model has clearly converged. SymDQN(AF) has nonetheless held near the 0.7 mark since roughly epoch 50, which suggests a performance plateau.
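The gap and learning-speed observations can be checked against the approximate readings listed under Detailed Analysis. The following is a minimal Python sketch: the variable and function names are illustrative, and the values are visual estimates from the chart, not logged training results.

```python
# Approximate values read off the chart (visual estimates, not logged data).
EPOCHS = [0, 50, 100, 150, 200, 250]
SYMDQN_AF = [0.14, 0.65, 0.68, 0.72, 0.71, 0.70]
BASELINE = [0.05, 0.20, 0.50, 0.60, 0.65, 0.60]


def gaps(a, b):
    """Return the element-wise difference a - b, rounded to two decimals."""
    return [round(x - y, 2) for x, y in zip(a, b)]


def first_epoch_at_or_above(values, threshold):
    """Return the first sampled epoch whose value reaches the threshold, or None."""
    for epoch, value in zip(EPOCHS, values):
        if value >= threshold:
            return epoch
    return None


gap_by_epoch = dict(zip(EPOCHS, gaps(SYMDQN_AF, BASELINE)))
widest_epoch = max(gap_by_epoch, key=gap_by_epoch.get)

for epoch, gap in gap_by_epoch.items():
    print(f"epoch {epoch:>3}: gap = {gap:.2f}")
print("widest gap at sampled epoch", widest_epoch)
```

With these readings the gap peaks at epoch 50, at about 0.45. Because the readings are spaced 50 epochs apart, `first_epoch_at_or_above` only resolves a threshold crossing to the nearest sampled epoch, so it cannot confirm the finer visual estimates of when each curve first reaches 0.5.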

### Interpretation

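The data suggests that the SymDQN(AF) algorithm is more sample-efficient (assuming each epoch uses a comparable amount of experience for both models) and achieves a higher final performance level than the Baseline algorithm for this specific task of maximizing "% Negative Objects." The early-training advantage is large; the late-training advantage is smaller, at roughly 0.06-0.12 from epoch 150 onward.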

* **Faster Policy Improvement:** The steep initial slope of the SymDQN(AF) curve indicates it extracts more useful learning signal from early experiences, leading to a rapid improvement in its policy.

* **Higher Asymptotic Performance:** The sustained plateau at a higher value (~0.7 vs. ~0.6-0.65) suggests SymDQN(AF) is capable of learning a better policy in the long run.

* **Stability Trade-off:** While SymDQN(AF) learns faster and better, the persistent fluctuations in both curves indicate that the learning process for both models remains somewhat unstable even after hundreds of epochs. This could be due to the inherent stochasticity of the environment, the exploration strategy, or the optimization dynamics.

* **Practical Implication:** If training time or computational resources are limited, SymDQN(AF) is the clearly superior choice, as it reaches a usable performance level much sooner. Within the 250 epochs shown, it also settles at a better performance level; the chart cannot show whether the Baseline would catch up with longer training.
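The late-training comparison (~0.7 vs. ~0.6-0.65) can be summarized from the approximate readings at epochs 150, 200, and 250. This is a rough sketch only: the choice of epoch 150 as the start of the "late" window is an assumption made here for illustration, and the values are visual estimates from the chart.

```python
from statistics import mean

# Approximate chart readings at epochs 150, 200, 250 (visual estimates only).
# Treating epoch 150 onward as "late training" is an assumption of this sketch.
LATE_READINGS = {
    "SymDQN(AF)": [0.72, 0.71, 0.70],
    "Baseline": [0.60, 0.65, 0.60],
}


def summarize(values):
    """Return (mean, max - min) of the readings, each rounded to three decimals."""
    return round(mean(values), 3), round(max(values) - min(values), 3)


for name, values in LATE_READINGS.items():
    avg, spread = summarize(values)
    print(f"{name}: mean = {avg:.3f}, range = {spread:.3f}")
```

With these three readings per model, the means come out near 0.71 for SymDQN(AF) and 0.62 for the Baseline. Three coarse points per curve are too few to quantify volatility, so the range values here say little about the oscillations visible in the full curves.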