## Diagram: Hierarchical Classification of LLM Benchmarks

### Overview

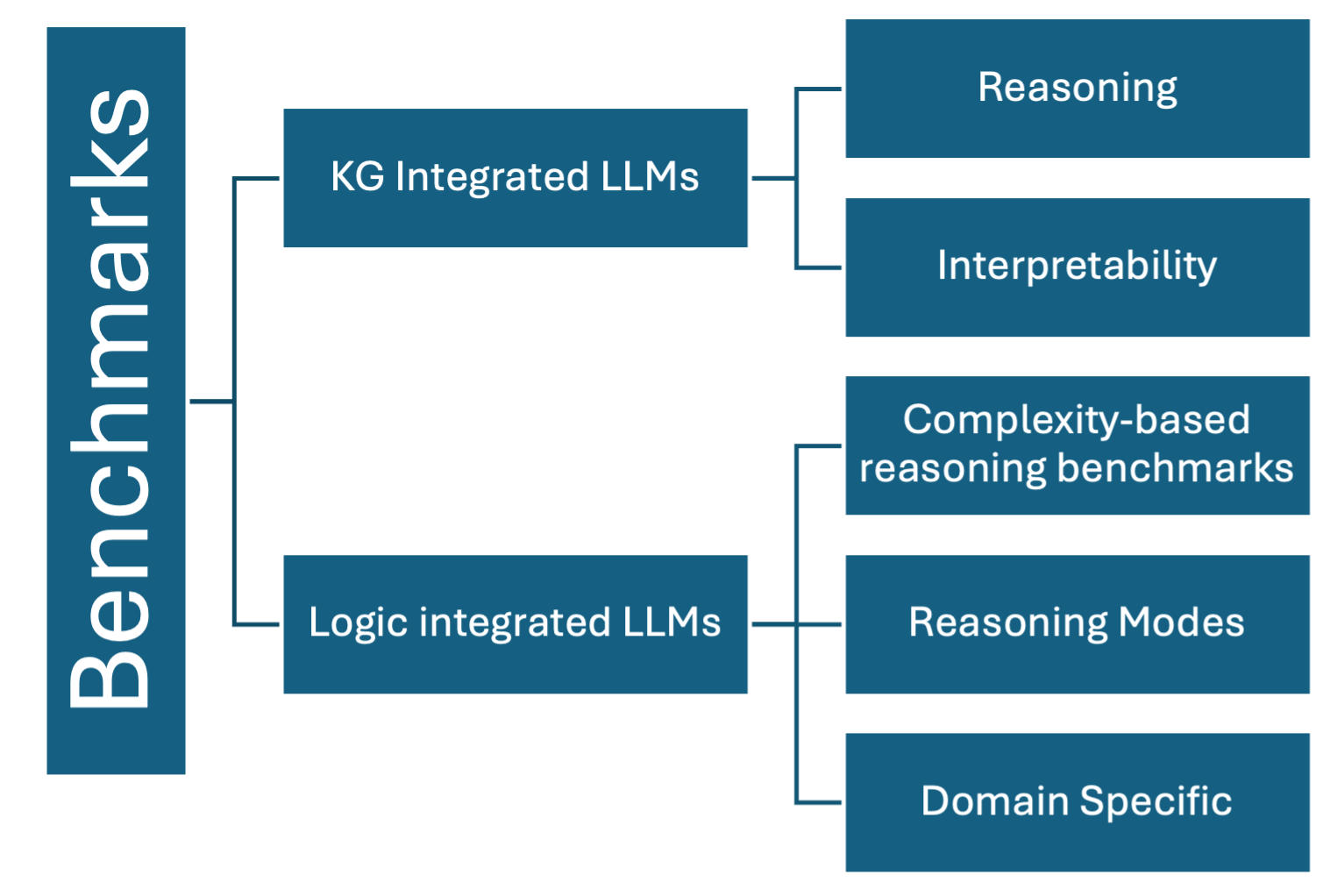

The image shows a hierarchical tree diagram (organizational chart) that categorizes benchmarks for Large Language Models (LLMs). A single root category on the left branches into two primary categories, each of which subdivides into specific evaluation areas on the right. All boxes are teal with white text, connected by dark blue lines on a light gray background.

### Components/Axes

* **Root Node (Leftmost):** A vertical box labeled **"Benchmarks"**. This is the overarching category.

* **Primary Branches (Middle Column):** Two boxes connected directly to the root node.
    * Top box: **"KG Integrated LLMs"** (Knowledge Graph Integrated LLMs).
    * Bottom box: **"Logic integrated LLMs"**.

* **Secondary Branches (Right Column):** Five boxes connected to the primary branches.
    * Connected to "KG Integrated LLMs":
        * **"Reasoning"** (top-right)
        * **"Interpretability"** (below Reasoning)
    * Connected to "Logic integrated LLMs":
        * **"Complexity-based reasoning benchmarks"** (top of this sub-group)
        * **"Reasoning Modes"** (middle)
        * **"Domain Specific"** (bottom-right)

### Detailed Analysis

The diagram establishes a clear two-level taxonomy under the main theme of "Benchmarks."

1. **First-Level Split:** Benchmarks are divided by the kind of knowledge or reasoning framework integrated into the LLM:
    * **KG Integrated LLMs:** Benchmarks for models that incorporate structured knowledge from Knowledge Graphs.
    * **Logic integrated LLMs:** Benchmarks for models that incorporate formal logic or reasoning frameworks.

2. **Second-Level Breakdown:**
    * For **KG Integrated LLMs**, the evaluation focuses on two core capabilities:
        * **Reasoning:** The model's ability to infer new facts or relationships from the integrated knowledge.
        * **Interpretability:** The transparency and explainability of the model's outputs, likely leveraging the structured nature of the KG.
    * For **Logic integrated LLMs**, the evaluation is more granular, split into three benchmark types:
        * **Complexity-based reasoning benchmarks:** Tests that scale in logical difficulty or in the number of reasoning steps.
        * **Reasoning Modes:** Benchmarks assessing different types of logical inference (e.g., deductive, inductive, abductive).
        * **Domain Specific:** Benchmarks tailored to evaluate logical reasoning within specific fields (e.g., law, mathematics, science).

### Key Observations

* The diagram is purely categorical and contains no numerical data, performance metrics, or specific benchmark names (e.g., MMLU, HellaSwag).

* The visual hierarchy is clear: "Benchmarks" is the parent, the two integration types are children, and the five rightmost boxes are grandchildren.

* The "Logic integrated LLMs" branch has a more detailed breakdown (3 sub-categories) compared to the "KG Integrated LLMs" branch (2 sub-categories).

* The text is exclusively in English.
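The parent/child/grandchild structure and the 2-versus-3 branching noted above can be checked mechanically by encoding the diagram as a tree. The following is a minimal sketch, assuming a nested-dictionary encoding in which leaf categories map to empty dictionaries; the names `TAXONOMY`, `nodes_at_depth`, and `print_tree` are hypothetical and do not come from the source figure.

```python
# Hypothetical encoding of the diagram's taxonomy as a nested dict.
# Each key is a box label copied from the figure; leaves map to {}.
TAXONOMY = {
    "Benchmarks": {
        "KG Integrated LLMs": {
            "Reasoning": {},
            "Interpretability": {},
        },
        "Logic integrated LLMs": {
            "Complexity-based reasoning benchmarks": {},
            "Reasoning Modes": {},
            "Domain Specific": {},
        },
    }
}


def nodes_at_depth(tree, depth):
    """Return the labels of all nodes at the given depth (root = 0)."""
    if depth == 0:
        return list(tree.keys())
    labels = []
    for subtree in tree.values():
        labels.extend(nodes_at_depth(subtree, depth - 1))
    return labels


def print_tree(tree, indent=0):
    """Print the tree with two spaces of indentation per level."""
    for label, subtree in tree.items():
        print("  " * indent + label)
        print_tree(subtree, indent + 1)


if __name__ == "__main__":
    print_tree(TAXONOMY)
    # Report how many sub-categories each primary branch has.
    for branch, leaves in TAXONOMY["Benchmarks"].items():
        print(f"{branch}: {len(leaves)} sub-categories")
```

A dictionary is used because Python preserves insertion order, so the top-to-bottom order of boxes in the figure is kept when the tree is traversed.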

### Interpretation

This diagram provides a conceptual framework for understanding the landscape of LLM evaluation, specifically for models that go beyond base capabilities by integrating external structured knowledge (KGs) or formal reasoning systems (Logic).

* **What it demonstrates:** It implies that evaluating such advanced LLMs requires specialized benchmarks. The taxonomy suggests that the evaluation focus differs fundamentally by integration type. KG integration is evaluated on the *outcomes* of its knowledge use (reasoning and interpretability), while logic integration is evaluated on the *process and scope* of its reasoning (complexity, modes, and domain applicability).

* **Relationships:** The structure suggests that reasoning is a shared goal of both KG-integrated and logic-integrated models, but that it is benchmarked differently in each branch. For KG models, reasoning is a direct output to be measured; for logic models, reasoning is the core process, dissected by complexity, mode, and domain.

* **Notable Implication:** The absence of a "Performance" or "Accuracy" category at this level suggests this is a high-level taxonomy of *what* to test, not *how well* the models perform. It serves as a map for researchers or practitioners to select appropriate benchmark suites based on the architectural integration of the LLM they are evaluating.