\n

## Diagram: LLM Benchmarks and Capabilities

### Overview

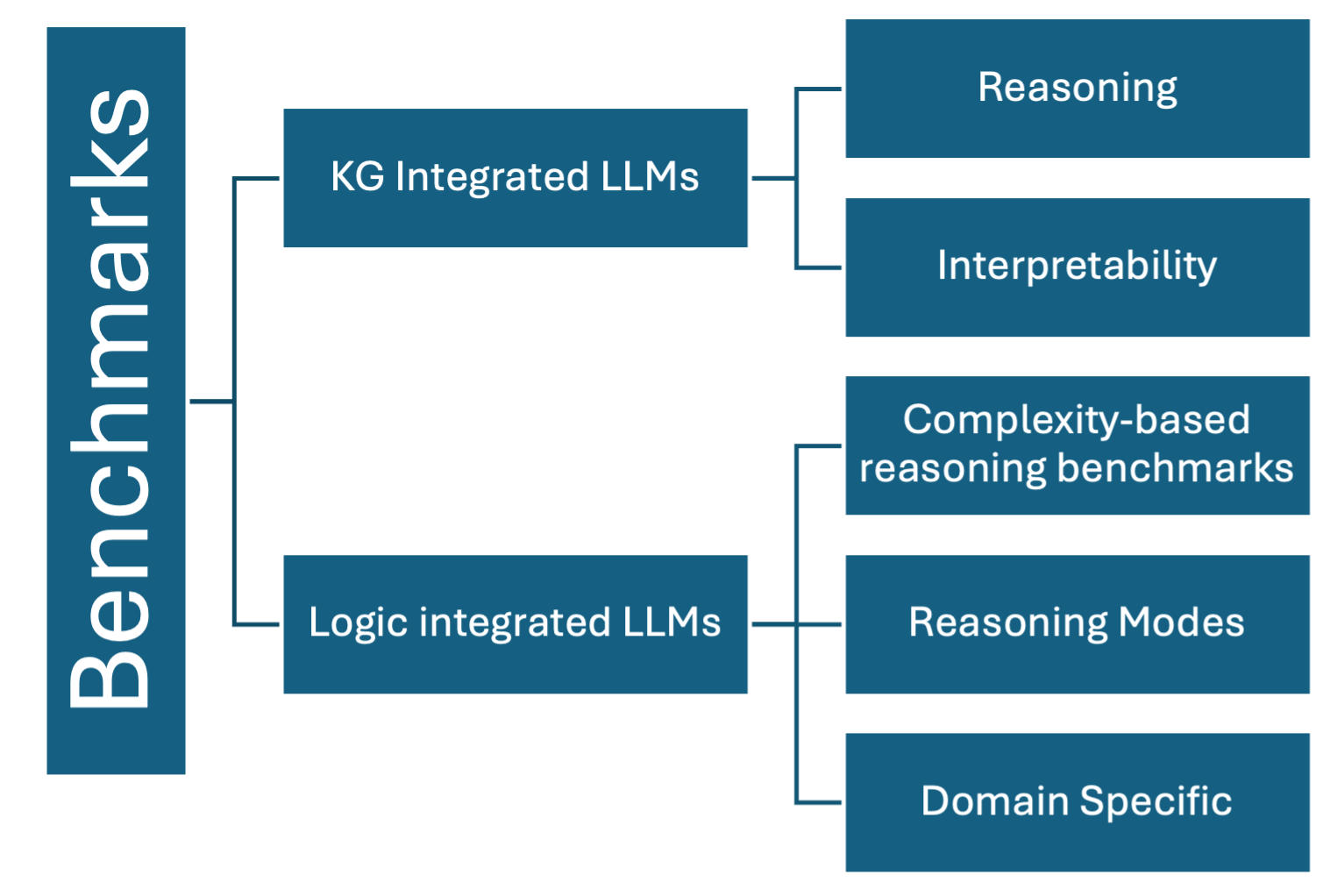

The image is a diagram illustrating the relationship between different types of Large Language Models (LLMs) and the benchmarks used to evaluate them. It depicts two main categories of LLMs – KG Integrated LLMs and Logic Integrated LLMs – and how they relate to various benchmark areas. The diagram uses rectangular blocks connected by lines to show these relationships.

### Components/Axes

The diagram consists of the following components:

* **Left Column:** "Benchmarks" – vertically oriented label on the left side of the diagram.

* **Central Blocks:** Two main blocks representing LLM types:

* "KG Integrated LLMs"

* "Logic integrated LLMs"

* **Right Column:** Blocks representing benchmark areas:

* "Reasoning"

* "Interpretability"

* "Complexity-based reasoning benchmarks"

* "Reasoning Modes"

* "Domain Specific"

* **Connecting Lines:** Arrows indicating the relationship between LLM types and benchmark areas.

### Detailed Analysis or Content Details

The diagram shows the following relationships:

* **KG Integrated LLMs** are connected to:

* "Reasoning"

* "Interpretability"

* **Logic integrated LLMs** are connected to:

* "Complexity-based reasoning benchmarks"

* "Reasoning Modes"

* "Domain Specific"

The blocks are arranged vertically, with "Benchmarks" on the far left, the LLM types in the center, and the benchmark areas on the right. The connections are made with straight lines, indicating a direct relationship.

### Key Observations

The diagram suggests that KG Integrated LLMs are primarily evaluated based on their reasoning and interpretability capabilities, while Logic Integrated LLMs are assessed using benchmarks focused on complexity, reasoning modes, and domain-specific knowledge. There is no overlap in the benchmarks used for the two LLM types.

### Interpretation

This diagram illustrates a categorization of LLMs based on their underlying architecture (Knowledge Graph integrated vs. Logic integrated) and the corresponding benchmarks used to assess their performance. It suggests a deliberate focus on different aspects of LLM capabilities depending on the model type. KG Integrated LLMs, leveraging knowledge graphs, are likely evaluated on their ability to reason with and explain knowledge, while Logic Integrated LLMs, built on logical reasoning principles, are assessed on their ability to handle complex reasoning tasks, different reasoning approaches, and specialized domain knowledge. The lack of overlap in benchmarks implies that these two types of LLMs are designed for different applications or have different strengths. The diagram doesn't provide quantitative data, but rather a conceptual framework for understanding the evaluation landscape of LLMs.