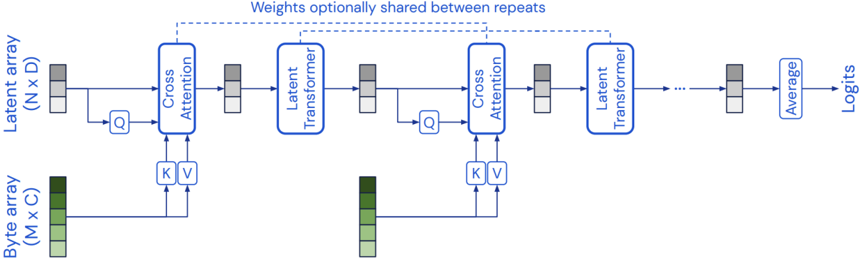

## Diagram: Model Architecture - Repeated Cross Attention and Transformer Blocks

### Overview

The image depicts a diagram of a model architecture consisting of repeated blocks of Cross Attention and Latent Transformer layers. The model takes a Byte array as input and processes it to produce Logits as output. The diagram illustrates the flow of data through these blocks, with optional weight sharing between repetitions.

### Components/Axes

The diagram consists of the following components:

* **Byte array (M x C):** Input data represented as a byte array with dimensions M x C.

* **Latent array (N x D):** An intermediate representation with dimensions N x D.

* **Cross Attention:** A block performing cross-attention between the Latent array and the Byte array.

* **Latent Transformer:** A block performing transformation on the Latent array.

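* **Q, K, V:** The Query, Key, and Value inputs of the Cross Attention mechanism. Q is derived from the Latent array; K and V are derived from the Byte array.

* **Average:** A block that averages the final Latent array (presumably over its N positions) before the output is produced.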

* **Logits:** The final output of the model.

* **Weights optionally shared between repeats:** A dashed line indicating optional weight sharing between repeated blocks.

The diagram is structured horizontally, with the input Byte array at the bottom and the output Logits at the right. The Latent array flows horizontally across the top.
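For reference, the Cross Attention component can be written out with its shapes. This is a sketch that assumes standard scaled dot-product attention, which the diagram does not specify. The symbols $Z$ (Latent array), $X$ (Byte array), the projection matrices $W_Q$, $W_K$, $W_V$, and the attention width $d$ are notation introduced here for illustration only.

$$
\begin{aligned}
Q &= Z W_Q \in \mathbb{R}^{N \times d}, \qquad K = X W_K \in \mathbb{R}^{M \times d}, \qquad V = X W_V \in \mathbb{R}^{M \times d}, \\
\mathrm{CrossAttn}(Z, X) &= \mathrm{softmax}\!\left(\frac{Q K^{\top}}{\sqrt{d}}\right) V \in \mathbb{R}^{N \times d},
\end{aligned}
$$

with $Z \in \mathbb{R}^{N \times D}$ and $X \in \mathbb{R}^{M \times C}$. Under this formulation the score matrix $Q K^{\top}$ has shape $N \times M$, so the cost of the step grows with the product of the two array lengths, and the output keeps the N rows of the Latent array.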

### Detailed Analysis / Content Details

The diagram shows a repeating pattern of blocks. Each repetition consists of:

1. **Cross Attention:** The Latent array (N x D) and the Byte array (M x C) are both fed into a Cross Attention block. The Latent array supplies the Query (Q), while the Byte array supplies the Key (K) and Value (V), so the block outputs an updated Latent array.

2. **Latent Transformer:** The output of the Cross Attention block is then fed into a Latent Transformer block.

3. **Repetition:** This Cross Attention and Latent Transformer block is repeated multiple times, as indicated by the ellipsis ("...").

4. **Averaging and Output:** After the repetitions, the output is passed through an Average block, and finally produces Logits.

The Byte array (M x C) is shown as a series of green blocks. The Latent array (N x D) is shown as a series of gray blocks. The Q, K, and V vectors are represented by small boxes within the Cross Attention blocks.
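The data flow described above can be sketched in plain Python. This is a minimal illustration under several assumptions, not the diagram's actual implementation: attention is single-head scaled dot-product with a residual connection, the Latent Transformer is reduced to one self-attention layer, and the Logits come from a linear projection of the averaged Latent array. All function names, sizes, and the random stand-in weights are hypothetical.

```python
import math
import random

def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def softmax(row):
    """Numerically stable softmax over one list of scores."""
    peak = max(row)
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def rand_matrix(rows, cols, rng):
    """Hypothetical stand-in for learned weights: small random values."""
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]

def attend(query_src, kv_src, w_q, w_k, w_v):
    """Single-head attention; the result is added back to query_src (residual)."""
    q = matmul(query_src, w_q)               # rows of query_src x D
    k = matmul(kv_src, w_k)                  # rows of kv_src x D
    v = matmul(kv_src, w_v)                  # rows of kv_src x D
    scale = math.sqrt(len(q[0]))
    k_t = [list(col) for col in zip(*k)]     # transpose of K
    weights = [softmax([s / scale for s in row]) for row in matmul(q, k_t)]
    update = matmul(weights, v)
    return [[a + b for a, b in zip(r1, r2)] for r1, r2 in zip(query_src, update)]

def forward(byte_array, latent, blocks, w_out):
    """Repeat (Cross Attention, Latent Transformer), then Average and project."""
    for cross, latent_tf in blocks:
        latent = attend(latent, byte_array, *cross)   # Q from latent, K/V from bytes
        latent = attend(latent, latent, *latent_tf)   # simplified Latent Transformer
    pooled = [sum(col) / len(latent) for col in zip(*latent)]  # Average over N
    return matmul([pooled], w_out)[0]                 # assumed linear head -> Logits

# Illustrative sizes only; the diagram gives no numeric values.
M, C, N, D, NUM_CLASSES, REPEATS = 12, 3, 4, 8, 5, 3
rng = random.Random(0)

def make_block(rng):
    cross = (rand_matrix(D, D, rng), rand_matrix(C, D, rng), rand_matrix(C, D, rng))
    latent_tf = tuple(rand_matrix(D, D, rng) for _ in range(3))
    return cross, latent_tf

byte_array = rand_matrix(M, C, rng)
latent = rand_matrix(N, D, rng)
blocks = [make_block(rng)] * REPEATS   # one block reused: weights shared between repeats
w_out = rand_matrix(D, NUM_CLASSES, rng)

logits = forward(byte_array, latent, blocks, w_out)
print(len(logits))  # 5, one value per hypothetical class
```

Replacing `[make_block(rng)] * REPEATS` with `[make_block(rng) for _ in range(REPEATS)]` would give each repeat its own weights, which corresponds to the unshared variant of the diagram.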

### Key Observations

* The same Byte array feeds every Cross Attention block, so the model revisits the full input at each repeat rather than consuming it once.

* The Cross Attention mechanism allows the model to attend to relevant parts of the Byte array when processing the Latent array.

* The Latent Transformer block likely performs further processing and refinement of the Latent array.

* The optional weight sharing suggests a potential for parameter efficiency.

* The diagram does not provide specific numerical values or dimensions beyond the array sizes (M x C, N x D).
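To make the parameter-efficiency observation concrete, the following sketch counts only the Q, K, and V projection weights of one repeat and compares shared and unshared stacks. The layer layout (one cross-attention and one self-attention layer per repeat, no biases) and the sizes are assumptions chosen for illustration; the diagram does not specify them.

```python
def attention_params(query_dim, kv_dim, width):
    """Weights in the Q, K, V projections of one attention layer (no biases)."""
    return query_dim * width + 2 * kv_dim * width

def stack_params(c, d, repeats, shared):
    """Projection weights across all repeats of (Cross Attention, Latent Transformer)."""
    per_repeat = attention_params(d, c, d) + attention_params(d, d, d)
    return per_repeat if shared else per_repeat * repeats

# Hypothetical sizes: C input channels, D latent channels, 4 repeats.
C, D, REPEATS = 3, 8, 4
unshared = stack_params(C, D, REPEATS, shared=False)
tied = stack_params(C, D, REPEATS, shared=True)
print(unshared, tied)  # 1216 304
```

Under these assumptions, sharing keeps the parameter count constant as the number of repeats grows, while the unshared count grows linearly with it.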

### Interpretation

This diagram illustrates a model architecture that relates an input Byte array to an internal Latent representation through Cross Attention. The repeated Cross Attention and Latent Transformer blocks let the model iteratively refine the Latent array while consulting the input at each repeat. The optional weight sharing is a design choice that reduces the number of parameters; whether it also improves training efficiency or generalization cannot be determined from the diagram. The final Logits output suggests that the model is used for a classification or prediction task.

The architecture is reminiscent of Transformer-based models, but adapted to operate directly on byte arrays rather than token embeddings. The diagram is a high-level overview and does not provide details about the specific implementation of the Cross Attention or Latent Transformer blocks.