# Technical Diagram Analysis: LLM Quantization and Runtime Workflow

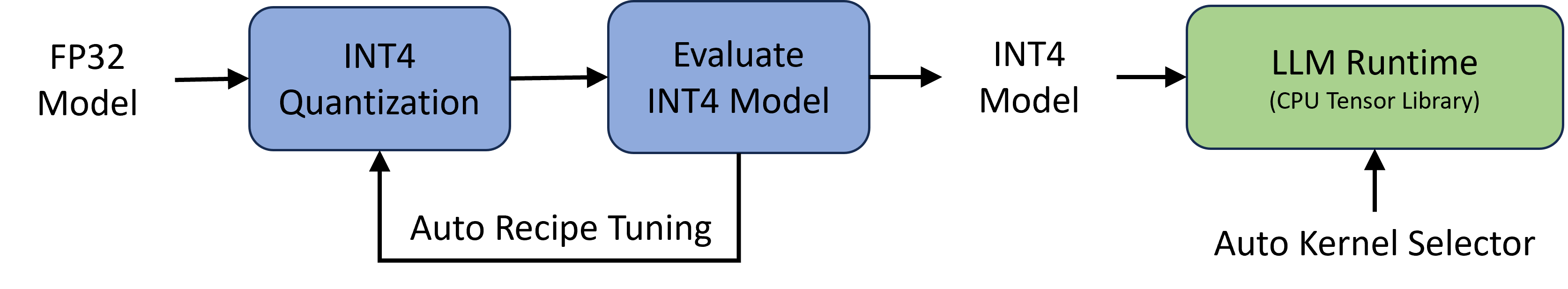

This image illustrates a technical pipeline for converting a high-precision Large Language Model (LLM) into a quantized format and deploying it to a specific runtime environment.

## 1. Diagram Components and Flow

The workflow runs left to right as a linear pipeline, with a single feedback loop inside the quantization stage.

### Phase 1: Model Preparation and Optimization

* **Input:** **FP32 Model** (Floating Point 32-bit). This is the starting point of the pipeline.

* **Process Block 1 (Blue):** **INT4 Quantization**. An arrow points from the FP32 Model into this block, indicating the initial conversion process.

* **Process Block 2 (Blue):** **Evaluate INT4 Model**. An arrow points from the Quantization block to this evaluation block.

* **Feedback Loop:** A return path labeled **Auto Recipe Tuning** connects the bottom of the "Evaluate INT4 Model" block back to the "INT4 Quantization" block. This indicates an iterative optimization process to refine the quantization parameters based on evaluation results.
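The quantize, evaluate, and tune cycle in Phase 1 can be illustrated with a minimal sketch. The diagram does not say which quantization scheme, evaluation metric, or tunable parameters are used, so everything here is an assumption: symmetric group-wise quantization, mean squared error as a stand-in for a real accuracy metric, and group size as the only "recipe" parameter. All function names are hypothetical.

```python
from typing import List, Optional, Tuple

INT4_MIN, INT4_MAX = -8, 7  # range of a signed 4-bit integer


def quantize_group(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric quantization of one group: one float scale plus INT4 codes."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / INT4_MAX if max_abs > 0 else 1.0
    codes = [max(INT4_MIN, min(INT4_MAX, round(w / scale))) for w in weights]
    return codes, scale


def quantize(weights: List[float], group_size: int) -> List[float]:
    """Quantize group by group, then dequantize so the error can be measured."""
    restored: List[float] = []
    for start in range(0, len(weights), group_size):
        codes, scale = quantize_group(weights[start:start + group_size])
        restored.extend(code * scale for code in codes)
    return restored


def evaluate(original: List[float], restored: List[float]) -> float:
    """Mean squared error (assumed metric; a real pipeline would score the model)."""
    return sum((a - b) ** 2 for a, b in zip(original, restored)) / len(original)


def tune_recipe(
    weights: List[float], group_sizes: List[int], tolerance: float
) -> Optional[Tuple[int, float]]:
    """Try recipes from largest group (fewest scales) to smallest.

    Returns the first (group_size, error) whose error is within tolerance,
    or None if no candidate recipe is good enough.
    """
    for group_size in sorted(group_sizes, reverse=True):
        error = evaluate(weights, quantize(weights, group_size))
        if error <= tolerance:
            return group_size, error
    return None
```

Calling `tune_recipe(weights, [2, 4, 8], tolerance)` mirrors the feedback arrow: each rejected recipe sends the loop back to quantization with a different setting. Smaller groups store more scales but usually track the original weights more closely, which is the trade-off such a loop would explore.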

### Phase 2: Deployment

* **Intermediate Output:** **INT4 Model**. An arrow leads from the evaluation block to this text label, representing the finalized, optimized 4-bit integer model.

* **Process Block 3 (Green):** **LLM Runtime (CPU Tensor Library)**. An arrow points from the INT4 Model label into this block, representing the execution environment.

* **Support Component:** **Auto Kernel Selector**. An upward-pointing arrow indicates that this component feeds into or configures the LLM Runtime block.
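The diagram gives no detail on how the Auto Kernel Selector works. One plausible reading is a dispatch step that picks the best kernel the host CPU supports, sketched below. The feature name `simd_wide`, the priority scheme, and both kernels are hypothetical placeholders, not the actual runtime's API.

```python
from typing import AbstractSet, Callable, FrozenSet, List, NamedTuple


class Kernel(NamedTuple):
    name: str
    requires: FrozenSet[str]  # CPU features the kernel needs (hypothetical names)
    priority: int             # higher means expected to be faster
    run: Callable[[List[int], List[int]], int]


def dot_reference(a: List[int], b: List[int]) -> int:
    """Portable fallback: plain element-by-element dot product."""
    return sum(x * y for x, y in zip(a, b))


def dot_unrolled(a: List[int], b: List[int]) -> int:
    """Stand-in for a vectorized kernel: handles four elements per step."""
    total = 0
    body = len(a) - len(a) % 4
    for i in range(0, body, 4):
        total += (a[i] * b[i] + a[i + 1] * b[i + 1]
                  + a[i + 2] * b[i + 2] + a[i + 3] * b[i + 3])
    for i in range(body, len(a)):  # leftover tail elements
        total += a[i] * b[i]
    return total


KERNELS = [
    Kernel("reference", frozenset(), 0, dot_reference),
    Kernel("wide_simd", frozenset({"simd_wide"}), 1, dot_unrolled),
]


def select_kernel(cpu_features: AbstractSet[str],
                  kernels: List[Kernel] = KERNELS) -> Kernel:
    """Return the highest-priority kernel whose requirements the CPU meets."""
    usable = [k for k in kernels if k.requires <= cpu_features]
    return max(usable, key=lambda k: k.priority)
```

Because the reference kernel requires no features, `select_kernel` always has a fallback. A real selector might also benchmark candidates or account for matrix shapes; this sketch only models feature-based dispatch.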

---

## 2. Text Transcription

| Location | Text Content |
| :--- | :--- |
| Far Left (Input) | FP32 Model |
| First Box (Blue) | INT4 Quantization |
| Second Box (Blue) | Evaluate INT4 Model |
| Bottom Loop | Auto Recipe Tuning |
| Center Right | INT4 Model |
| Third Box (Green) | LLM Runtime (CPU Tensor Library) |
| Bottom Right | Auto Kernel Selector |

---

## 3. Technical Summary

The diagram depicts an automated optimization pipeline for LLMs. It starts with a standard **FP32 Model**, which undergoes **INT4 Quantization**. The resulting model is then evaluated (**Evaluate INT4 Model**). The diagram does not state the condition that triggers the loop, but presumably when accuracy or performance falls short of a target, **Auto Recipe Tuning** adjusts the quantization "recipe" and the quantize-and-evaluate step repeats.

Once the **INT4 Model** is finalized, it is passed to the **LLM Runtime**, which the diagram labels as a **CPU Tensor Library**. An **Auto Kernel Selector** feeds into the runtime; it likely chooses the most efficient computational kernels for the specific hardware and model architecture.