# Technical Diagram Analysis

## Component Overview

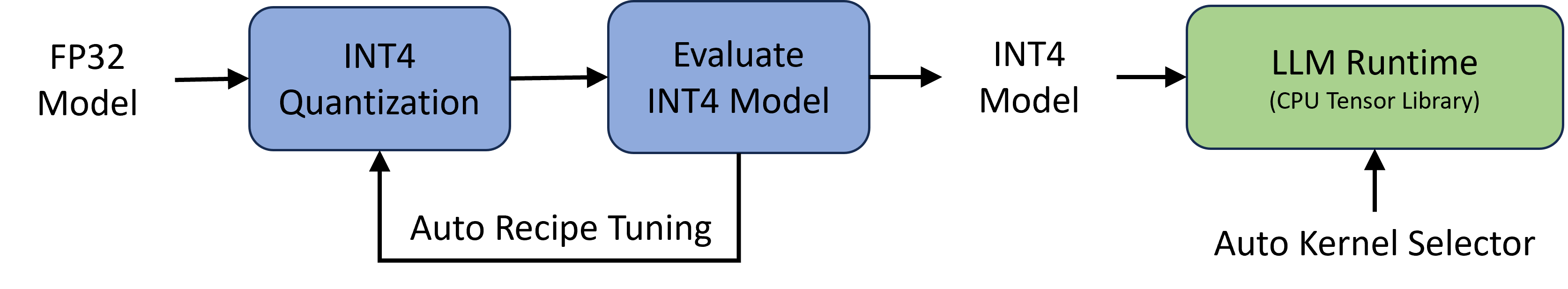

The diagram illustrates a model optimization and deployment pipeline with the following key components:

1. **FP32 Model**

- Starting point of the pipeline

- The full-precision model, with weights stored as 32-bit floating-point values

2. **INT4 Quantization**

- Converts FP32 model to 4-bit integer precision

- Reduces model size and compute cost by storing each weight in 4 bits instead of 32

3. **Evaluate INT4 Model**

- Performance assessment stage

- Validates quantization quality

4. **INT4 Model**

- Optimized quantized model output

- Input to deployment stage

5. **LLM Runtime (CPU Tensor Library)**

- Deployment environment

- Utilizes CPU-based tensor operations

- Contains "Auto Kernel Selector" component

6. **Auto Recipe Tuning**

- Feedback loop from evaluation stage

- Optimizes quantization parameters

7. **Auto Kernel Selector**

- Runtime optimization component

- Selects kernels that match the instruction sets available on the host CPU
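To make the INT4 Quantization component concrete, here is a minimal sketch of symmetric, group-wise quantization to signed 4-bit codes. The diagram does not say which scheme the pipeline uses, so the scheme, the function names `quantize_int4` and `dequantize_int4`, and the `group_size` parameter are all assumptions chosen for illustration.

```python
from typing import List, Tuple

INT4_MIN, INT4_MAX = -8, 7  # range of a signed 4-bit integer


def quantize_int4(weights: List[float], group_size: int = 4) -> Tuple[List[int], List[float]]:
    """Symmetric per-group quantization (illustrative scheme, not the diagram's).

    Returns one 4-bit code per weight and one float scale per group.
    """
    codes: List[int] = []
    scales: List[float] = []
    for start in range(0, len(weights), group_size):
        group = weights[start:start + group_size]
        max_abs = max(abs(w) for w in group)
        # Map the largest magnitude in the group to 7; avoid dividing by zero.
        scale = max_abs / INT4_MAX if max_abs > 0 else 1.0
        scales.append(scale)
        for w in group:
            q = round(w / scale)
            codes.append(max(INT4_MIN, min(INT4_MAX, q)))
    return codes, scales


def dequantize_int4(codes: List[int], scales: List[float], group_size: int = 4) -> List[float]:
    """Reconstruct approximate FP values from codes and per-group scales."""
    return [c * scales[i // group_size] for i, c in enumerate(codes)]
```

Each reconstructed weight differs from the original by at most half a scale step, which is the error the evaluation stage has to judge as acceptable or not.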

## Process Flow

1. **Initial Conversion**

FP32 Model → INT4 Quantization

2. **Validation Loop**

INT4 Quantization → Evaluate INT4 Model

Evaluate INT4 Model → Auto Recipe Tuning → INT4 Quantization (feedback)

3. **Deployment Pipeline**

Evaluate INT4 Model → INT4 Model → LLM Runtime

LLM Runtime → Auto Kernel Selector (a component inside the runtime)
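The validation loop can be sketched as a search over candidate recipes: quantize, evaluate, and accept the first recipe that passes. This is only an illustration under stated assumptions. The diagram does not specify what a recipe contains or how evaluation is done, so here a recipe is just a group size, evaluation is the mean squared error against the FP32 weights, and `fake_quantize`, `mean_squared_error`, and `tune_recipe` are hypothetical names.

```python
from typing import List, Optional, Sequence, Tuple


def fake_quantize(weights: Sequence[float], group_size: int) -> List[float]:
    """Quantize to signed 4-bit codes per group, then dequantize back to floats."""
    out: List[float] = []
    for start in range(0, len(weights), group_size):
        group = weights[start:start + group_size]
        max_abs = max(abs(w) for w in group)
        scale = max_abs / 7 if max_abs > 0 else 1.0
        out.extend(max(-8, min(7, round(w / scale))) * scale for w in group)
    return out


def mean_squared_error(a: Sequence[float], b: Sequence[float]) -> float:
    """Stand-in evaluation metric (assumption: a real pipeline would score the model on a task)."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def tune_recipe(
    weights: Sequence[float],
    group_sizes: Sequence[int],
    tolerance: float,
) -> Optional[Tuple[int, float]]:
    """Try each candidate group size in order.

    Returns (group_size, error) for the first candidate whose error is within
    tolerance, or None if no candidate passes.
    """
    for group_size in group_sizes:
        error = mean_squared_error(weights, fake_quantize(weights, group_size))
        if error <= tolerance:
            return group_size, error
    return None
```

Ordering the candidates from cheapest to most accurate means the loop stops at the least expensive recipe that still passes evaluation, which is one plausible design for such a tuner.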

## Technical Specifications

- **Precision Handling**:

FP32 (32-bit float) → INT4 (4-bit integer)

- **Hardware Target**:

CPU-based execution (CPU Tensor Library)

- **Optimization Features**:

Auto-tuning of quantization recipes

Dynamic kernel selection at runtime
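The storage effect of the FP32 → INT4 conversion is simple arithmetic, sketched below. The 4 bytes per FP32 weight and two 4-bit codes per byte follow from the bit widths. The per-group scale overhead is an assumption: `group_size` and `scale_bytes` are hypothetical parameters, since the diagram does not describe the storage layout.

```python
def fp32_bytes(num_weights: int) -> int:
    """Storage for FP32 weights: 4 bytes each."""
    return num_weights * 4


def int4_bytes(num_weights: int, group_size: int = 128, scale_bytes: int = 2) -> int:
    """Storage for packed INT4 weights plus one scale per group (assumed layout)."""
    packed = (num_weights + 1) // 2            # two 4-bit codes per byte
    num_groups = -(-num_weights // group_size)  # ceiling division
    return packed + num_groups * scale_bytes


def compression_ratio(num_weights: int, group_size: int = 128, scale_bytes: int = 2) -> float:
    """How many times smaller the INT4 representation is than FP32."""
    return fp32_bytes(num_weights) / int4_bytes(num_weights, group_size, scale_bytes)
```

Without any scale overhead the ratio would be exactly 8; storing scales pulls it slightly below that, and smaller groups pull it further.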

## Key Technical Relationships

- Quantization quality carries through to deployment: the INT4 model that passes evaluation is the one the runtime executes

- Auto-tuning loop adjusts quantization parameters based on evaluation results

- Kernel selection adapts to available CPU instruction sets
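Kernel selection by instruction set can be sketched as a lookup over a preference-ordered table. The feature names below follow the flag names Linux reports in `/proc/cpuinfo`. The kernel names, the table, and `select_kernel` are hypothetical and do not describe how the runtime in the diagram actually chooses kernels.

```python
from typing import Iterable, List, Tuple

# Hypothetical kernel table, ordered from most to least specialized.
KERNEL_PREFERENCE: List[Tuple[str, str]] = [
    ("amx_int8", "int4_matmul_amx"),
    ("avx512_vnni", "int4_matmul_avx512_vnni"),
    ("avx2", "int4_matmul_avx2"),
]
FALLBACK_KERNEL = "int4_matmul_scalar"  # used when no listed feature is present


def select_kernel(cpu_features: Iterable[str]) -> str:
    """Return the first kernel in the preference table whose required feature is available."""
    available = set(cpu_features)
    for feature, kernel in KERNEL_PREFERENCE:
        if feature in available:
            return kernel
    return FALLBACK_KERNEL
```

For example, `select_kernel({"avx2", "avx512_vnni"})` picks the AVX-512 VNNI entry because it appears earlier in the table, and an empty feature set falls back to the scalar kernel.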