TECHNICAL ASSET FINGERPRINT

ebbca09e17f52f4574812100


FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Heatmap: Benign vs. Jailbreak Token Embeddings

### Overview

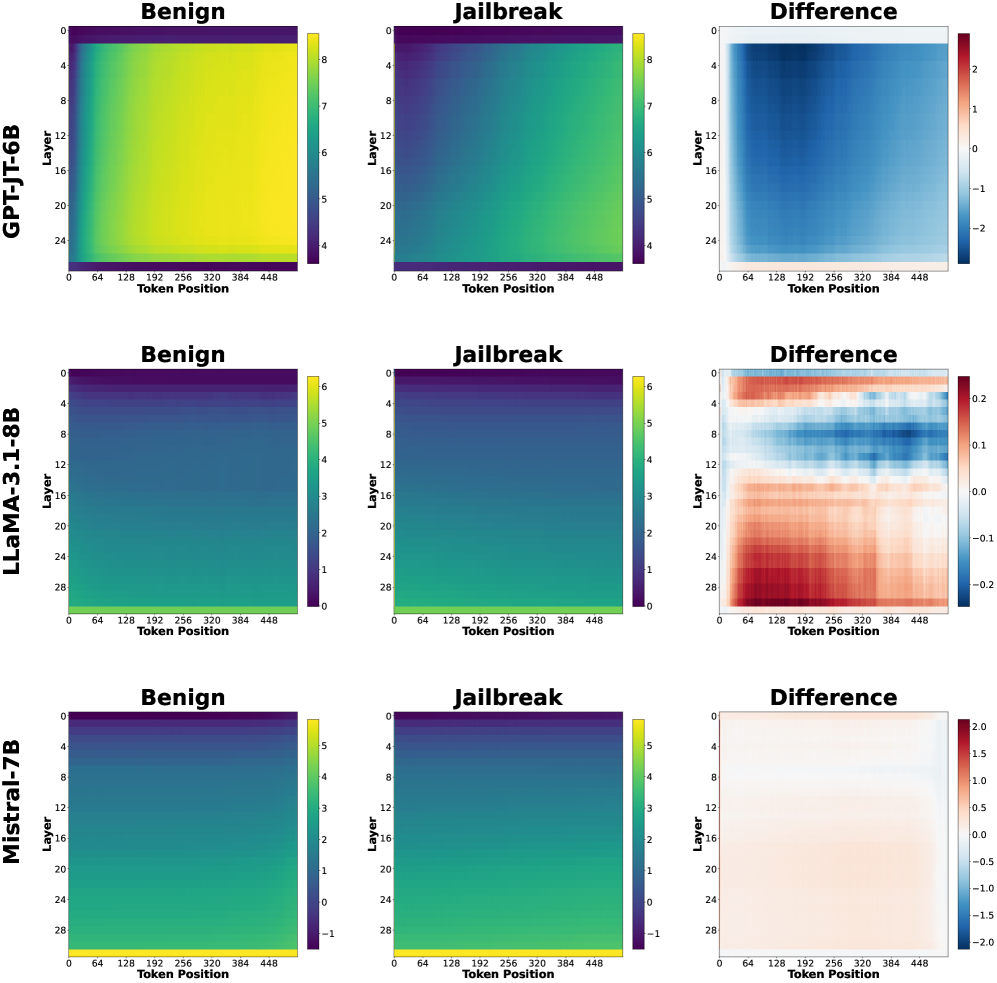

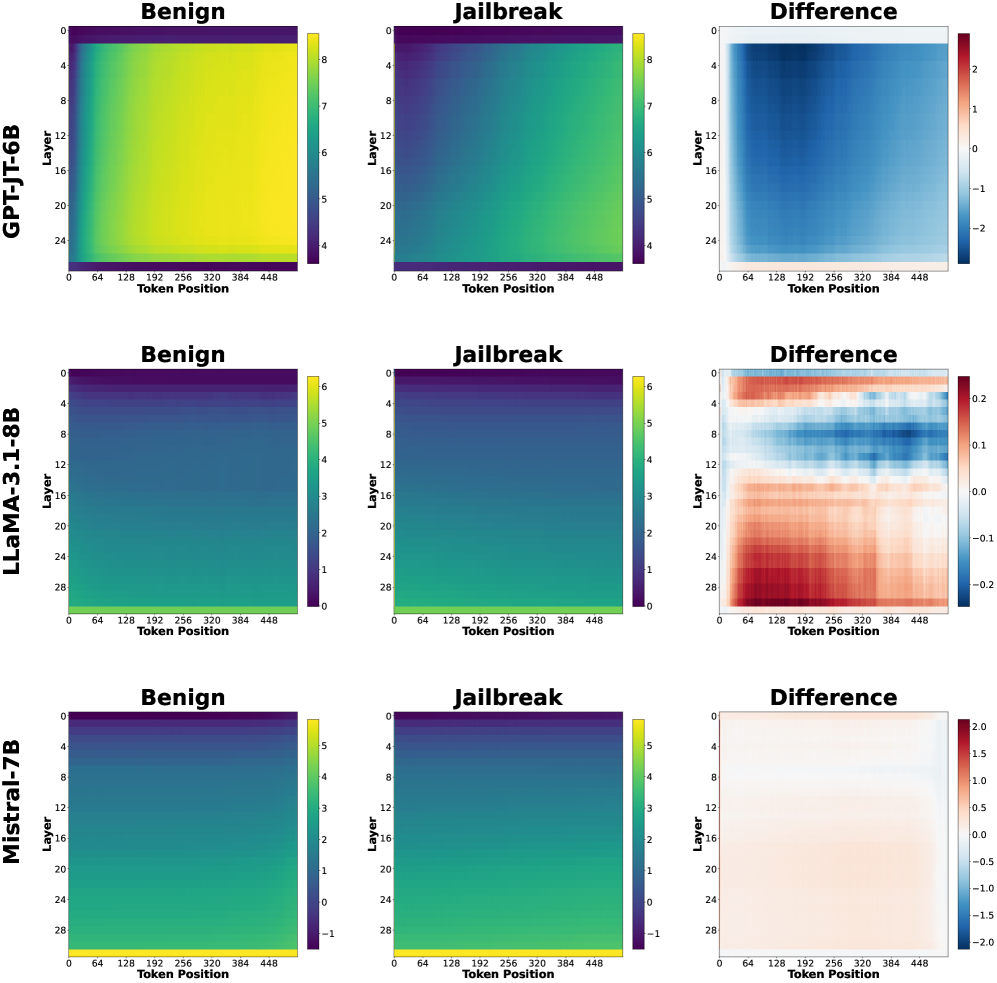

The image presents a 3x3 grid of heatmaps comparing the token embeddings of three language models (GPT-JT-6B, LLaMA-3.1-8B, and Mistral-7B) under two conditions, "Benign" and "Jailbreak". Each heatmap visualizes activation levels across layers and token positions. For each model, a third heatmap shows the "Difference" between the Benign and Jailbreak conditions.

### Components/Axes

* **Rows:** Each row represents a different language model: GPT-JT-6B (top), LLaMA-3.1-8B (middle), and Mistral-7B (bottom).

* **Columns:** Each of the three columns represents one panel type: "Benign", "Jailbreak", and "Difference".

* **Y-axis (Layer):** The y-axis represents the layer number of the language model. The layer numbers increase from top to bottom.

  * GPT-JT-6B: Layers range from 0 to 24.

  * LLaMA-3.1-8B: Layers range from 0 to 28.

  * Mistral-7B: Layers range from 0 to 28.

* **X-axis (Token Position):** The x-axis represents the token position, ranging from 0 to 448.

* **Color Scale (Benign & Jailbreak):** The color scale represents the activation level, with warmer colors (yellow) indicating higher activation and cooler colors (purple/dark blue) indicating lower activation.

  * GPT-JT-6B: Ranges from approximately 4 to 8.

  * LLaMA-3.1-8B: Ranges from approximately 0 to 6.

  * Mistral-7B: Ranges from approximately -1 to 5.

* **Color Scale (Difference):** The color scale represents the difference in activation levels between the "Benign" and "Jailbreak" conditions. Red indicates a positive difference (higher activation in "Benign"), and blue indicates a negative difference (higher activation in "Jailbreak").

  * GPT-JT-6B: Ranges from approximately -2 to 2.

  * LLaMA-3.1-8B: Ranges from approximately -0.2 to 0.2.

  * Mistral-7B: Ranges from approximately -2 to 2.
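The sign convention for the Difference column can be made concrete with a small sketch. It assumes the two conditions are available as layer-by-token grids of equal shape (plain nested lists here) and that the difference is taken as Benign minus Jailbreak, which is what the red/blue reading above implies. The function name `difference_map` and the toy values are hypothetical and are not taken from the figure.

```python
def difference_map(benign, jailbreak):
    """Element-wise Benign - Jailbreak for two layer x token grids."""
    if len(benign) != len(jailbreak):
        raise ValueError("grids must have the same number of layers")
    diff = []
    for b_row, j_row in zip(benign, jailbreak):
        if len(b_row) != len(j_row):
            raise ValueError("rows must have the same number of token positions")
        diff.append([b - j for b, j in zip(b_row, j_row)])
    return diff


# Toy 2-layer x 3-token example with made-up numbers.
benign = [[5.0, 5.5, 6.0], [7.0, 7.5, 8.0]]
jailbreak = [[6.0, 6.0, 6.0], [6.5, 7.0, 7.5]]
diff = difference_map(benign, jailbreak)
# Negative entries would be drawn blue (higher in Jailbreak),
# positive entries red (higher in Benign).
```

Under the opposite convention (Jailbreak minus Benign) every sign flips, so the colors only carry meaning once the subtraction order is fixed.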

### Detailed Analysis

#### GPT-JT-6B

* **Benign:** The heatmap shows relatively high activation across all layers and token positions, with a slight decrease in activation towards the bottom of the plot (the higher-numbered layers). The activation is generally uniform.

* **Jailbreak:** The heatmap shows a similar pattern to the "Benign" condition, but with slightly lower overall activation.

* **Difference:** The heatmap shows a clear negative difference (blue) at the top of the plot (layers approximately 0-8), indicating higher activation in the "Jailbreak" condition for these layers. The higher-numbered layers show a slight positive difference (red), indicating higher activation in the "Benign" condition.

#### LLaMA-3.1-8B

* **Benign:** The heatmap shows lower activation in the low-numbered layers at the top of the plot, gradually increasing towards the higher-numbered layers at the bottom.

* **Jailbreak:** The heatmap shows a similar pattern to the "Benign" condition.

* **Difference:** The heatmap shows a more complex pattern. The low-numbered layers (approximately 0-8) show a negative difference (blue), while the middle layers (approximately 8-20) show a positive difference (red). The highest-numbered layers show a mix of positive and negative differences.

#### Mistral-7B

* **Benign:** The heatmap shows a similar pattern to LLaMA-3.1-8B, with lower activation in the low-numbered layers at the top of the plot and increasing activation towards the higher-numbered layers at the bottom.

* **Jailbreak:** The heatmap shows a similar pattern to the "Benign" condition.

* **Difference:** The heatmap shows a relatively small difference between the "Benign" and "Jailbreak" conditions. The low-numbered layers show a slight negative difference (blue), while the higher-numbered layers show a slight positive difference (red).

### Key Observations

* The "Difference" heatmaps highlight the layers and token positions where the "Benign" and "Jailbreak" conditions diverge the most.

* GPT-JT-6B shows the most pronounced difference in the low-numbered layers (approximately 0-8), with higher activation in the "Jailbreak" condition.

* LLaMA-3.1-8B shows a more complex difference pattern, with both positive and negative differences across different layers.

* Mistral-7B shows the smallest difference between the two conditions.

* The token position does not appear to have a strong influence on the activation levels, as the heatmaps are relatively uniform along the x-axis.
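The observation about uniformity along the x-axis can be checked numerically rather than by eye. The sketch below assumes the heatmap values are available as a layer-by-token grid of nested lists. It compares the spread of per-layer means with the spread of per-token means. The function name `axis_variability` and the toy grid are hypothetical.

```python
from statistics import mean, pstdev


def axis_variability(grid):
    """Return (variation across layers, variation across token positions)."""
    # Spread of the per-layer means: how much the values change with depth.
    layer_means = [mean(row) for row in grid]
    # Spread of the per-token means: how much the values change along the prompt.
    token_means = [mean(col) for col in zip(*grid)]
    return pstdev(layer_means), pstdev(token_means)


# Toy grid: strong change between layers, almost none between token positions.
grid = [
    [4.0, 4.1, 4.0, 4.1],
    [6.0, 6.1, 6.0, 6.1],
    [8.0, 8.1, 8.0, 8.1],
]
across_layers, across_tokens = axis_variability(grid)
```

A grid whose `across_layers` value is much larger than its `across_tokens` value matches the pattern described above, where variation is mostly vertical.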

### Interpretation

The heatmaps provide a visual representation of how different language models respond to "Benign" and "Jailbreak" prompts. The "Difference" heatmaps are particularly useful for identifying the layers that are most affected by the "Jailbreak" condition.

The data suggests that GPT-JT-6B is more susceptible to "Jailbreak" attacks, as evidenced by the significant difference in activation levels in the upper layers. LLaMA-3.1-8B shows a more nuanced response, with different layers exhibiting different behaviors. Mistral-7B appears to be the most robust against "Jailbreak" attacks, as the difference between the two conditions is minimal.

These findings could be used to develop more effective defense mechanisms against "Jailbreak" attacks by focusing on the layers that are most vulnerable. Further research is needed to understand the underlying mechanisms that cause these differences in behavior.
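One way to make "the layers that are most affected" concrete is to rank layers by the mean absolute value of their row in the Difference grid. This is a minimal sketch under that assumption, not a method described in the source. The function name `rank_layers_by_divergence`, the `top_k` parameter, and the toy values are hypothetical.

```python
def rank_layers_by_divergence(diff, top_k=3):
    """Return the top_k (layer_index, mean_abs_difference) pairs, largest first."""
    scores = []
    for layer, row in enumerate(diff):
        scores.append((layer, sum(abs(v) for v in row) / len(row)))
    scores.sort(key=lambda item: item[1], reverse=True)
    return scores[:top_k]


# Toy 4-layer x 3-token difference grid with made-up numbers.
diff = [
    [-1.5, -1.0, -1.5],  # layer 0
    [-0.5, -0.5, -0.5],  # layer 1
    [0.0, 0.25, 0.0],    # layer 2
    [0.5, 1.0, 0.75],    # layer 3
]
top = rank_layers_by_divergence(diff, top_k=2)
```

Using the absolute value treats positive and negative differences alike, so a layer counts as divergent regardless of which condition has the higher activation.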


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Heatmaps: Activation Analysis of Language Models - Benign vs. Jailbreak

### Overview

The image presents a 3x3 grid of heatmaps, comparing the activation patterns of three different language models (GPT-JT-6B, LLaMA-3.1-8B, and Mistral-7B) under benign and jailbreak prompts. Each model has three heatmaps: one for benign prompts, one for jailbreak prompts, and one showing the difference between the two. The heatmaps visualize activation levels across layers (y-axis) and token positions (x-axis).

### Components/Axes

* **Models:** GPT-JT-6B, LLaMA-3.1-8B, Mistral-7B (arranged vertically)

* **Prompts:** Benign, Jailbreak, Difference (arranged horizontally)

* **X-axis:** Token Position (ranging from approximately 0 to 448, with markers at 64, 128, 192, 256, 320, 384, and 448)

* **Y-axis:** Layer (ranging from approximately 0 to 24, with markers at 4, 8, 12, 16, 20, and 24)

* **Color Scales:** Each heatmap has a distinct color scale representing activation levels.

  * GPT-JT-6B Difference: Ranges from -2 (blue) to 2 (red).

  * LLaMA-3.1-8B Difference: Ranges from -0.2 (blue) to 0.2 (red).

  * Mistral-7B Difference: Ranges from -1.5 (blue) to 1.5 (red).

  * Benign and Jailbreak heatmaps use a similar color scheme, but the scales are not explicitly labeled.

### Detailed Analysis

**GPT-JT-6B:**

* **Benign:** The heatmap is predominantly yellow/green, indicating moderate activation across all layers and token positions. There's a slight gradient, with higher activation in the middle layers (around layer 12-16).

* **Jailbreak:** Similar to the benign heatmap, it's mostly yellow/green. There's a slightly more pronounced gradient, with a potential increase in activation in the middle layers.

* **Difference:** The heatmap shows a clear pattern. The top half (layers 0-12) is predominantly blue (negative difference), indicating lower activation during jailbreak compared to benign prompts. The bottom half (layers 12-24) is predominantly red (positive difference), indicating higher activation during jailbreak. The strongest differences are around token positions 192-320.

**LLaMA-3.1-8B:**

* **Benign:** The heatmap is almost entirely yellow, indicating consistent moderate activation across all layers and token positions.

* **Jailbreak:** Similar to the benign heatmap, it's mostly yellow. There's a very subtle gradient.

* **Difference:** The heatmap shows very small differences. Most of the heatmap is white/light yellow (close to zero difference). There are some very faint blue and red areas, indicating minor activation differences.

**Mistral-7B:**

* **Benign:** The heatmap is predominantly yellow/green, with a gradient showing higher activation in the middle layers (around layer 12-16).

* **Jailbreak:** Similar to the benign heatmap, it's mostly yellow/green. There's a slightly more pronounced gradient.

* **Difference:** The heatmap shows a pattern similar to GPT-JT-6B, but more pronounced. The top half (layers 0-12) is predominantly blue (negative difference), and the bottom half (layers 12-24) is predominantly red (positive difference). The strongest differences are around token positions 192-320.

### Key Observations

* GPT-JT-6B and Mistral-7B exhibit a clear shift in activation patterns between benign and jailbreak prompts, with different layers responding differently.

* LLaMA-3.1-8B shows minimal activation differences between benign and jailbreak prompts.

* The difference heatmaps for GPT-JT-6B and Mistral-7B suggest that the early layers (0-12) are suppressed during jailbreak, while the later layers (12-24) are more activated.

* The most significant differences in activation appear to occur around token positions 192-320 for GPT-JT-6B and Mistral-7B.

### Interpretation

The heatmaps suggest that GPT-JT-6B and Mistral-7B employ different activation strategies when processing benign versus jailbreak prompts. The layer-specific activation differences indicate that these models might be utilizing different parts of their neural networks to handle potentially harmful requests. The suppression of early layers and activation of later layers during jailbreak could be a mechanism for generating responses that bypass safety constraints.

LLaMA-3.1-8B's minimal activation differences suggest that it might be less sensitive to the type of prompt or that its activation patterns are more consistent regardless of the input. This could indicate a different internal representation of language or a more robust safety mechanism.

The concentration of differences around token positions 192-320 might correspond to the part of the prompt where the jailbreak attempt is most evident. This could be a region where the model detects a potentially harmful request and adjusts its activation accordingly.
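A claim that differences concentrate in a particular token range can be tested with a sliding window over token positions. The sketch below assumes a layer-by-token Difference grid of nested lists. It first averages the absolute difference over layers for each token position, then scans fixed-width windows. The function name `strongest_token_window`, the `window` parameter, and the toy grid are hypothetical.

```python
def strongest_token_window(diff, window):
    """Return (start, end, score) for the token window with the largest mean |difference|."""
    n_tokens = len(diff[0])
    if not 0 < window <= n_tokens:
        raise ValueError("window must be between 1 and the number of token positions")
    # Mean absolute difference per token position, averaged over layers.
    per_token = [sum(abs(v) for v in col) / len(col) for col in zip(*diff)]
    best_start, best_score = 0, float("-inf")
    for start in range(n_tokens - window + 1):
        score = sum(per_token[start:start + window]) / window
        if score > best_score:
            best_start, best_score = start, score
    # The end index is exclusive, matching Python slicing.
    return best_start, best_start + window, best_score


# Toy 2-layer x 6-token grid with the largest differences at positions 2 and 3.
diff = [
    [0.0, 0.5, 1.0, 1.0, 0.0, 0.0],
    [0.0, -0.5, -1.0, -1.0, -0.5, 0.0],
]
start, end, score = strongest_token_window(diff, window=2)
```

On real data the window width would be chosen to match the span of interest, for example 128 positions for a 192-320 range.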

These findings highlight the importance of analyzing internal activations to understand how language models respond to different types of prompts and to identify potential vulnerabilities. The differences in activation patterns across models suggest that different architectures and training procedures can lead to varying levels of sensitivity to jailbreak attempts.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Heatmap Comparison: Benign vs. Jailbreak Activation Patterns Across Language Models

### Overview

The image displays a 3x3 grid of heatmaps comparing the internal activation patterns of three large language models (LLMs) under two conditions: "Benign" (standard prompts) and "Jailbreak" (adversarial prompts designed to bypass safety filters). The third column shows the "Difference" between the two conditions for each model. Each heatmap plots "Layer" (y-axis) against "Token Position" (x-axis), with color intensity representing a numerical value (likely activation magnitude or a related metric).

### Components/Axes

* **Grid Structure:** 3 rows (Models) x 3 columns (Conditions).

* **Row Labels (Left Side):**

  * Row 1: **GPT-JT-6B**

  * Row 2: **LLaMA-3.1-8B**

  * Row 3: **Mistral-7B**

* **Column Headers (Top):**

  * Column 1: **Benign**

  * Column 2: **Jailbreak**

  * Column 3: **Difference**

* **Axes (Per Heatmap):**

  * **Y-axis:** Label: **Layer**. Scale: 0 at top, increasing downward. Ticks: 0, 4, 8, 12, 16, 20, 24, 28 (for LLaMA-3.1-8B and Mistral-7B). GPT-JT-6B scale ends at 24.

  * **X-axis:** Label: **Token Position**. Scale: 0 at left, increasing rightward. Ticks: 0, 64, 128, 192, 256, 320, 384, 448.

* **Color Bars (Legends):** Located to the right of each individual heatmap.

  * **GPT-JT-6B (Benign & Jailbreak):** Scale from ~4 (dark purple) to ~8 (bright yellow).

  * **GPT-JT-6B (Difference):** Scale from -2 (dark blue) to +2 (dark red). Zero is white/light gray.

  * **LLaMA-3.1-8B (Benign & Jailbreak):** Scale from 0 (dark purple) to 6 (bright yellow).

  * **LLaMA-3.1-8B (Difference):** Scale from -0.2 (dark blue) to +0.2 (dark red). Zero is white.

  * **Mistral-7B (Benign & Jailbreak):** Scale from -1 (dark purple) to 5 (bright yellow).

  * **Mistral-7B (Difference):** Scale from -2.0 (dark blue) to +2.0 (dark red). Zero is white.

### Detailed Analysis

**1. GPT-JT-6B (Top Row)**

* **Benign Heatmap:** Shows a strong, consistent gradient. Values are lowest (dark purple, ~4) at Layer 0 across all token positions. Values increase steadily with layer depth, reaching the highest values (bright yellow, ~8) in the deepest layers (20-24). The pattern is uniform across token positions.

* **Jailbreak Heatmap:** Shows a similar but muted pattern. The gradient from low (purple) to high (green/yellow) with depth is present, but the overall intensity is lower. The deepest layers reach a medium green (~6-7), not the bright yellow seen in the Benign condition.

* **Difference Heatmap (Jailbreak - Benign):** Dominated by blue tones, indicating the Jailbreak condition has *lower* values than Benign across nearly all layers and token positions. The strongest negative difference (deepest blue, ~-2) occurs in the middle-to-deep layers (approx. 8-20). The difference is less pronounced in the very first and very last layers.

**2. LLaMA-3.1-8B (Middle Row)**

* **Benign Heatmap:** Shows a clear vertical gradient. Values are lowest (dark purple, 0) at Layer 0. They increase with depth, but the increase is not perfectly uniform. The highest values (yellow, ~6) appear in a band around layers 16-24. The pattern is largely consistent across token positions.

* **Jailbreak Heatmap:** Visually very similar to the Benign heatmap. The same vertical gradient and band of high activation in deep layers are present.

* **Difference Heatmap (Jailbreak - Benign):** Reveals subtle but systematic differences. The pattern is horizontally banded:

  * **Early Layers (0-8):** Predominantly blue (negative difference, Jailbreak < Benign), with the strongest negative values (~-0.2) around layers 4-8.

  * **Middle Layers (8-16):** A mix, with a notable band of red (positive difference, Jailbreak > Benign) around layers 10-14.

  * **Deep Layers (16-28):** Strongly red (positive difference), with the highest values (~+0.2) concentrated in the deepest layers (24-28). This indicates Jailbreak activations are *higher* than Benign in the model's final layers.
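The banded reading above can be summarized by averaging the Difference grid over each layer range. The sketch below assumes a Jailbreak minus Benign grid stored as nested lists and treats each band as a half-open range of row indices. The function name `band_means`, the band boundaries as dictionary entries, and the synthetic 28-row grid are illustrative assumptions, not values read from the figure.

```python
def band_means(diff, bands):
    """Average the difference over each named half-open layer range [lo, hi)."""
    result = {}
    for name, (lo, hi) in bands.items():
        values = [v for row in diff[lo:hi] for v in row]
        result[name] = sum(values) / len(values) if values else None
    return result


# Synthetic 28-layer x 4-token grid that mimics the banded description.
diff = (
    [[-0.1] * 4 for _ in range(8)]     # early layers: Jailbreak < Benign
    + [[0.05] * 4 for _ in range(8)]   # middle layers: weakly positive
    + [[0.2] * 4 for _ in range(12)]   # deep layers: Jailbreak > Benign
)
bands = {"early": (0, 8), "middle": (8, 16), "deep": (16, 28)}
summary = band_means(diff, bands)
```

A negative early mean together with a positive deep mean corresponds to the blue-to-red progression described for this model.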

**3. Mistral-7B (Bottom Row)**

* **Benign Heatmap:** Shows a smooth vertical gradient. Lowest values (dark purple, ~-1) at Layer 0, increasing to highest values (yellow, ~5) in the deepest layers (24-28). The pattern is uniform across token positions.

* **Jailbreak Heatmap:** Visually almost identical to the Benign heatmap. The same gradient and intensity are observed.

* **Difference Heatmap (Jailbreak - Benign):** Appears almost entirely white/very light orange, indicating near-zero difference across the entire layer-token space. The color bar ranges from -2 to +2, but the heatmap values are clustered very close to 0. There is a very faint, diffuse positive (light orange) tint in some middle-to-deep layers, but the magnitude is negligible compared to the scale.

### Key Observations

1. **Model-Specific Response to Jailbreak:** The three models exhibit fundamentally different internal activation responses to jailbreak prompts.

   * **GPT-JT-6B:** Shows a global *suppression* of activations (blue Difference map).

   * **LLaMA-3.1-8B:** Shows a *redistribution* of activations: suppressed in early layers, enhanced in deep layers (banded blue/red Difference map).

   * **Mistral-7B:** Shows *minimal change* in activation patterns (near-white Difference map).

2. **Layer-Wise Sensitivity:** For LLaMA-3.1-8B, the most significant positive changes (Jailbreak > Benign) occur in the final layers, suggesting these layers are most affected by the adversarial prompt.

3. **Token Position Invariance:** Across all models and conditions, the activation patterns are remarkably consistent along the horizontal (Token Position) axis. The primary variation is vertical (Layer-wise).
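The three response types named in the first observation can be expressed as a simple rule on the per-layer means of a Jailbreak minus Benign grid. This is an illustrative sketch only. The function name `classify_response`, the `threshold` parameter, the extra "enhancement" label, and the toy grids are all hypothetical, and a sensible threshold would depend on each model's color scale.

```python
def classify_response(diff, threshold):
    """Label a Jailbreak - Benign grid from the signs of its per-layer means."""
    layer_means = [sum(row) / len(row) for row in diff]
    negative = any(m < -threshold for m in layer_means)
    positive = any(m > threshold for m in layer_means)
    if negative and positive:
        return "redistribution"  # some layers suppressed, others enhanced
    if negative:
        return "suppression"     # layers only move downward
    if positive:
        return "enhancement"     # layers only move upward
    return "minimal change"      # every layer mean stays within the threshold


# Toy grids with made-up numbers, one per pattern described above.
suppressed = [[-1.0, -1.5], [-2.0, -1.8]]
redistributed = [[-0.2, -0.1], [0.1, 0.2]]
flat = [[0.01, -0.01], [0.02, 0.0]]

labels = [
    classify_response(suppressed, threshold=0.5),
    classify_response(redistributed, threshold=0.05),
    classify_response(flat, threshold=0.05),
]
```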

### Interpretation

This visualization provides a "fingerprint" of how different LLM architectures process adversarial inputs at an internal, layer-by-layer level.

* **GPT-JT-6B's** uniform suppression suggests the jailbreak prompt may cause a general dampening of the model's standard processing pathway, potentially indicating a form of internal conflict or confusion.

* **LLaMA-3.1-8B's** layered response is particularly insightful. The suppression in early layers might reflect an attempt to filter or ignore the adversarial instruction, while the heightened activation in deep layers could indicate the model ultimately engaging with and processing the harmful content more intensely than a benign prompt. This aligns with theories that later layers handle more abstract, task-specific execution.

* **Mistral-7B's** near-identical maps suggest its internal representations are highly robust or invariant to the specific jailbreak technique used here. Its processing pathway does not significantly deviate from the benign case, which could imply stronger inherent safety alignment or a different failure mode not captured by this metric.

**Conclusion:** The "Difference" heatmap is a powerful diagnostic tool. It reveals that jailbreaking is not a monolithic phenomenon; its internal mechanistic impact varies dramatically across model families. LLaMA-3.1-8B shows the most structured and interpretable shift, while Mistral-7B appears most resistant *under these specific conditions*. This analysis moves beyond simply asking "did the jailbreak work?" to asking "how did the model's internal state change when it was attempted?"


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Heatmap Analysis: Model Behavior Comparison Across Scenarios

### Overview

The image presents a comparative analysis of three language models (GPT-JT-6B, LLaMA-3.1-8B, Mistral-7B) across three scenarios: **Benign**, **Jailbreak**, and **Difference**. Each model is represented by three heatmaps showing values across **Layers** (vertical axis) and **Token Positions** (horizontal axis). Color gradients indicate magnitude, with legends specifying value ranges.

---

### Components/Axes

1. **Models**:

   - GPT-JT-6B (top row)

   - LLaMA-3.1-8B (middle row)

   - Mistral-7B (bottom row)

2. **Panels per Model**:

   - **Benign**: Baseline behavior

   - **Jailbreak**: Modified/stressed behavior

   - **Difference**: Signed difference between Benign and Jailbreak (the colorbar spans negative and positive values)

3. **Axes**:

   - **Vertical (Y-axis)**: Layers (0–28, incrementing by 4)

   - **Horizontal (X-axis)**: Token Positions (0–448, incrementing by 64)

4. **Legends**:

   - Right-aligned colorbars with value ranges:

     - GPT-JT-6B: 4 to 8 (Benign), 4 to 8 (Jailbreak), -2 to 2 (Difference)

     - LLaMA-3.1-8B: 0 to 6 (Benign), 0 to 6 (Jailbreak), -2 to 2 (Difference)

     - Mistral-7B: -1 to 5 (Benign), -1 to 5 (Jailbreak), -2 to 2 (Difference)

---

### Detailed Analysis

#### GPT-JT-6B

- **Benign**: Uniform yellow gradient (values ~7–8 across all layers/tokens).

- **Jailbreak**: Green gradient (values ~4–6), indicating reduced activity.

- **Difference**: Blue gradient (values ~-2 to 0), showing consistent decline in Jailbreak.

#### LLaMA-3.1-8B

- **Benign**: Dark blue gradient (values ~0–3), lower baseline than GPT-JT-6B.

- **Jailbreak**: Lighter blue gradient (values ~3–6), moderate increase.

- **Difference**: Mixed red/blue regions (values ~-1 to +1), indicating variable layer/token sensitivity.

#### Mistral-7B

- **Benign**: Green gradient (values ~1–4), moderate baseline.

- **Jailbreak**: Darker blue gradient (values ~-1 to 2), slight decline.

- **Difference**: Neutral gradient (values ~-0.5 to +0.5), minimal changes.

---

### Key Observations

1. **GPT-JT-6B**:

   - Highest baseline values in Benign (yellow).

   - Sharp drop in Jailbreak (green), with uniform decline across all layers/tokens.

   - Difference heatmap shows consistent negative values (-2 to 0), suggesting the jailbreak prompts lower the plotted values.

2. **LLaMA-3.1-8B**:

   - Lower baseline (dark blue) but notable variability in Jailbreak (lighter blue).

   - Difference heatmap reveals red regions (positive values) in lower layers (0–12), indicating that values in some layers increase under jailbreak.

3. **Mistral-7B**:

   - Most stable values: minimal difference between scenarios.

   - Difference heatmap is nearly neutral, with slight red in lower layers (0–8).

---

### Interpretation

- **Model Robustness**:

  - GPT-JT-6B exhibits the largest drop in plotted values under jailbreak, suggesting vulnerability.

  - Mistral-7B shows the least sensitivity to jailbreak, indicating robustness.

- **Layer-Specific Behavior**:

  - LLaMA-3.1-8B’s red regions in Difference (layers 0–12) imply lower layers may adapt better to jailbreak prompts.

  - GPT-JT-6B’s uniform decline suggests systemic sensitivity across all layers.

- **Token Position Impact**:

  - No clear token-position trends in Difference heatmaps, indicating effects are layer-dependent rather than position-dependent.

---

### Critical Insights

- **Jailbreak Impact**:

  - GPT-JT-6B’s uniform decline (-2 to 0) suggests the jailbreak prompts lower the plotted values uniformly.

  - LLaMA-3.1-8B’s mixed red/blue Difference regions highlight layer-specific vulnerabilities.

- **Design Implications**:

  - Models with higher baseline values (GPT-JT-6B) may require stricter safeguards.

  - Mistral-7B’s stability could make it preferable for safety-critical applications.

---

### Uncertainties

- Exact numerical values are approximated from color gradients; precise thresholds require raw data.

- Token-position trends are ambiguous due to uniform coloration in Difference heatmaps.
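The first uncertainty can be roughly quantified. Reading a value off a colorbar amounts to linear interpolation between its end labels, and the reading error scales with the colorbar range. The sketch below assumes a linear colorbar, which the source does not confirm. The function names `colorbar_value` and `reading_uncertainty` and the number of distinguishable shades are hypothetical.

```python
def colorbar_value(fraction, vmin, vmax):
    """Map a position along a linear colorbar (0.0 = bottom, 1.0 = top) to a value."""
    if not 0.0 <= fraction <= 1.0:
        raise ValueError("fraction must be in [0, 1]")
    return vmin + fraction * (vmax - vmin)


def reading_uncertainty(vmin, vmax, distinguishable_steps):
    """Rough half-width of the error if only this many shades can be told apart."""
    return (vmax - vmin) / (2 * distinguishable_steps)


# A color halfway up a -2 to 2 Difference colorbar.
midpoint = colorbar_value(0.5, -2.0, 2.0)
# Assumed: about 8 distinguishable shades on a 4 to 8 colorbar.
error = reading_uncertainty(4.0, 8.0, 8)
```

A wider colorbar range gives a proportionally larger reading error for the same number of distinguishable shades, which is why small differences are hard to read on a -2 to 2 scale.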
