## Bar Chart: Evaluation on Verification and Correction (Base Model: Qwen2.5-Math-7B)

### Overview

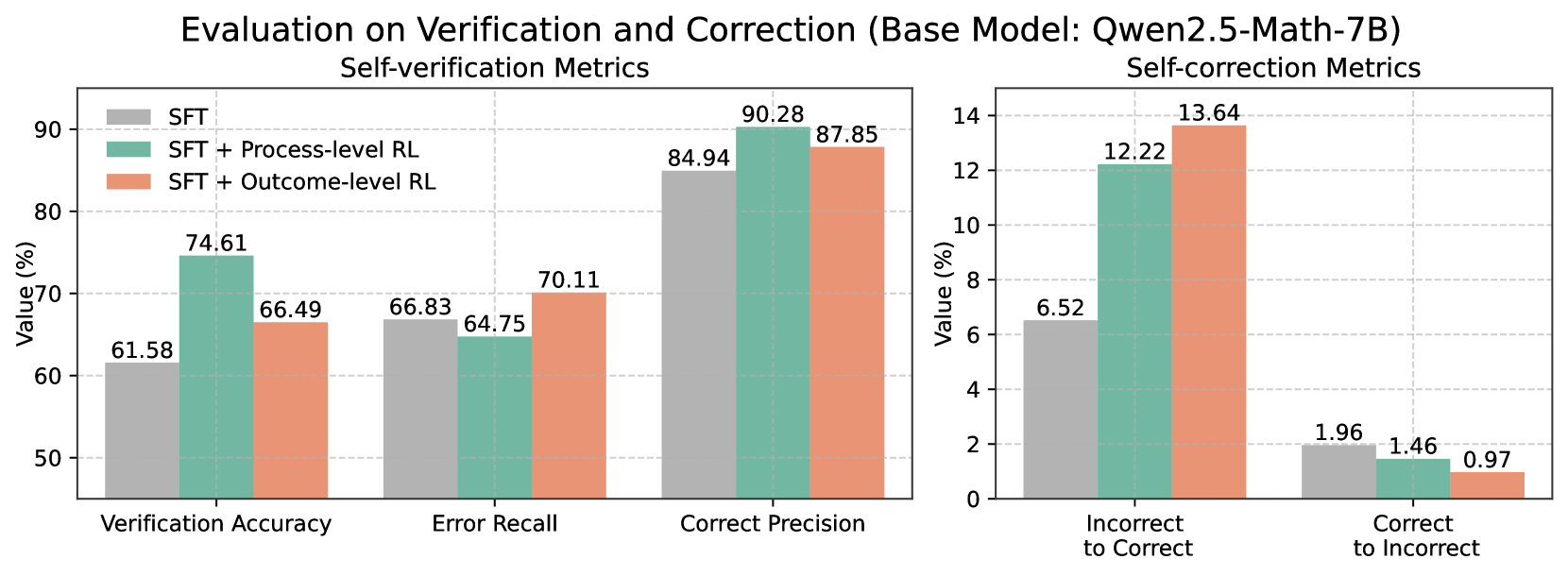

This image contains two bar charts side-by-side, presenting the results of self-verification and self-correction metrics for a base model named "Qwen2.5-Math-7B". The metrics are evaluated across three different configurations: "SFT", "SFT + Process-level RL", and "SFT + Outcome-level RL". The left chart focuses on "Self-verification Metrics" and the right chart on "Self-correction Metrics".

### Components/Axes

**Overall Title:** Evaluation on Verification and Correction (Base Model: Qwen2.5-Math-7B)

**Left Chart:**

* **Title:** Self-verification Metrics

* **X-axis Title:** None; the metric categories serve as the axis labels.

* **X-axis Categories:** Verification Accuracy, Error Recall, Correct Precision

* **Y-axis Title:** Value (%)

* **Y-axis Scale:** 50 to 90, with major ticks at 50, 60, 70, 80, 90.

* **Legend:** Located in the top-left quadrant of the left chart.

  * **SFT:** Represented by a grey color.

  * **SFT + Process-level RL:** Represented by a teal/green color.

  * **SFT + Outcome-level RL:** Represented by a coral/orange color.

**Right Chart:**

* **Title:** Self-correction Metrics

* **X-axis Title:** None; the metric categories serve as the axis labels.

* **X-axis Categories:** Incorrect to Correct, Correct to Incorrect

* **Y-axis Title:** Value (%)

* **Y-axis Scale:** 0 to 14, with major ticks at 0, 2, 4, 6, 8, 10, 12, 14.

* **Legend:** The legend from the left chart applies to both charts.

### Detailed Analysis

**Left Chart: Self-verification Metrics**

* **Verification Accuracy:**

  * SFT (Grey): 61.58%

  * SFT + Process-level RL (Teal): 74.61%

  * SFT + Outcome-level RL (Coral): 66.49%

* **Error Recall:**

  * SFT (Grey): 66.83%

  * SFT + Process-level RL (Teal): 64.75%

  * SFT + Outcome-level RL (Coral): 70.11%

* **Correct Precision:**

  * SFT (Grey): 84.94%

  * SFT + Process-level RL (Teal): 90.28%

  * SFT + Outcome-level RL (Coral): 87.85%
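The chart does not define the three self-verification metrics. The sketch below shows one plausible reading, assuming they follow the usual confusion-matrix definitions: Verification Accuracy as the share of all verdicts that are right, Error Recall as the share of incorrect answers that get flagged, and Correct Precision as the share of "verified correct" answers that really are correct. The `VerificationCounts` container and the example counts are hypothetical and are not taken from the figure.

```python
from dataclasses import dataclass


@dataclass
class VerificationCounts:
    # Hypothetical container: the chart does not publish these counts.
    right_judged_right: int  # answer is correct, model verifies it as correct
    right_judged_wrong: int  # answer is correct, model flags it as an error
    wrong_judged_right: int  # answer is incorrect, model verifies it as correct
    wrong_judged_wrong: int  # answer is incorrect, model flags it as an error


def pct(numerator: int, denominator: int) -> float:
    """Return a percentage, or 0.0 when the denominator is zero."""
    return 100.0 * numerator / denominator if denominator else 0.0


def verification_metrics(c: VerificationCounts) -> dict[str, float]:
    """Assumed confusion-matrix definitions of the three metrics."""
    total = (c.right_judged_right + c.right_judged_wrong
             + c.wrong_judged_right + c.wrong_judged_wrong)
    return {
        # Share of all verdicts that match the true status of the answer.
        "Verification Accuracy": pct(c.right_judged_right + c.wrong_judged_wrong, total),
        # Share of truly incorrect answers that the model flags as errors.
        "Error Recall": pct(c.wrong_judged_wrong,
                            c.wrong_judged_right + c.wrong_judged_wrong),
        # Share of answers judged correct that are truly correct.
        "Correct Precision": pct(c.right_judged_right,
                                 c.right_judged_right + c.wrong_judged_right),
    }


# Made-up counts, purely to exercise the function.
example = verification_metrics(VerificationCounts(60, 10, 10, 20))
for name, value in example.items():
    print(f"{name}: {value:.2f}%")
```

Under these assumed definitions a model can raise Correct Precision while lowering Error Recall, because the two metrics have different denominators.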

**Right Chart: Self-correction Metrics**

* **Incorrect to Correct:**

  * SFT (Grey): 6.52%

  * SFT + Process-level RL (Teal): 12.22%

  * SFT + Outcome-level RL (Coral): 13.64%

* **Correct to Incorrect:**

  * SFT (Grey): 1.96%

  * SFT + Process-level RL (Teal): 1.46%

  * SFT + Outcome-level RL (Coral): 0.97%

### Key Observations

* **Verification Accuracy:** "SFT + Process-level RL" shows the highest Verification Accuracy (74.61%), well ahead of both "SFT + Outcome-level RL" (66.49%) and "SFT" (61.58%).

* **Error Recall:** "SFT + Outcome-level RL" has the highest Error Recall (70.11%), followed by "SFT" (66.83%). "SFT + Process-level RL" has the lowest Error Recall (64.75%).

* **Correct Precision:** "SFT + Process-level RL" achieves the highest Correct Precision (90.28%), with "SFT + Outcome-level RL" (87.85%) and "SFT" (84.94%) following.

* **Incorrect to Correct:** Adding RL roughly doubles the rate at which incorrect answers are corrected. "SFT + Outcome-level RL" shows the highest value (13.64%), followed closely by "SFT + Process-level RL" (12.22%), both substantially higher than "SFT" (6.52%).

* **Correct to Incorrect:** Adding RL reduces the rate at which correct answers are changed into incorrect ones. "SFT" has the highest rate (1.96%), while "SFT + Outcome-level RL" has the lowest (0.97%), with "SFT + Process-level RL" in between (1.46%).
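The comparisons above can be reproduced from the values read off the chart. The sketch below stores those values and reports, for each metric, the best configuration and the change relative to SFT in percentage points. The names `RESULTS`, `LOWER_IS_BETTER`, `best_config`, and `delta_vs_sft` are illustrative, and treating "Correct to Incorrect" as the only lower-is-better metric is an assumption about how the chart is meant to be read.

```python
# Values (in %) as read from the two bar charts.
RESULTS = {
    "Verification Accuracy": {"SFT": 61.58, "SFT + Process-level RL": 74.61, "SFT + Outcome-level RL": 66.49},
    "Error Recall": {"SFT": 66.83, "SFT + Process-level RL": 64.75, "SFT + Outcome-level RL": 70.11},
    "Correct Precision": {"SFT": 84.94, "SFT + Process-level RL": 90.28, "SFT + Outcome-level RL": 87.85},
    "Incorrect to Correct": {"SFT": 6.52, "SFT + Process-level RL": 12.22, "SFT + Outcome-level RL": 13.64},
    "Correct to Incorrect": {"SFT": 1.96, "SFT + Process-level RL": 1.46, "SFT + Outcome-level RL": 0.97},
}

# Assumption: a lower "Correct to Incorrect" rate is the desirable direction.
LOWER_IS_BETTER = {"Correct to Incorrect"}


def best_config(metric: str) -> str:
    """Return the configuration with the most favorable value for a metric."""
    values = RESULTS[metric]
    pick = min if metric in LOWER_IS_BETTER else max
    return pick(values, key=values.get)


def delta_vs_sft(metric: str) -> dict[str, float]:
    """Return each RL configuration's change relative to SFT, in percentage points."""
    values = RESULTS[metric]
    baseline = values["SFT"]
    return {name: round(value - baseline, 2)
            for name, value in values.items() if name != "SFT"}


for metric in RESULTS:
    print(f"{metric}: best = {best_config(metric)}, delta vs SFT = {delta_vs_sft(metric)}")
```

The only negative change in a higher-is-better metric is Error Recall for "SFT + Process-level RL" (-2.08 points).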

### Interpretation

The data suggests that adding reinforcement learning (RL) on top of SFT, whether process-level or outcome-level, improves most self-verification and self-correction metrics of "Qwen2.5-Math-7B" relative to the SFT-only baseline. The one exception is "Error Recall", where "SFT + Process-level RL" (64.75%) falls slightly below "SFT" (66.83%).

Specifically, "SFT + Process-level RL" leads in "Verification Accuracy" and "Correct Precision", indicating that its verdicts are right more often overall and that the answers it judges correct are more often actually correct. This configuration also shows a substantial improvement in the "Incorrect to Correct" rate (12.22% versus 6.52% for "SFT"), suggesting it is more adept at fixing its own mistakes.

"SFT + Outcome-level RL" also shows a large gain in the "Incorrect to Correct" rate, even surpassing "SFT + Process-level RL" on this metric. It also leads in "Error Recall", implying it is better at identifying errors that need attention. Its "Correct Precision" is somewhat lower than that of "SFT + Process-level RL" (87.85% versus 90.28%), but it has the lowest "Correct to Incorrect" rate, which could imply a more conservative approach to corrections that avoids unnecessary changes to correct outputs.

The "SFT" baseline is the weakest configuration on four of the five metrics; "Error Recall", where it sits between the two RL variants, is the exception. The chart shows no trade-off between the "Incorrect to Correct" and "Correct to Incorrect" rates: both RL variants correct more errors than "SFT" while also changing fewer correct outputs into incorrect ones, and "SFT + Outcome-level RL" does better than "SFT + Process-level RL" on both counts.

Overall, the results indicate that RL fine-tuning is a promising direction for improving the self-evaluation and self-correction abilities of large language models, with "SFT + Outcome-level RL" showing a strong balance of correcting errors and preserving correct outputs.
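One rough way to summarize the balance between fixing errors and preserving correct outputs is the difference between the two self-correction rates. The sketch below computes it from the chart values. It assumes both rates are measured over the same set of problems, which the chart does not state; if the denominators differ, the difference is only a heuristic score and not a change in accuracy. `CORRECTION` and `net_correction` are illustrative names.

```python
# (Incorrect to Correct %, Correct to Incorrect %) as read from the right chart.
CORRECTION = {
    "SFT": (6.52, 1.96),
    "SFT + Process-level RL": (12.22, 1.46),
    "SFT + Outcome-level RL": (13.64, 0.97),
}


def net_correction(incorrect_to_correct: float, correct_to_incorrect: float) -> float:
    """Return the net effect of self-correction in percentage points.

    Assumption: both rates share a denominator, so they can be subtracted.
    """
    return round(incorrect_to_correct - correct_to_incorrect, 2)


net = {name: net_correction(*rates) for name, rates in CORRECTION.items()}
for name, value in net.items():
    print(f"{name}: net correction = {value:+.2f} points")
```

Under this assumption the ordering is "SFT" < "SFT + Process-level RL" < "SFT + Outcome-level RL", which matches the reading that outcome-level RL gives the best balance on the self-correction metrics.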