
## Diagram: Model Training Pipeline

### Overview

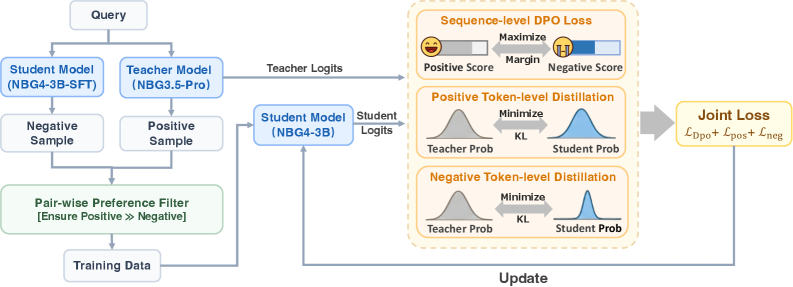

This diagram illustrates a model training pipeline involving a teacher model, a student model, and a preference filter, culminating in a joint loss function. The pipeline appears to be focused on refining a student model using knowledge distillation and direct preference optimization (DPO).

### Components/Axes

The diagram consists of several interconnected blocks representing different stages of the training process. Key components include:

* **Query:** The initial input to the system.

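* **Teacher Model (NBG3.5-Pro):** A pre-trained model whose logits serve as the distillation target.

* **Student Model (NBG4-3B-SFT):** The supervised fine-tuned (SFT) student checkpoint that generates the candidate samples.

* **Student Model (NBG4-3B):** The student model that receives the parameter updates from the joint loss.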

* **Negative Sample:** A sample representing an undesirable output.

* **Positive Sample:** A sample representing a desired output.

* **Pair-wise Preference Filter:** A component ensuring the positive sample is preferred over the negative sample.

* **Training Data:** The filtered sample pairs used to update the student model.

* **Teacher Logits:** The output of the teacher model.

* **Student Logits:** The output of the student model.

* **Sequence-level DPO Loss:** A loss function optimizing the model based on sequence-level preferences.

* **Positive Token-level Distillation:** A distillation process focusing on positive samples.

* **Negative Token-level Distillation:** A distillation process focusing on negative samples.

* **Joint Loss:** The combined loss function.

* **Update:** An arrow indicating the direction of model updates.

### Detailed Analysis

The flow starts with a "Query", which is fed into both the "Teacher Model (NBG3.5-Pro)" and the "Student Model (NBG4-3B-SFT)". The teacher model outputs "Teacher Logits". The student model generates a "Negative Sample" and a "Positive Sample", which pass through a "Pair-wise Preference Filter" labeled "Ensure Positive >> Negative". The filter outputs "Training Data".
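The filtering step can be illustrated with a minimal sketch. It assumes a scalar scoring function (for example, a judge or reward model) and reads "Positive >> Negative" as "the positive sample outscores the negative one by at least a margin". The diagram specifies neither the scorer nor the margin, so both, like the names `PreferencePair` and `preference_filter`, are hypothetical.

```python
from typing import Callable, List, NamedTuple, Sequence


class PreferencePair(NamedTuple):
    """One query with two candidate responses sampled from the student (NBG4-3B-SFT)."""
    query: str
    positive: str
    negative: str


def preference_filter(
    pairs: Sequence[PreferencePair],
    score: Callable[[str, str], float],
    margin: float = 1.0,
) -> List[PreferencePair]:
    """Keep only pairs where score(positive) exceeds score(negative) by at least `margin`.

    `score` (hypothetical judge or reward model) and `margin` are assumptions:
    the diagram only states "Ensure Positive >> Negative".
    """
    kept = []
    for pair in pairs:
        gap = score(pair.query, pair.positive) - score(pair.query, pair.negative)
        if gap >= margin:  # ">>" read as "better by a clear margin"
            kept.append(pair)
    return kept


if __name__ == "__main__":
    # Made-up scores standing in for a real scorer.
    toy_scores = {"correct, well-explained": 0.9, "correct, terse": 0.5, "incorrect": 0.1}
    pairs = [
        PreferencePair("q1", "correct, well-explained", "incorrect"),
        PreferencePair("q2", "correct, terse", "incorrect"),
    ]
    training_data = preference_filter(pairs, lambda q, r: toy_scores[r], margin=0.5)
    print(training_data)  # only the q1 pair survives (gap 0.8 vs. 0.4)
```

Raising `margin` trades dataset size for cleaner preference signal: fewer pairs survive, but the ones that do are less likely to be mislabeled.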

The "Training Data" and "Teacher Logits" then feed three loss components: a sequence-level DPO loss and two token-level distillation terms.

* **Sequence-level DPO Loss:** This block shows a horizontal bar with "Maximize Margin" labeled above it. The bar is divided into two sections: a light gray section labeled "Positive Score" with a smiling face icon, and a dark gray section labeled "Negative Score" with a frowning face icon.

* **Positive Token-level Distillation:** This block shows two overlapping bell curves. The left curve is gray and labeled "Teacher Prob". The right curve is blue and labeled "Student Prob". The space between the curves is labeled "KL". The text "Minimize" is above the block.

* **Negative Token-level Distillation:** Similar to the positive distillation, this block shows two overlapping bell curves. The left curve is gray and labeled "Teacher Prob". The right curve is blue and labeled "Student Prob". The space between the curves is labeled "KL". The text "Minimize" is above the block.
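The three blocks above can be written as loss terms. The formulas below are a sketch under stated assumptions: $\mathcal{L}_{\mathrm{DPO}}$ follows the standard DPO objective, with $x$ the query, $y^+$ and $y^-$ the positive and negative samples, $\pi_\theta$ the student being trained, $\pi_{\mathrm{ref}}$ a frozen reference model (possibly NBG4-3B-SFT, which the diagram does not confirm), and $\beta$ a temperature. $p_T$ and $p_\theta$ denote the teacher and student next-token distributions; the KL direction and the per-token averaging are also assumptions, since the diagram shows only "KL" and "Minimize".

$$
\mathcal{L}_{\mathrm{DPO}} = -\log \sigma\!\left(\beta\left[\log\frac{\pi_\theta(y^+\mid x)}{\pi_{\mathrm{ref}}(y^+\mid x)} - \log\frac{\pi_\theta(y^-\mid x)}{\pi_{\mathrm{ref}}(y^-\mid x)}\right]\right)
$$

$$
\mathcal{L}_{\mathrm{pos}} = \frac{1}{|y^+|}\sum_{t=1}^{|y^+|} \mathrm{KL}\!\left(p_T(\cdot\mid x, y^+_{<t}) \,\middle\|\, p_\theta(\cdot\mid x, y^+_{<t})\right)
$$

$$
\mathcal{L}_{\mathrm{neg}} = \frac{1}{|y^-|}\sum_{t=1}^{|y^-|} \mathrm{KL}\!\left(p_T(\cdot\mid x, y^-_{<t}) \,\middle\|\, p_\theta(\cdot\mid x, y^-_{<t})\right)
$$

Minimizing $\mathcal{L}_{\mathrm{DPO}}$ increases the bracketed log-ratio difference, which corresponds to the "Maximize Margin" label between the positive and negative scores.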

Finally, these three loss components are combined into a "Joint Loss" represented by the equation: `L_DPO + L_pos + L_neg`. An arrow labeled "Update" points from the "Joint Loss" block back to the "Student Model (NBG4-3B)" block.
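The joint objective can be sketched end to end in plain Python. This is a minimal, framework-free illustration under assumptions the diagram does not state: the DPO term uses the standard formulation with a frozen reference model and temperature `beta` (treating NBG4-3B-SFT as the reference is a guess), the distillation terms use KL(teacher ‖ student) averaged over token positions, and the weights `w_dpo`, `w_pos`, `w_neg` are hypothetical, defaulting to 1 to match the plain sum in the diagram. All function names and toy numbers are illustrative.

```python
import math
from typing import List, Sequence

Logits = Sequence[float]  # vocabulary logits for a single token position


def log_softmax(logits: Logits) -> List[float]:
    """Numerically stable log-softmax over one logit vector."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_z for x in logits]


def token_kl(teacher: Logits, student: Logits) -> float:
    """KL(teacher || student) at one token position, computed from raw logits (assumed direction)."""
    lt, ls = log_softmax(teacher), log_softmax(student)
    return sum(math.exp(a) * (a - b) for a, b in zip(lt, ls))


def distill_loss(teacher_seq: Sequence[Logits], student_seq: Sequence[Logits]) -> float:
    """Mean per-token KL over one sample; used for both L_pos and L_neg."""
    if not teacher_seq or len(teacher_seq) != len(student_seq):
        raise ValueError("teacher and student sequences must be non-empty and aligned")
    return sum(token_kl(t, s) for t, s in zip(teacher_seq, student_seq)) / len(teacher_seq)


def sequence_logprob(logit_seq: Sequence[Logits], token_ids: Sequence[int]) -> float:
    """Sum of log p(token_t | prefix) over a sample, from per-position logits."""
    return sum(log_softmax(logits)[tok] for logits, tok in zip(logit_seq, token_ids))


def dpo_loss(pol_pos: float, pol_neg: float, ref_pos: float, ref_neg: float,
             beta: float = 0.1) -> float:
    """-log sigmoid(beta * margin), comparing policy and reference log-ratios."""
    margin = beta * ((pol_pos - ref_pos) - (pol_neg - ref_neg))
    # softplus(-margin) == -log(sigmoid(margin)), written to avoid overflow
    return max(-margin, 0.0) + math.log1p(math.exp(-abs(margin)))


def joint_loss(l_dpo: float, l_pos: float, l_neg: float,
               w_dpo: float = 1.0, w_pos: float = 1.0, w_neg: float = 1.0) -> float:
    """L_DPO + L_pos + L_neg, with hypothetical weights defaulting to a plain sum."""
    return w_dpo * l_dpo + w_pos * l_pos + w_neg * l_neg


if __name__ == "__main__":
    # Toy 3-token vocabulary with 2-token positive and negative samples (made-up numbers).
    pos_ids, neg_ids = [0, 1], [2, 2]
    teacher_pos = [[2.0, 0.5, -1.0], [0.1, 1.5, 0.0]]
    student_pos = [[1.0, 0.8, -0.5], [0.3, 1.0, 0.2]]
    teacher_neg = [[0.0, 0.2, 0.4], [0.5, 0.1, 0.3]]
    student_neg = [[0.1, 0.1, 0.9], [0.2, 0.0, 1.1]]
    # Stand-in log-probs; a real setup would score the samples with the frozen reference model.
    ref_pos, ref_neg = -2.0, -2.5

    l_dpo = dpo_loss(sequence_logprob(student_pos, pos_ids),
                     sequence_logprob(student_neg, neg_ids), ref_pos, ref_neg)
    l_pos = distill_loss(teacher_pos, student_pos)
    l_neg = distill_loss(teacher_neg, student_neg)
    print(f"L_DPO={l_dpo:.4f} L_pos={l_pos:.4f} L_neg={l_neg:.4f} "
          f"joint={joint_loss(l_dpo, l_pos, l_neg):.4f}")
```

In a real training loop these quantities would be batched tensors in an autodiff framework, so that the joint loss can be back-propagated into NBG4-3B; plain floats are used here only to keep the arithmetic visible.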

### Key Observations

The diagram highlights a multi-faceted training approach combining direct preference optimization (DPO) at the sequence level with token-level distillation for both positive and negative samples. The use of a teacher model suggests a knowledge distillation strategy. The preference filter is crucial for ensuring the training data reflects desired model behavior.

### Interpretation

This diagram depicts a training pipeline designed to align a student model with both a teacher model and a preference ordering over outputs. The DPO loss pushes the student toward the preferred (positive) sample and away from the dispreferred (negative) one, while the two distillation losses make the student's token-level probability distributions match the teacher's on both kinds of sample. The preference filter ensures that the training data respects the desired ordering, and the joint loss combines the three objectives into a single update signal for the student. Pairing sequence-level and token-level terms suggests a focus on both overall response quality and fine-grained token accuracy.

The model names (NBG3.5-Pro, NBG4-3B-SFT, NBG4-3B) indicate specific versions or configurations of the models involved. The diagram provides no quantitative data; it illustrates the *process* of training. The approach belongs to the family of preference-based alignment methods that also includes reinforcement learning from human feedback (RLHF). DPO differs from RLHF in that it optimizes directly on preference pairs, without training a separate reward model or running a reinforcement-learning loop.