
## Screenshot: ChatGPT Interaction

### Overview

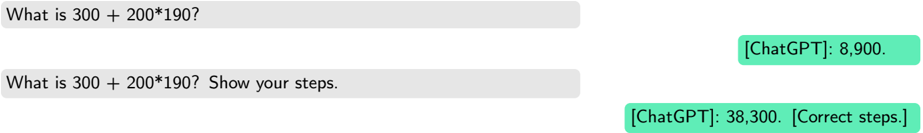

This image is a screenshot of a ChatGPT interface showing two user prompts and ChatGPT's responses. Both prompts ask the same arithmetic question. The first response is incorrect; the second, given when the user asks the model to show its work, is correct.

### Components

The screenshot contains the following elements:

* **Input Fields:** Two grey rectangular input fields containing user prompts.

* **ChatGPT Responses:** Two light-green rectangular boxes containing ChatGPT's responses.

* **Text:** The prompts and responses are displayed as text.

### Content Details

The screenshot displays the following text:

1. **Prompt 1:** "What is 300 + 200\*190?"
   * **ChatGPT Response 1:** "[ChatGPT]: 8,900."

2. **Prompt 2:** "What is 300 + 200\*190? Show your steps."
   * **ChatGPT Response 2:** "[ChatGPT]: 38,300. [Correct steps]."

### Key Observations

* ChatGPT initially provides an incorrect answer to the calculation (8,900).

* When asked to show its steps, ChatGPT gives a different and correct answer (38,300). The screenshot marks the accompanying working as "[Correct steps]" rather than reproducing it.

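* The order of operations (multiplication before addition) determines the correct answer: 300 + (200 × 190) = 300 + 38,000 = 38,300. The first answer of 8,900 does not match left-to-right evaluation either ((300 + 200) × 190 = 95,000), so the error cannot be explained simply by the model ignoring operator precedence.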
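As a quick check of the arithmetic in these observations, the following Python sketch evaluates the prompt's expression with standard precedence and, for comparison, strictly left to right. It then tests whether the first answer (8,900) matches either result. The variable names are illustrative only.

```python
# Standard precedence: multiplication binds tighter than addition,
# so Python evaluates this as 300 + (200 * 190).
correct = 300 + 200 * 190

# Naive left-to-right evaluation, forced with parentheses: (300 + 200) * 190.
left_to_right = (300 + 200) * 190

# ChatGPT's first answer, as shown in the screenshot.
first_answer = 8_900

print(f"standard precedence: {correct:,}")  # standard precedence: 38,300
print(f"left to right: {left_to_right:,}")  # left to right: 95,000
print(f"first answer matches either: {first_answer in (correct, left_to_right)}")  # first answer matches either: False
```

Because 8,900 matches neither value, the first response looks like an arithmetic slip rather than a consistent but different reading of the expression.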

### Interpretation

The screenshot illustrates a common issue with large language models: they sometimes give incorrect answers, even to simple arithmetic problems. In this case, asking the model to show its reasoning coincided with a correct answer. One plausible explanation is that writing out intermediate results (first 200 × 190 = 38,000, then 300 + 38,000 = 38,300) helps the model apply the order of operations, although a single screenshot cannot confirm the mechanism. The "[Correct steps]" text is most likely a placeholder added by the figure's author in place of the omitted working, not text produced by the model. It therefore should not be read as the model recognizing its initial error. This interaction highlights the importance of verifying LLM output, especially for tasks that require precision.
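One lightweight way to verify arithmetic output like this is to compute the expected value independently and compare it with the number the model reports. The Python sketch below does this for the two responses in the screenshot. `final_number` and `check_answer` are hypothetical helpers written for illustration. Treating the last number in a reply as its final answer is an assumption that holds for these two responses but not for every reply.

```python
import re
from typing import Optional

# Matches comma-grouped integers such as "38,300", or plain runs of digits.
NUMBER = re.compile(r"\d{1,3}(?:,\d{3})+|\d+")


def final_number(response: str) -> Optional[int]:
    """Return the last integer in the response (commas allowed), or None.

    Assumption: the final answer is the last number the model writes.
    """
    matches = NUMBER.findall(response)
    if not matches:
        return None
    return int(matches[-1].replace(",", ""))


def check_answer(response: str, expected: int) -> bool:
    """True if the response's final number equals the independently computed value."""
    return final_number(response) == expected


expected = 300 + 200 * 190  # computed independently of the model
for response in ("[ChatGPT]: 8,900.", "[ChatGPT]: 38,300. [Correct steps]."):
    print(response, "->", check_answer(response, expected))
# [ChatGPT]: 8,900. -> False
# [ChatGPT]: 38,300. [Correct steps]. -> True
```

In practice, a check like this only helps when the expected value can be computed reliably outside the model, as it can for plain arithmetic.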