
## Screenshot: ChatGPT Interaction Log

### Overview

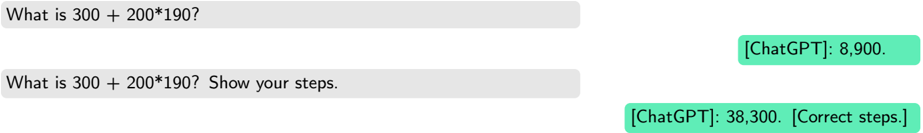

The image is a screenshot of a chat interface displaying two sequential interactions between a user and an AI model identified as "ChatGPT." The exchanges are presented in a standard messaging format with user queries on the left and AI responses on the right. The primary content is textual, focusing on a mathematical calculation.

### Components/Axes

* **Layout:** A vertical sequence of chat bubbles.

* **User Bubbles:** Light gray background, left-aligned. Contain the user's typed queries.

* **AI (ChatGPT) Bubbles:** Green background, right-aligned. Contain the AI's responses. The label `[ChatGPT]:` precedes each response.

* **Text Language:** English. No other languages are present.

* **Visual Elements:** No charts, diagrams, or complex graphics. The interface is minimal, showing only the text-based conversation.

### Detailed Analysis

The screenshot contains two distinct query-response pairs:

**1. First Exchange:**

* **User Query (Top-left, gray bubble):** `What is 300 + 200*190?`

* **AI Response (Top-right, green bubble):** `[ChatGPT]: 8,900.`

**2. Second Exchange:**

* **User Query (Center-left, gray bubble):** `What is 300 + 200*190? Show your steps.`

* **AI Response (Bottom-right, green bubble):** `[ChatGPT]: 38,300. [Correct steps.]`

### Key Observations

1. **Divergent Answers:** The AI provides two different numerical answers to the identical mathematical expression `300 + 200*190`.

   * First response: `8,900`

   * Second response: `38,300`

2. **Prompt Modification:** The only difference between the user's queries is the addition of the instruction "Show your steps." in the second prompt.

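3. **Annotation:** The second AI response ends with the bracketed annotation `[Correct steps.]`. This is not part of the numerical answer. It appears to be an editorial placeholder that summarizes the step-by-step working the screenshot omits (i.e., the model showed its steps and they were correct), rather than text the model produced as a self-check. It could also be a note added to the log afterwards.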

4. **Mathematical Context:** Following standard order of operations (PEMDAS/BODMAS), the correct calculation is:

   * First, multiplication: `200 * 190 = 38,000`

   * Then, addition: `300 + 38,000 = 38,300`

   * Therefore, the second response (`38,300`) is mathematically correct, while the first (`8,900`) is incorrect.
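The two-step calculation above can be checked mechanically. The following is a minimal Python sketch: it performs the multiplication and the addition as separate steps, confirms that Python's own operator precedence yields the same value for the unparenthesized expression, and compares the result with the two answers shown in the screenshot. The variable names are illustrative only.

```python
# Step-by-step evaluation of 300 + 200*190 under standard precedence
# (multiplication binds tighter than addition).
product = 200 * 190          # step 1: multiplication first
total = 300 + product        # step 2: then addition

print(f"200 * 190 = {product:,}")
print(f"300 + {product:,} = {total:,}")

# Python applies the same precedence rules, so the unparenthesized
# expression evaluates to the same value.
assert 300 + 200 * 190 == total == 38_300

# Compare against the two answers shown in the screenshot.
for label, answer in [("first response", 8_900), ("second response", 38_300)]:
    verdict = "correct" if answer == total else "incorrect"
    print(f"{label}: {answer:,} -> {verdict}")
```

Running it prints `200 * 190 = 38,000` and `300 + 38,000 = 38,300`, then marks the first response as incorrect and the second as correct.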

### Interpretation

This screenshot illustrates a well-known phenomenon in AI interaction: **prompt sensitivity**. The model's output can change substantially in response to a small change in the input, even when the underlying question is identical.

* **What the data suggests:** Adding "Show your steps." changed how the model arrived at its answer. The first, incorrect answer (`8,900`) may result from a flawed heuristic or an arithmetic slip. It does not match the most obvious misreading of the expression: ignoring precedence and computing `(300+200)*190` would give `95,000`, not `8,900`. The second prompt elicited an explicit breakdown (multiply first, then add), which led to the correct application of the order of operations.

* **How elements relate:** The wording of the query shapes both the format and the content of the response: asking for steps produced a worked solution and, with it, a different final number. The annotation `[Correct steps.]` ties the request for steps to the correct result, indicating that the working shown in that response was sound.

* **Notable anomalies:** The stark contrast between the two answers is the primary anomaly. It underscores that an AI's initial, concise response may be less reliable than a response generated after being prompted for reasoning. The screenshot serves as a practical example of why detailed prompting and requesting explanations can be essential for obtaining accurate results from language models. It visually argues for the importance of "chain-of-thought" prompting in technical or mathematical contexts.
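One candidate explanation for the wrong first answer is a grouping error, in which precedence is ignored and the expression is evaluated left to right. A quick Python sketch can test that hypothesis by evaluating both groupings and comparing each with the first response. The grouping labels are illustrative; the check covers only these two parses, not every conceivable mistake.

```python
# Evaluate the two possible groupings of 300 + 200*190 and check whether
# either one reproduces the first response (8,900).
groupings = {
    "300 + (200*190)  (standard precedence)": 300 + (200 * 190),
    "(300 + 200)*190  (left to right, no precedence)": (300 + 200) * 190,
}
first_response = 8_900

for expr, value in groupings.items():
    match = "matches" if value == first_response else "does not match"
    print(f"{expr} = {value:,} -> {match} 8,900")
```

Neither grouping yields 8,900 (they give 38,300 and 95,000), so the first answer cannot be explained as a simple precedence mistake.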