## Line Charts: Model Accuracy vs. Noise Intensity

### Overview

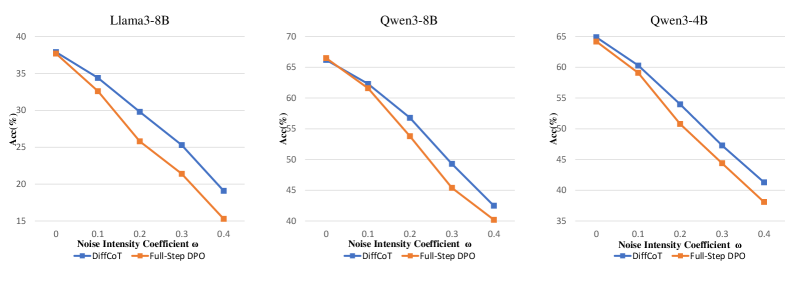

The image shows three line charts arranged side by side, comparing two methods, **DiffCoT** and **Full-Step DPO**, on three language models (Llama3-8B, Qwen3-8B, Qwen3-4B). Each chart plots accuracy ("Acc(%)") against the "Noise Intensity Coefficient ω". In every chart, accuracy falls for both methods as ω increases. The two methods start from the same accuracy at ω=0, and DiffCoT matches or exceeds Full-Step DPO at every nonzero noise level.

### Components/Axes

* **Titles (Top of each chart):** "Llama3-8B" (left), "Qwen3-8B" (center), "Qwen3-4B" (right).

* **X-Axis (All charts):** Label: "Noise Intensity Coefficient ω". Ticks/Markers: 0, 0.1, 0.2, 0.3, 0.4.

* **Y-Axis (All charts):** Label: "Acc(%)". Scale varies per chart.

* **Legend (Bottom of each chart):** Located centrally below the x-axis label. Contains two entries:

    * Blue line with square markers: "DiffCoT"

    * Orange line with diamond markers: "Full-Step DPO"

### Detailed Analysis

**Chart 1: Llama3-8B (Left)**

* **Y-Axis Scale:** 15 to 40, in increments of 5.

* **Data Series & Trends:**

    * **DiffCoT (Blue, Squares):** Starts at ~38% (ω=0). Slopes downward steadily. Points (approximate): (0.1, ~34%), (0.2, ~30%), (0.3, ~25%), (0.4, ~19%).

    * **Full-Step DPO (Orange, Diamonds):** Starts at the same point as DiffCoT, ~38% (ω=0). Slopes downward more steeply than DiffCoT. Points (approximate): (0.1, ~33%), (0.2, ~26%), (0.3, ~21%), (0.4, ~15%).

* **Relationship:** The gap between the two lines grows from ~1 point at ω=0.1 to ~4 points at ω=0.2 and stays at about that level through ω=0.4, so DiffCoT keeps a clear advantage.

**Chart 2: Qwen3-8B (Center)**

* **Y-Axis Scale:** 40 to 70, in increments of 5.

* **Data Series & Trends:**

    * **DiffCoT (Blue, Squares):** Starts at ~67% (ω=0). Slopes downward. Points (approximate): (0.1, ~62%), (0.2, ~57%), (0.3, ~49%), (0.4, ~42%).

    * **Full-Step DPO (Orange, Diamonds):** Starts at the same point, ~67% (ω=0). Slopes downward more steeply. Points (approximate): (0.1, ~62%), (0.2, ~54%), (0.3, ~45%), (0.4, ~40%).

* **Relationship:** As in the first chart, DiffCoT degrades more gracefully. The lines coincide at ω=0.1 (~62%) and then separate: the gap is ~3 points at ω=0.2 and ~4 points at ω=0.3, narrowing to ~2 points at ω=0.4.

**Chart 3: Qwen3-4B (Right)**

* **Y-Axis Scale:** 35 to 65, in increments of 5.

* **Data Series & Trends:**

    * **DiffCoT (Blue, Squares):** Starts at ~65% (ω=0). Slopes downward. Points (approximate): (0.1, ~60%), (0.2, ~54%), (0.3, ~47%), (0.4, ~41%).

    * **Full-Step DPO (Orange, Diamonds):** Starts at the same point, ~65% (ω=0). Slopes downward more steeply. Points (approximate): (0.1, ~59%), (0.2, ~51%), (0.3, ~44%), (0.4, ~38%).

* **Relationship:** The pattern holds. DiffCoT's line is above Full-Step DPO's line for all ω > 0, by ~1 point at ω=0.1 and ~3 points from ω=0.2 onward.
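The per-chart gaps described above can be tabulated directly from the approximate readings. The following is a minimal sketch: the values are the rough chart readings listed in this section (not exact data), and the names `OMEGAS`, `READINGS`, and `accuracy_gaps` are illustrative.

```python
# Approximate accuracies (%) read off the charts, as listed above.
# These are visual estimates, not the underlying experimental data.
OMEGAS = [0.0, 0.1, 0.2, 0.3, 0.4]
READINGS = {
    "Llama3-8B": {
        "DiffCoT": [38, 34, 30, 25, 19],
        "Full-Step DPO": [38, 33, 26, 21, 15],
    },
    "Qwen3-8B": {
        "DiffCoT": [67, 62, 57, 49, 42],
        "Full-Step DPO": [67, 62, 54, 45, 40],
    },
    "Qwen3-4B": {
        "DiffCoT": [65, 60, 54, 47, 41],
        "Full-Step DPO": [65, 59, 51, 44, 38],
    },
}


def accuracy_gaps(readings):
    """Return DiffCoT minus Full-Step DPO (percentage points) per noise level."""
    return {
        model: [d - f for d, f in zip(series["DiffCoT"], series["Full-Step DPO"])]
        for model, series in readings.items()
    }


GAPS = accuracy_gaps(READINGS)

for model, gaps in GAPS.items():
    row = ", ".join(f"ω={w}: {g:+d}" for w, g in zip(OMEGAS, gaps))
    print(f"{model}: {row}")
```

Because the inputs are estimates to the nearest point, differences of a single point should be treated as within reading error.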

### Key Observations

1. **Universal Negative Correlation:** For all three models and both methods, accuracy (Acc(%)) decreases monotonically and close to linearly as the Noise Intensity Coefficient (ω) increases.

2. **Consistent Performance Hierarchy:** DiffCoT (blue line) is more robust to noise than Full-Step DPO (orange line) on all tested models. For ω > 0 the blue line is never below the orange line: the two coincide only for Qwen3-8B at ω=0.1, and the blue line is above everywhere else.

3. **Convergent Starting Points:** At zero noise (ω=0), the performance of both methods is virtually identical for each respective model, suggesting they have similar baseline capabilities.

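4. **Divergent Degradation:** The gap between the two methods opens as noise is introduced, reaching ~3-4 points by ω=0.2. Beyond that it roughly holds rather than continuing to widen, and for Qwen3-8B it narrows to ~2 points at ω=0.4. DiffCoT's advantage therefore appears once noise is moderate and persists at higher noise levels.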

5. **Model-Specific Baselines:** The baseline accuracy (at ω=0) varies by model: Llama3-8B (~38%) is roughly 27 to 29 points lower than the Qwen models (Qwen3-4B ~65%, Qwen3-8B ~67%).
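The near-linear trend noted in the observations above can be checked with an ordinary least-squares fit over the approximate readings. This is a sketch under the assumption that the rough values listed in this section are representative; `fit_line`, `READINGS`, and `FITS` are illustrative names, and the fit is a sanity check rather than part of the original figure.

```python
import math

# Approximate accuracies (%) read off the charts; visual estimates only.
OMEGAS = [0.0, 0.1, 0.2, 0.3, 0.4]
READINGS = {
    "Llama3-8B": {
        "DiffCoT": [38, 34, 30, 25, 19],
        "Full-Step DPO": [38, 33, 26, 21, 15],
    },
    "Qwen3-8B": {
        "DiffCoT": [67, 62, 57, 49, 42],
        "Full-Step DPO": [67, 62, 54, 45, 40],
    },
    "Qwen3-4B": {
        "DiffCoT": [65, 60, 54, 47, 41],
        "Full-Step DPO": [65, 59, 51, 44, 38],
    },
}


def fit_line(xs, ys):
    """Return (slope, pearson_r) from an ordinary least-squares fit."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return sxy / sxx, sxy / math.sqrt(sxx * syy)


# Slope is in accuracy points per unit of ω; r close to -1 means near-linear decline.
FITS = {
    model: {method: fit_line(OMEGAS, ys) for method, ys in series.items()}
    for model, series in READINGS.items()
}

for model, methods in FITS.items():
    for method, (slope, r) in methods.items():
        print(f"{model} / {method}: slope={slope:.1f} pts per unit ω, r={r:.3f}")
```

On these readings, the fitted slope is steeper (more negative) for Full-Step DPO than for DiffCoT in each of the three models, which matches the visual impression of the charts.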

### Interpretation

This set of charts provides a clear comparative analysis of two techniques (DiffCoT and Full-Step DPO) for improving or maintaining the reasoning accuracy of Large Language Models (LLMs) when subjected to input noise.

* **What the data suggests:** The primary finding is that **DiffCoT confers greater noise robustness than Full-Step DPO**. While both methods suffer from performance degradation as input noise increases, DiffCoT mitigates this loss more effectively. This is a critical property for real-world applications where input data may be imperfect, ambiguous, or corrupted.

* **How elements relate:** The charts are designed for direct comparison. Placing the three models side-by-side with identical x-axes and legend allows the viewer to quickly assess if the observed trend (DiffCoT > Full-Step DPO under noise) is consistent across different model architectures and sizes. The consistency of the pattern across Llama3 and Qwen3 models strengthens the conclusion that the advantage is method-specific, not model-specific.

* **Notable implications:** The shared starting points at ω=0 are crucial. They indicate that the observed advantage of DiffCoT comes not from a higher inherent capability but from its **resilience to perturbation**. This makes DiffCoT a potentially more reliable method for deployment in uncontrolled environments. For tasks where input quality cannot be guaranteed, the charts suggest that DiffCoT would lose accuracy more slowly, although both methods still drop by roughly 19 to 27 points between ω=0 and ω=0.4.
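One way to make the resilience argument concrete is to express accuracy at the highest noise level as a fraction of the noise-free baseline. The sketch below assumes the approximate endpoint readings from the charts (ω=0 and ω=0.4); `ENDPOINTS` and `retention` are hypothetical names introduced for illustration, and this retention ratio is not a metric shown in the figure.

```python
# Approximate endpoint accuracies (%) read off the charts; visual estimates only.
# "baseline" is the shared accuracy at ω=0; the method entries are accuracy at ω=0.4.
ENDPOINTS = {
    "Llama3-8B": {"baseline": 38, "DiffCoT": 19, "Full-Step DPO": 15},
    "Qwen3-8B": {"baseline": 67, "DiffCoT": 42, "Full-Step DPO": 40},
    "Qwen3-4B": {"baseline": 65, "DiffCoT": 41, "Full-Step DPO": 38},
}


def retention(baseline, noisy):
    """Fraction of baseline accuracy that survives at the noisy setting."""
    return noisy / baseline


RETENTION = {
    model: {
        method: retention(vals["baseline"], vals[method])
        for method in ("DiffCoT", "Full-Step DPO")
    }
    for model, vals in ENDPOINTS.items()
}

for model, methods in RETENTION.items():
    summary = ", ".join(f"{method}: {frac:.0%}" for method, frac in methods.items())
    print(f"{model} (ω=0.4 vs ω=0): {summary}")
```

Since both methods share a baseline within each model, a higher retention ratio corresponds directly to a higher accuracy at ω=0.4.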