## Diagram: Multimodal AI Model Training Pipeline

### Overview

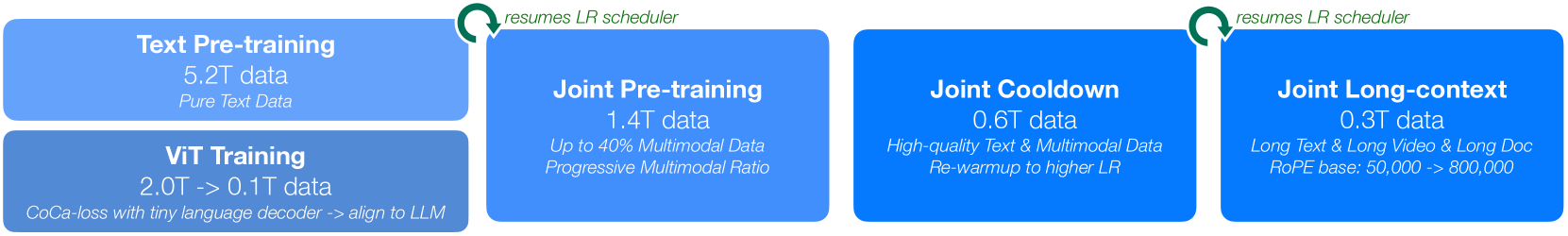

The image is a horizontal flowchart illustrating a multi-stage training pipeline for a multimodal AI model. The process flows from left to right, beginning with two parallel initial training phases that converge into a joint training sequence. The diagram uses color-coded blocks (shades of blue) and annotated green arrows to depict stages, data volumes, and key procedural notes.

### Components/Axes

The diagram consists of five rectangular blocks: two stacked vertically in a left-hand column, followed by three arranged horizontally to their right. Green curved arrows with text annotations connect specific stages.

**Block 1 (Top-Left):**

* **Title:** Text Pre-training

* **Data Volume:** 5.2T data

* **Description:** Pure Text Data

**Block 2 (Bottom-Left, stacked below Block 1):**

* **Title:** ViT Training

* **Data Volume:** 2.0T -> 0.1T data

* **Description:** CoCa-loss with tiny language decoder -> align to LLM
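CoCa (Contrastive Captioners) combines a contrastive image-text loss with an autoregressive captioning loss produced by a text decoder. Here that decoder is a "tiny" one. The NumPy sketch below illustrates such a combined objective. It makes several assumptions. The function names, the 0.07 temperature, and the equal loss weights are hypothetical placeholders. The image embeddings, text embeddings, and decoder logits are taken as given rather than computed by a real ViT or decoder. `token_logits[:, t]` is assumed to already be the prediction for `target_ids[:, t]`.

```python
import numpy as np

def log_softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    """Numerically stable log-softmax along one axis."""
    shifted = x - x.max(axis=axis, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=axis, keepdims=True))

def contrastive_loss(img_emb, txt_emb, temperature=0.07):
    """Symmetric InfoNCE over a batch of matched (image, text) pairs."""
    img = img_emb / np.linalg.norm(img_emb, axis=1, keepdims=True)
    txt = txt_emb / np.linalg.norm(txt_emb, axis=1, keepdims=True)
    logits = img @ txt.T / temperature        # logits[i, j] = sim(image i, text j)
    idx = np.arange(logits.shape[0])          # matched pairs sit on the diagonal
    img_to_txt = -log_softmax(logits, axis=1)[idx, idx].mean()
    txt_to_img = -log_softmax(logits, axis=0)[idx, idx].mean()
    return (img_to_txt + txt_to_img) / 2

def captioning_loss(token_logits, target_ids):
    """Mean token cross-entropy; token_logits is (B, T, V), target_ids is (B, T)."""
    logp = log_softmax(token_logits, axis=-1)
    b, t = target_ids.shape
    picked = logp[np.arange(b)[:, None], np.arange(t)[None, :], target_ids]
    return -picked.mean()

def coca_loss(img_emb, txt_emb, token_logits, target_ids,
              w_con=1.0, w_cap=1.0, temperature=0.07):
    """Weighted sum of the contrastive and captioning terms (weights are placeholders)."""
    return (w_con * contrastive_loss(img_emb, txt_emb, temperature)
            + w_cap * captioning_loss(token_logits, target_ids))

rng = np.random.default_rng(0)
loss = coca_loss(rng.normal(size=(4, 16)), rng.normal(size=(4, 16)),
                 rng.normal(size=(4, 8, 100)), rng.integers(0, 100, size=(4, 8)))
print(f"CoCa-style loss on random inputs: {loss:.3f}")
```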

**Block 3 (Center-Left):**

* **Title:** Joint Pre-training

* **Data Volume:** 1.4T data

* **Description:** Up to 40% Multimodal Data / Progressive Multimodal Ratio
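The block states only two things: the multimodal share is "progressive," and it is capped at 40%. The sketch below shows one simple schedule that fits that description. It assumes a linear ramp from a hypothetical starting ratio, over a hypothetical number of steps, followed by a plateau. The actual ramp shape and length are not given in the diagram, and `batch_mix` is an illustrative helper.

```python
def multimodal_ratio(step: int, ramp_steps: int,
                     max_ratio: float = 0.40, start_ratio: float = 0.0) -> float:
    """Share of each batch drawn from multimodal data at a given training step.

    Assumes a linear ramp from start_ratio to max_ratio over ramp_steps, then a
    plateau; the diagram specifies only a 'progressive' ratio capped at 40%.
    """
    if ramp_steps <= 0:
        return max_ratio
    progress = min(max(step / ramp_steps, 0.0), 1.0)   # clamp to [0, 1]
    return start_ratio + (max_ratio - start_ratio) * progress

def batch_mix(step: int, batch_size: int, ramp_steps: int) -> tuple[int, int]:
    """Split a batch into (text-only, multimodal) sample counts for this step."""
    n_multimodal = round(batch_size * multimodal_ratio(step, ramp_steps))
    return batch_size - n_multimodal, n_multimodal

# hypothetical batch size and ramp length
for step in (0, 2_500, 5_000, 10_000):
    print(step, batch_mix(step, batch_size=256, ramp_steps=5_000))
```

A linear ramp is just the simplest monotone choice. A stepwise or cosine ramp would satisfy the same "progressive, up to 40%" description.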

**Block 4 (Center-Right):**

* **Title:** Joint Cooldown

* **Data Volume:** 0.6T data

* **Description:** High-quality Text & Multimodal Data / Re-warmup to higher LR

**Block 5 (Far-Right):**

* **Title:** Joint Long-context

* **Data Volume:** 0.3T data

* **Description:** Long Text & Long Video & Long Doc / RoPE base: 50,000 -> 800,000
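RoPE assigns each pair of embedding dimensions i a rotation frequency θ_i = base^(-2i/d), where d is the per-head dimension. The sketch below compares the diagram's two bases. It uses the wavelength, in positions, of the slowest-rotating pair. The head dimension of 128 is a hypothetical choice, since the diagram does not state it. The longest wavelength is only a rough proxy for how far apart positions can be while still being told apart; it is not a definition of usable context length.

```python
import math

def rope_inv_freq(base: float, head_dim: int) -> list[float]:
    """Standard RoPE rotation frequencies theta_i = base^(-2i/d), i = 0 .. d/2 - 1."""
    return [base ** (-2 * i / head_dim) for i in range(head_dim // 2)]

def longest_wavelength(base: float, head_dim: int) -> float:
    """Positions needed for the slowest-rotating dimension pair to complete a full turn."""
    return 2 * math.pi / min(rope_inv_freq(base, head_dim))

HEAD_DIM = 128  # hypothetical; not stated in the diagram

for base in (50_000, 800_000):
    wl = longest_wavelength(base, HEAD_DIM)
    print(f"base={base:>7,}: longest wavelength ~ {wl:,.0f} positions")
```

Raising the base 16x (50,000 to 800,000) stretches the slowest wavelength by a factor of 16^((d-2)/d). For d = 128 that is about 15.3x.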

**Connecting Elements:**

* **Arrow 1:** A green, curved arrow originates from the top-right corner of the "Text Pre-training" block and points to the top-left corner of the "Joint Pre-training" block. The text above the arrow reads: `resumes LR scheduler`.

* **Arrow 2:** A green, curved arrow originates from the top-right corner of the "Joint Pre-training" block and points to the top-left corner of the "Joint Cooldown" block. The text above the arrow reads: `resumes LR scheduler`.

### Detailed Analysis

The pipeline describes a sequential training regimen with distinct phases characterized by data type, volume, and learning rate (LR) schedule.

1. **Initial Parallel Phase:**

* **Text Pre-training:** This is the largest single phase, using 5.2 trillion (`5.2T`) units of pure text data. The diagram labels every volume simply as "data"; this description reads the figures as token counts.

* **ViT Training:** The volume label `2.0T -> 0.1T` mirrors the description "CoCa-loss with tiny language decoder -> align to LLM." This suggests two sub-stages. First, roughly 2.0 trillion (`2.0T`) tokens are trained with a CoCa loss and a tiny language decoder. Second, 0.1 trillion (`0.1T`) tokens serve the explicit goal to "align to LLM."

2. **Joint Training Sequence:** The outputs of the initial phases feed into a joint training sequence.

* **Joint Pre-training:** Uses 1.4 trillion (`1.4T`) tokens. The multimodal data ratio is not fixed; it increases progressively to a maximum of 40%.

* **Joint Cooldown:** Uses a smaller, curated dataset of 0.6 trillion (`0.6T`) tokens described as "High-quality Text & Multimodal Data." A key procedural step is a "Re-warmup to higher LR." At the start of this stage, the learning rate is raised again instead of simply continuing to decay.

* **Joint Long-context:** The final phase uses the smallest dataset, 0.3 trillion (`0.3T`) tokens, and targets long inputs: "Long Text & Long Video & Long Doc." The diagram also specifies that the RoPE (Rotary Positional Embedding) base is raised from 50,000 to 800,000. A larger base lowers the rotation frequencies of the positional encoding, which is a common technique for extending a model's context window.
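Tallying the diagram's data volumes puts the stages in proportion. The sketch below rests on three assumptions:

* The "T" figures are directly comparable trillions of tokens.
* The language model trains on both the text pre-training data and all joint-stage data.
* The ViT stage is excluded because its data trains the vision encoder.

The 40% figure yields only an upper bound on multimodal data in joint pre-training, because the ratio ramps up to that value. The constant names are illustrative.

```python
TEXT_PRETRAINING_T = 5.2                 # "5.2T data", pure text
JOINT_STAGES_T = {                       # joint stages in pipeline order
    "Joint Pre-training": 1.4,
    "Joint Cooldown": 0.6,
    "Joint Long-context": 0.3,
}
MAX_MULTIMODAL_RATIO = 0.40              # "Up to 40% Multimodal Data" (joint pre-training only)

def summarize():
    joint_total = sum(JOINT_STAGES_T.values())
    shares = {name: vol / joint_total for name, vol in JOINT_STAGES_T.items()}
    # assumption: the LLM backbone trains on the text stage plus every joint stage
    llm_total = TEXT_PRETRAINING_T + joint_total
    # the ratio ramps up to 40%, so this is a ceiling, not the actual multimodal volume
    mm_ceiling = MAX_MULTIMODAL_RATIO * JOINT_STAGES_T["Joint Pre-training"]
    return joint_total, shares, llm_total, mm_ceiling

joint_total, shares, llm_total, mm_ceiling = summarize()
print(f"joint stages: {joint_total:.1f}T; LLM-side total: {llm_total:.1f}T")
for name, share in shares.items():
    print(f"  {name}: {share:.1%} of joint data")
print(f"multimodal data in joint pre-training: at most {mm_ceiling:.2f}T")
```

With these figures, the joint stages total 2.3T, split roughly 61% / 26% / 13%. The language-model side sees about 7.5T overall, and joint pre-training contains at most 0.56T of multimodal data.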

### Key Observations

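* **Data Volume Trend:** Data volume falls sharply across the joint training phases (1.4T -> 0.6T -> 0.3T). This suggests a shift from broad pre-training toward smaller, more targeted stages. None of these stages is labeled as fine-tuning; they focus first on data quality and then on context length.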

* **Learning Rate (LR) Management:** The LR scheduler is explicitly "resumed," rather than restarted, at two transitions. The first is from text pre-training to joint pre-training, and the second is from joint pre-training to joint cooldown. The cooldown phase nonetheless begins with a "re-warmup to higher LR," so the LR is deliberately raised again at that point.

* **Specialization of Phases:** Each joint phase has a clear, distinct purpose: general multimodal integration (Pre-training), quality refinement (Cooldown), and context window extension (Long-context).

* **Architectural Alignment:** The ViT (Vision Transformer) training phase has the explicit goal of aligning its output to the LLM (Large Language Model), which is a critical step for effective multimodal fusion.
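The diagram names two LR behaviors without giving values. The scheduler is resumed (not restarted) across stage boundaries, and the cooldown begins with a re-warmup to a higher LR. The sketch below is one schedule consistent with both. It assumes a warmup-plus-cosine main schedule, a linear re-warmup, and a linear final decay. Every constant is a hypothetical placeholder, and the cosine and linear shapes are swapped-in choices rather than details from the diagram.

```python
import math

# All numbers below are hypothetical placeholders; the diagram gives no LR values.
PEAK_LR, MIN_LR, WARMUP = 3e-4, 3e-5, 2_000
TEXT_STEPS, JOINT_STEPS = 80_000, 20_000      # text pre-training, joint pre-training
REWARM_STEPS, DECAY_STEPS, REWARM_PEAK = 1_000, 9_000, 1e-4

def main_schedule(step, total=TEXT_STEPS + JOINT_STEPS):
    """Warmup + cosine decay, indexed by a global step shared by both pre-training stages."""
    if step < WARMUP:
        return PEAK_LR * (step + 1) / WARMUP
    progress = min((step - WARMUP) / (total - WARMUP), 1.0)
    return MIN_LR + 0.5 * (PEAK_LR - MIN_LR) * (1 + math.cos(math.pi * progress))

def cooldown_schedule(local_step, start_lr):
    """Linear re-warmup from the inherited LR to REWARM_PEAK, then linear decay to 0."""
    if local_step < REWARM_STEPS:
        return start_lr + (REWARM_PEAK - start_lr) * local_step / REWARM_STEPS
    t = min((local_step - REWARM_STEPS) / DECAY_STEPS, 1.0)
    return REWARM_PEAK * (1.0 - t)

def pipeline_lr(global_step):
    boundary = TEXT_STEPS + JOINT_STEPS
    if global_step < boundary:
        # "resumes LR scheduler": joint pre-training continues the same curve at
        # global_step == TEXT_STEPS instead of restarting warmup from zero
        return main_schedule(global_step)
    # the cooldown starts from wherever the main schedule left off, then re-warms
    inherited = main_schedule(boundary)
    return cooldown_schedule(global_step - boundary, inherited)

boundary = TEXT_STEPS + JOINT_STEPS
for s in (0, WARMUP, TEXT_STEPS, boundary, boundary + REWARM_STEPS,
          boundary + REWARM_STEPS + DECAY_STEPS):
    print(f"step {s:>7,}: lr = {pipeline_lr(s):.2e}")
```

Indexing the main schedule by a global step makes the first resume trivial. The joint stage simply keeps counting, so there is no second warmup and no jump in LR at the text-to-joint boundary.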

### Interpretation

This diagram outlines a staged approach to training a multimodal model. The process begins by separately establishing unimodal foundations in text (LLM) and vision (ViT). The "alignment" step in ViT training is intended to make the vision encoder's output compatible with the language model's representation space.

The subsequent joint phases form a deliberate curriculum:

* **Joint Pre-training:** The model first trains on mixed text and multimodal data whose multimodal share grows progressively.
* **Joint Cooldown:** It then continues on a smaller, higher-quality dataset, with the learning rate re-warmed to a higher value at the start of the stage.
* **Joint Long-context:** Finally, it trains on long text, long video, and long documents, which is needed for understanding documents, videos, and complex narratives.

The diagram names two concrete techniques. The progressive multimodal ratio introduces multimodal data gradually, and the enlarged RoPE base supports a longer context window. The decreasing data volumes across the joint stages suggest an emphasis on data quality and targeted capabilities over raw scale as training progresses.