## Diagram: Training Pipeline Stages

### Overview

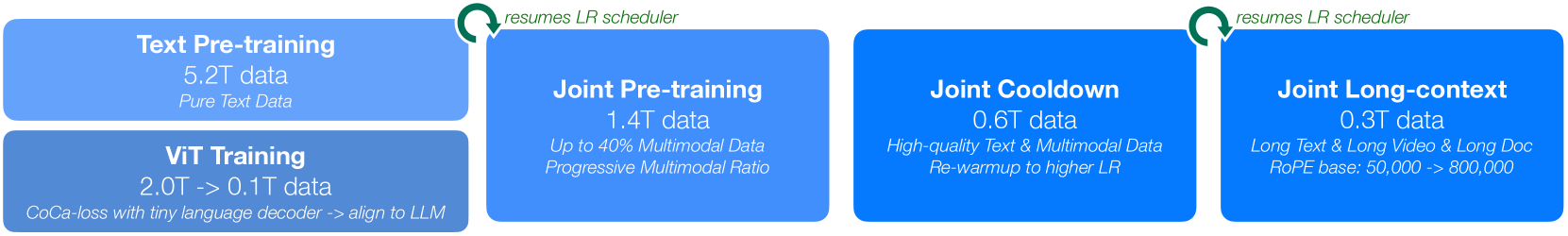

The image depicts a sequential training pipeline consisting of four main stages: Text Pre-training, Joint Pre-training, Joint Cooldown, and Joint Long-context. Below these stages is a separate stage for Vision Transformer (ViT) Training. Each stage is represented by a colored rectangle containing information about the data used, the training process, and relevant parameters. Arrows indicate the flow of the training process.

### Components

The diagram consists of five rectangular blocks, each representing a training stage. The four main stages are arranged horizontally, with the ViT Training block below them. The blocks are colored as follows:

- Text Pre-training: Blue

- Joint Pre-training: Green

- Joint Cooldown: Yellow

- Joint Long-context: Orange

- ViT Training: Light Blue

Each block contains text labels describing the stage, data size, and specific training details. There are also two circular icons with checkmarks and text "resumes LR scheduler" positioned above the Joint Pre-training and Joint Long-context stages.

### Detailed Analysis

**1. Text Pre-training (Blue)**

- Data: 5.2T data

- Data Type: Pure Text Data

**2. Joint Pre-training (Green)**

- Data: 1.4T data

- Data Composition: Up to 40% Multimodal Data

- Training Approach: Progressive Multimodal Ratio

- Icon: "resumes LR scheduler" (top-left of the block)
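The diagram names a "Progressive Multimodal Ratio" capped at 40% but does not say how the ratio grows. As a minimal sketch, assuming a linear ramp from 0 to the cap over the stage (the function name `multimodal_ratio`, the step counts, and the linear shape are all hypothetical), the schedule could look like this:

```python
def multimodal_ratio(step: int, total_steps: int, max_ratio: float = 0.40) -> float:
    """Fraction of multimodal data to sample at a given step.

    Assumption: the ratio ramps linearly from 0 to max_ratio. The diagram
    only states the 40% cap, not the shape of the ramp.
    """
    if total_steps <= 0:
        raise ValueError("total_steps must be positive")
    # Clamp progress to [0, 1] so out-of-range steps stay within the cap.
    progress = min(max(step / total_steps, 0.0), 1.0)
    return max_ratio * progress


if __name__ == "__main__":
    # Hypothetical stage length of 1,000 steps, for illustration only.
    for step in (0, 250, 500, 1000):
        print(step, multimodal_ratio(step, total_steps=1000))
```

Any monotone ramp (stepwise, cosine, and so on) would fit the label equally well; the linear form is used here only because it is the simplest to read.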

**3. Joint Cooldown (Yellow)**

- Data: 0.6T data

- Data Quality: High-quality Text & Multimodal Data

- Training Approach: Re-warmup to higher LR
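"Re-warmup to higher LR" can be illustrated with a small schedule function. This is only a sketch under stated assumptions: the diagram gives no learning-rate values or decay shape, so the linear warmup, the cosine decay, the function name `rewarmup_then_decay`, and every number in the usage example are hypothetical.

```python
import math


def rewarmup_then_decay(
    step: int,
    total_steps: int,
    warmup_steps: int,
    start_lr: float,
    peak_lr: float,
    final_lr: float,
) -> float:
    """Learning rate for a cooldown stage that first re-warms to a higher LR.

    Assumptions (not from the diagram): linear warmup from start_lr to
    peak_lr, then cosine decay from peak_lr down to final_lr.
    """
    if step < warmup_steps:
        # Linear re-warmup phase.
        return start_lr + (peak_lr - start_lr) * step / warmup_steps
    # Cosine decay phase, with progress clamped to [0, 1].
    decay_steps = max(total_steps - warmup_steps, 1)
    progress = min((step - warmup_steps) / decay_steps, 1.0)
    cosine = 0.5 * (1.0 + math.cos(math.pi * progress))
    return final_lr + (peak_lr - final_lr) * cosine


if __name__ == "__main__":
    # Hypothetical values chosen only to show the shape of the curve.
    for step in (0, 50, 100, 550, 1000):
        print(step, rewarmup_then_decay(step, 1000, 100, 1e-5, 1e-4, 1e-6))
```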

**4. Joint Long-context (Orange)**

- Data: 0.3T data

- Data Type: Long Text & Long Video & Long Doc

- Parameter: RoPE base: 50,000 -> 800,000

- Icon: "resumes LR scheduler" (top-left of the block)
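To see what the RoPE base change does, the sketch below computes the standard rotary frequencies, where pair `i` of a head with dimension `d` rotates at `base ** (-2 * i / d)`, and compares the longest wavelength under each base. The head dimension of 128 is a hypothetical value chosen for illustration; the diagram does not state the model's dimensions.

```python
import math


def rope_inv_freq(base: float, head_dim: int) -> list[float]:
    """Per-pair rotation frequencies for rotary position embeddings."""
    if head_dim <= 0 or head_dim % 2 != 0:
        raise ValueError("head_dim must be a positive even number")
    return [base ** (-2.0 * i / head_dim) for i in range(head_dim // 2)]


def longest_wavelength(base: float, head_dim: int) -> float:
    """Positions needed for the slowest-rotating pair to complete one turn."""
    slowest = rope_inv_freq(base, head_dim)[-1]
    return 2.0 * math.pi / slowest


if __name__ == "__main__":
    head_dim = 128  # hypothetical; not given in the diagram
    for base in (50_000, 800_000):
        print(base, longest_wavelength(base, head_dim))
```

Raising the base by a factor of 16 stretches the longest wavelength by `16 ** ((d - 2) / d)`, slightly less than 16 times, so the slowest dimensions can distinguish positions over a much longer span.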

**5. ViT Training (Light Blue)**

- Data: 0.0T -> 0.1T data

- Training Method: CoCa-loss with tiny language decoder -> align to LLM
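The per-stage figures listed above can be collected into a small data structure to make the budget arithmetic explicit. This is an illustrative sketch: the `Stage` class and helper names are hypothetical, the "T" unit is kept exactly as labeled in the diagram, and the ViT stage is left out because its "0.0T -> 0.1T" label is a range rather than a single budget.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    data_t: float  # data budget in "T", as labeled in the diagram
    note: str


# The four main stages, in the order shown in the diagram.
MAIN_STAGES = [
    Stage("Text Pre-training", 5.2, "pure text data"),
    Stage("Joint Pre-training", 1.4, "up to 40% multimodal data"),
    Stage("Joint Cooldown", 0.6, "high-quality text and multimodal data"),
    Stage("Joint Long-context", 0.3, "long text, video, and doc data"),
]


def total_budget(stages: list[Stage]) -> float:
    """Sum of the per-stage data budgets."""
    return sum(stage.data_t for stage in stages)


def budget_shares(stages: list[Stage]) -> dict[str, float]:
    """Each stage's fraction of the combined budget."""
    total = total_budget(stages)
    return {stage.name: stage.data_t / total for stage in stages}


if __name__ == "__main__":
    print(f"total: {total_budget(MAIN_STAGES):.1f}T")
    for name, share in budget_shares(MAIN_STAGES).items():
        print(f"{name}: {share:.1%}")
```

Summing the four main stages gives 7.5T, of which Text Pre-training accounts for a little over two thirds.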

### Key Observations

- The data size decreases at every stage from Text Pre-training to Joint Long-context: 5.2T, 1.4T, 0.6T, then 0.3T.

- The training process transitions from pure text data to increasingly multimodal data.

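- The "resumes LR scheduler" icon on the Joint Pre-training and Joint Long-context stages suggests that each of these stages continues an existing learning-rate schedule rather than starting a new one. The Joint Cooldown stage, by contrast, is labeled as re-warming up to a higher LR.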

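- ViT training is drawn as a separate block below the main stages. Its label (CoCa-loss with a tiny language decoder, then "align to LLM") suggests that the vision encoder is first trained on its own and then aligned with the language model. The diagram does not make clear when this happens relative to the main stages.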

- The RoPE base is raised from 50,000 to 800,000 in the Joint Long-context stage, a change typically made so that the model can handle longer sequences.

### Interpretation

This diagram illustrates a multi-stage training pipeline for a large language model (LLM) that incorporates vision capabilities. The pipeline begins with pre-training on a large corpus of pure text (5.2T), then introduces multimodal data at a progressively increasing ratio, up to 40%, during the Joint Pre-training stage. The Joint Cooldown stage then trains on a smaller set of high-quality text and multimodal data after re-warming up to a higher learning rate. Finally, the Joint Long-context stage extends the model's ability to process long sequences by training on long text, video, and document data while raising the RoPE (Rotary Position Embedding) base from 50,000 to 800,000.

The separate ViT Training block suggests that the Vision Transformer is first trained with a CoCa loss and a tiny language decoder, and is then aligned to the LLM. The decreasing data size across stages could indicate a shift toward higher-quality or more specialized data in later stages. The "resumes LR scheduler" icon suggests that the marked stages continue an existing learning-rate schedule instead of restarting it. Overall, the pipeline appears designed to produce a multimodal LLM that can handle both text and visual input.