## Flowchart: Multimodal Model Training Pipeline

### Overview

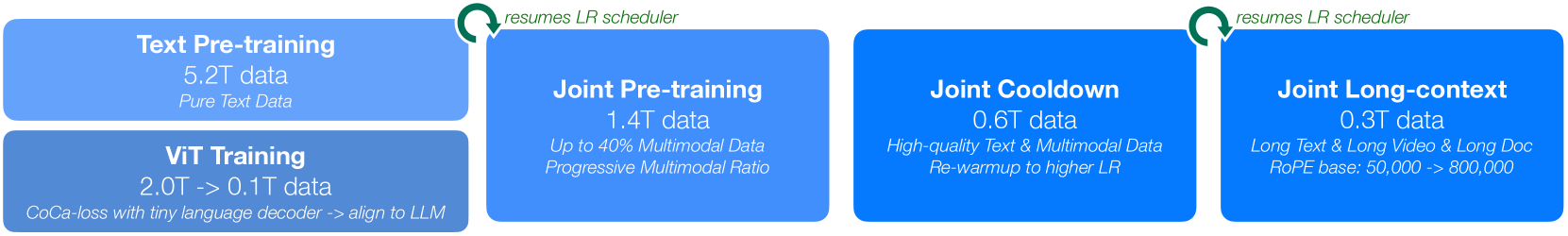

The image depicts a five-stage training pipeline for a multimodal AI model, drawn as a horizontal flowchart of blue rectangles connected by green arrows. Each stage is a distinct training phase with its own data budget and objective. The flow runs from left to right. The green arrows between stages are labeled "resumes LR scheduler", which most plausibly means that the learning-rate schedule continues from its previous state at each transition instead of restarting.

### Components/Axes

1. **Stages (Left to Right):**

- Text Pre-training

- ViT Training

- Joint Pre-training

- Joint Cooldown

- Joint Long-context

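2. **Data Sizes:** Each size carries the suffix "T". In pre-training pipelines this conventionally denotes trillions of tokens, not terabytes. The ViT Training stage lists two figures (2.0T -> 0.1T), which may indicate either a reduction within the stage or two consecutive sub-phases.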

3. **Key Attributes:**

- Data type (text, multimodal, long-context)

- Technical specifications (e.g., CoCa-loss, RoPE base)

- Optimization strategies (e.g., LR scheduler resumption)

### Detailed Analysis

1. **Text Pre-training**

- **Data:** 5.2T pure text

- **Purpose:** Foundational language understanding

- **Color:** Light blue

2. **ViT Training**

- **Data:** 2.0T -> 0.1T (text-to-image alignment)

- **Technique:** CoCa-loss with tiny language decoder

- **Objective:** Align vision-language representations

- **Color:** Dark blue
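The figure names CoCa-loss but does not give its form. CoCa (Contrastive Captioners) trains an image encoder with two objectives. A contrastive loss pulls matching image and text embeddings together, and a captioning loss has a text decoder predict the caption token by token. The pure-Python sketch below assumes the "tiny language decoder" has already produced per-token logits. The temperature, the equal loss weights, and all function names are illustrative assumptions, not values from this pipeline.

```python
import math

def log_softmax(xs):
    # Numerically stable log-softmax over a list of logits.
    m = max(xs)
    lse = m + math.log(sum(math.exp(x - m) for x in xs))
    return [x - lse for x in xs]

def l2_normalize(v):
    # Assumes a non-zero embedding vector.
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v]

def contrastive_loss(img_embs, txt_embs, temperature=0.07):
    # Symmetric InfoNCE: the i-th image and the i-th text form the positive pair.
    imgs = [l2_normalize(v) for v in img_embs]
    txts = [l2_normalize(v) for v in txt_embs]
    n = len(imgs)
    sim = [[sum(a * b for a, b in zip(i, t)) / temperature for t in txts] for i in imgs]
    img_to_txt = -sum(log_softmax(sim[i])[i] for i in range(n)) / n
    txt_to_img = -sum(log_softmax([sim[i][j] for i in range(n)])[j] for j in range(n)) / n
    return (img_to_txt + txt_to_img) / 2

def captioning_loss(decoder_logits, target_ids):
    # Mean next-token cross-entropy of the text decoder.
    # decoder_logits[b][t] is a vocabulary-sized list; target_ids[b][t] is the true token id.
    total, count = 0.0, 0
    for seq_logits, seq_targets in zip(decoder_logits, target_ids):
        for logits, target in zip(seq_logits, seq_targets):
            total -= log_softmax(logits)[target]
            count += 1
    return total / count

def coca_loss(img_embs, txt_embs, decoder_logits, target_ids, w_con=1.0, w_cap=1.0):
    # Weighted sum of both objectives; the weights here are placeholders.
    return (w_con * contrastive_loss(img_embs, txt_embs)
            + w_cap * captioning_loss(decoder_logits, target_ids))
```

The contrastive term shapes a shared image-text embedding space. The captioning term forces the image features to carry enough detail for a decoder to reconstruct the text.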

3. **Joint Pre-training**

- **Data:** 1.4T (40% multimodal)

- **Feature:** Progressive multimodal ratio

- **Color:** Medium blue
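The figure does not say how the multimodal ratio progresses. One simple reading is a linear ramp toward the stated 40% share. The sketch below assumes that shape, a starting share of 0%, and a per-batch choice between a text source and a multimodal source. `multimodal_ratio` and `pick_source` are hypothetical names.

```python
import random

def multimodal_ratio(step, total_steps, start=0.0, target=0.4):
    # Hypothetical linear ramp of the multimodal share from `start` to `target`,
    # clamped to `target` once the stage's step budget is reached.
    if total_steps <= 0:
        return target
    frac = min(max(step / total_steps, 0.0), 1.0)
    return start + (target - start) * frac

def pick_source(step, total_steps, rng, **ratio_kwargs):
    # Choose the data source for one batch according to the current ratio.
    ratio = multimodal_ratio(step, total_steps, **ratio_kwargs)
    return "multimodal" if rng.random() < ratio else "text"
```

Under this ramp the stage-average multimodal share is 20%, not 40%. If the figure's 40% describes the whole stage, the real schedule must start higher or reach its target earlier.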

4. **Joint Cooldown**

- **Data:** 0.6T (high-quality text/multimodal)

- **Strategy:** Re-warmup to higher learning rate (LR)

- **Color:** Dark blue
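The figure states only that the cooldown stage re-warms to a higher learning rate. The minimal sketch below assumes a linear re-warmup from the learning rate the previous stage ended on, followed by a cosine decay to a floor. The decay shape, step counts, and LR values are all assumptions.

```python
import math

def rewarmup_then_decay(step, total_steps, lr_start, lr_peak, lr_min, warmup_steps):
    # Phase 1 (hypothetical): linear re-warmup from lr_start, the LR where the
    # previous stage ended, up to the higher peak lr_peak.
    if step < warmup_steps:
        return lr_start + (lr_peak - lr_start) * step / warmup_steps
    # Phase 2 (hypothetical): cosine decay from lr_peak to lr_min over the rest of the stage.
    progress = min((step - warmup_steps) / max(total_steps - warmup_steps, 1), 1.0)
    return lr_min + 0.5 * (lr_peak - lr_min) * (1 + math.cos(math.pi * progress))

# Example: re-warm from 1e-5 to 1e-4 over 100 steps, then decay to 1e-6 by step 1000.
for s in (0, 50, 100, 550, 1000):
    print(s, rewarmup_then_decay(s, 1000, 1e-5, 1e-4, 1e-6, 100))
```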

5. **Joint Long-context**

- **Data:** 0.3T (long text/video/docs)

- **Technical:** RoPE base increased from 50k to 800k

- **Color:** Light blue
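In the standard rotary position embedding (RoPE), each pair of query/key features is rotated by an angle proportional to the token position. The frequencies are theta_i = base^(-2i/d) for head dimension d. A larger base slows the low-frequency pairs, so they take many more positions to wrap around and distant tokens stay distinguishable. The sketch below illustrates this effect. It assumes the standard formulation and a hypothetical head dimension of 128, and the function names are illustrative.

```python
import math

def rope_inv_freq(base, head_dim):
    # Angular frequency of each 2-D rotation pair: theta_i = base ** (-2i / d).
    return [base ** (-2 * i / head_dim) for i in range(head_dim // 2)]

def max_wavelength(base, head_dim):
    # Number of positions the slowest-rotating pair needs to complete one full turn.
    return 2 * math.pi / min(rope_inv_freq(base, head_dim))

def rotate_pair(x0, x1, pos, theta):
    # Rotate one (x0, x1) feature pair by the angle pos * theta, as RoPE does.
    a = pos * theta
    return (x0 * math.cos(a) - x1 * math.sin(a),
            x0 * math.sin(a) + x1 * math.cos(a))

HEAD_DIM = 128  # hypothetical head dimension
for base in (50_000, 800_000):
    print(base, round(max_wavelength(base, HEAD_DIM)))
```

With d = 128, raising the base from 50k to 800k stretches the longest wavelength by a factor of 16^(126/128), roughly 15x. The highest-frequency pair (theta_0 = 1) is unchanged.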

### Key Observations

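- **Data Progression:** Per-stage data volume falls from 5.2T in Text Pre-training to 0.3T in Joint Long-context, though not strictly monotonically, since Joint Pre-training's 1.4T follows ViT Training. Meanwhile the data grows more complex (text → multimodal → long-context).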

- **Optimization Continuity:** Resuming the LR scheduler between stages, rather than restarting it, keeps the learning-rate trajectory continuous across the pipeline.

- **Multimodal Focus:** Joint Pre-training allots 40% of its 1.4T to multimodal data (about 0.56T). This is the only explicit multimodal share in the figure.

- **Context Scaling:** Raising the RoPE base from 50k to 800k lengthens the rotation wavelengths of the positional encoding, a common way to extend the context length a model can handle.
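In the simplest reading, "resumes LR scheduler" means each stage reloads the scheduler's state (here just its step counter) instead of starting a fresh schedule. The sketch below contrasts the two behaviours using a hypothetical warmup-plus-cosine scheduler. Its `state_dict`/`load_state_dict` methods mirror the naming of PyTorch's LR schedulers, but there is no PyTorch dependency. The class, `run_stages`, the step counts, and the LR values are made up for illustration.

```python
import math

class WarmupCosine:
    """Hypothetical global schedule: linear warmup, then cosine decay to min_lr."""

    def __init__(self, peak_lr, min_lr, warmup_steps, total_steps):
        self.peak_lr, self.min_lr = peak_lr, min_lr
        self.warmup_steps, self.total_steps = warmup_steps, total_steps
        self.step_count = 0

    def lr(self):
        s = self.step_count
        if s < self.warmup_steps:
            return self.peak_lr * (s + 1) / self.warmup_steps
        span = max(self.total_steps - self.warmup_steps, 1)
        progress = min((s - self.warmup_steps) / span, 1.0)
        return self.min_lr + 0.5 * (self.peak_lr - self.min_lr) * (1 + math.cos(math.pi * progress))

    def step(self):
        self.step_count += 1

    def state_dict(self):
        return {"step_count": self.step_count}

    def load_state_dict(self, state):
        self.step_count = state["step_count"]


def run_stages(stage_steps, resume, **sched_kwargs):
    """Simulate consecutive stages and return the LR at the first step of each."""
    saved, first_lrs = None, []
    for n_steps in stage_steps:
        sched = WarmupCosine(**sched_kwargs)  # each stage builds a fresh scheduler
        if resume and saved is not None:
            sched.load_state_dict(saved)      # continue where the previous stage stopped
        first_lrs.append(sched.lr())
        for _ in range(n_steps):
            # optimizer.step() would run here in a real training loop
            sched.step()
        saved = sched.state_dict()
    return first_lrs
```

With `resume=False` every stage restarts at the first warmup step (LR = 1e-4 with `peak_lr=1e-3, warmup_steps=10`). With `resume=True`, later stages start partway down the cosine curve.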

### Interpretation

This pipeline demonstrates a staged approach to multimodal model development:

1. **Foundation Building:** Start with massive text pre-training (5.2T) to establish language understanding.

2. **Vision Integration:** Train the ViT (2.0T→0.1T) with CoCa-loss to align image and text representations.

3. **Hybrid Optimization:** Joint Pre-training (1.4T) introduces multimodal data with progressive ratio adjustments.

4. **Refinement Phase:** Joint Cooldown (0.6T) trains on high-quality text and multimodal data after re-warming the LR to a higher value.

5. **Specialization:** Final stage (0.3T) targets long-context understanding via RoPE base expansion.

Per-stage data volume shrinks as the data becomes more specialized, which suggests a shift from broad coverage to targeted, high-quality training. Resuming the LR scheduler between stages keeps optimization continuous instead of repeating warmup at every stage. The 40% multimodal ratio in Joint Pre-training balances text and multimodal data before the final specialization in long-context scenarios.