
## Line Chart: Surprisal across Layers at Different Training Steps

### Overview

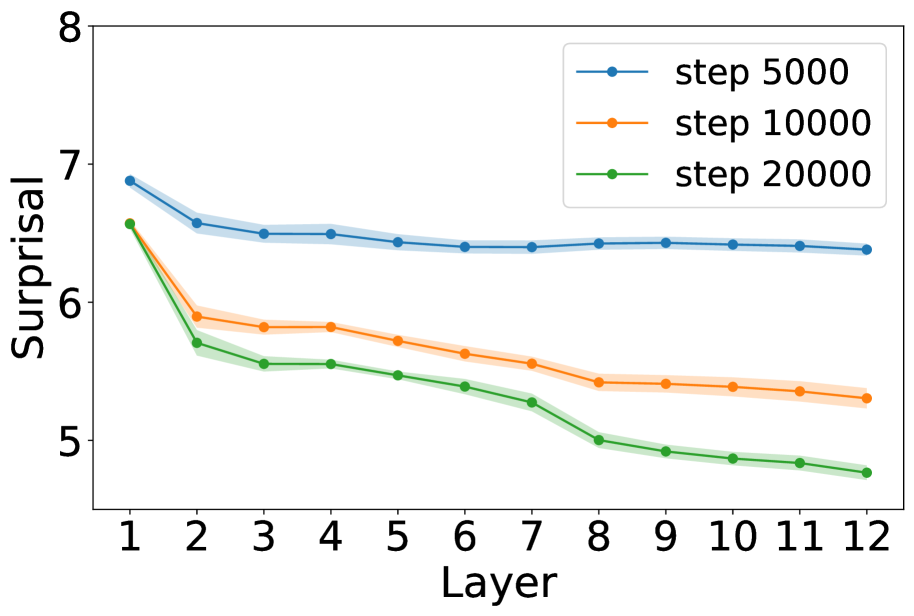

The image is a line chart plotting a metric called "Surprisal" on the vertical Y-axis against "Layer" number on the horizontal X-axis. It displays three data series, each representing a different training step count (5000, 10000, and 20000). The chart shows how the surprisal value changes across 12 layers for a model at these three distinct points in its training process.

### Components/Axes

* **Chart Type:** Line chart with shaded confidence intervals or standard deviation bands.
* **X-Axis:**
  * **Label:** "Layer"
  * **Scale:** Linear, integer values from 1 to 12.
  * **Markers:** Ticks and numerical labels at each integer from 1 to 12.
* **Y-Axis:**
  * **Label:** "Surprisal"
  * **Scale:** Linear, ranging from approximately 4.8 to 8.0.
  * **Markers:** Major ticks and numerical labels at 5, 6, 7, and 8.
* **Legend:**
  * **Position:** Top-right corner of the plot area.
  * **Content:** Three entries, each with a colored line segment and marker:
    * Blue line with circle marker: "step 5000"
    * Orange line with circle marker: "step 10000"
    * Green line with circle marker: "step 20000"
* **Data Series:** Three lines, each with a shaded band of the same but lighter color, likely representing variance or confidence.

### Detailed Analysis

**Trend Verification:** All three lines exhibit a downward trend as the layer number increases. The steepest descent occurs between Layer 1 and Layer 2 for all series.

**Data Point Extraction (Approximate Values):**

* **Blue Line (step 5000):**
  * **Trend:** Starts highest (~6.9), drops most steeply between Layers 1 and 2, then flattens into a very gradual decline and holds at ~6.4 from Layer 6 onward.
  * **Points:** Layer 1: ~6.9, Layer 2: ~6.6, Layer 3: ~6.5, Layer 4: ~6.5, Layer 5: ~6.45, Layer 6: ~6.4, Layer 7: ~6.4, Layer 8: ~6.4, Layer 9: ~6.4, Layer 10: ~6.4, Layer 11: ~6.4, Layer 12: ~6.4.
* **Orange Line (step 10000):**
  * **Trend:** Starts at ~6.6, level with the green line, drops sharply to Layer 2, then declines steadily and moderately.
  * **Points:** Layer 1: ~6.6, Layer 2: ~5.9, Layer 3: ~5.8, Layer 4: ~5.8, Layer 5: ~5.7, Layer 6: ~5.6, Layer 7: ~5.55, Layer 8: ~5.4, Layer 9: ~5.4, Layer 10: ~5.35, Layer 11: ~5.3, Layer 12: ~5.3.
* **Green Line (step 20000):**
  * **Trend:** Starts level with the orange line at Layer 1, drops sharply, and shows the largest overall decline of the three series; its descent steepens again between Layers 6 and 9 before leveling off around 4.8.
  * **Points:** Layer 1: ~6.6, Layer 2: ~5.7, Layer 3: ~5.55, Layer 4: ~5.55, Layer 5: ~5.45, Layer 6: ~5.4, Layer 7: ~5.25, Layer 8: ~5.0, Layer 9: ~4.9, Layer 10: ~4.85, Layer 11: ~4.8, Layer 12: ~4.8.
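
To check the trend claims against the extracted values, the approximate readings can be encoded and their layer-to-layer drops computed. This is a minimal Python sketch: the numbers are the eyeballed approximations listed above, not the underlying data, and the names `SURPRISAL`, `layer_drops`, and `steepest_drop_layer` are hypothetical.

```python
# Approximate values read off the chart (eyeballed, not the underlying data).
SURPRISAL = {
    5000:  [6.9, 6.6, 6.5, 6.5, 6.45, 6.4, 6.4, 6.4, 6.4, 6.4, 6.4, 6.4],
    10000: [6.6, 5.9, 5.8, 5.8, 5.7, 5.6, 5.55, 5.4, 5.4, 5.35, 5.3, 5.3],
    20000: [6.6, 5.7, 5.55, 5.55, 5.45, 5.4, 5.25, 5.0, 4.9, 4.85, 4.8, 4.8],
}


def layer_drops(values):
    """Return the decrease in surprisal from each layer to the next (layer i -> i+1),
    rounded to the chart's reading precision."""
    return [round(a - b, 2) for a, b in zip(values, values[1:])]


def steepest_drop_layer(values):
    """Return the 1-indexed layer at which the largest layer-to-layer drop starts."""
    drops = layer_drops(values)
    return drops.index(max(drops)) + 1


for step, values in SURPRISAL.items():
    print(f"step {step}: steepest drop starts at layer {steepest_drop_layer(values)}, "
          f"drops = {layer_drops(values)}")
```

For all three series the largest drop starts at Layer 1, consistent with the trend verification above.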

### Key Observations

1. **Consistent Hierarchy:** The "step 5000" (blue) line is consistently the highest across all layers. The "step 20000" (green) line is consistently the lowest from Layer 2 onward. The "step 10000" (orange) line remains between them.

2. **Convergence at Start:** At Layer 1, the orange and green lines (steps 10000 and 20000) start at nearly the same surprisal value (~6.6), while the blue line (step 5000) starts noticeably higher (~6.9).

3. **Divergence with Depth:** The gap between the lines widens as the layer number increases. The difference in surprisal between step 5000 and step 20000 is much larger at Layer 12 (~1.6 units) than at Layer 1 (~0.3 units).

4. **Steep Initial Drop:** The most dramatic reduction in surprisal for all series occurs between the first and second layers.

5. **Plateau vs. Continued Decline:** The blue line (step 5000) nearly plateaus after Layer 3, staying at about 6.4 to 6.5. In contrast, the orange and green lines keep declining until roughly Layer 11 before leveling off, and the green line's descent even steepens between Layers 6 and 9.
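
Observation 3 can be checked with a short calculation of the per-layer gap between the earliest and latest checkpoints. The sketch below is illustrative only: it reuses the approximate chart readings, and `gaps` is a hypothetical helper name.

```python
# Approximate chart readings for the two extreme checkpoints (eyeballed values).
STEP_5000 = [6.9, 6.6, 6.5, 6.5, 6.45, 6.4, 6.4, 6.4, 6.4, 6.4, 6.4, 6.4]
STEP_20000 = [6.6, 5.7, 5.55, 5.55, 5.45, 5.4, 5.25, 5.0, 4.9, 4.85, 4.8, 4.8]


def gaps(early, late):
    """Per-layer surprisal gap (early checkpoint minus late checkpoint),
    rounded to the chart's reading precision."""
    return [round(e - l, 2) for e, l in zip(early, late)]


gap = gaps(STEP_5000, STEP_20000)
for layer, g in enumerate(gap, start=1):
    print(f"layer {layer:2d}: gap = {g:.2f}")
```

With these readings the gap grows from about 0.3 at Layer 1 to about 1.6 at Layer 12 and never shrinks from one layer to the next.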

### Interpretation

This chart likely visualizes the internal processing of a neural network (e.g., a transformer) during training. Surprisal is the negative log-probability, −log p, that a model assigns to an observed token, so it measures how unexpected that token is; here it is reported per layer, presumably reflecting how well each layer's representation predicts the data. Lower surprisal indicates that the model finds the data more predictable.
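
The surprisal of an event with probability p is −log p. The chart does not state the logarithm base, so the minimal sketch below takes it as a parameter (natural log gives nats, base 2 gives bits). The function names are illustrative, and averaging over tokens is an assumption about how a single per-layer value might be aggregated.

```python
import math


def surprisal(p, base=math.e):
    """Surprisal of an event with probability p: -log_base(p).
    base=math.e gives nats; base=2 gives bits."""
    if not 0 < p <= 1:
        raise ValueError("p must be in (0, 1]")
    return -math.log(p) / math.log(base)


def mean_surprisal(probs, base=math.e):
    """Average surprisal over the probabilities a model assigned to the observed tokens."""
    return sum(surprisal(p, base) for p in probs) / len(probs)


print(surprisal(0.5, base=2))             # a fair coin flip carries 1 bit
print(mean_surprisal([0.5, 0.25], base=2))
```

Because the mean of −log p equals −log of the geometric mean of p, a mean surprisal of 6.4 corresponds to a geometric-mean token probability of about e^-6.4 ≈ 0.0017 if the unit is nats, or about 2^-6.4 ≈ 0.012 if it is bits.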

The data suggests that:

* **Training Reduces Surprisal:** As training progresses from 5000 to 20000 steps, surprisal decreases at nearly every layer (steps 10000 and 20000 are indistinguishable at Layer 1), indicating that the model is learning and assigning higher probability to the observed data.

* **Deeper Layers Refine Understanding:** Surprisal generally falls from one layer to the next, although a few adjacent layers (e.g., Layers 3 and 4) show no change. The model appears to build an increasingly predictive representation as data flows through its depth.

* **Training Step Impact is Layer-Dependent:** The benefit of additional training is most pronounced in the deeper layers (e.g., Layers 8-12), where the gap between step 5000 and step 20000 reaches about 1.4 to 1.6 units. The early layers (1-2) show less variation between training steps, possibly because they learn basic features quickly, whereas the later layers appear to need more training to reduce surprisal.

* **Model Maturity:** The plateau of the "step 5000" line suggests the model at that early stage has learned what it can in the initial layers but struggles to reduce uncertainty further in deeper layers. The continued decline in the later training steps indicates ongoing learning and refinement throughout the entire network depth.
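
The chart does not say how a per-layer surprisal was obtained. One common approach, assumed here rather than confirmed by the source, is a "logit lens": decode each layer's hidden state with the model's final unembedding matrix and take the surprisal of the observed next token. The pure-Python sketch below uses a hypothetical toy vocabulary, unembedding matrix, and hidden states, and it omits the final layer normalization that transformer implementations typically apply before the unembedding.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def matvec(matrix, vec):
    """Multiply a (vocab x d) matrix, given as a list of rows, by a length-d vector."""
    return [sum(w * h for w, h in zip(row, vec)) for row in matrix]


def layerwise_surprisal(hidden_states, unembed, target):
    """Logit-lens-style sketch (assumed method): decode each layer's hidden state
    with the final unembedding matrix and return the target token's surprisal in nats."""
    out = []
    for h in hidden_states:
        probs = softmax(matvec(unembed, h))
        out.append(-math.log(probs[target]))
    return out


# Hypothetical toy model: vocabulary of 3 tokens, hidden size 2.
UNEMBED = [[1.0, 0.0],    # token 0
           [0.0, 1.0],    # token 1
           [-1.0, -1.0]]  # token 2
HIDDEN = [[0.0, 0.0],     # layer 1: no information, uniform prediction
          [1.0, 0.0],     # layer 2: leans toward token 0
          [3.0, 0.0]]     # layer 3: strongly predicts token 0

print([round(s, 3) for s in layerwise_surprisal(HIDDEN, UNEMBED, target=0)])
```

In this toy setup the printed surprisal falls from ln 3 ≈ 1.099 nats at the uninformative first layer to about 0.408 and then 0.051 nats, the same qualitative pattern of later layers becoming more predictive that the chart shows.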

**In summary, the chart demonstrates that both increased network depth and extended training time contribute to reducing model uncertainty (surprisal), with the most significant combined effect occurring in the later layers of a more extensively trained model.**