## Diagram: Sequence Prediction Model vs. Ground Truth

### Overview

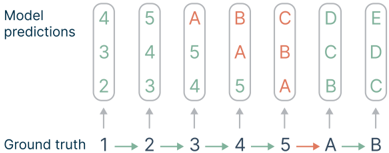

This technical diagram compares a model's sequential predictions with the corresponding ground-truth labels. It visualizes a sequence prediction task, likely from a machine learning or time-series context, in which the model outputs a stack of three predictions at each step alongside a known true sequence. The diagram highlights a consistent predictive pattern and a transition in label type.

### Components/Axes

The diagram is organized into two primary horizontal rows, with vertical alignment connecting corresponding steps.

1. **Top Row: "Model predictions"**

   * **Label:** The text "Model predictions" is positioned at the top-left.

   * **Structure:** Seven vertical columns (stacks). Each column is a rounded rectangle with a light-green border containing three vertically stacked characters.

   * **Content (left to right, each column listed top to bottom):**

     * Column 1: `4`, `3`, `2`

     * Column 2: `5`, `4`, `3`

     * Column 3: `A`, `5`, `4`

     * Column 4: `B`, `A`, `5`

     * Column 5: `C`, `B`, `A`

     * Column 6: `D`, `C`, `B`

     * Column 7: `E`, `D`, `C`

2. **Bottom Row: "Ground truth"**

   * **Label:** The text "Ground truth" is positioned at the bottom-left.

   * **Structure:** A horizontal sequence of seven elements connected by right-pointing arrows (`→`).

   * **Content (left to right):** `1` → `2` → `3` → `4` → `5` → `A` → `B`

   * **Color Coding:** The first five elements (`1` through `5`) are in black text. The final two elements (`A` and `B`) are in **red text**, apparently to mark the change in label domain.

3. **Spatial Relationships & Connectors:**

   * Each element in the "Ground truth" sequence has a thin, gray, upward-pointing arrow (`↑`) directly above it.

   * These arrows point to the base of the corresponding column in the "Model predictions" row, establishing a direct step-by-step correspondence.

   * The entire diagram flows from left to right, representing progression through a sequence.

### Detailed Analysis

* **Prediction Pattern:** At each step `n` (from 1 to 7), the model outputs a stack of three predictions. The diagram shows no scores, so reading each stack as a ranked list (most likely on top) is an interpretation. The **top prediction** in each column follows a clear pattern relative to the ground-truth sequence.

* **Trend Verification:** The top predictions form the sequence `4, 5, A, B, C, D, E`, which is the ground truth shifted by three steps: the top prediction at step `n` equals the ground-truth label at step `n + 3`.

  * Step 1: Top prediction `4` = ground truth at step 4.

  * Step 2: Top prediction `5` = ground truth at step 5.

  * Step 3: Top prediction `A` = ground truth at step 6.

  * Step 4: Top prediction `B` = ground truth at step 7.

  * Steps 5-7: Top predictions `C`, `D`, `E` fall beyond the seven ground-truth labels shown, continuing the alphabetic pattern established by `A` and `B`.

* **Secondary Predictions:** Each stack consists of the new top prediction followed by the top two entries of the previous stack, creating a "sliding window" effect; from step 3 onward, the middle and bottom entries are therefore the top predictions of the two preceding steps. For example, at step 2 the stack is `5, 4, 3`: `5` is the new top prediction, while `4` and `3` were the top and middle predictions at step 1. Equivalently, the stack at step `n` holds the labels for steps `n + 1` through `n + 3` (furthest on top), matching the ground truth wherever it is shown.
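The two regularities above can be checked mechanically. The sketch below is a hand transcription of the figure into Python lists (a reading of the image, not data released with it) and tests both the `n + 3` offset of the top prediction and the stack-to-stack shift; the helper names `top_matches_shifted_truth` and `is_sliding_window` are hypothetical.

```python
# Hand transcription of the diagram: each column is listed top to bottom.
predictions = [
    ["4", "3", "2"],
    ["5", "4", "3"],
    ["A", "5", "4"],
    ["B", "A", "5"],
    ["C", "B", "A"],
    ["D", "C", "B"],
    ["E", "D", "C"],
]
ground_truth = ["1", "2", "3", "4", "5", "A", "B"]

OFFSET = 3  # claimed lead of the top prediction over the ground truth


def top_matches_shifted_truth(preds, truth, offset):
    """Check preds[n][0] == truth[n + offset] wherever that label is shown.

    Returns (all_matched, number_of_steps_checked).
    """
    checked = 0
    for n, column in enumerate(preds):
        target = n + offset
        if target >= len(truth):
            break  # later steps extrapolate past the visible ground truth
        if column[0] != truth[target]:
            return False, checked
        checked += 1
    return True, checked


def is_sliding_window(preds):
    """Check that each stack's lower two entries are the previous stack's top two."""
    return all(preds[n][1:] == preds[n - 1][:2] for n in range(1, len(preds)))


ok, steps_checked = top_matches_shifted_truth(predictions, ground_truth, OFFSET)
print(ok, steps_checked)               # True 4
print(is_sliding_window(predictions))  # True
```

Only steps 1-4 can be checked against the figure; steps 5-7 have no visible target.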

### Key Observations

1. **Consistent Offset:** The top prediction consistently leads the ground truth by three steps: at every step where the target label is visible (steps 1-4), it matches the ground-truth label three steps ahead.

2. **Domain Transition:** The ground truth transitions from numeric (`1-5`) to alphabetic (`A, B`) labels at step 6. The model not only anticipates this transition (predicting `A` and `B` correctly at steps 3 and 4) but also **extrapolates** within the new domain, predicting `C`, `D`, and `E` at steps 5-7.

3. **Prediction Hierarchy:** Each prediction stack appears to be ordered, presumably with the most likely prediction at the top, although no scores are shown. The composition of each stack (the current top prediction plus recent past top predictions) suggests the model may use a recurrent or autoregressive mechanism.

4. **Visual Coding:** The use of red for the final ground truth labels (`A`, `B`) visually emphasizes the point of domain shift, which is a critical event in the sequence.
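The three-step offset and the alphabetic extrapolation can both be recovered from the transcribed labels without assuming the offset in advance. This sketch searches every candidate shift and keeps those consistent with all overlapping steps; `consistent_offsets` and `continues_alphabet` are hypothetical helpers, and the label lists are a hand transcription of the figure.

```python
from string import ascii_uppercase

tops = ["4", "5", "A", "B", "C", "D", "E"]  # top entry of each column
ground_truth = ["1", "2", "3", "4", "5", "A", "B"]


def consistent_offsets(tops, truth):
    """Return every shift k with tops[n] == truth[n + k] on all overlapping steps."""
    offsets = []
    for k in range(len(truth)):
        overlap = [(tops[n], truth[n + k]) for n in range(len(tops)) if n + k < len(truth)]
        if overlap and all(pred == true for pred, true in overlap):
            offsets.append(k)
    return offsets


def continues_alphabet(labels):
    """True if each label is the uppercase letter right after the previous one."""
    return all(
        ascii_uppercase.index(b) == ascii_uppercase.index(a) + 1
        for a, b in zip(labels, labels[1:])
    )


print(consistent_offsets(tops, ground_truth))  # [3]
# A and B are observed ground truth; C, D, E are the model's extrapolation.
print(continues_alphabet(tops[2:]))            # True
```

A single surviving shift is what makes "three steps ahead" a property of the figure rather than one possible reading of it.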

### Interpretation

This diagram likely illustrates the performance or internal state of a sequence prediction model (e.g., a recurrent neural network, transformer, or autoregressive model) on a specific task. The data suggests the following:

* **Task Nature:** The task involves predicting upcoming elements of a sequence whose representation changes partway through (from numbers to letters). This could simulate scenarios such as predicting categorical states after a period of numerical measurement.

* **Model Behavior:** The model appears to have learned the sequential structure well enough to maintain a fixed, multi-step lookahead. The consistent three-step offset might be an architectural feature (e.g., a specific prediction horizon) or an emergent property of training. Its extrapolation of the alphabetic sequence suggests that it has learned the underlying pattern of progression rather than memorizing labels.

* **Underlying Mechanism:** The composition of the prediction stacks (containing recent past predictions) is consistent with a model that maintains a hidden state or memory, which would let it base new predictions on both the current input and its own recent outputs. The diagram alone does not rule out other mechanisms, such as a model that emits a fixed window of future labels at every step.

* **Anomaly/Outlier:** The pattern contains no visual outliers; the model's behavior is consistent across all seven steps. The primary "anomaly" is the domain shift in the ground truth itself, which the model's top predictions track without error at every step where the target is visible.
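The stack composition described under "Underlying Mechanism" can be reproduced by a plain sliding window over the sequence, which gives one concrete reading of it. In this sketch, `window_stacks` and its `horizon` parameter are hypothetical, and the labels `C`, `D`, `E` appended to the ground truth are taken from the model's own top predictions (an assumption: the figure does not show them as ground truth). Matching the drawn columns shows only that the stacks are consistent with a fixed three-label window; it does not identify which architecture produced them.

```python
def window_stacks(sequence, steps, horizon=3):
    """Return one stack per step: the next `horizon` labels, furthest on top.

    Step n (1-based) gets the labels at positions n+1 .. n+horizon, i.e. the
    0-based slice sequence[n : n + horizon], reversed so the furthest is first.
    """
    stacks = []
    for n in range(1, steps + 1):
        window = sequence[n : n + horizon]
        if len(window) < horizon:
            raise ValueError(f"sequence too short for step {n}")
        stacks.append(list(reversed(window)))
    return stacks


# Ground truth 1..B, extended with C, D, E from the model's top predictions
# (assumed continuation; not ground truth in the figure).
extended = list("12345ABCDE")

# The seven columns as drawn, each listed top to bottom.
diagram = [
    ["4", "3", "2"], ["5", "4", "3"], ["A", "5", "4"], ["B", "A", "5"],
    ["C", "B", "A"], ["D", "C", "B"], ["E", "D", "C"],
]

print(window_stacks(extended, steps=7) == diagram)  # True
```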

In short, the diagram illustrates multi-step-ahead sequence prediction that stays correct across a change in label space, at least over the few steps shown.