## Bar Chart: Subtractor Performance vs. Core Count

### Overview

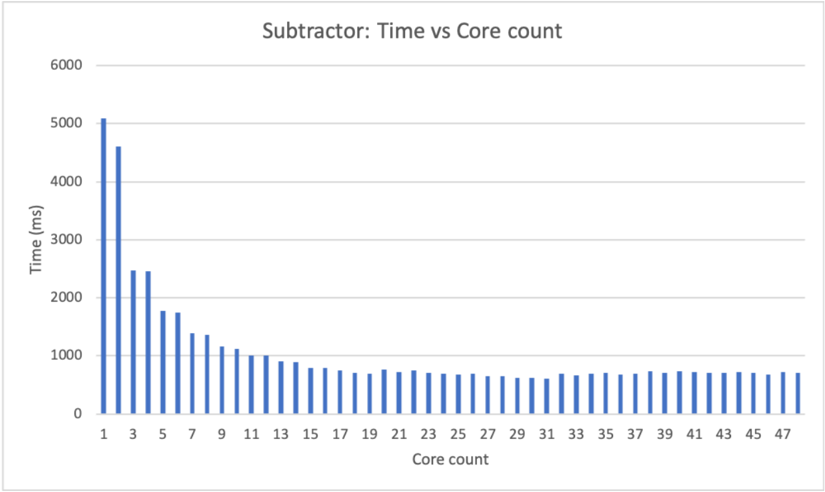

The image displays a vertical bar chart titled "Subtractor: Time vs Core count." It illustrates the relationship between the number of processing cores used (x-axis) and the execution time in milliseconds (y-axis) for a computational task labeled "Subtractor." Execution time falls steeply over roughly the first 15 cores and then levels off.

### Components/Axes

* **Chart Title:** "Subtractor: Time vs Core count" (centered at the top).

* **Y-Axis:**

    * **Label:** "Time (ms)" (rotated vertically on the left side).

    * **Scale:** Linear scale from 0 to 6000.

    * **Major Gridlines & Tick Marks:** At 0, 1000, 2000, 3000, 4000, 5000, and 6000 ms.

* **X-Axis:**

    * **Label:** "Core count" (centered at the bottom).

    * **Scale:** Discrete, evenly spaced core counts.

    * **Tick Marks & Labels:** Bars exist for every integer core count from 1 to 48, but only the odd counts (1, 3, 5, ..., 47) are labeled.

* **Data Series:** A single series represented by blue vertical bars. Each bar's height corresponds to the execution time for a specific core count.

* **Legend:** Not present. The chart contains only one data series.

### Detailed Analysis

**Trend Verification:** The data series shows a strong, inverse relationship. The line formed by the bar tops slopes steeply downward from left to right initially, then flattens into a near-horizontal plateau. This indicates that execution time decreases rapidly as cores are added from 1 to approximately 15, after which further increases in core count yield minimal time reduction.

**Spatial Grounding & Approximate Data Points:**

* **Core Count 1:** The tallest bar, located at the far left. Its top aligns just above the 5000 ms gridline. **Approximate Value: 5050 ms.**

* **Core Count 2:** The second bar. Its top is between the 4000 and 5000 ms lines, closer to 5000. **Approximate Value: 4600 ms.**

* **Core Count 3:** The third bar. Its top is roughly midway between 2000 and 3000 ms. **Approximate Value: 2500 ms.**

* **Core Count 4:** The fourth bar. Its height is very similar to core count 3. **Approximate Value: 2450 ms.**

* **Core Count 5:** The fifth bar. Its top is below the 2000 ms line. **Approximate Value: 1750 ms.**

* **Core Count 7:** The seventh bar. **Approximate Value: 1350 ms.**

* **Core Count 9:** The ninth bar. **Approximate Value: 1100 ms.**

* **Core Count 11:** The eleventh bar. **Approximate Value: 1000 ms.**

* **Core Count 13:** The thirteenth bar. **Approximate Value: 900 ms.**

* **Core Count 15:** The fifteenth bar. **Approximate Value: 800 ms.**

* **Core Count 17 to 48:** From approximately core count 17 onward, the bar heights stabilize. They fluctuate slightly but remain consistently in the range of **600 ms to 800 ms**. The lowest point appears around core counts 29-31, near **600 ms**. The final bar (core count 48) is approximately **750 ms**.
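
The readings above can be turned into speedup and parallel-efficiency figures. The following is a minimal sketch that uses only the approximate values listed in this section (visual estimates, not measurements); the names `approx_time_ms` and `speedup_table` are illustrative and do not come from the chart.

```python
# Approximate execution times (ms) read off the chart by eye.
# These are visual estimates, not exact measurements.
approx_time_ms = {
    1: 5050, 2: 4600, 3: 2500, 4: 2450, 5: 1750, 7: 1350,
    9: 1100, 11: 1000, 13: 900, 15: 800, 48: 750,
}


def speedup_table(times):
    """Return {cores: (speedup, efficiency)} relative to the 1-core time.

    speedup    = T(1) / T(n)
    efficiency = speedup / n
    """
    baseline = times[1]
    table = {}
    for cores, t in sorted(times.items()):
        speedup = baseline / t
        table[cores] = (speedup, speedup / cores)
    return table


if __name__ == "__main__":
    for cores, (s, e) in speedup_table(approx_time_ms).items():
        print(f"{cores:>2} cores: speedup {s:5.2f}x, efficiency {e:6.1%}")
```

Efficiency (speedup divided by core count) makes the plateau easier to see than raw times: once the time stops falling, each added core lowers the efficiency.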

### Key Observations

1. **Diminishing Returns:** The largest single gain occurs when moving from 2 to 3 cores (a ~2100 ms reduction), whereas moving from 1 to 2 cores saves only ~450 ms. After about 5 cores the gains shrink progressively, and beyond ~15 cores they are marginal.

2. **Performance Plateau:** After approximately 15-20 cores, adding more cores does not result in a meaningful decrease in execution time. The time stabilizes in the 600-800 ms range.

3. **Initial Anomaly:** The time for 2 cores is only slightly less than for 1 core, while the drop to 3 cores is massive. This suggests a potential overhead or non-parallelizable component that is efficiently overcome only with 3 or more cores.

4. **Consistency:** The plateau region shows only small fluctuations (all bars stay within roughly 600-800 ms), indicating stable performance at higher core counts.
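
The step-to-step reductions quoted in these observations can be checked directly against the approximate readings. This is a small sketch under the same caveat that the values are visual estimates; `marginal_gains` is a hypothetical helper name.

```python
# Approximate execution times (ms) read off the chart by eye (estimates only).
approx_time_ms = {
    1: 5050, 2: 4600, 3: 2500, 4: 2450, 5: 1750, 7: 1350,
    9: 1100, 11: 1000, 13: 900, 15: 800, 48: 750,
}


def marginal_gains(times):
    """Return a list of (from_cores, to_cores, ms_saved, ms_saved_per_added_core)
    for each pair of consecutive core counts that has a reading."""
    counts = sorted(times)
    gains = []
    for a, b in zip(counts, counts[1:]):
        saved = times[a] - times[b]
        gains.append((a, b, saved, saved / (b - a)))
    return gains


if __name__ == "__main__":
    for a, b, saved, per_core in marginal_gains(approx_time_ms):
        print(f"{a:>2} -> {b:>2} cores: {saved:>5} ms saved "
              f"({per_core:7.1f} ms per added core)")
```

Dividing by the number of added cores keeps the widely spaced readings (for example 15 to 48) comparable with the single-core steps at the left of the chart.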

### Interpretation

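This chart is consistent with **Amdahl's Law** in parallel computing: for a fixed workload, the achievable speedup is bounded by the fraction of the work that cannot be parallelized. The "Subtractor" task has a portion that can be parallelized (which produces the steep decline) and a portion that is serial or has fixed overhead (which sets the plateau). **Gustafson's Law**, which assumes the problem size grows with the core count, would be relevant only if the workload were scaled, and the chart gives no indication of that.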

* **What the data suggests:** The task benefits greatly from parallelization up to a point. The most cost-effective allocation for this specific task appears to be around 15-20 cores. Beyond 20 cores, additional cores consume computational resources while reducing runtime by at most ~200 ms (the plateau stays within 600-800 ms), which suggests that the parallelizable portion of the work is close to saturated.

* **How elements relate:** The x-axis (Core count) is the independent variable representing resource investment. The y-axis (Time) is the dependent variable representing performance. The inverse curve is the direct result of applying more resources to the parallelizable workload.

* **Notable outliers:** The relatively small improvement from 1 to 2 cores compared to the leap from 2 to 3 is the most notable anomaly. This could indicate a specific implementation detail, such as a two-threaded process that still contends for a shared resource, which is alleviated when a third thread is introduced.

* **Practical Implication:** For a system engineer, this chart provides clear guidance: allocating more than ~20 cores to this specific "Subtractor" process yields little additional benefit. The ideal configuration balances performance (roughly 600-800 ms on the plateau) with resource cost.
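
As a rough illustration of the Amdahl's Law reading, the serial fraction can be estimated from two chart readings. This sketch assumes the fixed-workload model T(n) = T(1) · (s + (1 − s) / n) applies, which the chart itself does not confirm; it is a single-point estimate rather than a fit, and the function names are hypothetical.

```python
def serial_fraction(t1_ms, tn_ms, n):
    """Solve T(n) = T(1) * (s + (1 - s) / n) for the serial fraction s."""
    if n <= 1:
        raise ValueError("n must be greater than 1")
    ratio = tn_ms / t1_ms
    return (ratio - 1 / n) / (1 - 1 / n)


def amdahl_time(t1_ms, s, n):
    """Predicted time on n cores under Amdahl's Law for serial fraction s."""
    return t1_ms * (s + (1 - s) / n)


if __name__ == "__main__":
    # Approximate chart readings (ms): 1 core and 48 cores. Estimates only.
    t1, t48 = 5050, 750
    s = serial_fraction(t1, t48, 48)
    print(f"estimated serial fraction: {s:.3f}")
    for n in (2, 3, 15):
        print(f"{n:>2} cores: model predicts {amdahl_time(t1, s, n):.0f} ms")
```

Under this assumption, the model predicts a 2-core time well below the ~4600 ms read from the chart, which is another way of seeing that the 1-to-2-core step behaves anomalously relative to simple Amdahl scaling.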