## Diagram: Neural Network Architecture with Reflection and Abduction

### Overview

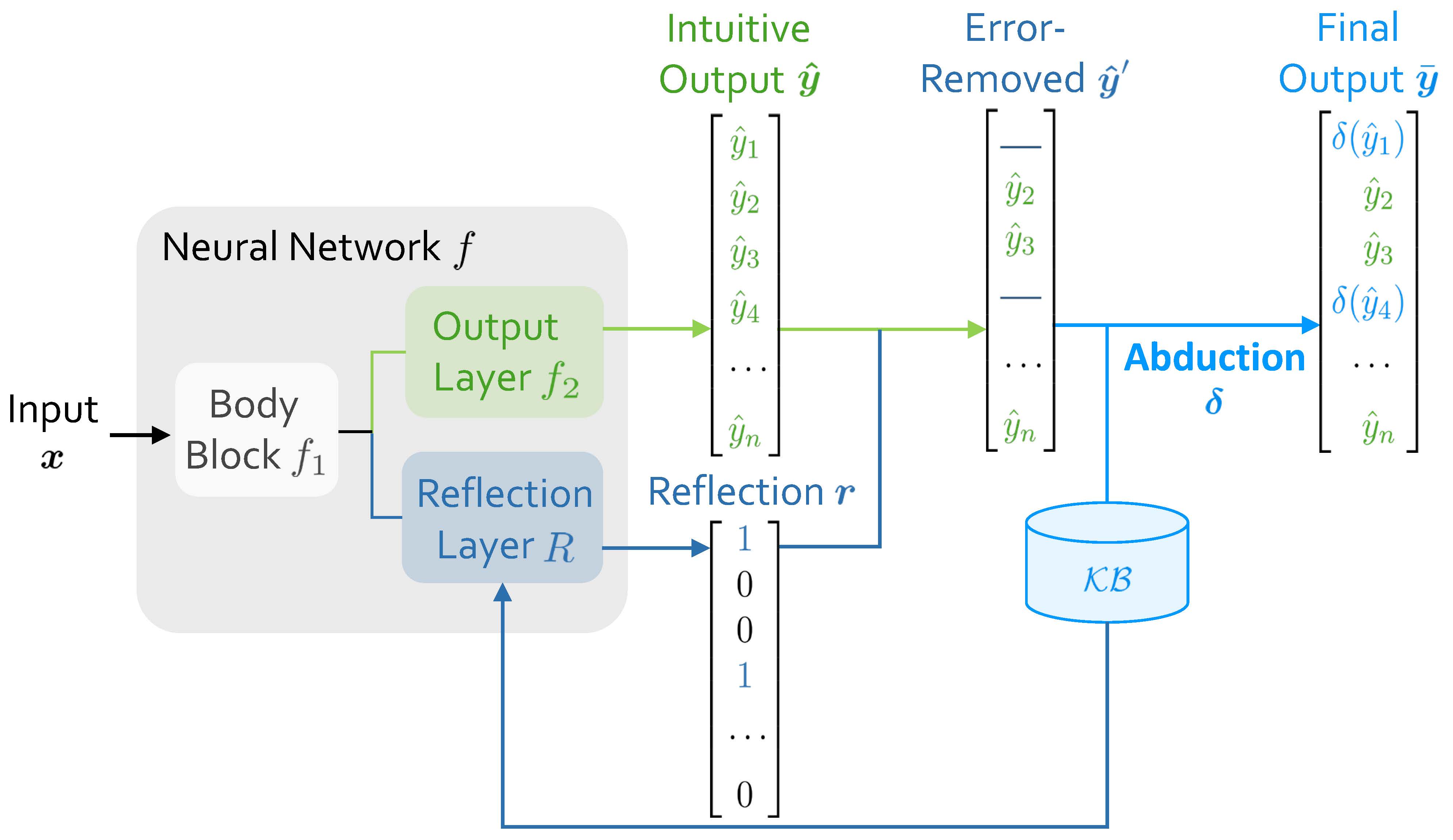

The diagram shows a neural network architecture that adds a reflection mechanism and an abduction step for error correction. An input `x` passes through a neural network `f`, which produces an intuitive output `ŷ` and a reflection vector `r`. The entries of `ŷ` that `r` flags are then corrected with the help of an external knowledge base to give the final output `ȳ`.

### Components/Axes

The diagram is organized into several key components, flowing from left to right:

1. **Input**: Labeled "Input `x`" on the far left.

2. **Neural Network `f`**: A large, light-gray rounded rectangle containing:

* **Body Block `f₁`**: The initial processing unit.

* **Output Layer `f₂`**: A green box that receives data from the Body Block.

* **Reflection Layer `R`**: A blue box that also receives data from the Body Block.

3. **Intuitive Output `ŷ`**: A vertical vector (list) in green, positioned to the right of the Neural Network. It contains elements: `ŷ₁`, `ŷ₂`, `ŷ₃`, `ŷ₄`, `...`, `ŷₙ`.

4. **Reflection `r`**: A vertical binary vector in blue, positioned below the Intuitive Output. It contains the values: `1`, `0`, `0`, `1`, `...`, `0`.

5. **Error-Removed `ŷ'`**: A vertical vector in blue, positioned to the right of the Intuitive Output. It shows a modified version of `ŷ`, where some elements (like `ŷ₁` and `ŷ₄`) are replaced with horizontal lines (`—`), indicating removal or masking.

6. **Knowledge Base `KB`**: A blue cylinder icon, representing an external database or knowledge store.

7. **Abduction `δ`**: A process label in blue, associated with an arrow pointing from the Error-Removed vector to the Final Output.

8. **Final Output `ȳ`**: A vertical vector in blue on the far right. It contains elements: `δ(ŷ₁)`, `ŷ₂`, `ŷ₃`, `δ(ŷ₄)`, `...`, `ŷₙ`.
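The two-headed structure of `f` listed above (a shared body `f₁` feeding both the output layer `f₂` and the reflection layer `R`) can be sketched in plain Python. The weights, the ReLU and sigmoid activations, and the 0.5 threshold are all assumptions chosen for illustration; the diagram does not specify layer types, sizes, or how `R` produces a binary vector.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def linear(weights, bias, vec):
    """Dense layer: one output per weight row."""
    return [sum(w * v for w, v in zip(row, vec)) + b
            for row, b in zip(weights, bias)]

def body_block(x):
    # f1: shared feature extractor (hypothetical fixed weights, ReLU).
    hidden = linear([[1.0, -1.0], [0.5, 0.5]], [0.0, 0.0], x)
    return [max(0.0, h) for h in hidden]

def output_layer(h):
    # f2: maps shared features to the intuitive output y_hat.
    return linear([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]], [0.0, 0.0, 0.0], h)

def reflection_layer(h):
    # R: one logit per output position; higher means "more likely an error".
    return linear([[2.0, -1.0], [-1.0, 0.0], [-1.0, 1.0]], [0.0, 0.0, 0.0], h)

def forward(x, threshold=0.5):
    """Run the shared body once, then both heads on the same features."""
    h = body_block(x)
    y_hat = output_layer(h)
    # Assumed binarization: sigmoid followed by a fixed threshold.
    r = [1 if sigmoid(z) > threshold else 0 for z in reflection_layer(h)]
    return y_hat, r

y_hat, r = forward([2.0, 1.0])
print(y_hat, r)
```

Both heads read the same features from `body_block`, so the reflection vector always has one entry per element of `y_hat`.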

### Detailed Analysis

**Data Flow and Component Relationships:**

1. **Initial Processing**: The input `x` enters the **Body Block `f₁`**. The output of `f₁` splits into two parallel paths within the Neural Network `f`.

2. **Dual Output Generation**:

* Path 1 (Green): Goes to the **Output Layer `f₂`**, which generates the **Intuitive Output `ŷ`**. This is the network's initial prediction vector.

* Path 2 (Blue): Goes to the **Reflection Layer `R`**, which generates the **Reflection `r`**. This is a binary vector in which, judging by the masking step that follows, a `1` flags the corresponding position of `ŷ` as a suspected error and a `0` leaves it unchanged.

3. **Error Removal / Masking**: The **Intuitive Output `ŷ`** and the **Reflection `r`** are combined. The diagram shows the **Error-Removed `ŷ'`** vector. The visual logic suggests that where `r` has a `0`, the corresponding element in `ŷ` is retained (e.g., `ŷ₂`, `ŷ₃`). Where `r` has a `1`, the corresponding element in `ŷ` is marked for correction (replaced with `—` in `ŷ'`, e.g., positions 1 and 4).

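4. **Knowledge-Based Correction**: The **Error-Removed `ŷ'`** and the **Knowledge Base `KB`** feed into the **Abduction `δ`** process. Abduction is a form of logical inference that seeks the most plausible explanation for a set of observations; here, that means values for the masked positions that are consistent with `KB`.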

5. **Final Output Generation**: The **Abduction `δ`** process uses the knowledge base to correct the masked elements. The result is the **Final Output `ȳ`**. In this vector:

* Elements that were retained (`ŷ₂`, `ŷ₃`, ..., `ŷₙ`) remain unchanged.

* Elements that were masked (`ŷ₁`, `ŷ₄`) are replaced by the result of applying `δ` to them: `δ(ŷ₁)` and `δ(ŷ₄)`. Since `KB` feeds into this step, `δ` presumably draws on the knowledge base, and possibly on the retained elements, to infer a corrected value.
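The masking and abduction steps above can be sketched as follows. This is a minimal illustration under stated assumptions, not the method behind the diagram: the knowledge base is modeled as a hypothetical predicate `kb_consistent`, the label domain is the digits 0 to 9, and abduction is approximated by exhaustive search for the first completion the predicate accepts. The search also ignores the original flagged values, whereas the diagram's notation `δ(ŷ₁)` leaves open whether `δ` uses them.

```python
from itertools import product

MASK = None  # stands for the dash placeholder shown in the diagram

def remove_errors(y_hat, r):
    """Blank out every position that the reflection vector flags with 1."""
    return [MASK if flag == 1 else y for y, flag in zip(y_hat, r)]

def abduce(y_masked, domain, kb_consistent):
    """Fill masked positions with the first assignment the KB accepts."""
    holes = [i for i, y in enumerate(y_masked) if y is MASK]
    for candidate in product(domain, repeat=len(holes)):
        y_bar = list(y_masked)
        for i, value in zip(holes, candidate):
            y_bar[i] = value
        if kb_consistent(y_bar):
            return y_bar
    return None  # no completion is consistent with the KB

def kb_consistent(y):
    # Hypothetical KB rule: outputs must form a run of consecutive integers.
    return all(b - a == 1 for a, b in zip(y, y[1:]))

y_hat = [7, 4, 5, 2]   # intuitive output with errors at positions 1 and 4
r = [1, 0, 0, 1]       # reflection vector, same pattern as in the diagram
y_masked = remove_errors(y_hat, r)
y_bar = abduce(y_masked, range(10), kb_consistent)
print(y_masked, y_bar)
```

Exhaustive search grows exponentially with the number of masked positions, which is one reason sparse flags in `r` matter for a scheme like this.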

### Key Observations

* **Dual-Path Architecture**: The core neural network `f` has a parallel structure, producing both a prediction (`ŷ`) and a self-assessment in the form of an error mask (`r`), where `1` marks a suspected error.

* **Selective Correction**: The system does not correct all outputs. The Reflection vector `r` (`1`, `0`, `0`, `1`, `...`, `0`) targets only the 1st and 4th elements for correction and leaves the 2nd, 3rd, and nth elements untouched.

* **Role of Abduction**: The correction mechanism (`δ`) is explicitly labeled as "Abduction," indicating it performs inference to the best explanation, rather than simple interpolation or averaging. It relies on an external **Knowledge Base (`KB`)**.

* **Notation Consistency**: The diagram uses consistent color coding (green for intuitive/initial, blue for reflective/corrected) and mathematical notation (`ŷ` for the initial estimate, `ŷ'` for the error-removed vector, `ȳ` for the final output, `δ` for the abduction function).
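The selective correction can be stated compactly per element. The formula below only restates the vectors shown in the diagram, with an index `i` over output positions introduced here for convenience; it is not taken from the diagram itself.

```latex
\bar{y}_i =
\begin{cases}
  \hat{y}_i         & \text{if } r_i = 0 \quad \text{(retained)} \\
  \delta(\hat{y}_i) & \text{if } r_i = 1 \quad \text{(flagged, corrected by abduction with } KB\text{)}
\end{cases}
\qquad i = 1, \dots, n
```

For the example vector `r = (1, 0, 0, 1, ..., 0)`, this gives `δ(ŷ₁)`, `ŷ₂`, `ŷ₃`, `δ(ŷ₄)`, `...`, `ŷₙ`, matching the Final Output column.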

### Interpretation

This diagram depicts a **self-correcting neural network system**. Its purpose is to improve the reliability of a model's output by identifying and correcting potential errors.

* **How it works**: The system first makes a prediction (`ŷ`). Simultaneously, a reflection layer assesses this prediction, generating a binary vector (`r`) that flags specific outputs as potentially erroneous (where `r=1`). These flagged outputs are then "removed" or masked. An abduction process (`δ`), powered by an external knowledge base (`KB`), infers the most plausible correct values for only those flagged positions, producing the final output (`ȳ`).

* **What the diagram suggests**: The architecture appears to be designed for tasks where:

1. Outputs are structured (e.g., a sequence, a set of class probabilities).

2. Errors are sparse (only a few elements need correction, as shown by the few `1`s in `r`).

3. External, structured knowledge (`KB`) is available to inform corrections.

* **Notable Implications**: This is not a standard feedforward network. It introduces a form of **internal critique** (the Reflection Layer) and **external validation** (the Knowledge Base via Abduction). The system explicitly separates the generation of a hypothesis from its verification and correction, mimicking a scientific or diagnostic reasoning process. The use of "abduction" is significant, as it implies the correction is based on finding the most plausible explanation given the knowledge, not just optimizing a loss function.