## Diagram: Neural Network Error Correction and Abduction Process

### Overview

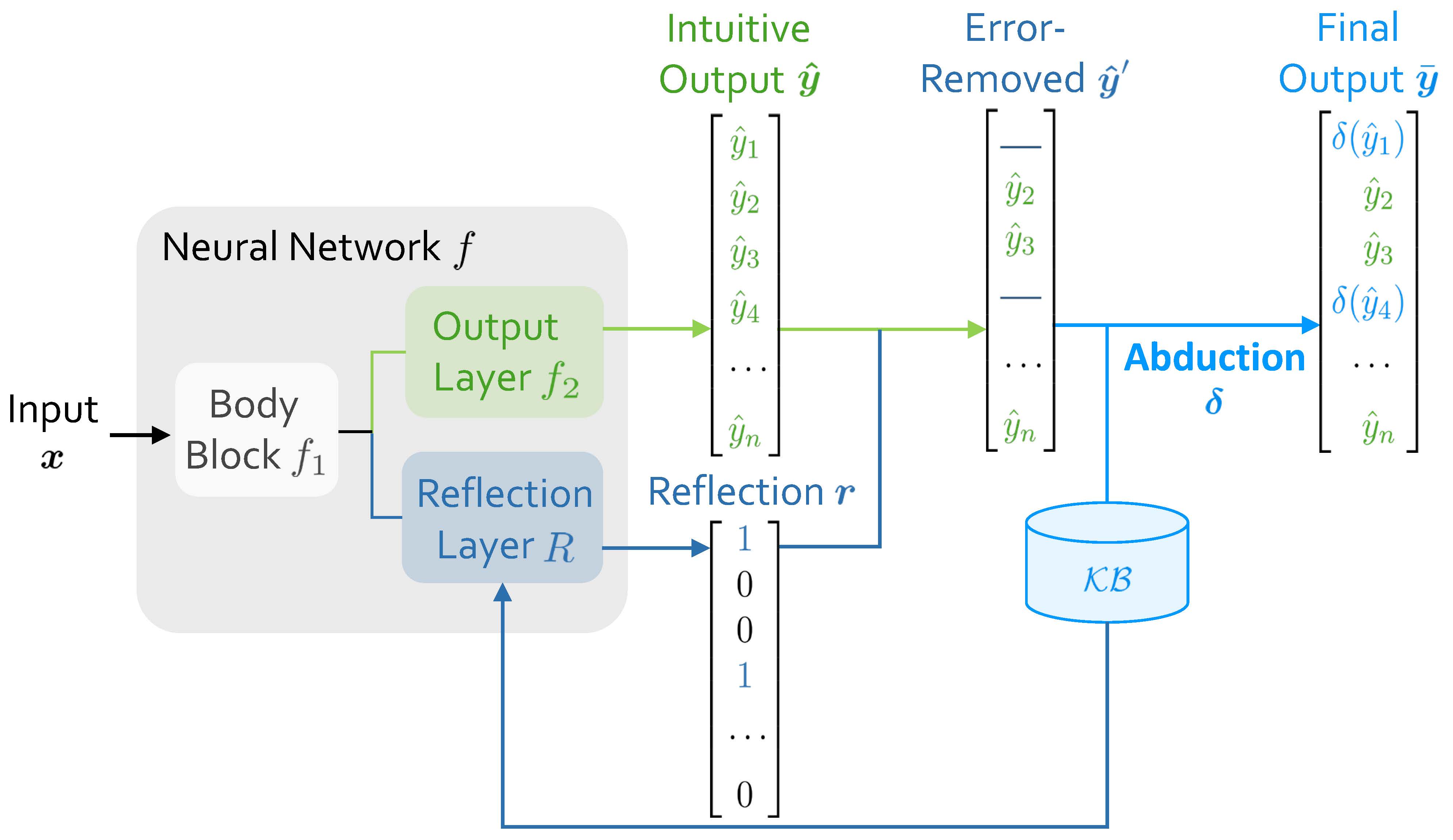

The diagram illustrates a multi-stage neural network architecture with error correction and knowledge integration. It shows the flow from input data through body processing, reflection, error removal, and abduction to produce a final output. Key components include body blocks, output layers, reflection mechanisms, and a knowledge base (KB).

### Components

1. **Input**: Labeled as "Input x" feeding into the system.

2. **Body Block (f₁)**: Processes input data.

3. **Output Layer (f₂)**: Generates "Intuitive Output ŷ" (ŷ₁ to ŷₙ).

4. **Reflection Layer (R)**: Produces a binary reflection vector **r** (1s and 0s).

5. **Error-Removed Output (ŷ')**: Derived from ŷ by removing the elements selected by the reflection vector **r**; removed positions are drawn as dashes.

6. **Abduction (δ)**: A function applied to ŷ' to integrate knowledge from KB.

7. **Final Output (ȳ)**: Result of δ(ŷ'), with elements δ(ŷ₁), δ(ŷ₂), etc.

8. **Knowledge Base (KB)**: A cylindrical component connected via abduction.
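The first stages of the component list can be sketched as a small forward pass. This is a minimal illustration, not the architecture's actual implementation: the layer sizes, the random weights, the single-layer ReLU body, the 0.5 threshold, and the choice to feed both f₂ and R from the body's features are all assumptions made for the example.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes: 5 output positions, 4 classes per position.
N_POS, N_CLASSES, D_IN, D_HID = 5, 4, 8, 16

# Random weights stand in for trained parameters (assumption).
W_body = 0.1 * rng.normal(size=(D_IN, D_HID))
W_out = 0.1 * rng.normal(size=(D_HID, N_POS * N_CLASSES))
W_refl = 0.1 * rng.normal(size=(D_HID, N_POS))


def body(x):
    """f1: feature extractor, here a single ReLU layer."""
    return np.maximum(x @ W_body, 0.0)


def output_layer(h):
    """f2: intuitive output, one class label per position."""
    scores = (h @ W_out).reshape(N_POS, N_CLASSES)
    return scores.argmax(axis=1)


def reflection_layer(h, threshold=0.5):
    """R: binary reflection vector, one flag per position."""
    probs = 1.0 / (1.0 + np.exp(-(h @ W_refl)))
    return (probs > threshold).astype(int)


x = rng.normal(size=D_IN)      # "Input x"
h = body(x)                    # shared features
y_hat = output_layer(h)        # intuitive output
r = reflection_layer(h)        # reflection vector
print(y_hat, r)
```

Both heads produce one entry per output position, which is what allows **r** to be read as a per-element mask over ŷ.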

### Detailed Analysis

- **Intuitive Output ŷ**: Contains n elements (ŷ₁ to ŷₙ), represented as a vertical list.

- **Error-Removed ŷ'**: Identical to ŷ but with one or more elements removed (e.g., ŷ₁ is omitted in the example).

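- **Reflection Vector r**: Binary vector (1, 0, 0, 1, ..., 0) with one entry per element of ŷ, indicating which elements are retained and which are removed. Since the first entry of **r** is 1 and ŷ₁ is the element omitted from ŷ', a 1 appears to mark an element for removal.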

- **Abduction δ**: Transforms ŷ' into the final output ȳ. The diagram labels the entries of ȳ per element, as δ(ŷ₁), δ(ŷ₂), and so on.

- **KB Connection**: The KB is linked to the abduction process, suggesting it provides contextual or corrective information.
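The error-removal step described above can be sketched as a simple masking operation. The sketch assumes that a 1 in **r** marks an element for removal (consistent with ŷ₁ being omitted in the example) and uses `None` as a stand-in for the dashes in the diagram; the function name and the sample values are hypothetical.

```python
def remove_flagged(y_hat, r):
    """Replace every element of y_hat whose flag in r is 1 with a blank (None)."""
    if len(y_hat) != len(r):
        raise ValueError("y_hat and r must have the same length")
    return [None if flag == 1 else y for y, flag in zip(y_hat, r)]


y_hat = [7, 3, 5, 2, 9]   # illustrative intuitive output
r = [1, 0, 0, 1, 0]       # illustrative reflection vector
y_prime = remove_flagged(y_hat, r)
print(y_prime)  # [None, 3, 5, None, 9]
```

Keeping blanks in place, rather than shortening the list, preserves the positions that a later step has to fill.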

### Key Observations

1. **Error Correction**: The reflection layer (R) and error-removed output (ŷ') suggest a mechanism to identify and remove erroneous predictions.

2. **Binary Reflection**: The reflection vector **r** uses binary values (1/0), possibly indicating selection or masking of specific outputs.

3. **Abduction Process**: The final output ȳ depends on both the error-removed ŷ' and the KB, implying knowledge integration.

4. **Function δ**: Its results are labeled per element (δ(ŷ₁), δ(ŷ₂), ...). Because δ is the abduction step and is connected to the KB, it is more plausibly a knowledge-driven correction than a generic normalization or activation.
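One way to picture abduction against a knowledge base is as a search for values that fill the removed positions while satisfying the KB's rules. The sketch below is an assumption-laden toy, not the method shown in the diagram: the KB is a hypothetical predicate (all values distinct and summing to 20), blanks are represented as `None`, candidates come from the digits 0 to 9, and the first consistent completion is returned.

```python
from itertools import product


def kb_consistent(y):
    """Toy knowledge base (hypothetical rule): values are distinct and sum to 20."""
    return len(set(y)) == len(y) and sum(y) == 20


def abduce(y_prime, kb, domain=range(10)):
    """Fill the blanks (None) in y_prime with the first assignment the KB accepts."""
    blanks = [i for i, v in enumerate(y_prime) if v is None]
    for candidate in product(domain, repeat=len(blanks)):
        y = list(y_prime)
        for i, v in zip(blanks, candidate):
            y[i] = v
        if kb(y):
            return y
    return None  # no completion is consistent with the KB


y_prime = [None, 3, 5, None, 9]
y_bar = abduce(y_prime, kb_consistent)
print(y_bar)  # [1, 3, 5, 2, 9]
```

Retained elements are never changed, so the network's unflagged predictions pass through untouched. The brute-force search is exponential in the number of blanks; a practical system would likely use a constraint or logic solver instead.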

### Interpretation

This architecture combines neural network prediction with error removal and knowledge-based refinement. The reflection layer appears to act as a hard mask rather than a soft attention mechanism: its binary vector **r** selects which elements of ŷ are kept and which are discarded. The abduction step then uses the KB to produce the final output ȳ from ŷ', suggesting a hybrid approach that balances data-driven predictions with domain-specific rules. In the diagram δ appears only at this final stage, so knowledge integration happens after the network's intuitive output has been filtered.