## Line Chart: Average Accuracy vs. Training Step for Different 'n' Values

### Overview

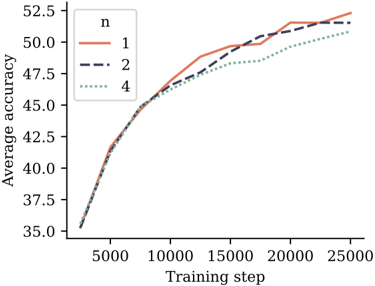

The image displays a line chart comparing the training progress of three different models or configurations, differentiated by a parameter labeled 'n'. The chart plots "Average accuracy" against "Training step," showing how performance evolves over the course of training for each configuration.

### Components/Axes

* **Chart Type:** Multi-line chart.

* **Y-Axis:**

    * **Label:** "Average accuracy"

    * **Scale:** Linear, ranging from 35.0 to 52.5.

    * **Major Ticks:** 35.0, 37.5, 40.0, 42.5, 45.0, 47.5, 50.0, 52.5.

* **X-Axis:**

    * **Label:** "Training step"

    * **Scale:** Linear, ranging from 0 to 25000.

    * **Major Ticks:** 0, 5000, 10000, 15000, 20000, 25000.

* **Legend:**

    * **Position:** Top-left corner of the plot area.

    * **Title:** "n"

    * **Series:**

        1. **n=1:** Represented by a solid orange line.

        2. **n=2:** Represented by a dark blue dashed line.

        3. **n=4:** Represented by a green dotted line.

### Detailed Analysis

The chart shows the learning curves for three configurations. All three lines begin at approximately the same point (Training step ~0, Average accuracy ~35.0) and follow a similar concave, roughly logarithmic shape: rapid initial improvement that gradually slows.

* **Trend for n=1 (Solid Orange Line):** This line shows the strongest overall growth. It rises steeply, crossing above the n=2 line around step 12,500. It continues to climb, ending at the highest final accuracy of approximately 52.5 at step 25,000.

* **Trend for n=2 (Dashed Dark Blue Line):** This line stays very close to n=1 for the first half of training, sitting at or marginally above it until the crossover near step 12,500. After that point it grows slightly more slowly than n=1 and finishes at an accuracy of approximately 51.5 at step 25,000.

* **Trend for n=4 (Dotted Green Line):** This line exhibits the slowest growth rate from the beginning. It consistently remains below the other two lines after the initial steps. It ends at the lowest final accuracy of approximately 51.0 at step 25,000.

**Approximate Data Points (Visual Estimation):**

* **At Step 5,000:** All lines are tightly clustered around an accuracy of 41.0-42.0.

* **At Step 15,000:**

    * n=1: ~49.5

    * n=2: ~49.0

    * n=4: ~48.0

* **At Step 25,000 (Final):**

    * n=1: ~52.5

    * n=2: ~51.5

    * n=4: ~51.0
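To make the gaps between configurations explicit, the following minimal Python sketch computes each configuration's shortfall relative to the best one at steps 15,000 and 25,000. The numbers are the visual estimates listed above, not exact measurements, and the names `ESTIMATES` and `gaps_to_best` are illustrative only.

```python
# Visually estimated accuracies read off the chart (approximate, not exact data).
# Outer key: training step. Inner key: value of n.
ESTIMATES = {
    15_000: {1: 49.5, 2: 49.0, 4: 48.0},
    25_000: {1: 52.5, 2: 51.5, 4: 51.0},
}


def gaps_to_best(step):
    """Return each configuration's shortfall relative to the best one at `step`."""
    values = ESTIMATES[step]
    best = max(values.values())
    return {n: round(best - acc, 2) for n, acc in values.items()}


for step in sorted(ESTIMATES):
    print(step, gaps_to_best(step))
```

Under these estimates, the gap between n=1 and n=2 grows from about 0.5 to about 1.0 points between the two checkpoints, while the gap between n=1 and n=4 stays near 1.5 points. Because the inputs are read off the plot by eye, differences of this size should be treated as indicative only.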

### Key Observations

1. **Performance Hierarchy:** Final accuracy decreases as 'n' increases: n=1 (~52.5) > n=2 (~51.5) > n=4 (~51.0). The total spread at step 25,000 is about 1.5 points, and with only three configurations this establishes an ordering rather than a precise functional relationship.

2. **Common Start, Later Divergence:** All three models start at the same performance level and show very similar progress for the first ~5,000 steps before their paths begin to diverge noticeably.

3. **Crossover Event:** The line for n=1 overtakes the line for n=2 somewhere between step 10,000 and step 15,000 (around step 12,500) and stays ahead at both later checkpoints (steps 15,000 and 25,000).

4. **Diminishing Returns:** All curves show classic diminishing returns; the gain in accuracy per training step decreases as training progresses.
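The diminishing-returns pattern can be checked numerically. The sketch below computes the average accuracy gain per 1,000 training steps for the n=1 curve over consecutive intervals. It uses the visually estimated values from this description; the step-5,000 value of 41.5 is assumed to be the midpoint of the reported 41.0-42.0 cluster, and the names `N1_POINTS` and `gain_per_1k_steps` are illustrative.

```python
# Estimated (training step, average accuracy) points for n=1, read off the chart.
# The 5,000-step value is an assumed midpoint of the 41.0-42.0 cluster.
N1_POINTS = [(0, 35.0), (5_000, 41.5), (15_000, 49.5), (25_000, 52.5)]


def gain_per_1k_steps(points):
    """Average accuracy gain per 1,000 steps over each consecutive interval."""
    rates = []
    for (s0, a0), (s1, a1) in zip(points, points[1:]):
        rates.append(round((a1 - a0) * 1000 / (s1 - s0), 3))
    return rates


rates = gain_per_1k_steps(N1_POINTS)
print(rates)  # [1.3, 0.8, 0.3]
```

Each interval's rate is lower than the previous one, falling from roughly 1.3 points per 1,000 steps early in training to roughly 0.3 in the final 10,000 steps, which is consistent with the flattening curve described above.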

### Interpretation

This chart likely illustrates the effect of a hyperparameter 'n' (which could represent model size, number of layers, ensemble size, or a regularization parameter) on the learning efficiency and final performance of a machine learning model.

The data suggests that for this specific task and training regime, a smaller 'n' (n=1) is more effective: after an initial period in which the curves are nearly indistinguishable, it learns faster and reaches a higher final accuracy, by roughly 1.0 point over n=2 and 1.5 points over n=4. The configuration with n=4 performs the worst, which could indicate issues like overfitting (if 'n' relates to model complexity), optimization difficulties, or that the added complexity is not beneficial for the given data. The chart alone cannot distinguish between these explanations.

The tight clustering at the start implies that the initial learning phase is dominated by factors common to all models (e.g., learning basic features), while the later divergence highlights how the 'n' parameter influences the models' capacity to learn more nuanced patterns and achieve higher performance. The crossover between n=1 and n=2 is particularly interesting, suggesting that the benefits of the n=1 configuration manifest more strongly in the later stages of training.