## Diagram: Text-Knowledge Alignment via LLMs

### Overview

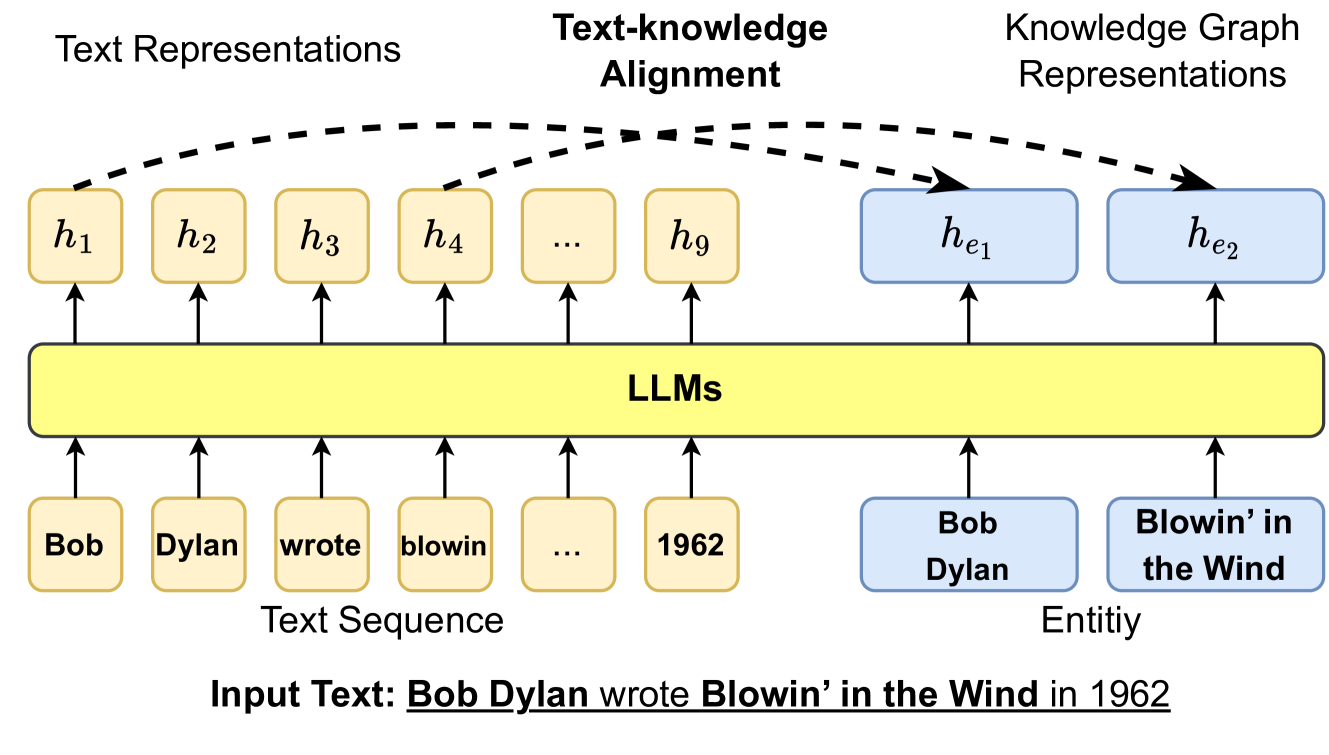

This technical diagram illustrates a process for aligning textual representations from a Large Language Model (LLM) with structured knowledge graph representations. It shows how an input sentence yields two parallel sets of representations, one for its text tokens and one for the named entities it mentions, together with a mechanism that aligns the two.

### Components/Axes

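The diagram is organized into three horizontal layers (titles, processing and representations, inputs) plus a footer line, and is split into two vertical columns: text on the left and knowledge graph entities on the right.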

**Top Layer (Titles):**

* **Left:** "Text Representations"

* **Center:** "Text-knowledge Alignment"

* **Right:** "Knowledge Graph Representations"

**Middle Layer (Processing & Representations):**

* **Central Block:** A large, yellow, horizontal rectangle labeled "**LLMs**". This is the core processing unit.

* **Left Column (Text Path):**

    * A series of yellow, rounded rectangles above the LLM block, labeled sequentially: **h₁**, **h₂**, **h₃**, **h₄**, **...**, **h₉**. These represent the hidden state vectors (text representations) output by the LLM for each input token.

    * Arrows point upward from the LLM block to each of these `h` boxes.

* **Right Column (Knowledge Graph Path):**

    * Two blue, rounded rectangles above the LLM block, labeled: **hₑ₁** and **hₑ₂**. These represent the hidden state vectors for knowledge graph entities.

    * Arrows point upward from the LLM block to each of these `hₑ` boxes.

* **Alignment Indicators:** Three dashed, black, curved arrows originate from the text representation boxes (**h₁**, **h₂**, **h₄**) and point towards the knowledge graph representation boxes (**hₑ₁**, **hₑ₂**). This visually depicts the "Text-knowledge Alignment" process.

**Bottom Layer (Inputs):**

* **Left Column (Text Sequence Input):**

    * A series of yellow, rounded rectangles below the LLM block, containing the tokenized input text: "**Bob**", "**Dylan**", "**wrote**", "**blowin**", "**...**", "**1962**".

    * The label "**Text Sequence**" is centered below this series.

    * Arrows point upward from each token box into the LLM block.

* **Right Column (Entity Input):**

    * Two blue, rounded rectangles below the LLM block, containing named entities: "**Bob Dylan**" and "**Blowin’ in the Wind**".

    * The label "**Entitiy**" (note: likely a typo for "Entity") is centered below this series.

    * Arrows point upward from each entity box into the LLM block.

**Footer (Example):**

* A line of text at the very bottom provides the concrete example being processed: "**Input Text: Bob Dylan wrote Blowin’ in the Wind in 1962**". The entities "Bob Dylan" and "Blowin’ in the Wind" are underlined in this sentence, corresponding to the blue entity boxes above.
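The relationship between the underlined entities and the token boxes can be made concrete with a short sketch. It assumes plain whitespace tokenization, which the diagram does not specify (a real LLM tokenizer would likely split differently), and `find_span` is a hypothetical helper introduced only for illustration.

```python
def find_span(tokens, mention):
    """Return (start, end) token indices, end exclusive, of the first
    occurrence of `mention` in `tokens`, or None if it does not occur."""
    mention_tokens = mention.split()
    n = len(mention_tokens)
    for i in range(len(tokens) - n + 1):
        if tokens[i:i + n] == mention_tokens:
            return (i, i + n)
    return None


# Example sentence and entities taken from the diagram's footer.
text = "Bob Dylan wrote Blowin’ in the Wind in 1962"
entities = ["Bob Dylan", "Blowin’ in the Wind"]

# Assumption: whitespace tokenization, one token per word.
tokens = text.split()

# Map each entity mention to the token positions it covers.
spans = {entity: find_span(tokens, entity) for entity in entities}

for entity, span in spans.items():
    print(entity, "->", span)
```

Under this assumption the sentence has nine tokens, in line with the `h₁` to `h₉` labels, and the second entity starts at the fourth token, which would be consistent with the dashed arrow drawn from `h₄`.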

### Detailed Analysis

The diagram depicts a dual-path processing flow:

1. **Text Processing Path (Left/Yellow):** The input sentence "Bob Dylan wrote Blowin’ in the Wind in 1962" is tokenized into a sequence (`Bob`, `Dylan`, `wrote`, `blowin`, ..., `1962`). Each token is fed into the LLM, which generates a contextualized text representation vector for it (`h₁` through `h₉`, matching the nine words of the example sentence).

2. **Knowledge Graph Entity Path (Right/Blue):** Pre-identified named entities from the same sentence ("Bob Dylan", "Blowin’ in the Wind") are fed into the same LLM block, which produces dedicated entity representation vectors (`hₑ₁`, `hₑ₂`). The diagram does not show whether the entities share an encoder pass with the text sequence or are encoded separately.

3. **Alignment Mechanism (Center/Top):** The core concept is the "Text-knowledge Alignment." Dashed arrows show that specific text representations (e.g., `h₁` for "Bob", `h₂` for "Dylan", `h₄` for "blowin") are being aligned or mapped to their corresponding entity representations (`hₑ₁` for "Bob Dylan", `hₑ₂` for "Blowin’ in the Wind"). This suggests a method to ground the LLM's textual understanding in structured knowledge.
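One way to read the dashed arrows is as a similarity comparison between pooled token vectors and entity vectors. The sketch below is only an illustration under stated assumptions: the diagram does not say how token states are combined or compared, so mean pooling and cosine similarity are choices made here, and the three-dimensional vectors are invented toy values rather than real model outputs.

```python
import math


def mean_pool(vectors):
    """Average a list of equal-length vectors component by component."""
    dim = len(vectors[0])
    return [sum(v[d] for v in vectors) / len(vectors) for d in range(dim)]


def cosine(a, b):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy hidden states (assumed values, not real LLM outputs).
h_1 = [0.9, 0.1, 0.0]   # "Bob"
h_2 = [0.7, 0.3, 0.0]   # "Dylan"
h_4 = [0.0, 0.2, 0.8]   # "blowin"
h_e1 = [1.0, 0.0, 0.0]  # entity "Bob Dylan"
h_e2 = [0.0, 0.0, 1.0]  # entity "Blowin’ in the Wind"

# Pool the token states that the dashed arrows attach to each entity.
mention_1 = mean_pool([h_1, h_2])
mention_2 = mean_pool([h_4])

# Score every mention against every entity.
scores = [[cosine(m, e) for e in (h_e1, h_e2)] for m in (mention_1, mention_2)]
for row in scores:
    print([round(s, 3) for s in row])
```

In a well-aligned model, each mention would score highest against its own entity, which is the pattern these toy vectors were chosen to show.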

### Key Observations

* **Color Coding:** Yellow is consistently used for text/sequence elements (tokens, text representations). Blue is used for knowledge graph/entity elements (entity names, entity representations).

* **Spatial Grounding:** The "Text-knowledge Alignment" title is centered above the dashed arrows, which themselves span the gap between the left (text) and right (knowledge) columns, clearly indicating the bridging function.

* **Typo:** The label under the blue entity boxes reads "**Entitiy**" instead of "Entity".

* **Abstraction:** The "..." placeholder appears in both the text sequence and the text representations. It abbreviates the middle tokens of the example and signals that the scheme is general rather than tied to this sentence's length.

### Interpretation

This diagram illustrates a method for **knowledge grounding** in Large Language Models. The core problem it addresses is that LLMs learn from text patterns but may not have a robust, structured understanding of real-world entities and facts.

The proposed solution involves:

1. **Dual Representation:** Creating parallel representations for both the raw text tokens and the formal knowledge graph entities mentioned in that text.

2. **Explicit Alignment:** Forcing or encouraging the model to align the hidden states of relevant text tokens (like "Bob" and "Dylan") with the hidden state of the corresponding knowledge entity ("Bob Dylan"). This acts as a bridge between the statistical patterns of language and a structured knowledge base.
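The diagram does not name a training objective for the alignment step. As one plausible reading, the sketch below uses a contrastive cross-entropy loss in which mention `i` should score highest against entity `i`. The function name `alignment_loss`, the dot-product scoring, and the `temperature` parameter are all assumptions made for illustration, not details taken from the figure.

```python
import math


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def alignment_loss(mentions, entities, temperature=1.0):
    """Mean cross-entropy over mentions, where mentions[i] is the pooled
    text representation that should match entities[i].

    Assumed objective for illustration; the diagram does not specify one.
    """
    total = 0.0
    for i, mention in enumerate(mentions):
        logits = [dot(mention, entity) / temperature for entity in entities]
        # Numerically stable log-sum-exp over all candidate entities.
        max_logit = max(logits)
        log_z = max_logit + math.log(sum(math.exp(l - max_logit) for l in logits))
        # Negative log-probability of the correct entity.
        total += log_z - logits[i]
    return total / len(mentions)


# Toy vectors (assumed values): each mention points toward its own entity.
mentions = [[1.0, 0.0], [0.0, 1.0]]
entities = [[1.0, 0.0], [0.0, 1.0]]

aligned = alignment_loss(mentions, entities)
# Swapping the entity order pairs every mention with the wrong entity.
misaligned = alignment_loss(mentions, entities[::-1])

print("aligned loss:", aligned)
print("misaligned loss:", misaligned)
```

Minimizing a loss of this shape would pull the hidden states of tokens such as "Bob" and "Dylan" toward the "Bob Dylan" entity vector and away from the other entities present in the input.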

The **significance** is that tying internal representations explicitly to a knowledge graph could make LLMs more factual, less prone to hallucination, and better at tasks that require precise knowledge, such as question answering or information extraction. The diagram presents this as a modular addition or objective within the LLM framework, highlighting the flow of information from raw input to aligned, knowledge-aware representations.