## Diagram: Text-Knowledge Alignment in LLMs

### Overview

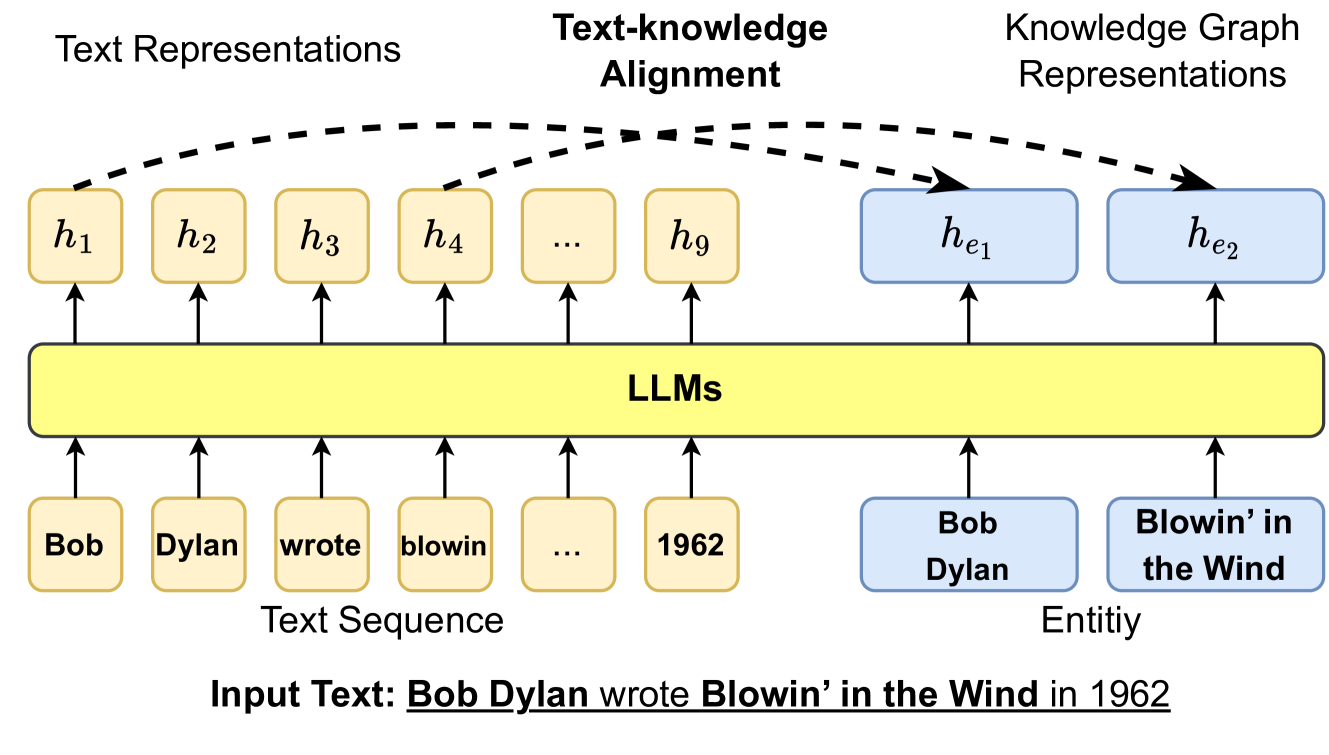

The diagram illustrates the process of text-knowledge alignment in Large Language Models (LLMs), showing how input text is processed into hidden representations, aligned with knowledge, and mapped to entity representations in a knowledge graph. The example input text is: "Bob Dylan wrote Blowin' in the Wind in 1962."

### Components/Axes

1. **Text Sequence**:

- Input tokens shown: `Bob`, `Dylan`, `wrote`, `blowin`, `1962`; the remaining tokens are elided as `...`.

- Hidden states: `h₁` to `h₉` (representing token-level text representations).

2. **Text-Knowledge Alignment**:

- A central yellow block labeled "LLMs" connects text representations (`h₁`–`h₉`) to knowledge graph representations.

- Dashed arrows indicate alignment between text and knowledge.

3. **Knowledge Graph Representations**:

- Entity representations: `h_e1` (Bob Dylan) and `h_e2` (Blowin' in the Wind).

- Arrows show mapping from aligned text to entities.

### Detailed Analysis

- **Text Representations**:

Each token in the input text is associated with a hidden state (`h₁`–`h₉`), forming a sequence of text embeddings.

- **Text-Knowledge Alignment**:

The central "LLMs" block represents the model processing the text sequence and aligning it with external knowledge.

- **Knowledge Graph Representations**:

Two entities are extracted:

- `h_e1`: "Bob Dylan" (linked to `h₁` and `h₂`).

- `h_e2`: "Blowin' in the Wind" (linked to `h₄` and `h₅`).

The year `1962` is not explicitly mapped to an entity in this diagram.
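The mapping from token-level hidden states to entity representations can be sketched in a few lines. The diagram does not say how `h_e1` and `h_e2` are computed from their linked tokens, so the mean pooling below is an assumption chosen for simplicity. The hidden-state values are made-up toy numbers, and the names `mean_pool` and `entity_spans` are hypothetical.

```python
from typing import Dict, List

Vector = List[float]


def mean_pool(hidden_states: List[Vector], positions: List[int]) -> Vector:
    """Average the hidden states at the given 1-based positions.

    Assumption: the diagram does not specify a pooling method;
    mean pooling is used here only as an illustration.
    """
    if not positions:
        raise ValueError("positions must not be empty")
    selected = [hidden_states[p - 1] for p in positions]
    dim = len(selected[0])
    return [sum(vec[i] for vec in selected) / len(selected) for i in range(dim)]


# Toy 2-dimensional hidden states standing in for h1..h9 (made-up values).
hidden_states: List[Vector] = [[float(i), float(i) * 2.0] for i in range(1, 10)]

# Token positions linked to each entity, as described for the diagram.
entity_spans: Dict[str, List[int]] = {
    "h_e1": [1, 2],  # "Bob Dylan"
    "h_e2": [4, 5],  # "Blowin' in the Wind"
}

entity_reprs: Dict[str, Vector] = {
    name: mean_pool(hidden_states, positions)
    for name, positions in entity_spans.items()
}

for name, vec in entity_reprs.items():
    print(name, vec)
```

No entry exists for the position of `1962`, which mirrors the diagram: that token has a hidden state but no entity representation.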

### Key Observations

- The diagram emphasizes the flow from raw text to structured knowledge, highlighting entity recognition.

- The year `1962` is part of the input text but does not have a corresponding entity representation in the knowledge graph.

- The alignment process is abstracted as a single step (dashed arrows), without granular details.

### Interpretation

This diagram shows how LLMs can bridge unstructured text and structured knowledge graphs. The alignment step (`h₁`–`h₉` → `h_e1`, `h_e2`) suggests that the model identifies entities in the text and links them to knowledge graph entries, which could support downstream tasks such as question answering or fact verification. The absence of a knowledge representation for `1962` may mean that temporal values require additional processing or are inferred from context; the diagram does not say which. The dashed arrows may indicate a soft or learned correspondence rather than a deterministic mapping, although the diagram itself does not define their meaning.
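One possible reading of the dashed alignment arrows is a similarity score between a text-side vector and candidate knowledge graph embeddings. The sketch below uses cosine similarity for that score. This is an assumption, since the diagram does not name a scoring function. The embeddings are toy values, and `best_match` is a hypothetical helper.

```python
import math
from typing import Dict, List

Vector = List[float]


def cosine_similarity(a: Vector, b: Vector) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def best_match(text_vec: Vector, kg_embeddings: Dict[str, Vector]) -> str:
    """Return the knowledge graph entity whose embedding is most similar."""
    return max(
        kg_embeddings,
        key=lambda name: cosine_similarity(text_vec, kg_embeddings[name]),
    )


# Toy knowledge graph embeddings (made-up values, not from the diagram).
kg_embeddings: Dict[str, Vector] = {
    "Bob Dylan": [1.0, 0.0],
    "Blowin' in the Wind": [0.0, 1.0],
}

# A toy text-side vector, e.g. a pooled span representation.
span_vec: Vector = [0.9, 0.1]
print(best_match(span_vec, kg_embeddings))
```

A score of this kind is graded rather than binary, which fits a soft alignment better than a hard lookup would.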

**Note**: No numerical data or trends are present; the diagram focuses on architectural components and conceptual flow.