## Bar Chart: FP16 vs. w8a8 Latency by Batch Size

### Overview

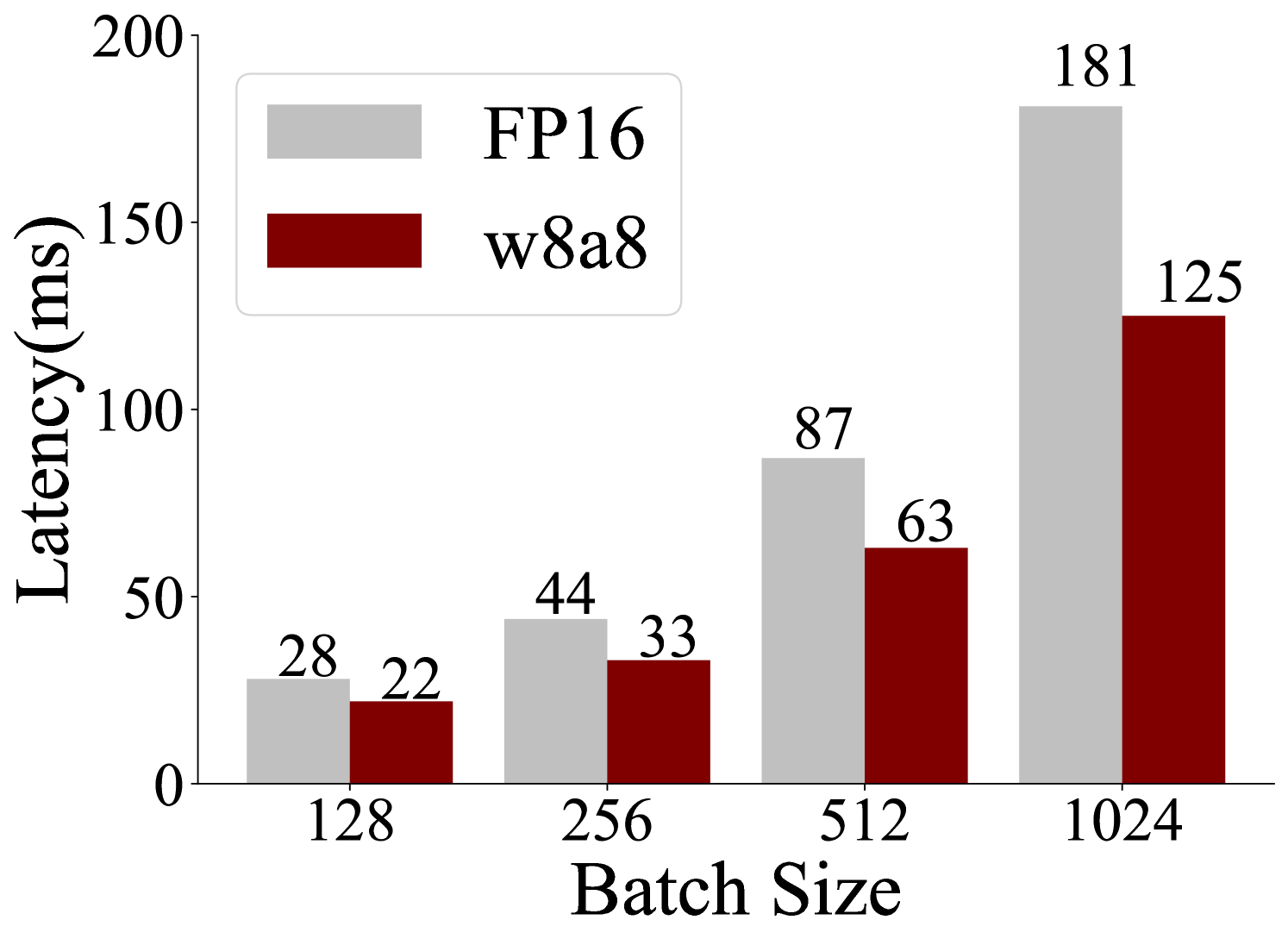

This is a grouped bar chart comparing the inference latency (in milliseconds) of two numerical precision formats, FP16 and w8a8 (8-bit weights and 8-bit activations), at four batch sizes that double at each step from 128 to 1024. The chart shows how latency scales with batch size for each format.

### Components/Axes

* **Chart Type:** Grouped vertical bar chart.

* **X-Axis (Horizontal):** Labeled "Batch Size". It has four discrete categories: `128`, `256`, `512`, and `1024`.

* **Y-Axis (Vertical):** Labeled "Latency(ms)". The scale runs from 0 to 200, with major tick marks at 0, 50, 100, 150, and 200.

* **Legend:** Located in the top-left quadrant of the chart area. It contains two entries:

  * A light gray rectangle labeled "FP16".

  * A dark red (maroon) rectangle labeled "w8a8".

* **Data Labels:** The exact latency value is printed above each bar.

### Detailed Analysis

The chart presents the following data points, confirmed by matching bar color to the legend and reading the labels:

**Batch Size = 128**

* **FP16 (Light Gray Bar):** 28 ms

* **w8a8 (Dark Red Bar):** 22 ms

**Batch Size = 256**

* **FP16 (Light Gray Bar):** 44 ms

* **w8a8 (Dark Red Bar):** 33 ms

**Batch Size = 512**

* **FP16 (Light Gray Bar):** 87 ms

* **w8a8 (Dark Red Bar):** 63 ms

**Batch Size = 1024**

* **FP16 (Light Gray Bar):** 181 ms

* **w8a8 (Dark Red Bar):** 125 ms

**Visual Trend Verification:**

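* **FP16 Series:** The light gray bars rise at every step. The increase from 128 to 256 is +16 ms, from 256 to 512 is +43 ms, and from 512 to 1024 is +94 ms. Because the batch size doubles at each step on a categorical axis, these growing increments do not by themselves indicate super-linear scaling: latency multiplies by about 1.57, 1.98, and 2.08 per step, so FP16 latency grows roughly in proportion to batch size, slightly faster than proportional at the largest step.

* **w8a8 Series:** The dark red bars follow the same pattern with smaller increments: +11 ms, +30 ms, and +62 ms for the same intervals (about 1.50, 1.91, and 1.98 times the previous value). w8a8 latency also grows roughly in proportion to batch size, but with a shallower slope than FP16.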
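The step increments above can be recomputed from the data labels with a minimal Python sketch. It also prints the ratio between consecutive latencies; since each step doubles the batch size, a ratio near 2.0 means latency is growing roughly in proportion to batch size. The names `LATENCY_MS`, `step_increments`, and `step_ratios` are illustrative only.

```python
# Latency values (ms) read from the chart's data labels.
BATCH_SIZES = [128, 256, 512, 1024]
LATENCY_MS = {
    "FP16": [28, 44, 87, 181],
    "w8a8": [22, 33, 63, 125],
}


def step_increments(values):
    """Absolute latency increase (ms) between consecutive batch sizes."""
    return [b - a for a, b in zip(values, values[1:])]


def step_ratios(values):
    """Latency ratio between consecutive batch sizes (each step doubles the batch)."""
    return [round(b / a, 2) for a, b in zip(values, values[1:])]


for name, values in LATENCY_MS.items():
    print(name, "increments:", step_increments(values), "ratios:", step_ratios(values))
```

For both series the ratios approach 2.0 at the larger batch sizes, which is what proportional (linear) scaling looks like when the batch size doubles per step.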

### Key Observations

1. **Consistent Performance Advantage:** For every batch size shown, the w8a8 format exhibits lower latency than the FP16 format.

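2. **Diverging Performance Gap:** The absolute latency difference between FP16 and w8a8 grows with batch size (6, 11, 24, and 56 ms at 128, 256, 512, and 1024), and the relative speedup of w8a8 grows with it.

   * At batch size 128, the difference is 6 ms, a speedup of about 1.27× (28 / 22).

   * At batch size 1024, the difference is 56 ms, a speedup of about 1.45× (181 / 125).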

3. **Scaling Behavior:** Both formats show latency rising roughly in proportion to batch size, but FP16 has the steeper slope. Between batch sizes 512 and 1024, FP16 adds about 0.18 ms per additional sample (94 ms / 512), while w8a8 adds about 0.12 ms (62 ms / 512).
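The gap, speedup, and scaling observations can be checked with a short Python sketch. It computes the per-batch gap and FP16/w8a8 latency ratio, then fits an ordinary least-squares line (latency ≈ intercept + slope × batch size) to each series; the slope is the marginal cost in milliseconds per sample. The helper `linear_fit` is a hypothetical name, and treating the four points as a straight line is an assumption made for illustration.

```python
# Latency values (ms) read from the chart's data labels.
BATCH_SIZES = [128, 256, 512, 1024]
FP16_MS = [28, 44, 87, 181]
W8A8_MS = [22, 33, 63, 125]


def linear_fit(xs, ys):
    """Ordinary least-squares fit ys ~ intercept + slope * xs; returns (intercept, slope)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


# Absolute gap (ms) and relative speedup (FP16 latency / w8a8 latency) per batch size.
gaps = [f - w for f, w in zip(FP16_MS, W8A8_MS)]
speedups = [round(f / w, 2) for f, w in zip(FP16_MS, W8A8_MS)]

# Marginal latency per sample (ms/sample) for each format.
_, fp16_slope = linear_fit(BATCH_SIZES, FP16_MS)
_, w8a8_slope = linear_fit(BATCH_SIZES, W8A8_MS)

print("gaps (ms):", gaps)
print("speedups:", speedups)
print(f"slope FP16: {fp16_slope:.3f} ms/sample, w8a8: {w8a8_slope:.3f} ms/sample")
```

On this data the fitted slopes come out to roughly 0.173 ms/sample for FP16 and 0.117 ms/sample for w8a8, so the widening gap is mostly a difference in slope rather than in fixed overhead.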

### Interpretation

For the workload measured here, the data suggests that the **w8a8 precision format offers lower latency and better scaling with batch size** than FP16, with the advantage growing as the computational load (batch size) increases. This matters for inference systems where both per-batch response time and throughput (samples processed per second) are constraints.
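Throughput is not plotted, but it can be derived from the same numbers. The sketch below assumes each reported latency is the wall-clock time to process one full batch, which the chart does not state explicitly; under that assumption, throughput is batch size divided by latency in seconds. `throughput_samples_per_s` is a hypothetical helper name.

```python
# Assumption: each latency is the wall-clock time to process one full batch.
BATCH_SIZES = [128, 256, 512, 1024]
LATENCY_MS = {"FP16": [28, 44, 87, 181], "w8a8": [22, 33, 63, 125]}


def throughput_samples_per_s(batch_size: int, latency_ms: float) -> float:
    """Samples processed per second if one batch takes latency_ms milliseconds."""
    return batch_size / (latency_ms / 1000.0)


throughput = {
    fmt: [throughput_samples_per_s(b, ms) for b, ms in zip(BATCH_SIZES, lat)]
    for fmt, lat in LATENCY_MS.items()
}

for fmt, values in throughput.items():
    print(fmt, [round(v) for v in values])
```

Under that assumption, w8a8 processes more samples per second at every batch size (8,192 vs. about 5,657 samples/s at batch 1024), and in this data FP16 throughput stops improving beyond batch 512 while w8a8 throughput still creeps up.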

The widening gap indicates that the efficiency benefits of w8a8 become more pronounced under heavier load. The chart does not identify the cause; plausible contributors include reduced memory traffic (8-bit weights and activations occupy half the bytes of their FP16 counterparts), better cache utilization, and higher-throughput INT8 arithmetic on hardware that supports it. For a system designer, this chart provides a quantitative argument for adopting w8a8 (or similar quantization schemes) to handle larger batches without incurring the same latency penalty as FP16, provided the model's accuracy after quantization is acceptable, which this chart does not measure. More broadly, it shows that numerical precision is a lever for performance engineering, not only a choice about accuracy.
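To make the "8-bit weights and 8-bit activations" arithmetic concrete, here is a minimal pure-Python sketch of a w8a8 matrix multiply. The chart does not say which quantization scheme was used; this assumes the simplest one, symmetric per-tensor INT8 quantization (one scale per tensor, no zero-point), with integer accumulation followed by a single rescale. All function names are hypothetical, and pure Python shows only the numerics, not the speedup, which in practice comes from INT8 kernels on supporting hardware.

```python
# Assumption: symmetric, per-tensor INT8 quantization for both weights and activations.
def quantize_symmetric(matrix, num_bits=8):
    """Map floats to signed integers in [-qmax, qmax] using one scale for the whole tensor."""
    qmax = 2 ** (num_bits - 1) - 1  # 127 for 8 bits
    max_abs = max(abs(v) for row in matrix for v in row)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    q = [[max(-qmax, min(qmax, round(v / scale))) for v in row] for row in matrix]
    return q, scale


def matmul(a, b):
    """Plain matrix product; with integer inputs, Python ints stand in for int32 accumulators."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def w8a8_matmul(activations, weights):
    """Quantize both operands, multiply in integers, then rescale once to float."""
    qa, scale_a = quantize_symmetric(activations)
    qw, scale_w = quantize_symmetric(weights)
    acc = matmul(qa, qw)
    return [[v * scale_a * scale_w for v in row] for row in acc]


# Illustrative inputs: a 2x3 activation matrix and a 3x2 weight matrix.
A = [[0.5, -1.2, 0.3], [2.0, 0.1, -0.7]]
W = [[0.2, -0.4], [0.9, 0.05], [-0.3, 0.6]]

approx = w8a8_matmul(A, W)
exact = matmul(A, W)
max_err = max(abs(x - y) for ra, re in zip(approx, exact) for x, y in zip(ra, re))
print("w8a8:", approx)
print("float:", exact)
print("max abs error:", max_err)
```

The result tracks the floating-point product to within a small quantization error, while each stored operand takes one byte per element instead of FP16's two.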