# Technical Analysis of Model Performance Across Benchmarks

## Overview

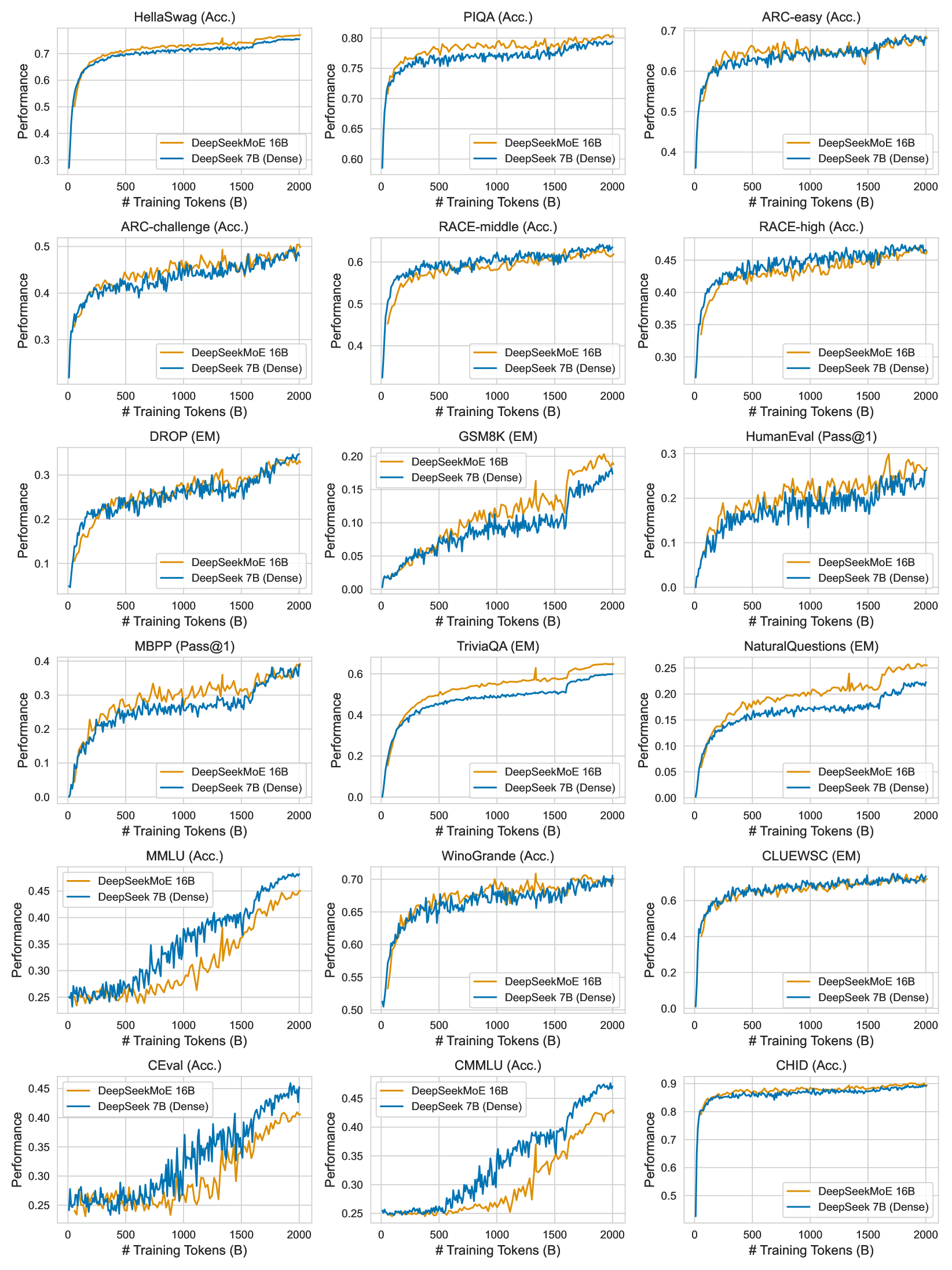

The image contains a grid of line graphs, one per benchmark (18 are described below), comparing the performance of two models:

- **DeepSeekMoE 16B** (orange line)

- **DeepSeek 7B (Dense)** (blue line)

Each graph plots one benchmark's score against the number of training tokens (in billions).

---

## Key Trends and Data Points

### 1. **HellaSwag (Acc.)**

- **DeepSeekMoE 16B**: Rapidly increases from ~0.3 to ~0.75 accuracy, plateauing near 0.75.

- **DeepSeek 7B (Dense)**: Slower rise, reaching ~0.65 accuracy, with minor fluctuations.

### 2. **PIQA (Acc.)**

- **DeepSeekMoE 16B**: Steep initial gain to ~0.75, stabilizing near 0.8.

- **DeepSeek 7B (Dense)**: Gradual improvement to ~0.75, with minor oscillations.

### 3. **ARC-easy (Acc.)**

- **DeepSeekMoE 16B**: Consistent lead, peaking at ~0.7.

- **DeepSeek 7B (Dense)**: Slightly lower (~0.65), with similar stability.

### 4. **ARC-challenge (Acc.)**

- **DeepSeekMoE 16B**: Higher performance (~0.5), with sharper initial gains.

- **DeepSeek 7B (Dense)**: Lower (~0.45), with gradual improvement.

### 5. **RACE-middle (Acc.)**

- **DeepSeekMoE 16B**: Peaks at ~0.6, with minor fluctuations.

- **DeepSeek 7B (Dense)**: Slightly lower (~0.55), with similar trends.

### 6. **RACE-high (Acc.)**

- **DeepSeekMoE 16B**: Higher (~0.45), with sharper initial gains.

- **DeepSeek 7B (Dense)**: Lower (~0.4), with gradual improvement.

### 7. **DROP (EM)**

- **DeepSeekMoE 16B**: Peaks at ~0.3, with minor oscillations.

- **DeepSeek 7B (Dense)**: Slightly lower (~0.25), with similar trends.

### 8. **GSM8K (EM)**

- **DeepSeekMoE 16B**: Peaks at ~0.2, with minor fluctuations.

- **DeepSeek 7B (Dense)**: Slightly lower (~0.15), with gradual improvement.

### 9. **HumanEval (Pass@1)**

- **DeepSeekMoE 16B**: Peaks at ~0.3, with minor oscillations.

- **DeepSeek 7B (Dense)**: Slightly lower (~0.25), with similar trends.

### 10. **MBPP (Pass@1)**

- **DeepSeekMoE 16B**: Peaks at ~0.4, with minor fluctuations.

- **DeepSeek 7B (Dense)**: Slightly lower (~0.35), with gradual improvement.

### 11. **TriviaQA (EM)**

- **DeepSeekMoE 16B**: Peaks at ~0.6, with minor oscillations.

- **DeepSeek 7B (Dense)**: Slightly lower (~0.5), with gradual improvement.

### 12. **NaturalQuestions (EM)**

- **DeepSeekMoE 16B**: Peaks at ~0.25, with minor fluctuations.

- **DeepSeek 7B (Dense)**: Slightly lower (~0.2), with gradual improvement.

### 13. **CLUEWSC (EM)**

- **DeepSeekMoE 16B**: Peaks at ~0.6, with minor oscillations.

- **DeepSeek 7B (Dense)**: Slightly lower (~0.55), with similar trends.

### 14. **MMLU (Acc.)**

- **DeepSeekMoE 16B**: Sharp rise to ~0.45, with minor fluctuations.

- **DeepSeek 7B (Dense)**: Gradual improvement to ~0.4, with similar trends.

### 15. **WinoGrande (Acc.)**

- **DeepSeekMoE 16B**: Peaks at ~0.7, with minor oscillations.

- **DeepSeek 7B (Dense)**: Slightly lower (~0.65), with gradual improvement.

### 16. **C-Eval (Acc.)**

- **DeepSeekMoE 16B**: Peaks at ~0.45, with minor fluctuations.

- **DeepSeek 7B (Dense)**: Slightly lower (~0.4), with gradual improvement.

### 17. **CMMLU (Acc.)**

- **DeepSeekMoE 16B**: Peaks at ~0.45, with minor fluctuations.

- **DeepSeek 7B (Dense)**: Slightly lower (~0.4), with gradual improvement.

### 18. **CHiD (Acc.)**

- **DeepSeekMoE 16B**: Peaks at ~0.9, with minor oscillations.

- **DeepSeek 7B (Dense)**: Slightly lower (~0.85), with gradual improvement.

---

## Legend and Axis Labels

- **X-axis**: `# Training Tokens (B)` (0 to 2000 in increments of 500).

- **Y-axis**: `Performance` (0 to 1.0 in increments of 0.1).

- **Legend**:

  - **Orange**: DeepSeekMoE 16B

  - **Blue**: DeepSeek 7B (Dense)

---

## Observations

1. **Performance Gap**: DeepSeekMoE 16B scores higher than DeepSeek 7B (Dense) on every benchmark described above, typically by about 0.05.

2. **Training Efficiency**: Both models show diminishing returns toward the end of the plotted range (~1500–2000B training tokens), where the curves plateau.

3. **Benchmark Variability**: The gap varies by task: roughly 0.10 on HellaSwag and TriviaQA, and about 0.05 on the other benchmarks.
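
The gap comparison in these observations can be checked with a short script. The sketch below is only an illustration: it assumes the approximate plateau values quoted under Key Trends (read off the graphs, so accurate to roughly ±0.05), and the names `FINAL_SCORES`, `score_gaps`, and `largest_gaps` are hypothetical helpers, not part of any published tooling.

```python
# Approximate plateau values transcribed from the Key Trends section.
# Each entry is (DeepSeekMoE 16B, DeepSeek 7B Dense). These are visual
# estimates from the graphs, not exact reported scores.
FINAL_SCORES = {
    "HellaSwag": (0.75, 0.65),
    "PIQA": (0.80, 0.75),
    "ARC-easy": (0.70, 0.65),
    "ARC-challenge": (0.50, 0.45),
    "RACE-middle": (0.60, 0.55),
    "RACE-high": (0.45, 0.40),
    "DROP": (0.30, 0.25),
    "GSM8K": (0.20, 0.15),
    "HumanEval": (0.30, 0.25),
    "MBPP": (0.40, 0.35),
    "TriviaQA": (0.60, 0.50),
    "NaturalQuestions": (0.25, 0.20),
    "CLUEWSC": (0.60, 0.55),
    "MMLU": (0.45, 0.40),
    "WinoGrande": (0.70, 0.65),
    "C-Eval": (0.45, 0.40),
    "CMMLU": (0.45, 0.40),
    "CHiD": (0.90, 0.85),
}


def score_gaps(scores):
    """Return the MoE-minus-dense gap per benchmark, rounded to 2 decimals."""
    return {name: round(moe - dense, 2) for name, (moe, dense) in scores.items()}


def largest_gaps(scores, min_gap=0.10):
    """Return the benchmark names whose gap is at least min_gap, sorted by name."""
    gaps = score_gaps(scores)
    return sorted(name for name, gap in gaps.items() if gap >= min_gap)


if __name__ == "__main__":
    for name, gap in score_gaps(FINAL_SCORES).items():
        print(f"{name:18s} gap = {gap:+.2f}")
    print("Largest gaps:", largest_gaps(FINAL_SCORES))
```

Because the inputs are estimates, differences of 0.05 are within reading error and should be treated as indicative rather than conclusive.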