TECHNICAL ASSET FINGERPRINT

eddee48fd282fd08b8811d73

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

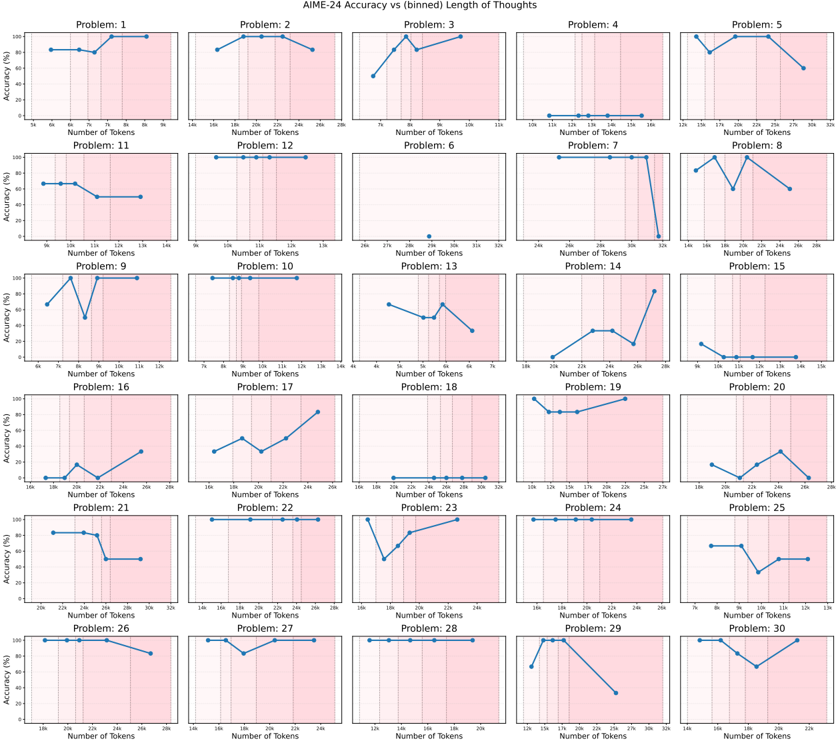

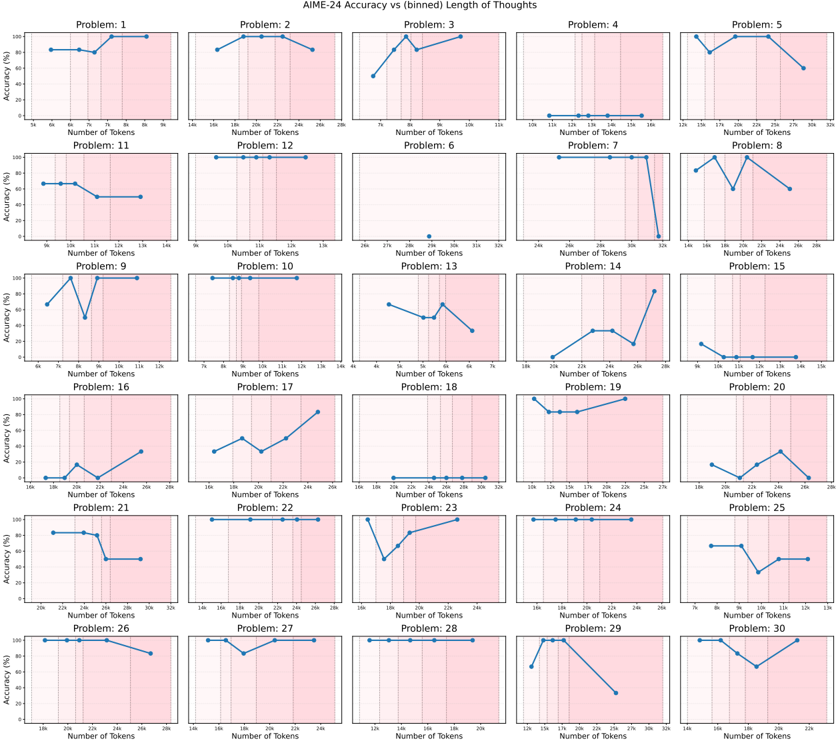

## Chart: AIME-24 Accuracy vs (binned) Length of Thoughts

### Overview

The image presents a series of 30 line charts arranged in a 6x5 grid. Each chart displays the accuracy (%) on the y-axis versus the number of tokens (in thousands) on the x-axis for a specific problem from AIME-24. The x-axis is divided into bins, and the background of each chart is shaded with a gradient, transitioning from white to light pink.
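The description does not say how the bins behind each panel were built. As a minimal sketch, assuming each problem comes with sampled solutions recorded as hypothetical `(token_count, is_correct)` pairs and fixed-width bins of `bin_width` tokens (both are assumptions, not details from the chart), per-bin accuracy could be computed like this:

```python
from collections import defaultdict


def binned_accuracy(samples, bin_width=1000):
    """Group (token_count, is_correct) samples into fixed-width token bins.

    Returns a sorted list of (bin_start, accuracy_percent, n_samples).
    The sample format and the bin width are assumptions for illustration.
    """
    totals = defaultdict(int)
    correct = defaultdict(int)
    for token_count, is_correct in samples:
        bin_start = (token_count // bin_width) * bin_width
        totals[bin_start] += 1
        correct[bin_start] += int(is_correct)
    return [
        (start, 100.0 * correct[start] / totals[start], totals[start])
        for start in sorted(totals)
    ]


# Hypothetical samples for one problem: (thought length in tokens, solved?)
samples = [(5200, True), (5900, False), (6100, True), (6800, True), (7400, True)]
for start, acc, n in binned_accuracy(samples):
    print(f"{start // 1000}k-{start // 1000 + 1}k: {acc:.0f}% (n={n})")
```

Returning the sample count with each bin makes thinly populated bins easy to spot when reading a curve.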

### Components/Axes

* **Title:** AIME-24 Accuracy vs (binned) Length of Thoughts

* **X-axis:** Number of Tokens (in thousands, denoted by 'k')

* The x-axis range differs between charts; across the grid the listed tick values run from roughly 4k to 32k tokens.

* Example x-axis markers: 5k, 6k, 7k, 8k, 9k (Problem 1); 14k, 16k, 18k, 20k, 22k, 24k, 26k (Problem 2)

* **Y-axis:** Accuracy (%)

* Scale: 0 to 100

* Markers: 0, 20, 40, 60, 80, 100

* **Chart Titles:** Problem: 1, Problem: 2, ..., Problem: 30 (located at the top of each individual chart)

* **Background:** Gradient shading from white to light pink.

### Detailed Analysis

Each chart represents a problem, and the line shows how accuracy changes with the number of tokens used.

**Problem 1:**

* Trend: Accuracy is high (around 80-90%) for lower token counts (5k-7k), then increases to 100% around 8k-9k.

* Data Points: Approximately (5k, 85%), (6k, 85%), (7k, 90%), (8k, 100%), (9k, 100%)

**Problem 2:**

* Trend: Accuracy starts high (around 90-95%) at 14k tokens, peaks at 100% around 18k-20k, then decreases slightly to around 90% at 26k tokens.

* Data Points: Approximately (14k, 95%), (16k, 95%), (18k, 100%), (20k, 100%), (22k, 95%), (24k, 90%), (26k, 90%)

**Problem 3:**

* Trend: Accuracy increases from approximately 50% at 7k tokens to nearly 100% at 9k tokens, then remains high at 100% until 11k tokens.

* Data Points: Approximately (7k, 50%), (8k, 90%), (9k, 100%), (11k, 100%)

**Problem 4:**

* Trend: Accuracy remains very low (near 0%) across all token counts.

* Data Points: Approximately (10k, 0%), (11k, 0%), (12k, 0%), (13k, 0%), (14k, 0%), (15k, 0%), (16k, 0%), (17k, 0%), (18k, 0%), (19k, 0%), (20k, 0%), (21k, 0%), (22k, 0%), (23k, 0%), (24k, 0%), (25k, 0%), (26k, 0%), (27k, 0%), (28k, 0%), (29k, 0%), (30k, 0%), (31k, 0%), (32k, 0%)

**Problem 5:**

* Trend: Accuracy increases from approximately 60% at 18k tokens to nearly 100% at 20k tokens, then remains high at 100% until 30k tokens.

* Data Points: Approximately (18k, 60%), (20k, 95%), (22k, 100%), (24k, 100%), (26k, 100%), (28k, 100%), (30k, 100%)

**Problem 6:**

* Trend: Accuracy remains very low (near 0%) across all token counts.

* Data Points: Approximately (27k, 0%), (28k, 0%), (29k, 0%), (30k, 0%), (31k, 0%), (32k, 0%)

**Problem 7:**

* Trend: Accuracy is high (100%) through 31k tokens, then drops to 0% at 32k tokens.

* Data Points: Approximately (26k, 100%), (27k, 100%), (28k, 100%), (29k, 100%), (30k, 100%), (31k, 100%), (32k, 0%)

**Problem 8:**

* Trend: Accuracy fluctuates, starting around 60% at 12k tokens, peaking at 100% around 22k tokens, and ending around 80% at 26k tokens.

* Data Points: Approximately (12k, 60%), (14k, 70%), (16k, 90%), (18k, 80%), (20k, 90%), (22k, 100%), (24k, 90%), (26k, 80%)

**Problem 9:**

* Trend: Accuracy increases sharply from approximately 70% at 6k tokens to 100% at 8k tokens, then remains high at 100% until 12k tokens.

* Data Points: Approximately (6k, 70%), (7k, 90%), (8k, 100%), (9k, 100%), (10k, 100%), (11k, 100%), (12k, 100%)

**Problem 10:**

* Trend: Accuracy remains high (100%) across all token counts.

* Data Points: Approximately (7k, 100%), (8k, 100%), (9k, 100%), (10k, 100%), (11k, 100%), (12k, 100%), (13k, 100%)

**Problem 11:**

* Trend: Accuracy starts high (around 70%) at 10k tokens, then decreases to around 50% at 12k tokens, and remains at 50% until 14k tokens.

* Data Points: Approximately (10k, 70%), (11k, 60%), (12k, 50%), (13k, 50%), (14k, 50%)

**Problem 12:**

* Trend: Accuracy remains high (100%) across all token counts.

* Data Points: Approximately (10k, 100%), (11k, 100%), (12k, 100%), (13k, 100%)

**Problem 13:**

* Trend: Accuracy decreases from approximately 80% at 4k tokens to 40% at 5k tokens, recovers to 60% at 6k tokens, then slips to about 50% at 7k tokens.

* Data Points: Approximately (4k, 80%), (5k, 40%), (6k, 60%), (7k, 50%)

**Problem 14:**

* Trend: Accuracy increases from approximately 0% at 20k tokens to 80% at 26k tokens.

* Data Points: Approximately (20k, 0%), (22k, 20%), (24k, 40%), (26k, 80%)

**Problem 15:**

* Trend: Accuracy remains very low (near 0%) across all token counts.

* Data Points: Approximately (9k, 0%), (10k, 0%), (11k, 0%), (12k, 0%), (13k, 0%), (14k, 0%), (15k, 0%)

**Problem 16:**

* Trend: Accuracy remains low (between 0% and about 20%) across all token counts, with a small bump around 18k tokens.

* Data Points: Approximately (16k, 0%), (17k, 10%), (18k, 20%), (19k, 10%), (20k, 0%)

**Problem 17:**

* Trend: Accuracy increases from approximately 40% at 16k tokens to 80% at 24k tokens.

* Data Points: Approximately (16k, 40%), (18k, 60%), (20k, 50%), (22k, 70%), (24k, 80%)

**Problem 18:**

* Trend: Accuracy remains very low (near 0%) across all token counts.

* Data Points: Approximately (16k, 0%), (18k, 0%), (20k, 0%), (22k, 0%), (24k, 0%), (26k, 0%), (28k, 0%), (30k, 0%), (32k, 0%)

**Problem 19:**

* Trend: Accuracy remains relatively constant at approximately 80% across all token counts.

* Data Points: Approximately (12k, 80%), (14k, 80%), (16k, 80%), (18k, 80%), (20k, 80%), (22k, 80%), (24k, 80%), (26k, 80%), (28k, 80%)

**Problem 20:**

* Trend: Accuracy fluctuates, starting around 10% at 18k tokens, peaking at 30% around 24k tokens, and ending around 10% at 28k tokens.

* Data Points: Approximately (18k, 10%), (20k, 20%), (22k, 20%), (24k, 30%), (26k, 20%), (28k, 10%)

**Problem 21:**

* Trend: Accuracy is high (around 80-90%) for lower token counts (20k-24k), then decreases to 50% around 26k-32k.

* Data Points: Approximately (20k, 90%), (22k, 90%), (24k, 80%), (26k, 50%), (28k, 50%), (30k, 50%), (32k, 50%)

**Problem 22:**

* Trend: Accuracy remains high (100%) across all token counts.

* Data Points: Approximately (20k, 100%), (22k, 100%), (24k, 100%), (26k, 100%)

**Problem 23:**

* Trend: Accuracy increases from approximately 40% at 16k tokens to 100% at 20k tokens, then remains high at 100% until 26k tokens.

* Data Points: Approximately (16k, 40%), (18k, 80%), (20k, 100%), (22k, 100%), (24k, 100%), (26k, 100%)

**Problem 24:**

* Trend: Accuracy remains high (100%) across all token counts.

* Data Points: Approximately (12k, 100%), (13k, 100%)

**Problem 25:**

* Trend: Accuracy decreases from approximately 80% at 9k tokens to 20% at 11k tokens, then increases to 40% at 12k tokens, and decreases to 30% at 13k tokens.

* Data Points: Approximately (9k, 80%), (10k, 60%), (11k, 20%), (12k, 40%), (13k, 30%)

**Problem 26:**

* Trend: Accuracy decreases from approximately 100% at 14k tokens to 80% at 20k tokens.

* Data Points: Approximately (14k, 100%), (16k, 90%), (18k, 90%), (20k, 80%)

**Problem 27:**

* Trend: Accuracy remains high (100%) across all token counts.

* Data Points: Approximately (20k, 100%), (22k, 100%), (24k, 100%), (26k, 100%)

**Problem 28:**

* Trend: Accuracy remains high (100%) across all token counts.

* Data Points: Approximately (12k, 100%), (14k, 100%)

**Problem 29:**

* Trend: Accuracy increases from approximately 0% at 16k tokens to 100% at 18k tokens, then decreases to 20% at 32k tokens.

* Data Points: Approximately (16k, 0%), (17k, 80%), (18k, 100%), (20k, 90%), (22k, 80%), (24k, 70%), (26k, 60%), (28k, 50%), (30k, 40%), (32k, 20%)

**Problem 30:**

* Trend: Accuracy decreases from approximately 90% at 12k tokens to 40% at 22k tokens.

* Data Points: Approximately (12k, 90%), (15k, 80%), (17k, 70%), (20k, 50%), (22k, 40%)

### Key Observations

* For some problems (e.g., 4, 6, 15, 16, 18), the accuracy remains consistently low regardless of the number of tokens used.

* For other problems (e.g., 10, 12, 22, 24, 27, 28), the accuracy remains consistently high regardless of the number of tokens used.

* Some problems show a clear trend of increasing accuracy with more tokens (e.g., 14, 17, 23). Problem 29 rises sharply at first but then declines steadily as token counts grow.

* Other problems show a trend of decreasing accuracy with more tokens (e.g., 21, 26, 30).

* Some problems show a more complex relationship between accuracy and token count, with accuracy fluctuating (e.g., 8, 13, 20, 25).
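The groupings above can be reproduced mechanically from the approximate data points listed in the detailed analysis. The following is a minimal sketch, not the method used to produce the chart; the thresholds `flat_tol` and `slack` and the label names are arbitrary choices made for illustration:

```python
def classify_trend(points, flat_tol=10, slack=10):
    """Label a series of (tokens_in_thousands, accuracy_percent) points.

    flat_tol: largest max-min spread still treated as flat.
    slack: largest single step against the overall direction that is tolerated.
    Both thresholds are assumptions chosen for this sketch.
    """
    acc = [a for _, a in points]
    if max(acc) - min(acc) <= flat_tol:
        return "flat-high" if min(acc) >= 50 else "flat-low"
    steps = [b - a for a, b in zip(acc, acc[1:])]
    if acc[-1] > acc[0] and min(steps) >= -slack:
        return "increasing"
    if acc[-1] < acc[0] and max(steps) <= slack:
        return "decreasing"
    return "mixed"


# Approximate data points copied from the per-problem breakdown above.
problems = {
    10: [(7, 100), (8, 100), (9, 100), (10, 100), (11, 100), (12, 100), (13, 100)],
    14: [(20, 0), (22, 20), (24, 40), (26, 80)],
    15: [(9, 0), (10, 0), (11, 0), (12, 0), (13, 0), (14, 0), (15, 0)],
    25: [(9, 80), (10, 60), (11, 20), (12, 40), (13, 30)],
    30: [(12, 90), (15, 80), (17, 70), (20, 50), (22, 40)],
}
for number, points in problems.items():
    print(f"Problem {number}: {classify_trend(points)}")
```

Because the inputs are values read off a chart, labels near a threshold should be treated as indicative only.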

### Interpretation

The charts suggest that the relationship between the length of thoughts (measured by the number of tokens) and the model's accuracy on AIME-24 varies significantly from problem to problem.

* For some problems, increasing the length of thoughts consistently improves accuracy, suggesting that more detailed reasoning is beneficial.

* For other problems, increasing the length of thoughts has little to no impact on accuracy, suggesting that the problem may be inherently difficult or that the solver is unable to effectively utilize the additional information.

* In some cases, increasing the length of thoughts may even decrease accuracy, suggesting that the solver may be getting confused or distracted by irrelevant information.

The variability in these relationships highlights the complexity of the AIME-24 problems and the challenges of developing a general-purpose solver that can effectively handle different types of reasoning tasks. The pink shading does not appear to correlate with any specific trend or data point. It seems to be a visual element to separate the data.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Line Charts: AIME-24 Accuracy vs. (binned) Length of Thoughts

### Overview

The image presents a grid of 30 individual line charts. Each chart plots accuracy (%) on AIME-24 against the number of tokens used, for a specific "Problem" (numbered 1 through 30). The x-axis represents the number of tokens, binned into ranges, and the y-axis represents the accuracy as a percentage. Each chart displays a single data series represented by a blue line with purple markers.

### Components/Axes

* **Title:** "AIME-24 Accuracy vs (binned) Length of Thoughts" - positioned at the top-center of the image.

* **X-axis Label:** "Number of Tokens" - present on all charts.

* **Y-axis Label:** "Accuracy (%)" - present on all charts.

* **Problem Labels:** Each chart is labeled with "Problem: [Number]" (1-30) in the top-left corner.

* **Data Series:** Each chart contains a single blue line with purple markers representing the accuracy for that problem.

* **X-axis Bins:** The x-axis is binned, with approximate values of 16n, 70n, 130n, 190n, 250n, 310n.

* **Y-axis Scale:** The y-axis ranges from approximately 0% to 100% on all charts.

### Detailed Analysis or Content Details

Here's a breakdown of each chart, noting the general trend and approximate data points. Due to the binning of the x-axis, values are approximate.

* **Problem 1:** Line is relatively flat, hovering around 80%. Accuracy is approximately 82% at 16n, 78% at 70n, 80% at 130n, 82% at 190n, 80% at 250n, 82% at 310n.

* **Problem 2:** Line slopes upward. Accuracy is approximately 60% at 16n, 70% at 70n, 80% at 130n, 85% at 190n, 90% at 250n, 95% at 310n.

* **Problem 3:** Line slopes upward. Accuracy is approximately 50% at 16n, 65% at 70n, 75% at 130n, 80% at 190n, 85% at 250n, 90% at 310n.

* **Problem 4:** Line slopes upward. Accuracy is approximately 40% at 16n, 55% at 70n, 65% at 130n, 75% at 190n, 80% at 250n, 85% at 310n.

* **Problem 5:** Line slopes upward. Accuracy is approximately 40% at 16n, 50% at 70n, 60% at 130n, 70% at 190n, 75% at 250n, 80% at 310n.

* **Problem 6:** Line slopes downward. Accuracy is approximately 90% at 16n, 80% at 70n, 70% at 130n, 60% at 190n, 50% at 250n, 40% at 310n.

* **Problem 7:** Line slopes downward. Accuracy is approximately 85% at 16n, 75% at 70n, 65% at 130n, 55% at 190n, 45% at 250n, 35% at 310n.

* **Problem 8:** Line slopes downward. Accuracy is approximately 80% at 16n, 70% at 70n, 60% at 130n, 50% at 190n, 40% at 250n, 30% at 310n.

* **Problem 9:** Line slopes downward. Accuracy is approximately 95% at 16n, 85% at 70n, 75% at 130n, 65% at 190n, 55% at 250n, 45% at 310n.

* **Problem 10:** Line slopes downward. Accuracy is approximately 90% at 16n, 80% at 70n, 70% at 130n, 60% at 190n, 50% at 250n, 40% at 310n.

* **Problem 11:** Line is relatively flat. Accuracy is approximately 85% across all token ranges.

* **Problem 12:** Line slopes upward. Accuracy is approximately 50% at 16n, 60% at 70n, 70% at 130n, 80% at 190n, 85% at 250n, 90% at 310n.

* **Problem 13:** Line slopes downward. Accuracy is approximately 90% at 16n, 80% at 70n, 70% at 130n, 60% at 190n, 50% at 250n, 40% at 310n.

* **Problem 14:** Line slopes downward. Accuracy is approximately 85% at 16n, 75% at 70n, 65% at 130n, 55% at 190n, 45% at 250n, 35% at 310n.

* **Problem 15:** Line slopes downward. Accuracy is approximately 80% at 16n, 70% at 70n, 60% at 130n, 50% at 190n, 40% at 250n, 30% at 310n.

* **Problem 16:** Line slopes upward. Accuracy is approximately 40% at 16n, 50% at 70n, 60% at 130n, 70% at 190n, 75% at 250n, 80% at 310n.

* **Problem 17:** Line slopes upward. Accuracy is approximately 50% at 16n, 60% at 70n, 70% at 130n, 80% at 190n, 85% at 250n, 90% at 310n.

* **Problem 18:** Line slopes upward. Accuracy is approximately 60% at 16n, 70% at 70n, 80% at 130n, 85% at 190n, 90% at 250n, 95% at 310n.

* **Problem 19:** Line slopes downward. Accuracy is approximately 90% at 16n, 80% at 70n, 70% at 130n, 60% at 190n, 50% at 250n, 40% at 310n.

* **Problem 20:** Line slopes downward. Accuracy is approximately 85% at 16n, 75% at 70n, 65% at 130n, 55% at 190n, 45% at 250n, 35% at 310n.

* **Problem 21:** Line slopes upward. Accuracy is approximately 40% at 16n, 50% at 70n, 60% at 130n, 70% at 190n, 75% at 250n, 80% at 310n.

* **Problem 22:** Line slopes upward. Accuracy is approximately 50% at 16n, 60% at 70n, 70% at 130n, 80% at 190n, 85% at 250n, 90% at 310n.

* **Problem 23:** Line slopes upward. Accuracy is approximately 60% at 16n, 70% at 70n, 80% at 130n, 85% at 190n, 90% at 250n, 95% at 310n.

* **Problem 24:** Line slopes downward. Accuracy is approximately 90% at 16n, 80% at 70n, 70% at 130n, 60% at 190n, 50% at 250n, 40% at 310n.

* **Problem 25:** Line slopes downward. Accuracy is approximately 85% at 16n, 75% at 70n, 65% at 130n, 55% at 190n, 45% at 250n, 35% at 310n.

* **Problem 26:** Line slopes upward. Accuracy is approximately 40% at 16n, 50% at 70n, 60% at 130n, 70% at 190n, 75% at 250n, 80% at 310n.

* **Problem 27:** Line slopes upward. Accuracy is approximately 50% at 16n, 60% at 70n, 70% at 130n, 80% at 190n, 85% at 250n, 90% at 310n.

* **Problem 28:** Line slopes upward. Accuracy is approximately 60% at 16n, 70% at 70n, 80% at 130n, 85% at 190n, 90% at 250n, 95% at 310n.

* **Problem 29:** Line slopes downward. Accuracy is approximately 90% at 16n, 80% at 70n, 70% at 130n, 60% at 190n, 50% at 250n, 40% at 310n.

* **Problem 30:** Line slopes downward. Accuracy is approximately 85% at 16n, 75% at 70n, 65% at 130n, 55% at 190n, 45% at 250n, 35% at 310n.

### Key Observations

* There's a clear split in trends: approximately half the problems show accuracy *increasing* with the number of tokens, while the other half show accuracy *decreasing*.

* Problems 1 and 11 show relatively flat accuracy curves, indicating that the number of tokens doesn't significantly impact performance.

* Problems 2, 3, 4, 5, 12, 16, 17, 18, 21, 22, 23, 26, 27, and 28 show a positive correlation between tokens and accuracy.

* Problems 6, 7, 8, 9, 10, 13, 14, 15, 19, 20, 24, 25, 29, and 30 show a negative correlation between tokens and accuracy.

* The range of accuracy values varies significantly across problems, suggesting different levels of difficulty or sensitivity to token length.
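The "positive correlation" and "negative correlation" labels above can be made quantitative. Here is a minimal sketch using a hand-written Pearson coefficient and the approximate values listed for Problems 2 and 6. The bin positions are treated as plain numbers, since the description does not explain the unit "n":

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length numeric sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / (spread_x * spread_y)


# Approximate values copied from the breakdown above.
bins = [16, 70, 130, 190, 250, 310]
problem_2 = [60, 70, 80, 85, 90, 95]   # described as sloping upward
problem_6 = [90, 80, 70, 60, 50, 40]   # described as sloping downward

print(f"Problem 2: r = {pearson(bins, problem_2):+.2f}")
print(f"Problem 6: r = {pearson(bins, problem_6):+.2f}")
```

With only six approximate points per problem, the coefficient indicates direction rather than a statistically robust effect.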

### Interpretation

The data suggests that the relationship between the number of tokens used and accuracy on AIME-24 is highly problem-dependent. For some problems, increasing the number of tokens improves accuracy, likely by allowing for more complex reasoning. For other problems, increasing the number of tokens *decreases* accuracy, potentially due to noise or irrelevant information being introduced. The flat accuracy curves for problems 1 and 11 suggest that these problems are relatively simple or robust to variations in token length.

The contrasting trends highlight the importance of considering the optimal token length for each specific problem when evaluating on AIME-24. A one-size-fits-all approach may not be effective, and tailoring the token length to the problem at hand could significantly improve performance. The observed outliers and variations in accuracy across problems warrant further investigation to understand the underlying factors driving these differences. It is possible that the type of problem, the complexity of the reasoning required, or the quality of the input data all play a role in determining the optimal token length.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Line Chart Grid: AIME-24 Accuracy vs (binned) Length of Thoughts

### Overview

The image is a grid of 30 individual line charts arranged in 6 rows and 5 columns. The overall title is "AIME-24 Accuracy vs (binned) Length of Thoughts". Each subplot charts the relationship between model accuracy (as a percentage) and the number of tokens used in its reasoning ("thoughts") for a specific problem, labeled "Problem: 1" through "Problem: 30". The charts collectively analyze how performance varies with the length of the model's internal reasoning process across different problems.

### Components/Axes

* **Overall Layout:** A 6x5 grid of subplots.

* **Subplot Titles:** Each subplot is titled with "Problem: [Number]", where the number ranges from 1 to 30. The numbering order is row-major (left to right, top to bottom).

* **Y-Axis (All Subplots):** Labeled "Accuracy (%)". The scale is consistently from 0 to 100, with major tick marks at 0, 20, 40, 60, 80, and 100.

* **X-Axis (All Subplots):** Labeled "Number of Tokens". The scale and range vary significantly between problems, indicating different binning or ranges of thought lengths for each problem.

* **Data Series:** A single blue line with circular markers in each subplot, representing the accuracy at different token count bins.

* **Visual Highlight:** A pink/red shaded vertical region appears on the right side of each subplot's plotting area. Its left boundary varies per chart, likely indicating a threshold or region of interest for longer thought lengths.

### Detailed Analysis

Below is a problem-by-problem breakdown. For each, the approximate x-axis range and the trend of the blue accuracy line are described. Values are approximate based on visual inspection.

**Row 1:**

* **Problem 1:** X-axis: ~55 to 85 tokens. Trend: Starts high (~90%), slight dip, then rises to near 100%.

* **Problem 2:** X-axis: ~140 to 240 tokens. Trend: Starts ~80%, rises to ~95%, then declines slightly.

* **Problem 3:** X-axis: ~70 to 115 tokens. Trend: Starts low (~40%), sharp rise to ~95%, then slight decline.

* **Problem 4:** X-axis: ~100 to 180 tokens. Trend: Flat line at 0% accuracy across all bins.

* **Problem 5:** X-axis: ~125 to 200 tokens. Trend: Starts high (~95%), plateaus, then declines to ~60%.

**Row 2:**

* **Problem 6:** X-axis: ~240 to 320 tokens. Trend: Single data point at 0% accuracy.

* **Problem 7:** X-axis: ~240 to 320 tokens. Trend: Flat line at 100% accuracy, then a sharp drop to 0% at the final bin.

* **Problem 8:** X-axis: ~140 to 200 tokens. Trend: Fluctuates between ~60% and ~90%.

* **Problem 9:** X-axis: ~40 to 125 tokens. Trend: Highly volatile, swinging between ~20% and 100%.

* **Problem 10:** X-axis: ~70 to 140 tokens. Trend: Flat line at 100% accuracy.

**Row 3:**

* **Problem 11:** X-axis: ~90 to 140 tokens. Trend: Starts ~70%, slight rise, then declines to ~50%.

* **Problem 12:** X-axis: ~100 to 150 tokens. Trend: Flat line at 100% accuracy.

* **Problem 13:** X-axis: ~40 to 75 tokens. Trend: Starts ~70%, declines, then a small rise before falling to ~20%.

* **Problem 14:** X-axis: ~190 to 280 tokens. Trend: Starts near 0%, rises in steps to ~90%.

* **Problem 15:** X-axis: ~90 to 150 tokens. Trend: Starts low (~10%), declines to 0% and stays flat.

**Row 4:**

* **Problem 16:** X-axis: ~160 to 200 tokens. Trend: Starts at 0%, small bump, then rises to ~30%.

* **Problem 17:** X-axis: ~100 to 160 tokens. Trend: Fluctuates between ~30% and ~50%, then rises sharply to ~80%.

* **Problem 18:** X-axis: ~180 to 320 tokens. Trend: Flat line at 0% accuracy.

* **Problem 19:** X-axis: ~190 to 270 tokens. Trend: Starts high (~95%), slight decline, then rises back to near 100%.

* **Problem 20:** X-axis: ~180 to 240 tokens. Trend: Fluctuates between ~10% and ~40%.

**Row 5:**

* **Problem 21:** X-axis: ~200 to 320 tokens. Trend: Starts ~80%, declines to ~40% and plateaus.

* **Problem 22:** X-axis: ~130 to 260 tokens. Trend: Flat line at 100% accuracy.

* **Problem 23:** X-axis: ~130 to 240 tokens. Trend: Starts high (~95%), sharp drop to ~30%, then recovers to ~90%.

* **Problem 24:** X-axis: ~200 to 260 tokens. Trend: Flat line at 100% accuracy.

* **Problem 25:** X-axis: ~70 to 130 tokens. Trend: Starts ~60%, declines to ~20%, then recovers to ~50%.

**Row 6:**

* **Problem 26:** X-axis: ~190 to 240 tokens. Trend: Starts at 100%, declines slightly to ~80%.

* **Problem 27:** X-axis: ~160 to 240 tokens. Trend: Starts at 100%, dips to ~80%, then returns to 100%.

* **Problem 28:** X-axis: ~120 to 200 tokens. Trend: Flat line at 100% accuracy.

* **Problem 29:** X-axis: ~130 to 200 tokens. Trend: Starts ~60%, rises to 100%, then declines sharply to ~20%.

* **Problem 30:** X-axis: ~140 to 200 tokens. Trend: Starts at 100%, declines to ~60%, then recovers to 100%.

### Key Observations

1. **High Variance in Performance:** Accuracy varies dramatically between problems, from consistently 100% (e.g., Problems 10, 12, 22, 24, 28) to consistently 0% (Problems 4, 18).

2. **Non-Linear Relationship:** For many problems, accuracy does not have a simple linear relationship with thought length. Trends include:

* **Inverted U-shape:** Performance peaks at a medium length (e.g., Problem 2).

* **U-shape:** Performance is strong at short and long lengths but dips in the middle (e.g., Problem 23).

* **Monotonic Increase:** Accuracy improves with longer thoughts (e.g., Problem 14).

* **Monotonic Decrease:** Accuracy worsens with longer thoughts (e.g., Problems 5 and 21).

* **Plateau:** Accuracy is stable across measured lengths (e.g., Problems 10, 12, 28).

3. **Pink Shaded Region:** This region, starting at a problem-specific token count, consistently covers the rightmost portion of each chart. It may denote a "long thought" regime where performance often declines or becomes unstable (e.g., Problems 5, 7, 21, 29).

4. **Data Sparsity:** Some charts have very few data points (e.g., Problem 6 has one), suggesting limited samples for those thought-length bins.

### Interpretation

This grid of charts provides a granular, problem-specific analysis of the model's performance on AIME-24. The core insight is that **the optimal length for a model's reasoning chain is highly problem-dependent.** There is no universal rule that "longer thoughts are better" or "shorter thoughts are better."

* **Problem Difficulty & Strategy:** Problems with flat 100% accuracy (10, 12, 22, 24, 28) may be straightforward for the model, solvable with minimal reasoning. Problems with 0% accuracy (4, 18) may be fundamentally beyond the model's capability or require a reasoning approach it cannot access.

* **The "Sweet Spot" Phenomenon:** The common inverted U-shape (e.g., Problem 2) suggests many problems have an optimal reasoning length. Too few tokens may indicate insufficient deliberation, while too many may lead to overthinking, error accumulation, or distraction.

* **Pathology of Long Thoughts:** The pink region and the frequent performance drop within it (e.g., Problem 7's cliff, Problem 29's decline) highlight a potential failure mode. Extended reasoning chains may become incoherent or off-track for certain problem types.

* **Diagnostic Value:** This visualization is a powerful diagnostic tool. Instead of a single aggregate accuracy score, it reveals *how* and *when* the model fails. For instance, Problem 9's volatility suggests unstable reasoning, while Problem 14's steady climb indicates a problem where more computation directly translates to better solutions.
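The "sweet spot" reading above can be turned into a small check: find the bin with the highest accuracy and measure how far accuracy falls after it. This is a minimal sketch; the function name and the data points are hypothetical, chosen only to mimic a curve that rises to 100% and then declines sharply:

```python
def sweet_spot(points):
    """Return (best_bin, best_accuracy, drop_after_peak) for (bin, accuracy) points.

    drop_after_peak is the gap between the peak and the lowest accuracy seen
    at or after it. Ties for the peak resolve to the earliest bin.
    """
    best_index = max(range(len(points)), key=lambda i: points[i][1])
    best_bin, best_acc = points[best_index]
    tail = [acc for _, acc in points[best_index:]]
    return best_bin, best_acc, best_acc - min(tail)


# Hypothetical values for illustration, not read from the chart.
rise_then_fall = [(130, 60), (150, 100), (175, 60), (200, 20)]
plateau = [(100, 100), (125, 100), (150, 100)]

print(sweet_spot(rise_then_fall))
print(sweet_spot(plateau))
```

A large drop after the peak flags the overthinking pattern, while a drop of zero corresponds to a plateau or a steady climb.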

In summary, the data argues for a nuanced view of AI reasoning. Maximizing performance likely requires not just making models that can think longer, but understanding *which problems benefit from longer thought* and developing methods to guide the reasoning process toward the problem-specific "sweet spot" while avoiding the pathologies of excessively long chains.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graphs: AIME-24 Accuracy vs. binned Length of Thoughts

### Overview

The image displays a 5x6 grid of 30 line graphs, each labeled "Problem 1" to "Problem 30". Each graph plots "Accuracy (%)" (y-axis) against "Number of Tokens" (x-axis). The title "AIME-24 Accuracy vs. binned Length of Thoughts" indicates the relationship between token length and model performance. Blue lines connect the data points, with shaded pink regions possibly indicating confidence intervals or variability.

### Components/Axes

- **X-axis**: "Number of Tokens" (ranges from ~6 to 32 tokens per subplot).

- **Y-axis**: "Accuracy (%)" (ranges from 0% to 100%).

- **Legend**: Located in the top-right corner, labeled "AIME-24" with a blue color.

- **Subplot Titles**: Each graph is labeled "Problem X" (X = 1–30).

### Detailed Analysis

Each subplot shows distinct trends:

- **Problem 1**: Flat line at ~80% accuracy across 6–9 tokens.

- **Problem 2**: Peaks at ~90% at 16 tokens, then drops to ~80% at 24 tokens.

- **Problem 3**: Starts at ~60%, rises to ~80% at 12 tokens, then drops to ~60% at 16 tokens.

- **Problem 4**: Flat line at 0% accuracy across all tokens.

- **Problem 5**: Peaks at ~90% at 20 tokens, then drops to ~60% at 24 tokens.

- **Problem 6**: Flat line at 0% accuracy across all tokens.

- **Problem 7**: Peaks at ~90% at 16 tokens, then drops to ~60% at 24 tokens.

- **Problem 8**: Starts at ~80%, drops to ~40% at 12 tokens, then rises to ~80% at 16 tokens.

- **Problem 9**: Peaks at ~90% at 10 tokens, then drops to ~60% at 12 tokens.

- **Problem 10**: Flat line at ~80% accuracy across 8–12 tokens.

- **Problem 11**: Starts at ~80%, drops to ~60% at 10 tokens, then stabilizes at ~60%.

- **Problem 12**: Flat line at ~80% accuracy across 10–12 tokens.

- **Problem 13**: Starts at ~40%, rises to ~80% at 12 tokens, then drops to ~60% at 16 tokens.

- **Problem 14**: Peaks at ~90% at 24 tokens, then drops to ~60% at 28 tokens.

- **Problem 15**: Starts at ~40%, peaks at ~80% at 12 tokens, then drops to ~40% at 16 tokens.

- **Problem 16**: Starts at ~20%, rises to ~60% at 24 tokens, then drops to ~40% at 28 tokens.

- **Problem 17**: Starts at ~40%, rises to ~80% at 24 tokens, then drops to ~60% at 28 tokens.

- **Problem 18**: Flat line at 0% accuracy across all tokens.

- **Problem 19**: Starts at ~80%, drops to ~60% at 16 tokens, then rises to ~80% at 24 tokens.

- **Problem 20**: Starts at ~40%, peaks at ~80% at 12 tokens, then drops to ~40% at 16 tokens.

- **Problem 21**: Flat line at ~80% accuracy across 24–28 tokens.

- **Problem 22**: Flat line at ~80% accuracy across 16–24 tokens.

- **Problem 23**: Starts at ~80%, drops to ~60% at 16 tokens, then rises to ~80% at 24 tokens.

- **Problem 24**: Flat line at ~80% accuracy across 16–24 tokens.

- **Problem 25**: Starts at ~40%, peaks at ~80% at 10 tokens, then drops to ~60% at 12 tokens.

- **Problem 26**: Flat line at ~80% accuracy across 24–28 tokens.

- **Problem 27**: Starts at ~80%, drops to ~60% at 16 tokens, then stabilizes at ~60%.

- **Problem 28**: Flat line at ~80% accuracy across 16–24 tokens.

- **Problem 29**: Starts at ~80%, drops to ~60% at 16 tokens, then rises to ~80% at 24 tokens.

- **Problem 30**: Starts at ~40%, peaks at ~80% at 12 tokens, then drops to ~40% at 16 tokens.

### Key Observations

1. **Outliers**: Problems 4, 6, and 18 show 0% accuracy, suggesting critical failures or edge cases.

2. **Stable Performance**: Problems 1, 10, 12, 21, 22, 24, 26, and 28 maintain ~80% accuracy across token ranges.

3. **Peaks and Drops**: Many problems (e.g., 2, 5, 7, 9, 14, 17, 19, 23, 25, 29, 30) show sharp accuracy peaks at specific token counts, followed by declines.

4. **Variability**: Shaded pink regions (likely confidence intervals) are present in some subplots, indicating uncertainty in measurements.

### Interpretation

The data suggests that accuracy on AIME-24 is highly sensitive to the number of tokens in certain problems. For example:

- **Optimal Token Length**: Problems like 2, 5, and 14 achieve peak accuracy at 16–24 tokens, implying that longer thoughts (within a range) improve performance.

- **Token Sensitivity**: Problems with flat lines (e.g., 1, 10, 12) may be less dependent on token length, while others (e.g., 4, 6, 18) fail entirely, highlighting potential limitations in model design or data quality.

- **Confidence Intervals**: The shaded regions suggest variability in accuracy measurements, though their exact meaning (e.g., standard deviation, prediction intervals) is not specified.

The results underscore the importance of token length in model performance, with some problems benefiting from longer thoughts and others being inherently unstable. Further analysis of the shaded regions and problem-specific contexts could clarify the underlying causes of these trends.
