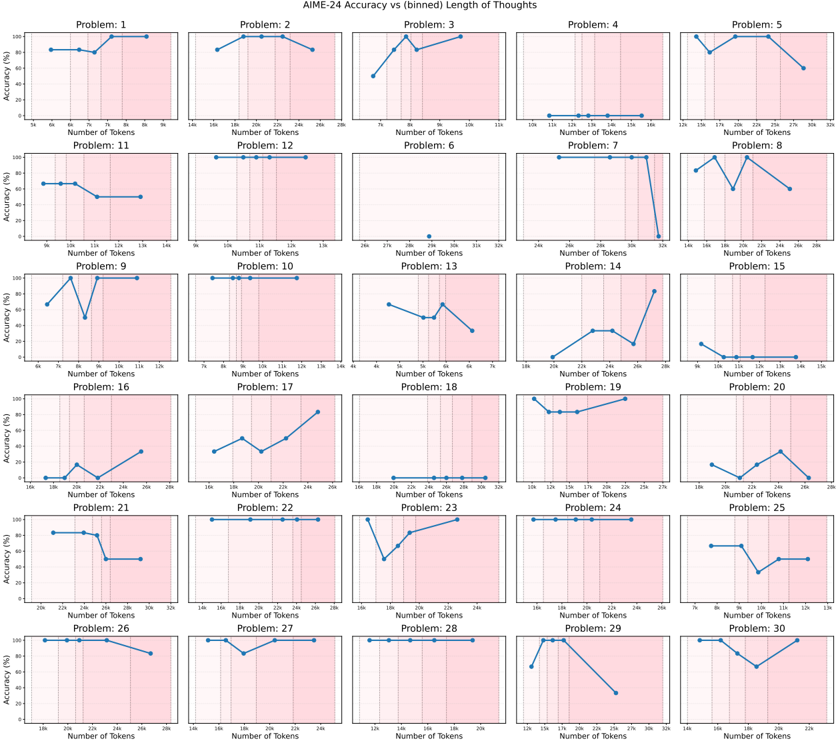

## Line Graphs: AIME-24 Accuracy vs. binned Length of Thoughts

### Overview

The image displays a 5x6 grid of 30 line graphs, titled "Problem 1" through "Problem 30". Each graph plots "Accuracy (%)" (y-axis) against "Number of Tokens" (x-axis). The overall title, "AIME-24 Accuracy vs. binned Length of Thoughts", indicates that the figure relates the length of the reasoning ("thoughts"), grouped into bins, to accuracy on each AIME-24 problem. Blue lines connect the data points, and shaded pink regions may indicate confidence intervals or variability.

### Components/Axes

- **X-axis**: "Number of Tokens" (values span roughly 6 to 32 across all subplots; each subplot covers its own narrower range).

- **Y-axis**: "Accuracy (%)" (ranges from 0% to 100%).

- **Legend**: Located in the top-right corner, labeled "AIME-24" in blue.

- **Subplot Titles**: Each graph is labeled "Problem X" (X = 1–30).
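The word "binned" in the title implies that responses were grouped by thought length and accuracy was computed within each bin. The sketch below shows one way such a curve could be computed, assuming per-response records of (thought length, correctness) for a single problem. The function name `binned_accuracy` and the bin edges are hypothetical, since the figure does not state its binning scheme.

```python
from bisect import bisect_right


def binned_accuracy(lengths, correct, edges):
    """Return (bin_centers, accuracy_percent, counts) for one problem.

    Hypothetical helper: the figure's actual binning scheme is not specified.
    lengths: thought length of each sampled response
    correct: matching booleans, True if that response was graded correct
    edges:   sorted bin edges; bin i covers [edges[i], edges[i + 1])
    """
    n_bins = len(edges) - 1
    hits = [0] * n_bins
    counts = [0] * n_bins
    for length, ok in zip(lengths, correct):
        i = bisect_right(edges, length) - 1  # index of the bin containing length
        if 0 <= i < n_bins:                  # samples outside the edges are dropped
            counts[i] += 1
            hits[i] += int(ok)

    centers, acc, kept = [], [], []
    for i in range(n_bins):
        if counts[i] == 0:
            continue  # an empty bin produces no point on the curve
        centers.append((edges[i] + edges[i + 1]) / 2)
        acc.append(100.0 * hits[i] / counts[i])
        kept.append(counts[i])
    return centers, acc, kept
```

Empty bins are skipped rather than plotted as 0%, so a bin with no responses is not mistaken for a bin where every response failed (compare the genuine 0% curves of Problems 4, 6, and 18).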

### Detailed Analysis

Approximate trends in each subplot, as read from the plots:

- **Problem 1**: Flat line at ~80% accuracy across 6–9 tokens.

- **Problem 2**: Peaks at ~90% at 16 tokens, then drops to ~80% at 24 tokens.

- **Problem 3**: Starts at ~60%, rises to ~80% at 12 tokens, then drops to ~60% at 16 tokens.

- **Problem 4**: Flat line at 0% accuracy across all tokens.

- **Problem 5**: Peaks at ~90% at 20 tokens, then drops to ~60% at 24 tokens.

- **Problem 6**: Flat line at 0% accuracy across all tokens.

- **Problem 7**: Peaks at ~90% at 16 tokens, then drops to ~60% at 24 tokens.

- **Problem 8**: Starts at ~80%, drops to ~40% at 12 tokens, then rises to ~80% at 16 tokens.

- **Problem 9**: Peaks at ~90% at 10 tokens, then drops to ~60% at 12 tokens.

- **Problem 10**: Flat line at ~80% accuracy across 8–12 tokens.

- **Problem 11**: Starts at ~80%, drops to ~60% at 10 tokens, then stabilizes at ~60%.

- **Problem 12**: Flat line at ~80% accuracy across 10–12 tokens.

- **Problem 13**: Starts at ~40%, rises to ~80% at 12 tokens, then drops to ~60% at 16 tokens.

- **Problem 14**: Peaks at ~90% at 24 tokens, then drops to ~60% at 28 tokens.

- **Problem 15**: Starts at ~40%, peaks at ~80% at 12 tokens, then drops to ~40% at 16 tokens.

- **Problem 16**: Starts at ~20%, rises to ~60% at 24 tokens, then drops to ~40% at 28 tokens.

- **Problem 17**: Starts at ~40%, rises to ~80% at 24 tokens, then drops to ~60% at 28 tokens.

- **Problem 18**: Flat line at 0% accuracy across all tokens.

- **Problem 19**: Starts at ~80%, drops to ~60% at 16 tokens, then rises to ~80% at 24 tokens.

- **Problem 20**: Starts at ~40%, peaks at ~80% at 12 tokens, then drops to ~40% at 16 tokens.

- **Problem 21**: Flat line at ~80% accuracy across 24–28 tokens.

- **Problem 22**: Flat line at ~80% accuracy across 16–24 tokens.

- **Problem 23**: Starts at ~80%, drops to ~60% at 16 tokens, then rises to ~80% at 24 tokens.

- **Problem 24**: Flat line at ~80% accuracy across 16–24 tokens.

- **Problem 25**: Starts at ~40%, peaks at ~80% at 10 tokens, then drops to ~60% at 12 tokens.

- **Problem 26**: Flat line at ~80% accuracy across 24–28 tokens.

- **Problem 27**: Starts at ~80%, drops to ~60% at 16 tokens, then stabilizes at ~60%.

- **Problem 28**: Flat line at ~80% accuracy across 16–24 tokens.

- **Problem 29**: Starts at ~80%, drops to ~60% at 16 tokens, then rises to ~80% at 24 tokens.

- **Problem 30**: Starts at ~40%, peaks at ~80% at 12 tokens, then drops to ~40% at 16 tokens.

### Key Observations

1. **Outliers**: Problems 4, 6, and 18 stay at 0% accuracy at every token count, suggesting problems that were not solved regardless of reasoning length.

2. **Stable Performance**: Problems 1, 10, 12, 21, 22, 24, 26, and 28 maintain ~80% accuracy across token ranges.

3. **Peaks and Drops**: Many problems (e.g., 2, 3, 5, 7, 9, 13, 14, 15, 16, 17, 20, 25, 30) peak at an intermediate token count and decline afterward. Problems 8, 19, 23, and 29 show the opposite pattern: a dip followed by a recovery.

4. **Variability**: Shaded pink regions (likely confidence intervals) are present in some subplots, indicating uncertainty in measurements.
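The shape categories above (all-zero, flat, peak-then-drop, dip-then-recovery) can be made explicit with a small rule-based classifier. This is a hypothetical sketch: `classify_curve` and its 5-point tolerance are illustrative assumptions, and the input is a list of per-bin accuracies (in percent) ordered by token count, such as the approximate values read off each subplot.

```python
def classify_curve(acc, tol=5.0):
    """Label a per-bin accuracy curve (percent values) with a coarse shape.

    tol is a hypothetical tolerance in percentage points, chosen for illustration.
    """
    if not acc:
        raise ValueError("empty curve")
    if all(a <= tol for a in acc):
        return "zero"  # never solved at any length
    if max(acc) - min(acc) <= tol:
        return "flat"  # accuracy roughly constant across lengths
    i_max = acc.index(max(acc))
    i_min = acc.index(min(acc))
    last = len(acc) - 1
    # Interior maximum clearly above both endpoints: peak, then drop.
    if 0 < i_max < last and acc[i_max] - max(acc[0], acc[-1]) > tol:
        return "peak"
    # Interior minimum clearly below both endpoints: dip, then recovery.
    if 0 < i_min < last and min(acc[0], acc[-1]) - acc[i_min] > tol:
        return "dip"
    return "other"  # e.g. a decline that levels off, or a monotone trend
```

Applied to the approximate readings above, Problem 13 (about 40, 80, 60) comes out as "peak", Problem 8 (about 80, 40, 80) as "dip", and Problem 11 (about 80, 60, 60) as "other", since its decline levels off instead of recovering.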

### Interpretation

The data suggest that accuracy on AIME-24 is highly sensitive to reasoning length for some problems but not for others. For example:

- **Optimal Token Length**: Problems 2, 5, and 14 peak at 16, 20, and 24 tokens respectively and decline beyond that, implying that longer thoughts improve performance only up to a problem-specific point.

- **Token Sensitivity**: Problems with flat ~80% curves (e.g., 1, 10, 12) appear largely insensitive to token length, while Problems 4, 6, and 18 stay at 0% at every length, highlighting potential limitations in model capability or data quality that longer reasoning does not overcome.

- **Confidence Intervals**: The shaded regions suggest variability in accuracy measurements, though their exact meaning (e.g., standard deviation, prediction intervals) is not specified.
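Since the figure does not say what the shaded regions represent, the following is only one plausible construction: a Wilson score interval for the accuracy in each bin, treating each response as a correct/incorrect trial. The function name `wilson_interval` and the choice of z = 1.96 (an approximate 95% interval) are assumptions for illustration.

```python
import math


def wilson_interval(hits, n, z=1.96):
    """Wilson score interval for a binomial proportion, returned in percent.

    One common way to shade uncertainty around a per-bin accuracy; this is an
    assumption, not necessarily what the figure's shaded regions show.
    """
    if n <= 0:
        raise ValueError("n must be positive")
    p = hits / n
    denom = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    # Clamp to [0, 1] to absorb floating-point error at the extremes.
    return 100 * max(0.0, center - half), 100 * min(1.0, center + half)
```

For a bin with 8 of 10 responses correct, this gives roughly 49% to 94%, which illustrates how bins with few samples produce wide bands even when the point estimate is a steady 80%.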

The results indicate that thought length matters for accuracy on many problems: some benefit from longer reasoning up to a point, while others fluctuate across lengths without a consistent trend. Further analysis of the shaded regions and problem-specific contexts could clarify the underlying causes of these trends.