# Technical Architecture Diagram: CPU Optimization Stack

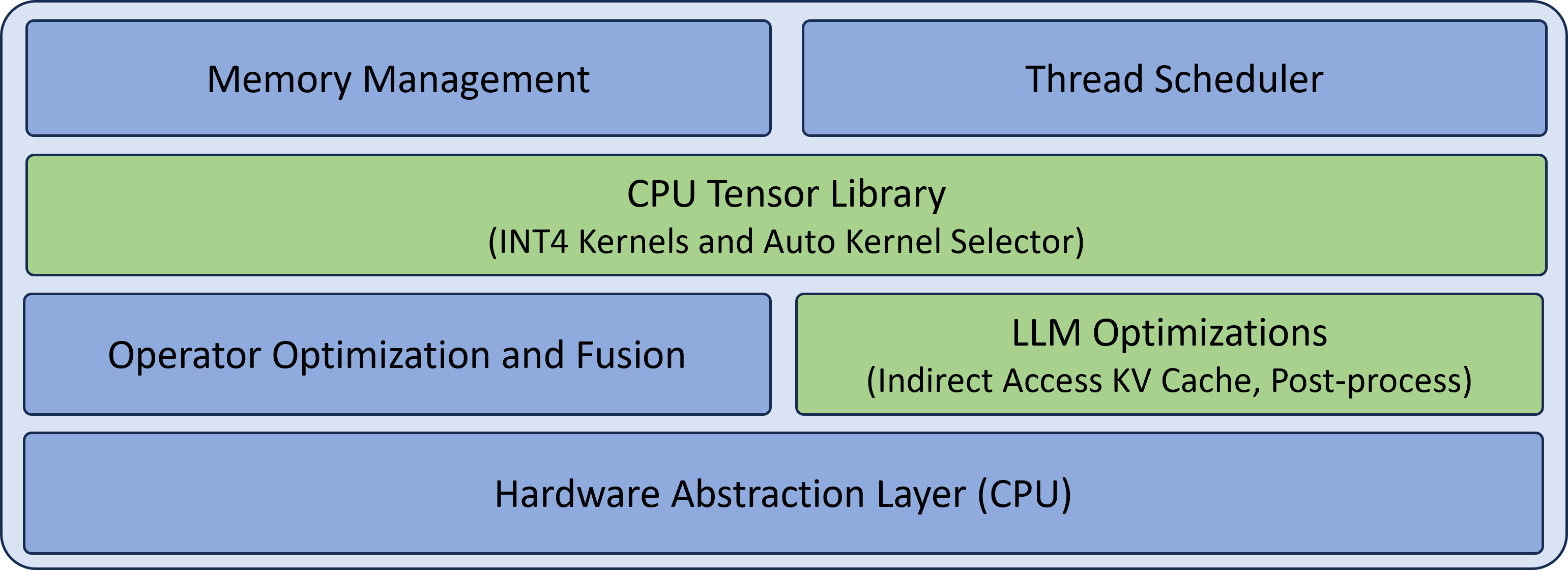

This image illustrates a multi-layered software architecture stack focused on CPU-based tensor operations and Large Language Model (LLM) optimizations. The diagram is organized into four horizontal tiers.

## Component Breakdown by Layer

### Layer 1: Top Level (Management & Scheduling)

This layer consists of two side-by-side blue blocks responsible for system-level resource handling.

* **Memory Management** (Left): Handles allocation and tracking of memory resources.

* **Thread Scheduler** (Right): Manages execution threads across CPU cores.
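
The two roles in this layer can be sketched in a few lines. The diagram gives no implementation details, so everything below is an assumption made for illustration: `BufferPool`, `split_range`, and `parallel_sum` are hypothetical names, and a real CPU stack would pin native threads to cores rather than use a Python thread pool.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Dict, List, Tuple


class BufferPool:
    """Hypothetical allocator that reuses buffers and tracks bytes in use."""

    def __init__(self) -> None:
        self._free: Dict[int, List[bytearray]] = {}  # free buffers keyed by size
        self.bytes_in_use = 0

    def acquire(self, size: int) -> bytearray:
        bucket = self._free.get(size)
        # Reuse a released buffer of the same size if one exists.
        # Note: a reused buffer is not zeroed.
        buf = bucket.pop() if bucket else bytearray(size)
        self.bytes_in_use += size
        return buf

    def release(self, buf: bytearray) -> None:
        self._free.setdefault(len(buf), []).append(buf)
        self.bytes_in_use -= len(buf)


def split_range(n: int, workers: int) -> List[Tuple[int, int]]:
    """Split [0, n) into contiguous, near-equal (start, stop) chunks."""
    workers = max(1, min(workers, n))
    base, extra = divmod(n, workers)
    chunks, start = [], 0
    for i in range(workers):
        stop = start + base + (1 if i < extra else 0)
        chunks.append((start, stop))
        start = stop
    return chunks


def parallel_sum(data: List[float], workers: int = 4) -> float:
    """Sum `data` by giving each worker thread one contiguous chunk."""
    chunks = split_range(len(data), workers)
    with ThreadPoolExecutor(max_workers=len(chunks)) as executor:
        partials = executor.map(lambda c: sum(data[c[0]:c[1]]), chunks)
        return sum(partials)
```

Contiguous chunks are used here because they keep each worker on its own slice of memory, which is the usual motivation for static partitioning of tensor work.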

### Layer 2: Core Library Tier

This layer consists of a single, full-width green block representing the primary computational engine.

* **CPU Tensor Library**: The central component for tensor operations.

* **Sub-components of the CPU Tensor Library**: INT4 Kernels and Auto Kernel Selector. These labels indicate kernels for 4-bit integer (INT4) quantized data and a mechanism that automatically chooses a kernel, presumably the most efficient one, for a given task.
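
As a rough illustration of what the two sub-components might involve, the sketch below packs signed 4-bit values two per byte and picks between two dot-product kernels by input size. The diagram does not specify a storage layout or a selection policy, so the low-nibble-first layout, the function names, and the size threshold of 64 are all assumptions.

```python
from typing import Callable, List, Sequence


def pack_int4(values: Sequence[int]) -> bytes:
    """Pack signed 4-bit integers (-8..7) two per byte, low nibble first."""
    vals = list(values)
    if len(vals) % 2:
        vals.append(0)  # pad to an even count
    out = bytearray()
    for lo, hi in zip(vals[0::2], vals[1::2]):
        for v in (lo, hi):
            if not -8 <= v <= 7:
                raise ValueError(f"{v} does not fit in a signed 4-bit integer")
        out.append((lo & 0xF) | ((hi & 0xF) << 4))
    return bytes(out)


def _sign_extend(nibble: int) -> int:
    """Map an unsigned nibble (0..15) back to a signed value (-8..7)."""
    return nibble - 16 if nibble >= 8 else nibble


def unpack_int4(packed: bytes, count: int) -> List[int]:
    """Inverse of pack_int4; `count` drops any padding nibble."""
    out: List[int] = []
    for byte in packed:
        out.append(_sign_extend(byte & 0xF))
        out.append(_sign_extend(byte >> 4))
    return out[:count]


def dot_unpack_first(x: Sequence[float], packed: bytes, n: int) -> float:
    """Kernel A: materialize all weights, then take the dot product."""
    return sum(a * w for a, w in zip(x, unpack_int4(packed, n)))


def dot_streaming(x: Sequence[float], packed: bytes, n: int) -> float:
    """Kernel B: decode each nibble on the fly, with no temporary list."""
    total = 0.0
    for i in range(n):
        byte = packed[i // 2]
        nibble = (byte >> 4) if i % 2 else (byte & 0xF)
        total += x[i] * _sign_extend(nibble)
    return total


Kernel = Callable[[Sequence[float], bytes, int], float]


def select_kernel(n: int) -> Kernel:
    """Hypothetical policy: small inputs unpack first, large ones stream."""
    return dot_unpack_first if n < 64 else dot_streaming
```

A production selector would more likely dispatch on detected CPU features, tensor shapes, or measured timings; the size threshold only stands in for that decision.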

### Layer 3: Optimization Tier

This layer consists of two side-by-side blocks that refine execution efficiency.

* **Operator Optimization and Fusion** (Blue, Left): Focuses on combining multiple operations into single kernels to reduce memory bandwidth overhead.

* **LLM Optimizations** (Green, Right): Specific enhancements for Large Language Models.

* **Sub-components of LLM Optimizations**: Indirect Access KV Cache and Post-process. These labels point to specialized handling of the attention Key-Value (KV) cache and a processing step applied to the final model output.
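
Both blocks in this tier can be illustrated with small sketches. The first pair of functions contrasts an unfused and a fused elementwise chain. The class after it shows one plausible reading of "indirect access": instead of physically reordering cached keys when beam search reshuffles its beams, each step records which earlier beam it extends, and reads follow that index table. The diagram confirms neither design, so all names and the beam-search framing are assumptions.

```python
from typing import List, Sequence


def scale_bias_relu_unfused(x: Sequence[float], scale: float, bias: float) -> List[float]:
    scaled = [v * scale for v in x]        # pass 1: writes a temporary
    shifted = [v + bias for v in scaled]   # pass 2: writes another temporary
    return [max(v, 0.0) for v in shifted]  # pass 3: final result


def scale_bias_relu_fused(x: Sequence[float], scale: float, bias: float) -> List[float]:
    # One pass over the input and no intermediate buffers.
    return [max(v * scale + bias, 0.0) for v in x]


class IndirectKVCache:
    """Hypothetical cache: preallocated per-step slots plus a beam-index table."""

    def __init__(self, max_steps: int, num_beams: int) -> None:
        self.keys = [[None] * num_beams for _ in range(max_steps)]
        self.parent = [[0] * num_beams for _ in range(max_steps)]
        self.step = 0

    def append(self, new_keys: Sequence[object], parents: Sequence[int]) -> None:
        """Store this step's entries; parents[b] is the previous-step beam that b extends."""
        if self.step >= len(self.keys):
            raise IndexError("cache is full")
        self.keys[self.step] = list(new_keys)
        self.parent[self.step] = list(parents)
        self.step += 1

    def history(self, beam: int) -> List[object]:
        """Follow the index table backwards to recover one beam's entries in order."""
        out = []
        for t in range(self.step - 1, -1, -1):
            out.append(self.keys[t][beam])
            beam = self.parent[t][beam]
        return out[::-1]
```

In the cache sketch, earlier entries are never copied or moved when beams are reordered; only the small integer table changes, which is where the saving in memory traffic would come from.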

### Layer 4: Bottom Level (Hardware Interface)

This layer consists of a single, full-width blue block serving as the foundation of the stack.

* **Hardware Abstraction Layer (CPU)**: Provides a standardized interface between the software stack and the underlying physical CPU hardware.
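
A hardware abstraction layer of this kind is commonly expressed as an interface that upper layers program against. The sketch below is only an illustration under that assumption: `CpuBackend`, `GenericCpu`, and `run_add` are hypothetical names, and the diagram does not say which operations the real layer exposes.

```python
import os
import platform
from abc import ABC, abstractmethod
from typing import List, Sequence


class CpuBackend(ABC):
    """Hypothetical interface that the upper layers would call."""

    @abstractmethod
    def name(self) -> str:
        """Return a label for the underlying CPU architecture."""

    @abstractmethod
    def num_cores(self) -> int:
        """Return the number of logical cores available."""

    @abstractmethod
    def vector_add(self, a: Sequence[float], b: Sequence[float]) -> List[float]:
        """Add two equal-length vectors elementwise."""


class GenericCpu(CpuBackend):
    """Portable fallback; a tuned backend would override vector_add."""

    def name(self) -> str:
        return platform.machine() or "unknown"

    def num_cores(self) -> int:
        return os.cpu_count() or 1  # os.cpu_count() may return None

    def vector_add(self, a: Sequence[float], b: Sequence[float]) -> List[float]:
        if len(a) != len(b):
            raise ValueError("vectors must have the same length")
        return [x + y for x, y in zip(a, b)]


def run_add(backend: CpuBackend, a: Sequence[float], b: Sequence[float]) -> List[float]:
    """Upper-layer code depends only on the interface, not on the concrete CPU."""
    return backend.vector_add(a, b)
```

Because `run_add` takes any `CpuBackend`, a backend specialized for a particular instruction set could be swapped in without changing the calling code.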

---

## Visual Legend & Logic

* **Blue Blocks**: Represent general infrastructure: memory and thread management, operator optimization and fusion, and hardware abstraction.

* **Green Blocks**: Represent specialized computational libraries and domain-specific (LLM) optimizations.

* **Spatial Flow**: The stack follows a standard bottom-up hierarchy where the **Hardware Abstraction Layer** supports the optimization and library tiers, which are managed by the top-level **Memory Management** and **Thread Scheduler**.