
## Diagram: Chain-of-Thought (CoT) Reasoning Frameworks

### Overview

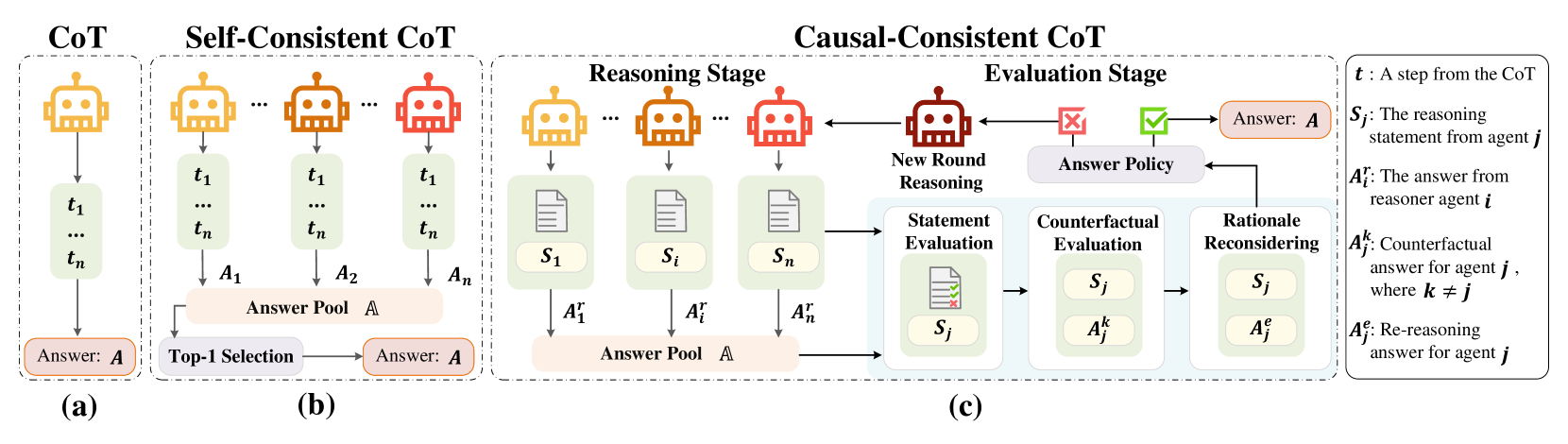

The figure compares three Chain-of-Thought (CoT) reasoning frameworks: standard CoT, Self-Consistent CoT, and Causal-Consistent CoT. It traces each framework from the initial reasoning steps to final answer selection and shows how the three approaches differ in checking and enforcing reasoning consistency.

### Components/Axes

The diagram is divided into three main sections, labeled (a), (b), and (c), representing the three CoT frameworks. Each section is further divided into "Reasoning Stage" and "Evaluation Stage". Key elements include:

* **Robot Icons:** Represent reasoning agents.

* **t<sub>1</sub>...t<sub>n</sub>:** Represent steps in the CoT process.

* **A<sub>1</sub>...A<sub>n</sub>, A<sup>1</sup><sub>j</sub>...A<sup>k</sup><sub>j</sub>:** Represent answers from reasoning agents.

* **S<sub>1</sub>...S<sub>n</sub>, S<sub>j</sub>, S<sup>k</sup><sub>j</sub>:** Represent reasoning statements.

* **Answer Pool A:** A collection of potential answers.

* **Checkmarks/X Marks:** Indicate evaluation outcomes.

* **Legend (Top-Right):** Defines the symbols used in the diagram:

    * t: A step from the CoT

    * S<sub>j</sub>: The reasoning statement from agent j

    * A<sup>i</sup><sub>j</sub>: The answer from reasoner agent i

    * A<sup>k</sup><sub>j</sub>: Counterfactual answer for agent j, where k ≠ j

    * A<sup>f</sup><sub>j</sub>: Re-reasoning answer for agent j

### Detailed Analysis or Content Details

**(a) CoT:**

* The process begins with a series of reasoning steps (t<sub>1</sub>...t<sub>n</sub>) performed by a single robot icon.

* These steps generate an answer (Answer: A) directly.

* The diagram shows a single path from reasoning steps to the final answer.
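Read as an algorithm, panel (a) is a single reasoning pass whose final answer is returned unchanged. The sketch below assumes a hypothetical `reasoner` callable, standing in for an LLM prompted to think step by step, that returns the steps t<sub>1</sub>...t<sub>n</sub> together with an answer. Neither this interface nor the stub comes from the diagram.

```python
from typing import Callable, List, Tuple

# Hypothetical reasoner interface: question -> (steps t_1..t_n, answer).
# In practice this would wrap an LLM prompted to reason step by step.
Reasoner = Callable[[str], Tuple[List[str], str]]


def cot(question: str, reasoner: Reasoner) -> str:
    """Panel (a): one reasoner, one chain, and its answer is final."""
    _steps, answer = reasoner(question)
    # No answer pool and no evaluation: the chain's own answer is returned.
    return answer


def stub_reasoner(question: str) -> Tuple[List[str], str]:
    # Fixed chain used only for illustration in place of a model call.
    return (["Start with 2 apples", "Add 3 apples: 2 + 3 = 5"], "5")


print(cot("2 apples plus 3 apples?", stub_reasoner))  # prints 5
```

Because nothing checks the chain, any error in an intermediate step propagates directly into the answer.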

**(b) Self-Consistent CoT:**

* Multiple robot icons each perform their own sequence of reasoning steps (t<sub>1</sub>...t<sub>n</sub>).

* Each set of steps generates an answer (A<sub>1</sub>...A<sub>n</sub>).

* These answers are collected in an "Answer Pool A".

* A "Top-1 Selection" process chooses the final answer (Answer: A) from the pool.
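The Top-1 Selection in panel (b) can be sketched as a majority vote over the answer pool, which is how self-consistency is commonly implemented. The diagram does not say how "Top-1" is scored, so treating it as the most frequent answer is an assumption, as is the hypothetical `sample` callable that returns the answer of the i-th sampled chain.

```python
from collections import Counter
from typing import Callable, List, Tuple

# Hypothetical sampler: (question, chain index i) -> answer A_i of that chain.
Sampler = Callable[[str, int], str]


def self_consistent_cot(question: str, sample: Sampler, n: int = 5) -> Tuple[str, List[str]]:
    """Panel (b): sample n chains, pool their answers, pick the Top-1."""
    # Answer Pool A = [A_1, ..., A_n], one answer per independent chain.
    pool = [sample(question, i) for i in range(n)]
    # Top-1 selection (assumed to be a majority vote); Counter.most_common
    # breaks ties in favour of the answer encountered first.
    answer, _votes = Counter(pool).most_common(1)[0]
    return answer, pool


# Stub answers standing in for five sampled chains.
stub_answers = ["5", "6", "5", "5", "4"]
answer, pool = self_consistent_cot("2 + 3?", lambda q, i: stub_answers[i])
print(answer, pool)  # prints 5 ['5', '6', '5', '5', '4']
```

The vote only compares final answers; the reasoning that produced them is never inspected, which is the gap panel (c) targets.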

**(c) Causal-Consistent CoT:**

* This framework is divided into "Reasoning Stage" and "Evaluation Stage".

* **Reasoning Stage:** Similar to (b), multiple reasoning steps (t<sub>1</sub>...t<sub>n</sub>) are performed by multiple robot icons, generating reasoning statements (S<sub>1</sub>...S<sub>n</sub>) and answers (A<sup>1</sup><sub>j</sub>...A<sup>k</sup><sub>j</sub>) which are collected in an "Answer Pool A".

* **Evaluation Stage:**

    * "New Round Reasoning" is performed by a robot icon.

    * "Statement Evaluation" assesses the reasoning statements (S<sub>j</sub>).

    * "Counterfactual Evaluation" evaluates answers based on alternative scenarios (A<sup>k</sup><sub>j</sub>).

    * "Rationale Reconsidering" refines the reasoning (S<sup>j</sup><sub>j</sub>).

* The final answer (Answer: A) is selected based on the evaluation results, indicated by checkmarks (positive evaluation) and X marks (negative evaluation).
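The evaluation stage in panel (c) can be read as a filter over the answer pool: a candidate that fails a check gets an X mark, and the final answer is chosen among candidates that keep their checkmarks. The diagram does not specify how any check works, so the three evaluators below (`statement_ok`, `counterfactual_ok`, `reconsider`) are hypothetical placeholders. Several further choices are also assumptions: requiring all three checks to pass, reading the counterfactual answers A<sup>k</sup><sub>j</sub> as the other agents' answers, voting among survivors, and returning `None` when nothing survives.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Candidate:
    agent: int       # j: index of the reasoner agent
    statement: str   # S_j: the agent's reasoning statement
    answer: str      # the agent's answer in the pool


def causal_consistent_select(
    pool: List[Candidate],
    statement_ok: Callable[[Candidate], bool],                  # hypothetical "Statement Evaluation"
    counterfactual_ok: Callable[[Candidate, List[str]], bool],  # hypothetical "Counterfactual Evaluation"
    reconsider: Callable[[Candidate], str],                     # hypothetical "Rationale Reconsidering"
) -> Optional[str]:
    """Panel (c), evaluation stage: keep candidates that pass every check, then vote."""
    survivors: List[str] = []
    for cand in pool:
        # Assumed reading of A^k_j: answers from the other agents (k != j).
        others = [c.answer for c in pool if c.agent != cand.agent]
        if not statement_ok(cand) or not counterfactual_ok(cand, others):
            continue  # X mark: the candidate is discarded
        # The re-reasoning answer (A^f_j) must reproduce the original answer.
        if reconsider(cand) != cand.answer:
            continue  # X mark
        survivors.append(cand.answer)  # checkmark
    if not survivors:
        return None  # assumption: no answer when every candidate fails
    return Counter(survivors).most_common(1)[0][0]


# Toy usage with stub evaluators (not from the source).
pool = [
    Candidate(0, "2+3=5", "5"),
    Candidate(1, "2+3=6", "6"),
    Candidate(2, "3+2=5", "5"),
]
valid_statements = {"2+3=5", "3+2=5"}
result = causal_consistent_select(
    pool,
    statement_ok=lambda c: c.statement in valid_statements,
    counterfactual_ok=lambda c, others: c.answer in others,  # some other agent agrees
    reconsider=lambda c: c.statement.split("=")[-1],         # re-derive from the statement
)
print(result)  # prints 5
```

Unlike the plain vote in panel (b), agent 1's answer is removed before voting because its statement fails evaluation, so it cannot influence the result even if many agents repeated it.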

### Key Observations

* The complexity increases from (a) to (c). CoT is the simplest, while Causal-Consistent CoT is the most elaborate.

* Self-Consistent CoT introduces an "Answer Pool" and a selection step, aggregating several independent reasoning paths so the final answer does not hinge on a single chain.

* Causal-Consistent CoT adds a dedicated "Evaluation Stage" with multiple evaluation steps, aiming for more reliable and consistent reasoning.

* The use of checkmarks and X marks in (c) visually represents the evaluation process and the selection of the final answer.

### Interpretation

The diagram illustrates a progression of CoT reasoning frameworks, each building on the previous one to address its limitations. The core idea is to start from single-path reasoning (CoT), move to multiple reasoning paths (Self-Consistent CoT), and then explicitly evaluate those paths for consistency and reliability (Causal-Consistent CoT). The counterfactual evaluation in Causal-Consistent CoT suggests an attempt to identify and mitigate biases or errors in the reasoning process. Overall, the progression reflects increasingly sophisticated techniques for making the reasoning of large language models more trustworthy and accurate.

The diagram contains no quantitative data; it is a qualitative, conceptual comparison of the three approaches.