
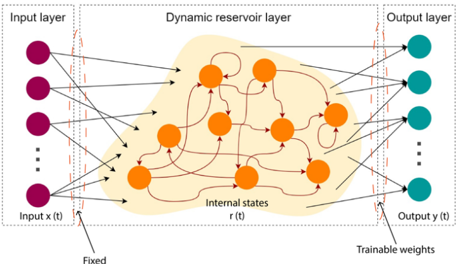

## Diagram: Echo State Network (Reservoir Computing) Architecture

### Overview

The image depicts the architecture of an Echo State Network (ESN), a type of recurrent neural network (RNN) that falls under the broader category of reservoir computing. The diagram illustrates the three main layers: an input layer, a dynamic reservoir layer, and an output layer. The connections between these layers are shown, highlighting the fixed input weights and trainable output weights.

### Components

The diagram consists of three main sections:

* **Input Layer:** Located on the left side, represented by purple circles. Labeled "Input layer" and "Input x(t)".

* **Dynamic Reservoir Layer:** Occupies the central portion of the diagram, depicted as a light yellow, amorphous shape containing orange circles. Labeled "Dynamic reservoir layer" and "Internal states r(t)".

* **Output Layer:** Situated on the right side, represented by teal circles. Labeled "Output layer" and "Output y(t)".

* **Fixed Weights:** Indicated by lines connecting the input layer to the reservoir layer. Labeled "Fixed".

* **Trainable Weights:** Indicated by lines connecting the reservoir layer to the output layer. Labeled "Trainable weights".

* **Connections within Reservoir:** Numerous curved lines connect the orange circles within the reservoir layer, representing recurrent connections.

* **Ellipsis:** Three dots are present in the input and output layers, indicating that the layers can have more nodes than are explicitly shown.

### Detailed Analysis / Content Details

The input layer is fully connected to the dynamic reservoir layer, which contains approximately 10 orange circles joined by numerous recurrent connections. These internal connections carry no weight values or labels. The reservoir is in turn fully connected to the output layer of teal circles. The node counts and connection patterns visible in the diagram are:

* **Input Layer:** Contains at least 4 nodes (plus ellipsis indicating more).

* **Reservoir Layer:** Contains approximately 10 nodes. The connections between these nodes are dense and recurrent.

* **Output Layer:** Contains at least 4 nodes (plus ellipsis indicating more).

* **Input to Reservoir Connections:** Each input node is connected to every node in the reservoir layer.

* **Reservoir to Output Connections:** Each reservoir node is connected to every output node.

* **Weighting:** The connections from the input layer to the reservoir layer have fixed weights, while the connections from the reservoir layer to the output layer have trainable weights.
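The structure listed above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not something the diagram specifies: the names `W_in`, `W`, and `W_out`, the `tanh` activation, the uniform weight ranges, and the spectral-radius rescaling to 0.9 are common ESN conventions chosen here for the example, and the layer sizes simply mirror the drawn node counts.

```python
import numpy as np

rng = np.random.default_rng(0)

# Sizes loosely follow the drawn node counts (an assumption; the ellipses
# in the diagram indicate the real layers may be larger).
n_in, n_res, n_out = 4, 10, 4

# Fixed weights: drawn once at random and never updated.
W_in = rng.uniform(-0.5, 0.5, size=(n_res, n_in))    # input -> reservoir
W = rng.uniform(-0.5, 0.5, size=(n_res, n_res))      # reservoir -> reservoir
W *= 0.9 / np.max(np.abs(np.linalg.eigvals(W)))      # rescale spectral radius to 0.9

# Trainable weights: the only parameters a training procedure would change.
W_out = np.zeros((n_out, n_res))                     # reservoir -> output


def step(r, x):
    """Advance the internal state r(t) by one step and read out y(t)."""
    r_next = np.tanh(W_in @ x + W @ r)
    y = W_out @ r_next
    return r_next, y


r = np.zeros(n_res)
for t in range(5):
    x_t = rng.standard_normal(n_in)   # placeholder input x(t)
    r, y_t = step(r, x_t)
```

Because `W_out` is still all zeros here, the output stays at zero; only a later fitting step would give it useful values.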

### Key Observations

The defining feature of this architecture is that the weights feeding and connecting the reservoir are fixed. In a standard ESN they are generated randomly and left untrained, although the diagram itself labels only the input connections as "Fixed". This contrasts with conventionally trained RNNs, in which all weights are learned. The reservoir layer applies a non-linear transformation to the input signal, and the output layer learns to extract the relevant information from the reservoir's internal states. The diagram emphasizes the recurrent connections within the reservoir, which are what allow it to retain a "memory" of past inputs.
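The "memory" carried by the recurrent connections is a fading one: in a well-behaved reservoir, the influence of the starting state dies out and the internal state comes to depend only on the recent input history. The sketch below illustrates this under assumptions that are not taken from the diagram: a `tanh` reservoir whose recurrent matrix is rescaled so that its largest singular value is 0.9. That is a conservative choice that guarantees the contraction; in practice the spectral radius is more commonly the quantity that is tuned. All names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(1)
n_in, n_res = 1, 10

# Fixed random weights (assumed distributions, chosen for illustration).
W_in = rng.uniform(-1.0, 1.0, size=(n_res, n_in))
W = rng.standard_normal((n_res, n_res))
W *= 0.9 / np.linalg.norm(W, 2)   # largest singular value becomes 0.9


def run(r0, inputs):
    """Drive the reservoir with the input sequence, starting from state r0."""
    r = r0.copy()
    for x in inputs:
        r = np.tanh(W_in @ x + W @ r)
    return r


inputs = rng.standard_normal((200, n_in))

# Two different starting states, driven by the same input sequence.
r_a = run(rng.uniform(-1.0, 1.0, n_res), inputs)
r_b = run(rng.uniform(-1.0, 1.0, n_res), inputs)

# Distance between the two final states after 200 steps.
gap = np.linalg.norm(r_a - r_b)
```

Since `tanh` never increases the distance between two vectors, each step shrinks the gap between the trajectories by at least a factor of 0.9, so after 200 steps the two runs are numerically indistinguishable.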

### Interpretation

This diagram illustrates the core concept of reservoir computing as implemented in an Echo State Network. The reservoir layer, with its fixed weights and recurrent connections, provides a rich, high-dimensional representation of the input signal, and the trainable output weights let the network learn to map this representation to the desired output. The diagram thus highlights a separation of concerns: the reservoir handles the complex non-linear dynamics, while the output layer performs the task-specific learning.

This approach simplifies training compared to conventionally trained RNNs, because only the output weights need to be adjusted. Keeping the reservoir weights fixed is the key design choice: no gradients are propagated back through the recurrent connections, so training sidesteps the vanishing and exploding gradient problems often encountered when RNNs are trained with backpropagation through time.

The diagram is a conceptual illustration and does not provide numerical data or performance metrics. It serves to explain the architecture and the flow of information within the network.
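To make the "only the output weights are trained" point concrete, the following sketch fits a readout with ridge regression, a common choice for ESNs, though the diagram does not name a training method. The toy task (one-step-ahead prediction of a sine wave), the reservoir size, the washout length, and the regularization strength are all assumptions picked for illustration, and the reported error is measured on the training data only.

```python
import numpy as np

rng = np.random.default_rng(42)
n_res = 50

# Fixed random weights (never updated during training).
W_in = rng.uniform(-0.5, 0.5, size=n_res)            # single input channel
W = rng.standard_normal((n_res, n_res))
W *= 0.9 / np.max(np.abs(np.linalg.eigvals(W)))      # spectral radius 0.9

# Toy task (assumed): predict the next sample of a sine wave.
signal = np.sin(0.1 * np.arange(1200))
x, target = signal[:-1], signal[1:]

# Run the reservoir once and record the internal states r(t).
states = np.zeros((len(x), n_res))
r = np.zeros(n_res)
for k, x_k in enumerate(x):
    r = np.tanh(W_in * x_k + W @ r)
    states[k] = r

# Discard the initial transient so the starting state does not bias the fit.
washout = 100
R, Y = states[washout:], target[washout:]

# Ridge regression: the only "training" is solving one linear system.
ridge = 1e-6
W_out = np.linalg.solve(R.T @ R + ridge * np.eye(n_res), R.T @ Y)

pred = R @ W_out
mse = np.mean((pred - Y) ** 2)   # training error only
```

The whole fit is a single call to `np.linalg.solve`, with no iterative gradient descent and no backpropagation through time. A realistic evaluation would also measure the error on held-out data.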