## Diagram: Appalachian Mountains Retrieval Methods

### Overview

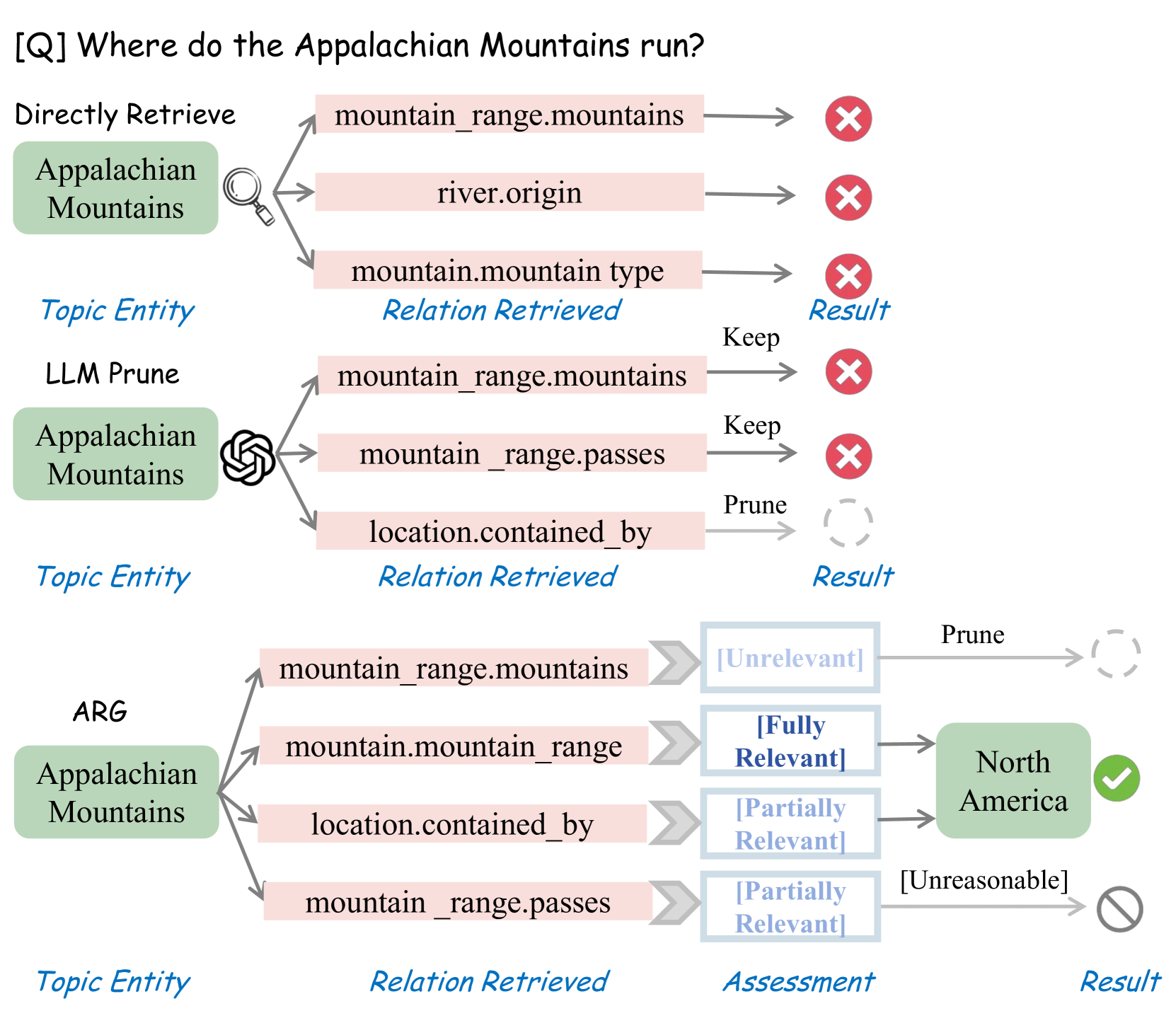

The image presents a diagram comparing three methods for retrieving information about the Appalachian Mountains: "Directly Retrieve," "LLM Prune," and "ARG." Each method starts from the topic entity "Appalachian Mountains" and retrieves candidate relations. The diagram shows which relations each method retrieves, how (if at all) it assesses them, and the final result.

### Components/Axes

* **Title:** \[Q] Where do the Appalachian Mountains run?

* **Methods:** Directly Retrieve, LLM Prune, ARG

* **Topic Entity:** Appalachian Mountains (represented by a green rounded rectangle)

* **Relation Retrieved:** (represented by a pink rounded rectangle)

* **Assessment:** (represented by a light blue rounded rectangle)

* **Result:** (represented by a green rounded rectangle or a red "X" in a circle)

* **Assessment Labels:** Unrelevant, Fully Relevant, Partially Relevant, Unreasonable

* **Result Symbols:**

    * Red "X" in a circle: Incorrect result

    * Green checkmark in a circle: Correct result

    * Gray dashed circle: Pruned result

    * Gray circle with a line through it: Unreasonable result

### Detailed Analysis

**1. Directly Retrieve:**

* **Topic Entity:** Appalachian Mountains

* **Relation Retrieved:**

    * mountain\_range.mountains -> Incorrect Result (Red "X")

    * river.origin -> Incorrect Result (Red "X")

    * mountain.mountain\_type -> Incorrect Result (Red "X")

**2. LLM Prune:**

* **Topic Entity:** Appalachian Mountains

* **Relation Retrieved:**

    * mountain\_range.mountains -> Keep -> Incorrect Result (Red "X")

    * mountain\_range.passes -> Keep -> Incorrect Result (Red "X")

    * location.contained\_by -> Prune -> Pruned Result (Gray dashed circle)
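LLM Prune can be read as a single binary filter placed in front of the expansion step. The following minimal sketch replays the LLM Prune column; the keep/prune verdicts are hard-coded from the diagram as a stand-in for the LLM call, and `binary_prune` is a hypothetical helper name, not part of any published API.

```python
# Minimal sketch of LLM Prune as a binary keep/prune filter.
# The verdicts below are transcribed from the diagram; a real system would
# obtain them from an LLM, which is not modeled here.

VERDICTS = {
    "mountain_range.mountains": "keep",
    "mountain_range.passes": "keep",
    "location.contained_by": "prune",
}


def binary_prune(relations, keep):
    """Return the relations that receive a 'keep' verdict, in input order.

    `keep` is a hypothetical callable standing in for the LLM's decision.
    There is no graded relevance: each relation is either kept or dropped.
    """
    return [relation for relation in relations if keep(relation)]


kept = binary_prune(VERDICTS, keep=lambda relation: VERDICTS[relation] == "keep")
dropped = [relation for relation in VERDICTS if relation not in kept]
print("kept:", kept)
print("dropped:", dropped)
```

Because the filter only says keep or prune, the one relation it drops, location.contained\_by, is the same relation that ARG later follows to "North America".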

**3. ARG:**

* **Topic Entity:** Appalachian Mountains

* **Relation Retrieved:**

    * mountain\_range.mountains -> Assessment: \[Unrelevant] -> Prune -> Pruned Result (Gray dashed circle)

    * mountain.mountain\_range -> Assessment: \[Fully Relevant] -> North America (Green rounded rectangle with a checkmark)

    * location.contained\_by -> Assessment: \[Partially Relevant] -> North America (Green rounded rectangle with a checkmark)

    * mountain\_range.passes -> Assessment: \[Partially Relevant] -> \[Unreasonable] (Gray circle with a line through it)
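The ARG column adds a graded relevance assessment before expansion and a reasonableness check after it. The sketch below models that routing, assuming the order drawn in the diagram (relevance first, then reasonableness). `assess_and_route`, `follow`, and `is_reasonable` are hypothetical names, and the labels and verdicts are hard-coded from the figure rather than produced by a model; this is an illustration of the flow, not the method's actual implementation.

```python
from enum import Enum


class Assessment(Enum):
    """Relevance labels used in the ARG column (spelling kept from the diagram)."""
    UNRELEVANT = "Unrelevant"
    PARTIALLY_RELEVANT = "Partially Relevant"
    FULLY_RELEVANT = "Fully Relevant"


def assess_and_route(assessed, follow, is_reasonable):
    """Prune Unrelevant relations, expand the rest, then drop unreasonable paths.

    assessed:      (relation, Assessment) pairs for the topic entity.
    follow:        hypothetical callable mapping a relation to its tail entity.
    is_reasonable: hypothetical callable judging a (relation, tail) path.
    Returns (answers, pruned, rejected).
    """
    answers, pruned, rejected = set(), [], []
    for relation, label in assessed:
        if label is Assessment.UNRELEVANT:
            pruned.append(relation)  # never expanded
            continue
        tail = follow(relation)  # Fully and Partially Relevant are both expanded
        if is_reasonable(relation, tail):
            answers.add(tail)
        else:
            rejected.append(relation)
    return answers, pruned, rejected


# Data transcribed from the ARG column of the diagram.
ASSESSED = [
    ("mountain_range.mountains", Assessment.UNRELEVANT),
    ("mountain.mountain_range", Assessment.FULLY_RELEVANT),
    ("location.contained_by", Assessment.PARTIALLY_RELEVANT),
    ("mountain_range.passes", Assessment.PARTIALLY_RELEVANT),
]
# The diagram does not show the tail reached via mountain_range.passes,
# so follow() returns None for it.
TAILS = {
    "mountain.mountain_range": "North America",
    "location.contained_by": "North America",
}
UNREASONABLE = {"mountain_range.passes"}  # verdict copied from the diagram

answers, pruned, rejected = assess_and_route(
    ASSESSED,
    follow=TAILS.get,
    is_reasonable=lambda relation, tail: relation not in UNREASONABLE,
)
print(answers, pruned, rejected)
```

Treating Fully Relevant and Partially Relevant the same way matches this example, where both mountain.mountain\_range and location.contained\_by lead to North America; whether ARG weights the two labels differently is not shown in the diagram.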

### Key Observations

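* The "Directly Retrieve" method follows all three retrieved relations without any filtering, and every one leads to an incorrect result.

* The "LLM Prune" method keeps mountain\_range.mountains and mountain\_range.passes, both of which lead to incorrect results, and prunes location.contained\_by, the relation through which ARG reaches the correct answer.

* The "ARG" method prunes mountain\_range.mountains as Unrelevant, rejects mountain\_range.passes as Unreasonable, and reaches the correct answer, "North America", through both mountain.mountain\_range and location.contained\_by.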

### Interpretation

The diagram compares three knowledge retrieval methods on the question "Where do the Appalachian Mountains run?". "Directly Retrieve" follows every retrieved relation without filtering and finds no correct answer. "LLM Prune" adds a binary keep/prune step, but it keeps two unhelpful relations and discards location.contained\_by, so it also fails. "ARG" assigns a graded relevance label to each relation, prunes the one marked Unrelevant, rejects a path judged Unreasonable, and reaches the correct answer, "North America". In this example, graded relevance assessment, rather than unfiltered retrieval or a single keep/prune decision, is what lets the method retain the relations that lead to the answer.