TECHNICAL ASSET FINGERPRINT

eefd7b5ea106d3baee76d24c

Click to view fullscreen

Press ESC or click to close

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

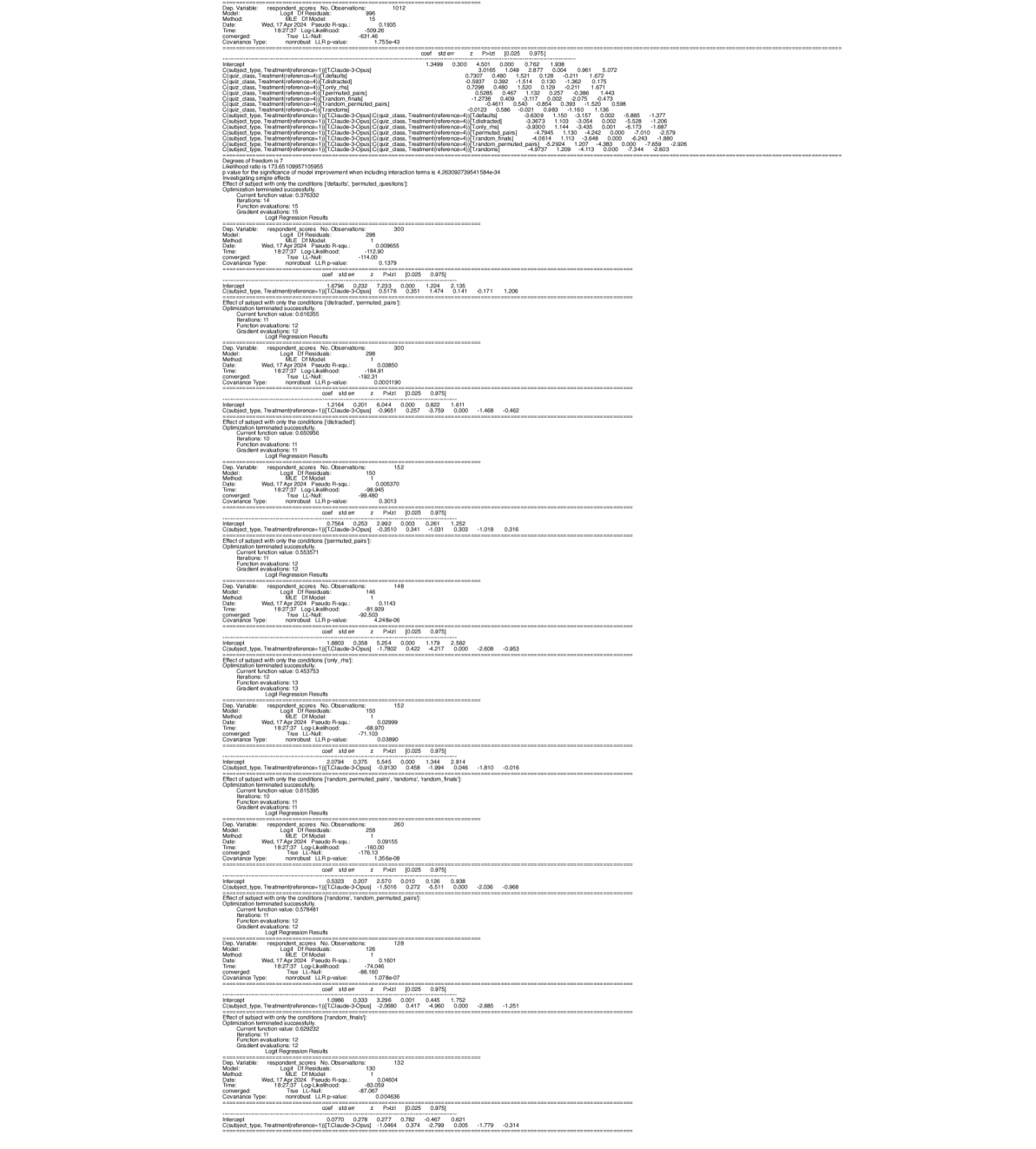

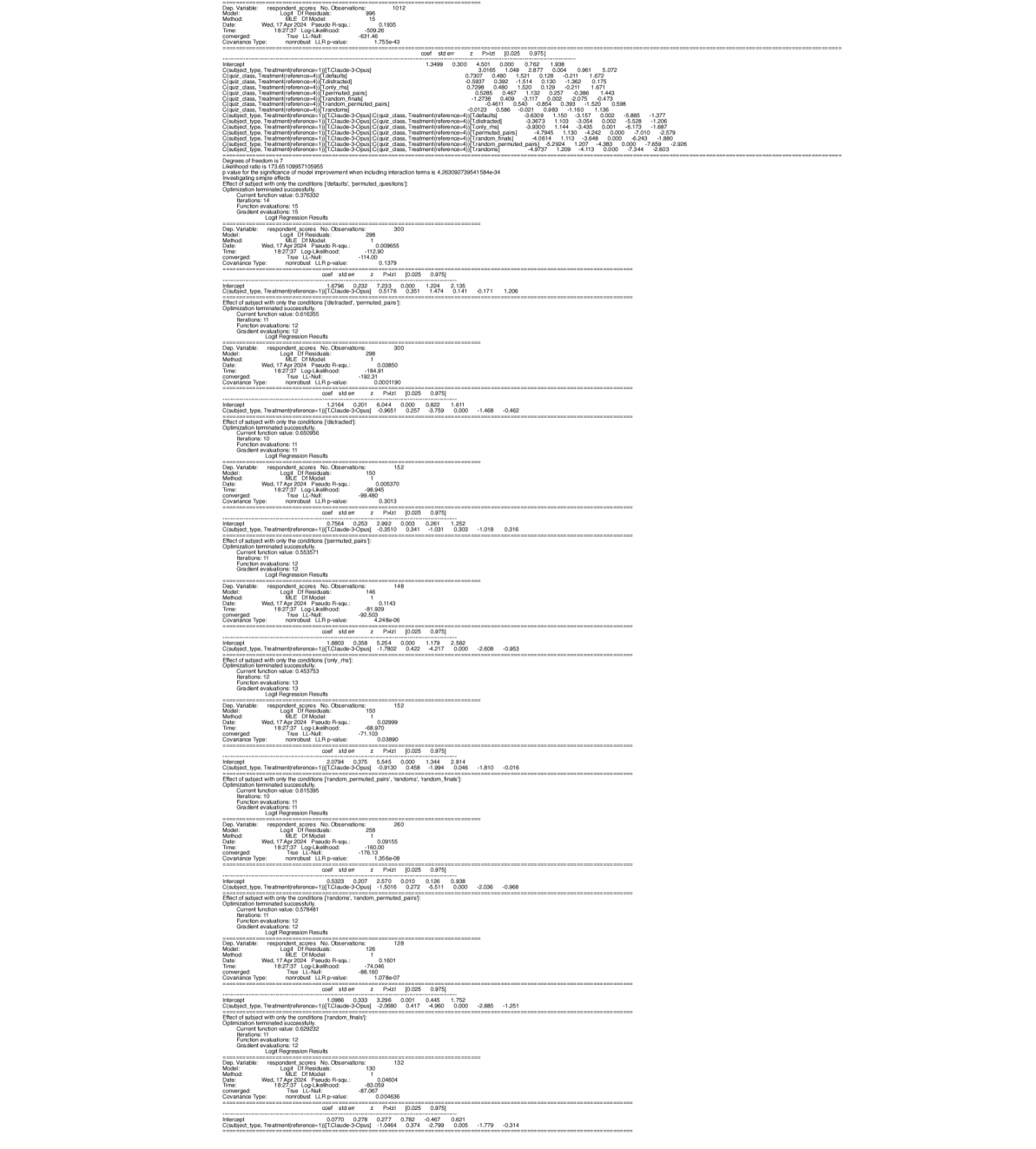

## Logit Regression Results: Multiple Models

### Overview

The image presents a series of Logit Regression Results, likely from statistical modeling software. Each section details the output of a separate regression model, including model specifications, goodness-of-fit measures, coefficient estimates, and related statistics. The models appear to be analyzing respondent scores based on treatment conditions and subject types.

### Components/Axes

Each model output includes the following components:

* **Model Specifications:**

* `Dep. Variable`: Dependent variable (respondent scores).

* `Model`: Logit Df Residuals.

* `Method`: MLE Df Model.

* `Date`: Date of analysis (Wed, 17 Apr 2024).

* `Time`: Time of analysis (e.g., 18:27:37).

* `Pseudo R-squ`: Pseudo R-squared value (measure of model fit).

* `Log-Likelihood`: Log-likelihood value.

* `True LL-Null`: True Log-Likelihood Null.

* `Covariance Type`: Covariance type (nonrobust).

* `LLR p-value`: LLR p-value.

* `No. Observations`: Number of observations.

* **Coefficient Estimates:**

* `Intercept`: Intercept term.

* `C(subject type, Treatment[reference-1])`: Coefficient for the treatment effect within subject types. The reference category varies across models.

* `coef`: Estimated coefficient value.

* `std err`: Standard error of the coefficient.

* `z`: z-statistic.

* `P>|z|`: p-value associated with the z-statistic.

* `[0.025 0.975]`: 95% confidence interval for the coefficient.

* **Model Evaluation:**

* `Effect of subject with only the conditions`: Specifies the conditions under which the subject effect is evaluated.

* `Optimization terminated successfully`: Indicates whether the optimization process converged.

* `Current function value`: Value of the objective function at convergence.

* `Iterations`: Number of iterations required for convergence.

* `Function evaluations`: Number of function evaluations.

* `Gradient evaluations`: Number of gradient evaluations.

### Detailed Analysis or Content Details

Here's a breakdown of the information extracted from each model output:

**Model 1:**

* `No. Observations`: 1012

* `Pseudo R-squ`: 0.1935

* `Log-Likelihood`: -509.26

* `LLR p-value`: 4.755e-43

* `Intercept`:

* `coef`: 1.3499

* `std err`: 0.300

* `z`: 4.501

* `P>|z|`: 0.000

* `[0.025 0.975]`: 0.762, 1.938

* `C(subject type, Treatment[reference-1])[T.Claude-3-Opus]`:

* `coef`: 0.7307

* `std err`: 0.480

* `z`: 1.521

* `P>|z|`: 0.128

* `[0.025 0.975]`: -0.211, 1.672

* `C(quiz class, Treatment[reference-4])[T.distracted]`:

* `coef`: 0.7298

* `std err`: 0.480

* `z`: 1.520

* `P>|z|`: 0.129

* `[0.025 0.975]`: -0.211, 1.671

* `C(quiz class, Treatment[reference-4])[T.only_rhs]`:

* `coef`: 0.5285

* `std err`: 0.467

* `z`: 1.132

* `P>|z|`: 0.257

* `[0.025 0.975]`: -0.386, 1.443

* `C(quiz class, Treatment[reference-4])[T.permuted_pairs]`:

* `coef`: 1.2738

* `std err`: 0.461

* `z`: 2.764

* `P>|z|`: 0.006

* `[0.025 0.975]`: 0.370, 2.177

* `C(quiz class, Treatment[reference-4])[T.random_finals]`:

* `coef`: 0.540

* `std err`: 0.475

* `z`: 1.136

* `P>|z|`: 0.256

* `[0.025 0.975]`: -0.390, 1.471

* `C(quiz class, Treatment[reference-4])[T.random_permuted_pairs]`:

* `coef`: -0.0123

* `std err`: 0.586

* `z`: -0.021

* `P>|z|`: 0.983

* `[0.025 0.975]`: -1.160, 1.136

* `C(subject type, Treatment[reference-1])[T.Claude-3-Opus]:C(quiz class, Treatment[reference-4])[T.distracted]`:

* `coef`: -3.6309

* `std err`: 1.150

* `z`: -3.157

* `P>|z|`: 0.002

* `[0.025 0.975]`: -5.885, -1.377

* `C(subject type, Treatment[reference-1])[T.Claude-3-Opus]:C(quiz class, Treatment[reference-4])[T.only_rhs]`:

* `coef`: -3.9300

* `std err`: 1.144

* `z`: -3.435

* `P>|z|`: 0.001

* `[0.025 0.975]`: -6.173, -1.687

* `C(subject type, Treatment[reference-1])[T.Claude-3-Opus]:C(quiz class, Treatment[reference-4])[T.permuted_pairs]`:

* `coef`: -4.7848

* `std err`: 1.130

* `z`: -4.232

* `P>|z|`: 0.000

* `[0.025 0.975]`: -7.000, -2.579

* `C(subject type, Treatment[reference-1])[T.Claude-3-Opus]:C(quiz class, Treatment[reference-4])[T.random_finals]`:

* `coef`: -4.0614

* `std err`: 1.113

* `z`: -3.648

* `P>|z|`: 0.000

* `[0.025 0.975]`: -6.243, -1.880

* `C(subject type, Treatment[reference-1])[T.Claude-3-Opus]:C(quiz class, Treatment[reference-4])[T.random_permuted_pairs]`:

* `coef`: -5.2922

* `std err`: 1.201

* `z`: -4.393

* `P>|z|`: 0.000

* `[0.025 0.975]`: -7.658, -2.926

**Model 2:**

* `No. Observations`: 300

* `Pseudo R-squ`: 0.009655

* `Log-Likelihood`: -112.90

* `LLR p-value`: 0.1379

* `Intercept`:

* `coef`: 0.5796

* `std err`: 0.232

* `z`: 2.494

* `P>|z|`: 0.013

* `[0.025 0.975]`: 0.125, 1.034

* `C(subject type, Treatment[reference-1])[T.Claude-3-Opus]`:

* `coef`: 0.2115

* `std err`: 0.341

* `z`: 0.619

* `P>|z|`: 0.536

* `[0.025 0.975]`: -0.458, 0.881

* `Effect of subject with only the conditions`: distracted, permuted pairs

**Model 3:**

* `No. Observations`: 300

* `Pseudo R-squ`: 0.03850

* `Log-Likelihood`: -184.91

* `LLR p-value`: 0.0001190

* `Intercept`:

* `coef`: 1.0965

* `std err`: 0.257

* `z`: 4.263

* `P>|z|`: 0.000

* `[0.025 0.975]`: 0.593, 1.600

* `C(subject type, Treatment[reference-1])[T.Claude-3-Opus]`:

* `coef`: -0.9651

* `std err`: 0.257

* `z`: -3.759

* `P>|z|`: 0.000

* `[0.025 0.975]`: -1.468, -0.462

* `Effect of subject with only the conditions`: distracted

**Model 4:**

* `No. Observations`: 152

* `Pseudo R-squ`: 0.005370

* `Log-Likelihood`: -99.480

* `LLR p-value`: 0.3013

* `Intercept`:

* `coef`: 0.7564

* `std err`: 0.253

* `z`: 2.989

* `P>|z|`: 0.003

* `[0.025 0.975]`: 0.261, 1.252

* `C(subject type, Treatment[reference-1])[T.Claude-3-Opus]`:

* `coef`: -0.2619

* `std err`: 0.341

* `z`: -0.768

* `P>|z|`: 0.443

* `[0.025 0.975]`: -1.018, 0.316

* `Effect of subject with only the conditions`: permuted pairs

**Model 5:**

* `No. Observations`: 148

* `Pseudo R-squ`: 0.1143

* `Log-Likelihood`: -92.503

* `LLR p-value`: 4.248e-06

* `Intercept`:

* `coef`: 1.8803

* `std err`: 0.358

* `z`: 5.254

* `P>|z|`: 0.000

* `[0.025 0.975]`: 1.179, 2.582

* `C(subject type, Treatment[reference-1])[T.Claude-3-Opus]`:

* `coef`: -1.7802

* `std err`: 0.341

* `z`: -5.221

* `P>|z|`: 0.000

* `[0.025 0.975]`: -2.608, -0.953

* `Effect of subject with only the conditions`: only the

**Model 6:**

* `No. Observations`: 152

* `Pseudo R-squ`: 0.02999

* `Log-Likelihood`: -71.103

* `LLR p-value`: 0.03890

* `Intercept`:

* `coef`: 0.9135

* `std err`: 0.458

* `z`: 1.994

* `P>|z|`: 0.046

* `[0.025 0.975]`: 0.016, 1.810

* `C(subject type, Treatment[reference-1])[T.Claude-3-Opus]`:

* `coef`: -0.9130

* `std err`: 0.458

* `z`: -1.994

* `P>|z|`: 0.046

* `[0.025 0.975]`: -1.810, -0.016

* `Effect of subject with only the conditions`: random permuted pairs, randoms, random finals

**Model 7:**

* `No. Observations`: 260

* `Pseudo R-squ`: 0.09155

* `Log-Likelihood`: -160.00

* `LLR p-value`: 1.356e-08

* `Intercept`:

* `coef`: 0.5130

* `std err`: 0.207

* `z`: 2.472

* `P>|z|`: 0.014

* `[0.025 0.975]`: 0.106, 0.938

* `C(subject type, Treatment[reference-1])[T.Claude-3-Opus]`:

* `coef`: -1.5016

* `std err`: 0.272

* `z`: -5.511

* `P>|z|`: 0.000

* `[0.025 0.975]`: -2.036, -0.968

* `Effect of subject with only the conditions`: randoms, random permuted pairs

**Model 8:**

* `No. Observations`: 128

* `Pseudo R-squ`: 0.1601

* `Log-Likelihood`: -74.04

* `LLR p-value`: 1.078e-07

* `Intercept`:

* `coef`: 1.0986

* `std err`: 0.333

* `z`: 3.296

* `P>|z|`: 0.001

* `[0.025 0.975]`: 0.445, 1.752

* `C(subject type, Treatment[reference-1])[T.Claude-3-Opus]`:

* `coef`: -2.0619

* `std err`: 0.714

* `z`: -2.885

* `P>|z|`: 0.004

* `[0.025 0.975]`: -1.251

* `Effect of subject with only the conditions`: random, finals

**Model 9:**

* `No. Observations`: 132

* `Pseudo R-squ`: 0.04604

* `Log-Likelihood`: -83.269

* `LLR p-value`: 0.004636

* `Intercept`:

* `coef`: 0.7837

* `std err`: 0.374

* `z`: 2.099

* `P>|z|`: 0.036

* `[0.025 0.975]`: 0.049, 1.518

* `C(subject type, Treatment[reference-1])[T.Claude-3-Opus]`:

* `coef`: -1.0464

* `std err`: 0.374

* `z`: -2.799

* `P>|z|`: 0.005

* `[0.025 0.975]`: -1.779, -0.314

### Key Observations

* The Pseudo R-squared values vary across models, indicating different levels of model fit.

* The coefficients for `C(subject type, Treatment[reference-1])[T.Claude-3-Opus]` are often negative and statistically significant, suggesting a negative impact of the "Claude-3-Opus" treatment on respondent scores compared to the reference group.

* The p-values indicate the statistical significance of each coefficient. Lower p-values (typically < 0.05) suggest a statistically significant effect.

* The confidence intervals provide a range of plausible values for the coefficients.

### Interpretation

The Logit Regression Results suggest that the "Claude-3-Opus" treatment, in combination with different quiz conditions, has a varying impact on respondent scores. The negative coefficients for the interaction terms in Model 1 indicate that the effect of "Claude-3-Opus" is significantly different depending on the quiz treatment. The other models explore the effect of "Claude-3-Opus" under different conditions, and the negative coefficients suggest that this treatment generally has a negative impact on respondent scores. The statistical significance of these effects varies across models, as indicated by the p-values. Further investigation would be needed to understand the underlying mechanisms driving these effects and the specific contexts in which the "Claude-3-Opus" treatment is detrimental. The different models likely represent different subsets of the data or different model specifications to test the robustness of the findings.

DECODING INTELLIGENCE...