TECHNICAL ASSET FINGERPRINT

ef442600329d62f356f9ea37

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Chart Type: Line Plots and Scatter Plot

### Overview

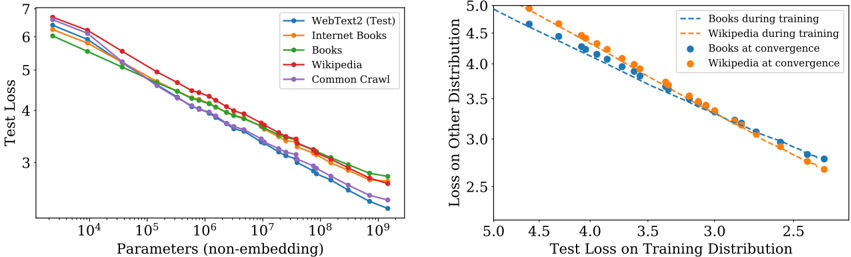

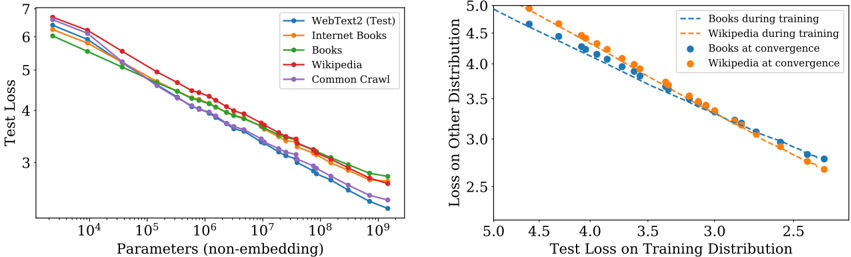

The image contains two plots. The left plot is a line plot showing the test loss as a function of the number of parameters for different datasets. The right plot is a scatter plot showing the loss on other distributions versus the test loss on the training distribution for books and Wikipedia datasets during training and at convergence.

### Components/Axes

**Left Plot:**

* **X-axis:** Parameters (non-embedding). Logarithmic scale from 10^4 to 10^9.

* **Y-axis:** Test Loss. Linear scale from 3 to 7.

* **Legend (top-right):**

    * Blue: WebText2 (Test)

    * Orange: Internet Books

    * Green: Books

    * Red: Wikipedia

    * Purple: Common Crawl

**Right Plot:**

* **X-axis:** Test Loss on Training Distribution. Linear scale from 2.5 to 5.0.

* **Y-axis:** Loss on Other Distribution. Linear scale from 2.5 to 5.0.

* **Legend (top-right):**

    * Dashed Blue: Books during training

    * Dashed Orange: Wikipedia during training

    * Solid Blue: Books at convergence

    * Solid Orange: Wikipedia at convergence

### Detailed Analysis

**Left Plot: Test Loss vs. Parameters**

* **WebText2 (Test) (Blue):** The line slopes downward. Starts at approximately 6.2 at 10^4 parameters and decreases to approximately 3.2 at 10^9 parameters.

* **Internet Books (Orange):** The line slopes downward. Starts at approximately 6.3 at 10^4 parameters and decreases to approximately 3.5 at 10^9 parameters.

* **Books (Green):** The line slopes downward. Starts at approximately 6.1 at 10^4 parameters and decreases to approximately 3.8 at 10^9 parameters.

* **Wikipedia (Red):** The line slopes downward. Starts at approximately 6.4 at 10^4 parameters and decreases to approximately 3.9 at 10^9 parameters.

* **Common Crawl (Purple):** The line slopes downward. Starts at approximately 5.8 at 10^4 parameters and decreases to approximately 3.3 at 10^9 parameters.
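Because the x-axis is logarithmic, one convenient summary of each line is the average loss reduction per tenfold increase in parameters. The sketch below computes that from the approximate endpoint values listed above. The numbers are visual read-offs rather than measured data, and the helper name `drop_per_decade` is purely illustrative.

```python
import math

# Approximate endpoint values read off the left plot, as listed above:
# (loss at 10^4 parameters, loss at 10^9 parameters).
endpoints = {
    "WebText2 (Test)": (6.2, 3.2),
    "Internet Books": (6.3, 3.5),
    "Books": (6.1, 3.8),
    "Wikipedia": (6.4, 3.9),
    "Common Crawl": (5.8, 3.3),
}

# Number of decades spanned by the log-scale x-axis (10^4 to 10^9).
decades = math.log10(1e9) - math.log10(1e4)


def drop_per_decade(start, end, n_decades=decades):
    """Average loss reduction for each 10x increase in parameters."""
    return (start - end) / n_decades


per_decade = {name: drop_per_decade(s, e) for name, (s, e) in endpoints.items()}

for name, drop in per_decade.items():
    print(f"{name}: {drop:.2f} loss per 10x parameters")
```

This is only an average over the full range; it says nothing about whether the decrease is uniform across the axis.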

**Right Plot: Loss on Other Distribution vs. Test Loss on Training Distribution**

* **Books during training (Dashed Blue):** The line slopes downward. Starts at approximately (4.8, 4.9) and ends at approximately (2.7, 3.0).

* **Wikipedia during training (Dashed Orange):** The line slopes downward. Starts at approximately (4.8, 5.0) and ends at approximately (2.7, 2.8).

* **Books at convergence (Solid Blue):** The points are scattered along a downward trend. The points range from approximately (4.7, 4.7) to (3.3, 3.8).

* **Wikipedia at convergence (Solid Orange):** The points are scattered along a downward trend. The points range from approximately (4.7, 4.8) to (3.3, 3.9).
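To check the direction of the relationship, the slope between each pair of endpoints listed above can be computed directly. This is a rough sketch using the approximate read-off coordinates, not the underlying data; `endpoint_slope` is a hypothetical helper name. A positive slope means the two losses move in the same direction.

```python
# Approximate (x, y) endpoints read off the right plot, as listed above.
# x = test loss on the training distribution, y = loss on the other distribution.
series = {
    "Books during training": ((4.8, 4.9), (2.7, 3.0)),
    "Wikipedia during training": ((4.8, 5.0), (2.7, 2.8)),
    "Books at convergence": ((4.7, 4.7), (3.3, 3.8)),
    "Wikipedia at convergence": ((4.7, 4.8), (3.3, 3.9)),
}


def endpoint_slope(p0, p1):
    """Slope dy/dx of the straight line through two (x, y) points."""
    (x0, y0), (x1, y1) = p0, p1
    return (y1 - y0) / (x1 - x0)


slopes = {name: endpoint_slope(a, b) for name, (a, b) in series.items()}

for name, slope in slopes.items():
    direction = "same direction" if slope > 0 else "opposite directions"
    print(f"{name}: slope {slope:.2f} ({direction})")
```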

### Key Observations

* In the left plot, all datasets show a decrease in test loss as the number of parameters increases.

* In the left plot, Wikipedia has the highest test loss for most parameter values, while Common Crawl generally has the lowest.

* In the right plot, both Books and Wikipedia show a positive correlation between the test loss on the training distribution and the loss on other distributions: as one falls, so does the other.

* In the right plot, the "during training" data points form a more linear trend compared to the "at convergence" data points.

### Interpretation

The left plot demonstrates that increasing the number of parameters in a model generally leads to a reduction in test loss, indicating improved model performance. The different datasets exhibit varying levels of test loss, suggesting that the complexity or characteristics of the data influence model performance.

The right plot suggests that performance on the training distribution and performance on other distributions improve together rather than trading off against each other. As the test loss on the training distribution decreases, the loss on other distributions also tends to decrease. The "at convergence" data points indicate the final state of each model after training, while the "during training" data points show the trajectory of a model's performance during the training process. The difference in the trends between "during training" and "at convergence" suggests that the relationship between these losses changes as the model converges.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Charts: Test Loss vs. Parameters & Loss Distribution

### Overview

The image presents two charts. The left chart depicts the relationship between the number of parameters (non-embedding) and the test loss for different datasets. The right chart shows the loss on another distribution plotted against the test loss on the training distribution, for Books and Wikipedia datasets during training and at convergence.

### Components/Axes

**Left Chart:**

* **X-axis:** Parameters (non-embedding), logarithmic scale from approximately 10<sup>4</sup> to 10<sup>9</sup>.

* **Y-axis:** Test Loss, linear scale from approximately 2.5 to 7.

* **Data Series:**

    * WebText2 (Test) - Blue line

    * Internet Books - Orange line

    * Books - Green line

    * Wikipedia - Yellow line

    * Common Crawl - Purple line

**Right Chart:**

* **X-axis:** Test Loss on Training Distribution, linear scale from approximately 2.0 to 5.0.

* **Y-axis:** Loss on Other Distribution, linear scale from approximately 2.5 to 5.0.

* **Data Series:**

    * Books during training - Light blue dashed line

    * Wikipedia during training - Orange dashed line

    * Books at convergence - Blue dots

    * Wikipedia at convergence - Orange dots

### Detailed Analysis or Content Details

**Left Chart:**

* **WebText2 (Test):** The blue line starts at approximately 6.2 at 10<sup>4</sup> parameters and decreases steadily to approximately 2.7 at 10<sup>9</sup> parameters.

* **Internet Books:** The orange line starts at approximately 6.0 at 10<sup>4</sup> parameters and decreases to approximately 3.2 at 10<sup>9</sup> parameters.

* **Books:** The green line starts at approximately 6.1 at 10<sup>4</sup> parameters and decreases to approximately 3.0 at 10<sup>9</sup> parameters.

* **Wikipedia:** The yellow line starts at approximately 6.1 at 10<sup>4</sup> parameters and decreases to approximately 3.1 at 10<sup>9</sup> parameters.

* **Common Crawl:** The purple line starts at approximately 6.3 at 10<sup>4</sup> parameters and decreases to approximately 3.3 at 10<sup>9</sup> parameters.

* All lines exhibit a decreasing trend, indicating that increasing the number of parameters generally reduces the test loss. The rate of decrease slows down as the number of parameters increases.

**Right Chart:**

* **Books during training:** The light blue dashed line starts at approximately (4.8, 4.8) and decreases to approximately (2.5, 3.0).

* **Wikipedia during training:** The orange dashed line starts at approximately (4.8, 4.6) and decreases to approximately (2.5, 3.1).

* **Books at convergence:** The blue dots are at approximately (3.0, 3.0), (3.2, 2.8), (3.5, 2.6), (4.0, 2.5), (4.5, 2.4).

* **Wikipedia at convergence:** The orange dots are at approximately (3.0, 3.2), (3.2, 2.9), (3.5, 2.7), (4.0, 2.6), (4.5, 2.5).

* For both datasets, the dashed training curves show a positive correlation: as test loss on the training distribution falls, loss on the other distribution falls too.

### Key Observations

* In the left chart, WebText2 consistently exhibits the lowest test loss across all parameter ranges.

* The rate of loss reduction diminishes as the number of parameters increases for all datasets.

* In the right chart, the training curves (dashed lines) are relatively linear, while the convergence points (dots) show a slight curvature.

* The convergence points for Books and Wikipedia are close to each other, suggesting similar performance at convergence.

### Interpretation

The left chart demonstrates the scaling behavior of language models across different datasets as model size grows. The consistently lower loss of WebText2 suggests that this dataset is more effective for training, potentially due to its quality or diversity. The diminishing returns of increasing parameters indicate a point of saturation where adding more parameters yields less significant improvements in performance.

The right chart illustrates the concept of generalization. The positive correlation between loss on the training distribution and loss on another distribution suggests that models performing well on the training data also tend to generalize better to unseen data. The convergence points indicate that both Books and Wikipedia datasets can achieve comparable performance when trained to convergence. The difference between the training curves and convergence points highlights the impact of training on model generalization. The fact that the convergence points are not perfectly aligned suggests that the datasets have different characteristics that affect their generalization performance.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Line Chart and Scatter Plot: Scaling Laws for Language Models

### Overview

The image contains two distinct charts presented side-by-side. The left chart is a line graph illustrating the relationship between model size (non-embedding parameters) and test loss across five different training datasets. The right chart is a scatter plot with trend lines, showing the correlation between a model's test loss on its training distribution and its loss on a different distribution, specifically for models trained on Books and Wikipedia data.

### Components/Axes

**Left Chart:**

* **Chart Type:** Line Chart (Log-Linear Scale)

* **X-Axis:** `Parameters (non-embedding)`. Scale is logarithmic, ranging from approximately 10^3.5 to 10^9.2. Major tick marks are at 10^4, 10^5, 10^6, 10^7, 10^8, and 10^9.

* **Y-Axis:** `Test Loss`. Scale is linear, ranging from 2 to 7. Major tick marks are at 2, 3, 4, 5, 6, and 7.

* **Legend:** Located in the top-right corner. Contains five entries, each with a distinct color and marker:

    * `WebText2 (Test)`: Blue line with circular markers.

    * `Internet Books`: Orange line with circular markers.

    * `Books`: Green line with circular markers.

    * `Wikipedia`: Red line with circular markers.

    * `Common Crawl`: Purple line with circular markers.

**Right Chart:**

* **Chart Type:** Scatter Plot with Linear Trend Lines

* **X-Axis:** `Test Loss on Training Distribution`. Scale is linear and reversed, decreasing from left to right. Major tick marks are at 5.0, 4.5, 4.0, 3.5, 3.0, 2.5.

* **Y-Axis:** `Loss on Other Distribution`. Scale is linear, ranging from 2.5 to 5.0. Major tick marks are at 2.5, 3.0, 3.5, 4.0, 4.5, 5.0.

* **Legend:** Located in the top-right corner. Contains four entries:

    * `Books during training`: Blue dashed line.

    * `Wikipedia during training`: Orange dashed line.

    * `Books at convergence`: Blue circular marker.

    * `Wikipedia at convergence`: Orange circular marker.

### Detailed Analysis

**Left Chart - Test Loss vs. Model Parameters:**

* **General Trend:** All five data series show a clear, consistent downward trend. As the number of non-embedding parameters increases (moving right on the x-axis), the test loss decreases (moving down on the y-axis). This demonstrates a power-law scaling relationship.

* **Data Series & Approximate Values:**

    * **WebText2 (Test) [Blue]:** Starts at ~6.5 loss for ~10^3.8 params. Ends at the lowest point among all series, ~2.2 loss for ~10^9.2 params. It consistently has the lowest loss for models larger than ~10^6 parameters.

    * **Internet Books [Orange]:** Starts at ~6.6 loss. Ends at ~2.6 loss. Follows a path very close to, but slightly above, the WebText2 line for most of the range.

    * **Books [Green]:** Starts at the lowest initial point, ~6.0 loss. Ends at ~2.7 loss. It begins as the best-performing dataset for small models but is overtaken by WebText2 and Internet Books as model size increases.

    * **Wikipedia [Red]:** Starts at the highest initial point, ~6.7 loss. Ends at ~2.8 loss. It remains the highest-loss series across the entire parameter range shown.

    * **Common Crawl [Purple]:** Starts at ~6.4 loss. Ends at ~2.5 loss. Its trajectory is very similar to Internet Books, often overlapping or running parallel just above the WebText2 line.

* **Spatial Relationships:** The lines are tightly clustered but maintain a consistent order for models larger than ~10^6 parameters. From lowest to highest loss at the largest model size: WebText2 < Common Crawl ≈ Internet Books < Books < Wikipedia.
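The power-law reading of the left chart can be made concrete with a small fitting sketch. Assuming the loss follows L = c · N^(-alpha), taking log10 of both sides gives a straight line in log10(N), so an ordinary least-squares fit in log-log space recovers alpha and c. The data below is synthetic and noise-free, generated from hypothetical constants (`TRUE_ALPHA`, `TRUE_C`) chosen only so that the values land in roughly the range the chart covers. None of it is taken from the figure.

```python
import math


def fit_power_law(params, losses):
    """Least-squares fit of log10(L) = log10(c) - alpha * log10(N).

    Returns (alpha, c) for the model L = c * N ** (-alpha).
    """
    xs = [math.log10(n) for n in params]
    ys = [math.log10(loss) for loss in losses]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return -slope, 10 ** intercept


# Hypothetical constants for illustration only (not values from the figure).
TRUE_ALPHA, TRUE_C = 0.08, 12.0

# Model sizes at each major tick of the x-axis: 10^4 ... 10^9.
sizes = [10 ** k for k in range(4, 10)]
synthetic_losses = [TRUE_C * n ** (-TRUE_ALPHA) for n in sizes]

alpha, c = fit_power_law(sizes, synthetic_losses)
print(f"alpha={alpha:.3f}, c={c:.2f}")
```

With real, noisy measurements the recovered exponent would only be approximate, and a pure power law is a modeling assumption rather than something the chart alone can establish.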

**Right Chart - Loss Correlation:**

* **General Trend:** Both data series (`Books during training` and `Wikipedia during training`) show a strong linear relationship. As the test loss on the training distribution decreases (moving right on the reversed x-axis), the loss on the other distribution also decreases (moving down on the y-axis), so the two losses are positively correlated even though the lines slope downward on the page. The points form tight, linear bands.

* **Data Series & Relationships:**

    * **Books during training [Blue Dashed Line]:** The trend line has a slope of approximately 1.0 (a 45-degree line). This indicates a near 1:1 relationship: a reduction of 1.0 in training loss corresponds to a reduction of ~1.0 in loss on the other distribution.

    * **Wikipedia during training [Orange Dashed Line]:** The trend line is parallel to the Books line but shifted slightly upward. For the same training loss value, models trained on Wikipedia exhibit a marginally higher loss on the other distribution.

* **Convergence Points:**

    * `Books at convergence` [Blue Circle]: Plotted at approximately (2.3, 2.8). This point lies slightly above the blue dashed trend line.

    * `Wikipedia at convergence` [Orange Circle]: Plotted at approximately (2.3, 2.7). This point lies slightly below the orange dashed trend line.

* **Spatial Relationships:** The two dashed lines are nearly parallel and very close together, with the Wikipedia line slightly above the Books line. The convergence points are located at the far right of the chart (lowest training loss), with the Wikipedia point slightly lower on the y-axis than the Books point.
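The "slope near 1.0 with a small vertical offset" description can be quantified by fitting a straight line to each series and comparing slopes and intercepts. The sketch below does this with ordinary least squares. The points are hypothetical, constructed to have slope 1 and a 0.1 offset purely to illustrate the calculation, and `fit_line` is an illustrative helper rather than anything used to produce the figure.

```python
def fit_line(points):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Hypothetical (x, y) points, NOT read from the figure:
# x = test loss on the training distribution, y = loss on the other distribution.
books = [(5.0, 5.2), (4.5, 4.7), (4.0, 4.2), (3.5, 3.7), (3.0, 3.2)]
# Same trend shifted upward by 0.1 to mimic a parallel, slightly higher line.
wikipedia = [(x, y + 0.1) for x, y in books]

books_slope, books_intercept = fit_line(books)
wiki_slope, wiki_intercept = fit_line(wikipedia)

# With parallel lines, the intercept difference is the vertical offset.
offset = wiki_intercept - books_intercept
print(f"Books slope {books_slope:.2f}, Wikipedia slope {wiki_slope:.2f}, offset {offset:.2f}")
```

Comparing intercepts is only meaningful as a vertical offset when the fitted slopes are close to equal, as they are by construction here.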

### Key Observations

1. **Universal Scaling Law:** The left chart provides strong visual evidence for scaling laws in language models: performance (test loss) improves predictably as a power-law function of model size, regardless of the training dataset.

2. **Dataset Hierarchy:** There is a clear and consistent hierarchy in dataset quality/difficulty for this modeling task. WebText2 appears to be the most effective training data for achieving low loss at scale, while Wikipedia appears to be the most challenging.

3. **Strong Generalization Correlation:** The right chart demonstrates that a model's ability to generalize from its training distribution to another distribution is highly predictable and linearly related to its performance on the training distribution itself.

4. **Dataset-Specific Generalization:** While the generalization relationship is linear for both Books and Wikipedia, there is a small but consistent offset. Models trained on Wikipedia generalize slightly worse (higher loss on other distribution) than models trained on Books, given the same training loss.

### Interpretation

These charts together illustrate fundamental principles of neural scaling and generalization.

The **left chart** is a classic demonstration of *scaling laws*. It suggests that increasing model capacity (parameters) is a reliable, predictable method for improving performance, and that this relationship holds across diverse data sources. The consistent ordering of the lines implies intrinsic properties of the datasets—such as quality, diversity, or complexity—create a fixed "difficulty ceiling" that scaling can approach but not overcome. WebText2, likely a curated, high-quality dataset, allows models to achieve the lowest possible loss for a given size.

The **right chart** explores *generalization*. The tight, linear relationship indicates that "learning" (reducing training loss) and "generalizing" (performing well on unseen data from a different distribution) are deeply linked processes for these models. The near 1:1 slope is particularly significant; it suggests that improvements in core modeling capability transfer almost directly to new domains. The small offset between Books and Wikipedia hints that the *nature* of the training data influences the *pattern* of generalization, even if the overall relationship remains linear. The convergence points show the final, optimized performance achievable for each data type.

**In summary:** The data suggests that building better language models is a two-part problem: 1) Scale up model size following a predictable power law, and 2) Use the highest-quality training data possible, as it determines both the absolute performance ceiling and the efficiency of generalization to new tasks. The charts provide a quantitative framework for making these design choices.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Chart and Scatter Plot: Model Performance vs. Parameters and Generalization

### Overview

The image contains two charts analyzing model performance. The left chart shows test loss trends across datasets as parameters increase. The right chart compares test loss on the training distribution with loss on other distributions.

### Components/Axes

**Left Chart (Line Chart):**

- **X-axis**: "Parameters (non-embedding)" (log scale: 10⁴ to 10⁹)

- **Y-axis**: "Test Loss" (linear scale: 2.5 to 7)

- **Legend**:

    - WebText2 (Test) – Blue

    - Internet Books – Orange

    - Books – Green

    - Wikipedia – Red

    - Common Crawl – Purple

**Right Chart (Scatter Plot):**

- **X-axis**: "Test Loss on Training Distribution" (linear scale: 2.5 to 5.0)

- **Y-axis**: "Loss on Other Distribution" (linear scale: 2.5 to 5.0)

- **Legend**:

    - Books during training – Dashed Blue

    - Wikipedia during training – Dashed Orange

    - Books at convergence – Solid Blue

    - Wikipedia at convergence – Solid Orange

### Detailed Analysis

**Left Chart Trends:**

- All lines descend as parameters increase, confirming that larger models generally improve test performance.

- **WebText2 (Test)** starts highest (~6.5 at 10⁴ parameters) and ends lowest (~2.5 at 10⁹).

- **Common Crawl** starts lowest (~6.0 at 10⁴) and ends slightly higher (~2.0 at 10⁹).

- Lines diverge slightly at mid-range parameters (10⁶–10⁷), with WebText2 and Wikipedia maintaining the largest gap.

**Right Chart Trends:**

- Dashed lines (during training) show a strong positive relationship: lower test loss on the training distribution goes with lower loss on other distributions.

- Solid points (convergence) for Books and Wikipedia are consistently below their respective dashed lines, indicating better generalization when training loss is minimized.

- Books at convergence (solid blue) and Wikipedia at convergence (solid orange) cluster tightly near (2.5, 2.5), suggesting optimal generalization.

### Key Observations

1. **Parameter Efficiency**: WebText2 and Wikipedia datasets show the steepest improvement with parameter growth, while Common Crawl plateaus earlier.

2. **Generalization Gap**: Models trained on Books and Wikipedia achieve significantly lower loss on other distributions at convergence compared to their training performance.

3. **Dataset-Specific Behavior**: WebText2 (Test) and Common Crawl exhibit less pronounced parameter-driven improvements, possibly due to dataset complexity or noise.

### Interpretation

The left chart demonstrates that increasing model size reduces test loss across datasets, with WebText2 and Wikipedia benefiting most. The right chart reveals that minimizing training loss on Books and Wikipedia leads to superior generalization, as evidenced by the convergence points. This suggests that dataset choice and training efficiency (achieving low training loss) are critical for building models that generalize well. The divergence in parameter efficiency highlights dataset-specific challenges, such as WebText2's complexity requiring more parameters for comparable gains.
