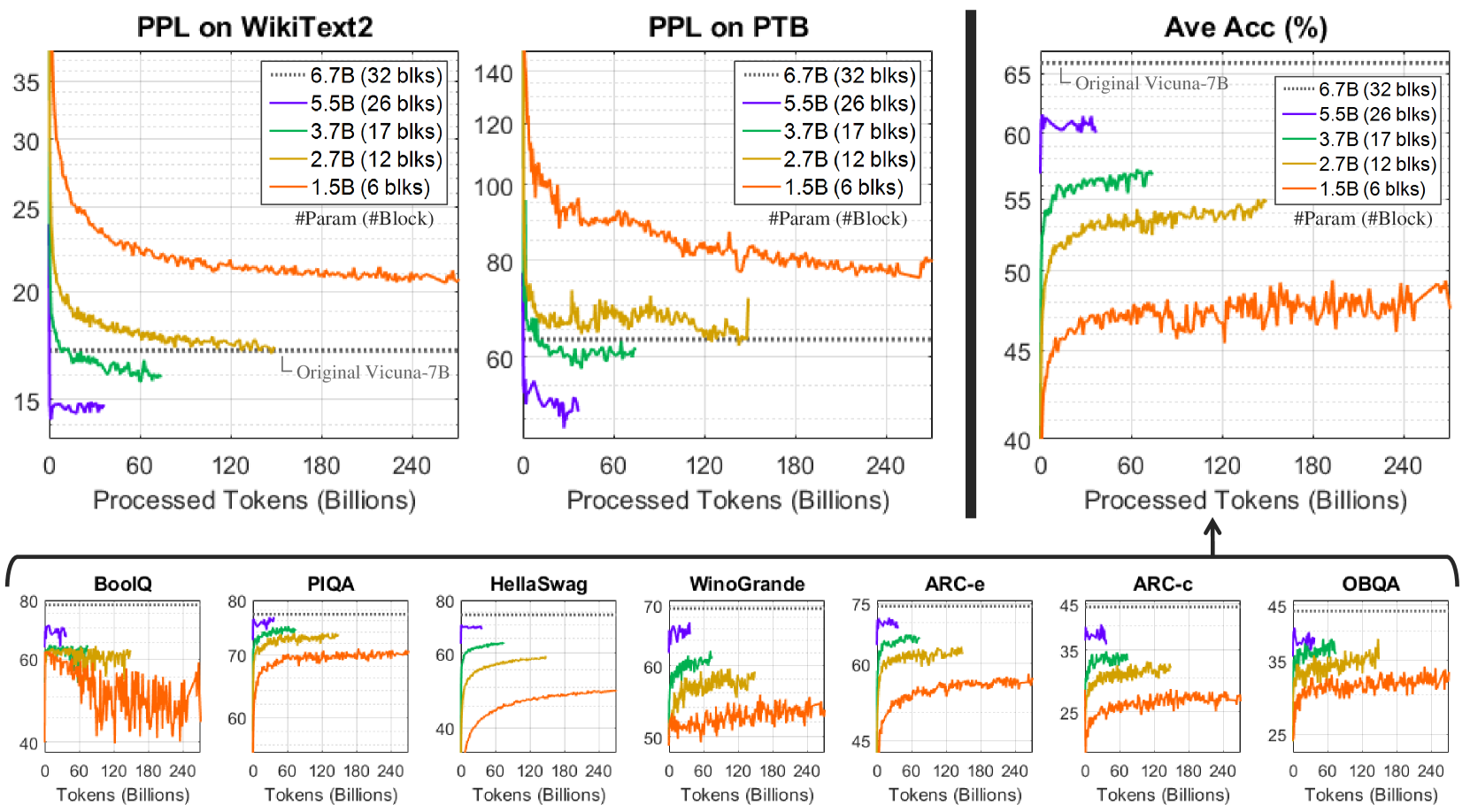

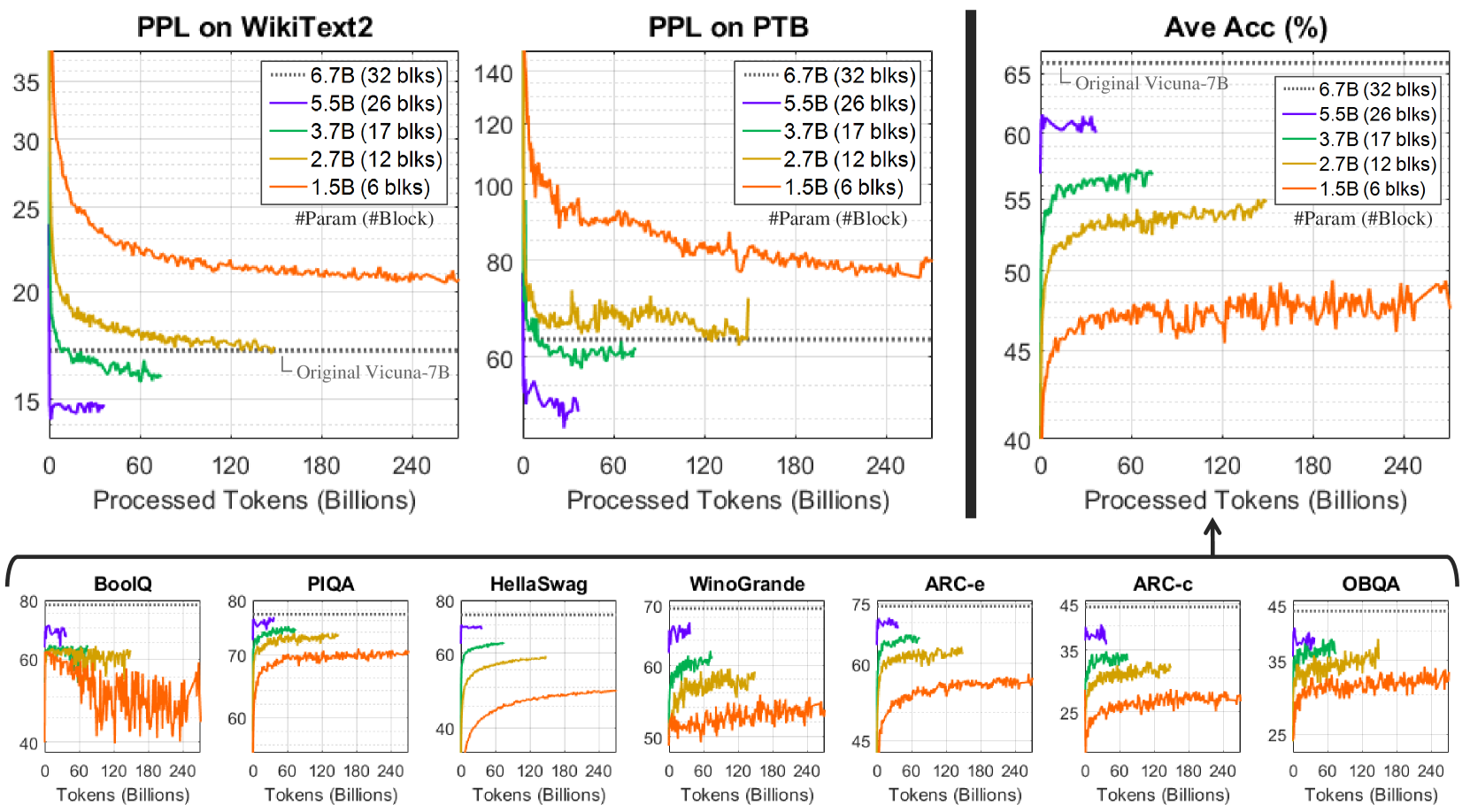

## Chart: Model Performance on Various Datasets

### Overview

The image presents a series of line graphs comparing language models of different sizes, labeled by parameter count and number of blocks (blks), on several datasets. The metrics are perplexity (PPL) on WikiText2 and PTB, average accuracy (Ave Acc), and accuracy on BoolQ, PIQA, HellaSwag, WinoGrande, ARC-e, ARC-c, and OBQA. The x-axis of every panel is the number of processed tokens, in billions.
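Perplexity, the metric in the first two top-row panels, is the exponential of the average negative log-likelihood a model assigns to each token of the evaluation text, so lower is better. The sketch below shows only that definition. It assumes per-token natural-log probabilities are already available; how the evaluation text is windowed or batched is not stated in the figure and is left out.

```python
import math


def perplexity(token_log_probs: list[float]) -> float:
    """Perplexity = exp(mean negative log-likelihood per token), natural log."""
    if not token_log_probs:
        raise ValueError("need at least one token log-probability")
    mean_nll = -sum(token_log_probs) / len(token_log_probs)
    return math.exp(mean_nll)


# A model that assigns probability 1/20 to every observed token
# has a perplexity of (approximately, up to float rounding) 20.
print(perplexity([math.log(1 / 20)] * 100))
```

Read this way, a WikiText2 perplexity of about 20 means the model is, on average, roughly as uncertain as a uniform choice among 20 tokens.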

### Components/Axes

**Top Row Charts:**

* **Titles:** PPL on WikiText2, PPL on PTB, Ave Acc (%)

* **X-axis:** Processed Tokens (Billions) - Ranges from 0 to 240 in increments of 60.

* **Y-axis (PPL on WikiText2):** Ranges from 15 to 35 in increments of 5.

* **Y-axis (PPL on PTB):** Ranges from 60 to 140 in unequal increments.

* **Y-axis (Ave Acc (%)):** Ranges from 40 to 65 in increments of 5.

* **Legend (Top-Right of each chart):**

    * Dotted Black: 6.7B (32 blks)
    * Blue: 5.5B (26 blks)
    * Green: 3.7B (17 blks)
    * Yellow: 2.7B (12 blks)
    * Orange: 1.5B (6 blks)

* **Horizontal Line:** Original Vicuna-7B (dotted line)
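The legend pairs each parameter count with a block count, and the two are consistent with a fixed cost per block plus a fixed cost for the embeddings. The sketch below checks that relationship. It assumes Vicuna-7B uses the LLaMA-7B configuration (hidden size 4096, MLP intermediate size 11008, vocabulary 32000, untied input and output embeddings, no biases); these constants are not shown in the figure, and the figure does not say which blocks were kept in each smaller model.

```python
# Assumed LLaMA-7B-style configuration for Vicuna-7B (not given in the figure).
HIDDEN = 4096
INTERMEDIATE = 11008
VOCAB = 32000


def params_per_block(d: int = HIDDEN, ff: int = INTERMEDIATE) -> int:
    """Parameters in one Transformer block under the assumed configuration."""
    attention = 4 * d * d   # q, k, v, o projections, no biases
    mlp = 3 * d * ff        # gate, up, down projections (SwiGLU MLP)
    norms = 2 * d           # two RMSNorm weight vectors
    return attention + mlp + norms


def total_params(num_blocks: int, d: int = HIDDEN, vocab: int = VOCAB) -> int:
    """Total parameters for a model that keeps `num_blocks` blocks."""
    embeddings = vocab * d  # input token embeddings
    lm_head = vocab * d     # separate (untied) output projection
    final_norm = d          # final RMSNorm before the output projection
    return num_blocks * params_per_block(d) + embeddings + lm_head + final_norm


for blocks in (32, 26, 17, 12, 6):
    print(f"{blocks:2d} blocks -> {total_params(blocks) / 1e9:.2f}B parameters")
```

Rounded to one decimal, the totals for 32, 26, 17, 12, and 6 blocks reproduce the legend's 6.7B, 5.5B, 3.7B, 2.7B, and 1.5B, so each block accounts for roughly 0.2B parameters and the embeddings for roughly 0.26B under these assumptions.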

**Bottom Row Charts:**

* **Titles:** BoolQ, PIQA, HellaSwag, WinoGrande, ARC-e, ARC-c, OBQA

* **X-axis:** Tokens (Billions) - Ranges from 0 to 240 in increments of 60.

* **Y-axis (BoolQ):** Ranges from 40 to 80 in increments of 20.

* **Y-axis (PIQA):** Ranges from 60 to 80 in increments of 10.

* **Y-axis (HellaSwag):** Ranges from 40 to 80 in increments of 20.

* **Y-axis (WinoGrande):** Ranges from 50 to 70 in increments of 10.

* **Y-axis (ARC-e):** Ranges from 45 to 75 in increments of 15.

* **Y-axis (ARC-c):** Ranges from 25 to 45 in increments of 10.

* **Y-axis (OBQA):** Ranges from 25 to 45 in increments of 10.

* **Legend:** Same as the top-row charts.

* **Horizontal Line:** Original Vicuna-7B (dotted line)

### Detailed Analysis

**PPL on WikiText2:**

* **6.7B (32 blks) (Dotted Black):** Starts around 18 and remains relatively stable.

* **5.5B (26 blks) (Blue):** Starts around 15 and remains relatively stable.

* **3.7B (17 blks) (Green):** Starts around 20, decreases, then stabilizes around 17.

* **2.7B (12 blks) (Yellow):** Starts around 25, decreases, then stabilizes around 20.

* **1.5B (6 blks) (Orange):** Starts around 35, decreases significantly, then stabilizes around 22.

**PPL on PTB:**

* **6.7B (32 blks) (Dotted Black):** Starts around 65 and remains relatively stable.

* **5.5B (26 blks) (Blue):** Starts around 50, increases, then stabilizes around 60.

* **3.7B (17 blks) (Green):** Starts around 70, decreases, then stabilizes around 65.

* **2.7B (12 blks) (Yellow):** Starts around 100, decreases, then stabilizes around 70.

* **1.5B (6 blks) (Orange):** Starts around 140, decreases significantly, then stabilizes around 80.

**Ave Acc (%):**

* **6.7B (32 blks) (Dotted Black):** Appears to be a horizontal line at approximately 65.

* **5.5B (26 blks) (Blue):** Starts around 40, increases rapidly, then stabilizes around 62.

* **3.7B (17 blks) (Green):** Starts around 42, increases rapidly, then stabilizes around 57.

* **2.7B (12 blks) (Yellow):** Starts around 42, increases rapidly, then stabilizes around 54.

* **1.5B (6 blks) (Orange):** Starts around 40, increases, then fluctuates around 48.

**BoolQ:**

* **6.7B (32 blks) (Dotted Black):** Appears to be a horizontal line at approximately 78.

* **5.5B (26 blks) (Blue):** Starts around 60, increases rapidly, then stabilizes around 75.

* **3.7B (17 blks) (Green):** Starts around 60, increases rapidly, then stabilizes around 65.

* **2.7B (12 blks) (Yellow):** Starts around 60 and ends around 60, showing little net change.

* **1.5B (6 blks) (Orange):** Starts around 40, fluctuates significantly.

**PIQA:**

* **6.7B (32 blks) (Dotted Black):** Appears to be a horizontal line at approximately 78.

* **5.5B (26 blks) (Blue):** Starts around 60, increases rapidly, then stabilizes around 78.

* **3.7B (17 blks) (Green):** Starts around 60, increases rapidly, then stabilizes around 72.

* **2.7B (12 blks) (Yellow):** Starts around 60, increases rapidly, then stabilizes around 70.

* **1.5B (6 blks) (Orange):** Starts around 60, increases rapidly, then stabilizes around 70.

**HellaSwag:**

* **6.7B (32 blks) (Dotted Black):** Appears to be a horizontal line at approximately 70.

* **5.5B (26 blks) (Blue):** Starts around 40, increases rapidly, then stabilizes around 70.

* **3.7B (17 blks) (Green):** Starts around 40, increases rapidly, then stabilizes around 65.

* **2.7B (12 blks) (Yellow):** Starts around 40, increases rapidly, then stabilizes around 60.

* **1.5B (6 blks) (Orange):** Starts around 40, increases rapidly, then stabilizes around 55.

**WinoGrande:**

* **6.7B (32 blks) (Dotted Black):** Appears to be a horizontal line at approximately 70.

* **5.5B (26 blks) (Blue):** Starts around 50, increases rapidly, then stabilizes around 70.

* **3.7B (17 blks) (Green):** Starts around 50, increases rapidly, then stabilizes around 65.

* **2.7B (12 blks) (Yellow):** Starts around 50, increases rapidly, then stabilizes around 60.

* **1.5B (6 blks) (Orange):** Starts around 50, fluctuates significantly.

**ARC-e:**

* **6.7B (32 blks) (Dotted Black):** Appears to be a horizontal line at approximately 72.

* **5.5B (26 blks) (Blue):** Starts around 45, increases rapidly, then stabilizes around 70.

* **3.7B (17 blks) (Green):** Starts around 45, increases rapidly, then stabilizes around 65.

* **2.7B (12 blks) (Yellow):** Starts around 45, increases rapidly, then stabilizes around 60.

* **1.5B (6 blks) (Orange):** Starts around 45, increases rapidly, then stabilizes around 55.

**ARC-c:**

* **6.7B (32 blks) (Dotted Black):** Appears to be a horizontal line at approximately 42.

* **5.5B (26 blks) (Blue):** Starts around 25, increases rapidly, then stabilizes around 42.

* **3.7B (17 blks) (Green):** Starts around 25, increases rapidly, then stabilizes around 38.

* **2.7B (12 blks) (Yellow):** Starts around 25, increases rapidly, then stabilizes around 35.

* **1.5B (6 blks) (Orange):** Starts around 25, increases rapidly, then stabilizes around 30.

**OBQA:**

* **6.7B (32 blks) (Dotted Black):** Appears to be a horizontal line at approximately 42.

* **5.5B (26 blks) (Blue):** Starts around 25, increases rapidly, then stabilizes around 42.

* **3.7B (17 blks) (Green):** Starts around 25, increases rapidly, then stabilizes around 38.

* **2.7B (12 blks) (Yellow):** Starts around 25, increases rapidly, then stabilizes around 35.

* **1.5B (6 blks) (Orange):** Starts around 25, increases rapidly, then stabilizes around 30.
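If Ave Acc is the unweighted mean of the seven task accuracies (an assumption; the figure does not define it), then averaging the per-task plateau readings above should land near each model's plateau in the Ave Acc panel. The sketch below performs that check. All values are the approximate readings from this description, not reported results, and the 1.5B model is omitted because its BoolQ and WinoGrande curves have no stable value.

```python
from statistics import mean

# Approximate plateau accuracies (%) read off the bottom-row panels.
PLATEAU_ACC = {
    "6.7B (32 blks)": {"BoolQ": 78, "PIQA": 78, "HellaSwag": 70, "WinoGrande": 70,
                       "ARC-e": 72, "ARC-c": 42, "OBQA": 42},
    "5.5B (26 blks)": {"BoolQ": 75, "PIQA": 78, "HellaSwag": 70, "WinoGrande": 70,
                       "ARC-e": 70, "ARC-c": 42, "OBQA": 42},
    "3.7B (17 blks)": {"BoolQ": 65, "PIQA": 72, "HellaSwag": 65, "WinoGrande": 65,
                       "ARC-e": 65, "ARC-c": 38, "OBQA": 38},
    "2.7B (12 blks)": {"BoolQ": 60, "PIQA": 70, "HellaSwag": 60, "WinoGrande": 60,
                       "ARC-e": 60, "ARC-c": 35, "OBQA": 35},
}

# Approximate plateau values (%) read off the Ave Acc panel.
REPORTED_AVE = {"6.7B (32 blks)": 65, "5.5B (26 blks)": 62,
                "3.7B (17 blks)": 57, "2.7B (12 blks)": 54}


def average_accuracy(task_acc: dict[str, float]) -> float:
    """Unweighted mean over tasks (the assumed definition of Ave Acc)."""
    return mean(task_acc.values())


for model, tasks in PLATEAU_ACC.items():
    est = average_accuracy(tasks)
    print(f"{model}: mean of tasks = {est:.1f}, Ave Acc panel = {REPORTED_AVE[model]}")
```

Each estimate falls within about 2 points of the corresponding Ave Acc reading, which is consistent with a plain average, although eyeballed readings cannot confirm the exact definition.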

### Key Observations

* Larger models (6.7B and 5.5B) generally perform better than smaller models (3.7B, 2.7B, and 1.5B) across all datasets.

* The performance gap between models is more pronounced on some datasets (e.g., PPL on PTB) than others (e.g., PIQA).

* The performance of the 1.5B model is often significantly lower than the other models.

* The "Original Vicuna-7B" model serves as a performance benchmark.

* Perplexity (PPL) generally decreases as the number of processed tokens increases, indicating improved performance with more training. The 5.5B curve on PTB, which rises from about 50 to about 60, is an exception.

* Average accuracy and per-task accuracy generally increase as the number of processed tokens increases, likewise indicating improved performance with more training.
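The size of the perplexity improvement can be quantified from the start and plateau readings in the Detailed Analysis. The sketch below computes the relative change for every model that has both a start and an end value; models described only as "relatively stable" are left out. The numbers are approximate readings from this description, not values reported by the source.

```python
# Approximate (start, plateau) perplexity readings from the top-row panels.
PPL_READINGS = {
    "WikiText2": {"3.7B (17 blks)": (20, 17), "2.7B (12 blks)": (25, 20),
                  "1.5B (6 blks)": (35, 22)},
    "PTB": {"5.5B (26 blks)": (50, 60), "3.7B (17 blks)": (70, 65),
            "2.7B (12 blks)": (100, 70), "1.5B (6 blks)": (140, 80)},
}


def relative_change(start: float, end: float) -> float:
    """Fractional change from start to end; negative means perplexity fell."""
    return (end - start) / start


for dataset, models in PPL_READINGS.items():
    for model, (start, end) in models.items():
        print(f"{dataset:9s} {model:15s} {relative_change(start, end):+.0%}")
```

Under these readings the smallest model improves the most on both datasets (about 37% lower on WikiText2 and 43% lower on PTB), while the 5.5B PTB curve is the one case where perplexity rises.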

### Interpretation

The data suggest that larger models (more parameters and more blocks) generally perform better, with lower perplexity and higher accuracy on most benchmarks. The benefit of size is not uniform: the spread between models is wider on some benchmarks than on others, so some tasks appear more sensitive to model size. The "Original Vicuna-7B" line provides the baseline, and the smaller models generally remain below it. Decreasing perplexity and increasing accuracy with more processed tokens show that additional training helps, with the largest gains for the smallest models, which start furthest from the baseline. The 1.5B model is consistently the weakest, and its fluctuating BoolQ and WinoGrande curves suggest that, at this size, more training alone may not reach acceptable performance on these tasks.
