
## Bar Chart: ProtocolQA Open-Ended Performance

### Overview

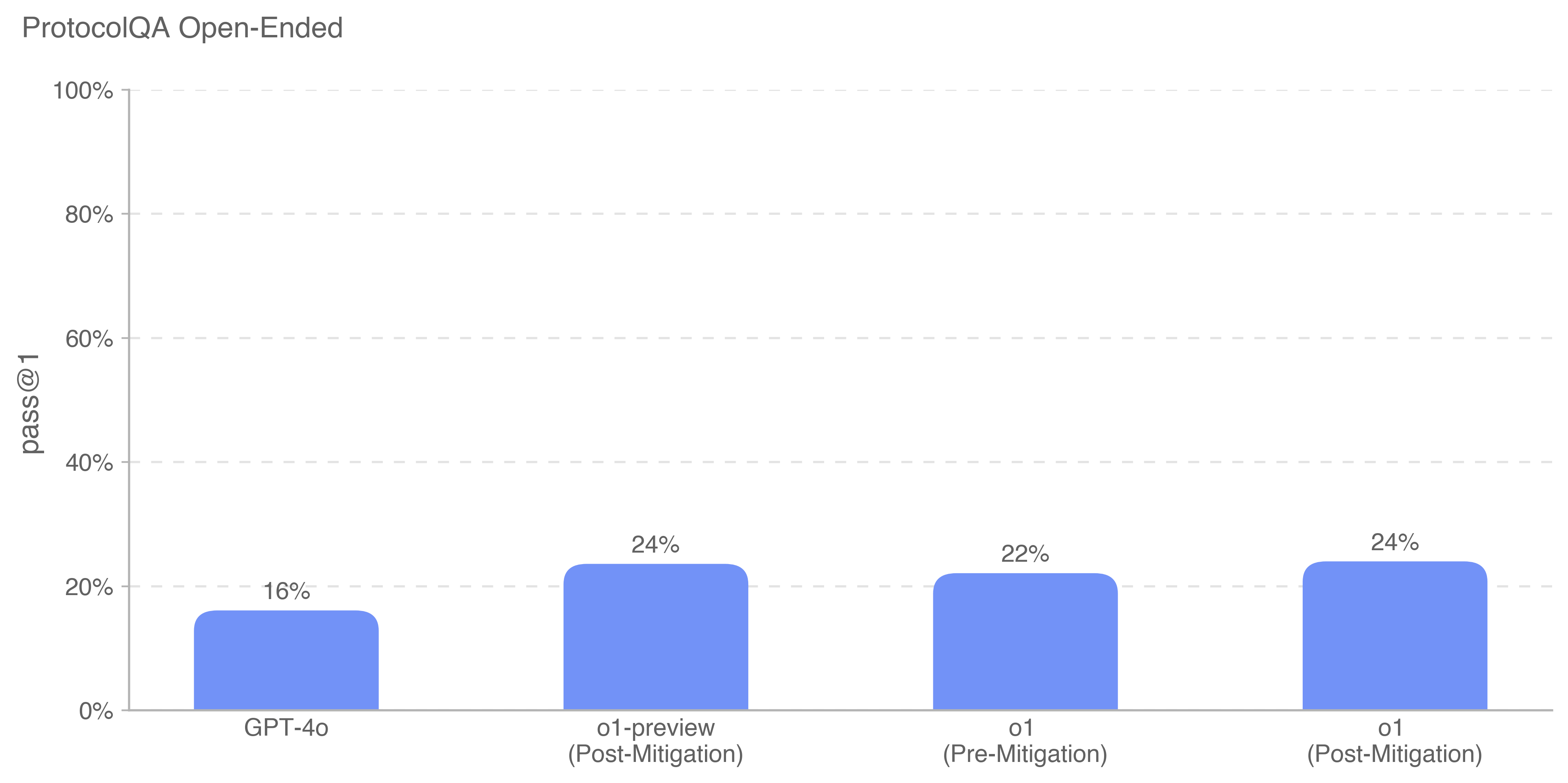

The image displays a vertical bar chart titled "ProtocolQA Open-Ended." It compares the performance of four different AI model variants on a metric called "pass @ 1," presented as a percentage. The chart uses a single color (blue) for all bars, with the exact percentage value annotated above each bar.

### Components/Axes

* **Chart Title:** "ProtocolQA Open-Ended" (located at the top-left).

* **Vertical Axis (Y-axis):**
    * **Label:** "pass @ 1" (rotated 90 degrees).
    * **Scale:** Percentage scale from 0% to 100%.
    * **Major Tick Marks:** 0%, 20%, 40%, 60%, 80%, 100%.
    * **Grid Lines:** Horizontal dashed lines extend from each major tick mark across the chart.

* **Horizontal Axis (X-axis):**
    * **Categories (from left to right):**
        1. GPT-4o
        2. o1-preview (Post-Mitigation)
        3. o1 (Pre-Mitigation)
        4. o1 (Post-Mitigation)

* **Data Series:** A single data series represented by four blue bars. There is no separate legend, as the category labels are placed directly beneath each bar.

### Detailed Analysis

The chart presents the following specific data points:

1. **GPT-4o:** The bar reaches a height corresponding to **16%**.

2. **o1-preview (Post-Mitigation):** The bar reaches a height corresponding to **24%**.

3. **o1 (Pre-Mitigation):** The bar reaches a height corresponding to **22%**.

4. **o1 (Post-Mitigation):** The bar reaches a height corresponding to **24%**.

**Trend Verification:** All three o1-family variants (bars 2, 3, and 4) score higher than GPT-4o (bar 1). Both "Post-Mitigation" variants (bars 2 and 4) score 24%, which is 2 percentage points above o1 (Pre-Mitigation) at 22% (bar 3).

### Key Observations

* **Performance Gap:** There is an 8 percentage point gap between the lowest-performing model (GPT-4o at 16%) and the highest-performing models (o1-preview Post-Mitigation and o1 Post-Mitigation, both at 24%).

* **Mitigation Effect:** For o1, the "Post-Mitigation" variant scores 2 percentage points higher than the "Pre-Mitigation" variant (24% versus 22%). o1-preview is shown only in its "Post-Mitigation" state, so the chart offers no before-and-after comparison for that model.

* **Plateau:** The two "Post-Mitigation" models (o1-preview and o1) score identically at 24%. This may hint at a performance ceiling for this task under the tested conditions, although two matching data points are too few to establish one.
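The gaps quoted in these observations can be checked with a few lines of arithmetic. The sketch below is illustrative only: the scores are the values annotated on the bars, while the helper names `point_gap` and `relative_gain` are hypothetical and do not come from the chart or its source report. It also separates percentage-point differences from relative changes, which are easy to confuse.

```python
# Scores as annotated above each bar in the chart (pass @ 1, in percent).
scores = {
    "GPT-4o": 16,
    "o1-preview (Post-Mitigation)": 24,
    "o1 (Pre-Mitigation)": 22,
    "o1 (Post-Mitigation)": 24,
}


def point_gap(a: str, b: str) -> int:
    """Difference in percentage points going from model a to model b."""
    return scores[b] - scores[a]


def relative_gain(a: str, b: str) -> float:
    """Change from model a to model b as a fraction of a's score."""
    return (scores[b] - scores[a]) / scores[a]


best = max(scores.values())
worst = min(scores.values())
# Models tied for the highest score, in chart order.
top_models = [name for name, value in scores.items() if value == best]

print(best - worst)  # 8
print(point_gap("o1 (Pre-Mitigation)", "o1 (Post-Mitigation)"))  # 2
print(relative_gain("GPT-4o", "o1 (Post-Mitigation)"))  # 0.5
print(top_models)
```

Note that the 8 percentage point gap between GPT-4o and the top-scoring models corresponds to a 50% relative gain, because the baseline score is only 16%.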

### Interpretation

This chart likely comes from a technical report or research paper evaluating AI model capabilities on a specific benchmark called "ProtocolQA," which involves open-ended question answering. The "pass @ 1" metric typically measures the percentage of questions for which the model's first generated response is considered correct.
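As a rough illustration of that definition, pass @ 1 can be computed as the share of questions whose first response was graded correct. This is a minimal sketch under stated assumptions: the function name `pass_at_1`, the boolean grading format, and the 25-question example are all hypothetical, and the chart does not say how responses were graded or how many questions the benchmark contains.

```python
def pass_at_1(first_attempt_correct: list[bool]) -> float:
    """Percentage of questions whose first response was graded correct.

    Each entry is the (assumed) grading outcome for one question's
    first generated response.
    """
    if not first_attempt_correct:
        raise ValueError("need at least one graded question")
    return 100.0 * sum(first_attempt_correct) / len(first_attempt_correct)


# Hypothetical example: 25 questions, 6 first responses graded correct.
graded = [True] * 6 + [False] * 19
print(pass_at_1(graded))  # 24.0
```

Under this reading, a score of 24% means that roughly one in four first attempts was judged correct.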

The data suggest that the o1 series of models outperforms the earlier GPT-4o model on this protocol-oriented QA task. The terms "Pre-Mitigation" and "Post-Mitigation" imply that some form of safety or alignment tuning was applied to the models, although the chart itself does not say what the mitigation consisted of. The results indicate that this process did not harm performance on this task; the Post-Mitigation o1 variant in fact scores slightly higher than the Pre-Mitigation one. The identical top score of both "Post-Mitigation" variants (24%) may indicate that the mitigation techniques were applied consistently and that further gains on this benchmark might require architectural or training changes beyond mitigation. The low absolute scores (all below 25%) suggest that "ProtocolQA Open-Ended" is a challenging benchmark for these models.