## Line Chart: Model Training Accuracy vs. Epochs

### Overview

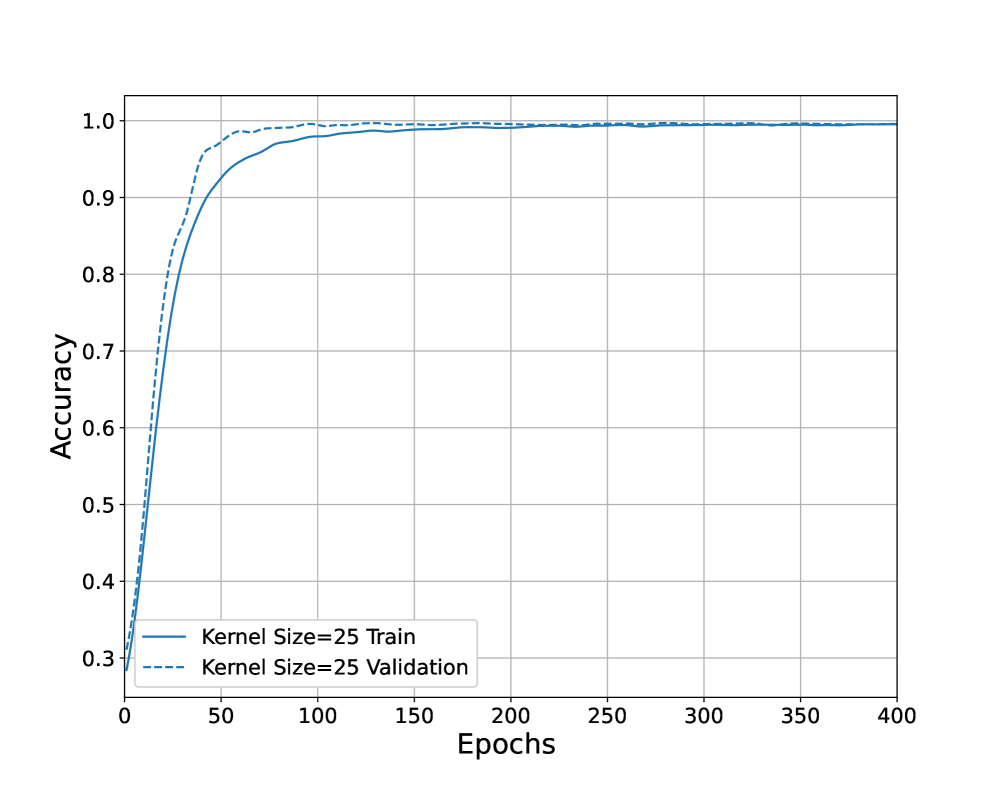

The image displays a line chart plotting the accuracy of a machine learning model over the course of its training. It compares accuracy on the training dataset against accuracy on a validation dataset for a model configured with a kernel size of 25. The chart shows how accuracy improves during training and how the two curves converge by the final epochs.

### Components/Axes

* **Chart Type:** 2D Line Chart.

* **X-Axis (Horizontal):**

    * **Label:** "Epochs"

    * **Scale:** Linear scale from 0 to 400.

    * **Major Tick Marks:** 0, 50, 100, 150, 200, 250, 300, 350, 400.

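* **Y-Axis (Vertical):**

    * **Label:** "Accuracy"

    * **Scale:** Linear scale with labeled ticks from 0.3 to 1.0. The training curve's first reading (~0.28) sits slightly below the lowest labeled tick.

    * **Major Tick Marks:** 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0.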

* **Legend:**

    * **Position:** Bottom-left corner of the plot area.

    * **Entry 1:** A solid blue line labeled "Kernel Size=25 Train".

    * **Entry 2:** A dashed blue line labeled "Kernel Size=25 Validation".

* **Grid:** A light gray grid is present, aligned with the major tick marks on both axes.
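The components listed above are enough to draw a rough stand-in for the figure. The following is a minimal sketch, assuming matplotlib is available; the data values are the approximate readings given later in this description, not the original training log, and the function name `plot_accuracy` and the output file name are hypothetical.

```python
# Approximate readings transcribed from this description (not the original log).
EPOCHS = [0, 25, 50, 100, 200, 400]
TRAIN_ACC = [0.28, 0.75, 0.92, 0.97, 0.99, 0.998]
VAL_ACC = [0.30, 0.85, 0.96, 0.985, 0.995, 0.998]


def plot_accuracy(path="accuracy_sketch.png"):
    """Draw a rough stand-in for the chart and save it to `path` (hypothetical name)."""
    # Imported lazily so the data above stays usable without matplotlib installed.
    import matplotlib
    matplotlib.use("Agg")  # render off-screen, no display needed
    import matplotlib.pyplot as plt

    fig, ax = plt.subplots()
    ax.plot(EPOCHS, TRAIN_ACC, color="blue", linestyle="-",
            label="Kernel Size=25 Train")
    ax.plot(EPOCHS, VAL_ACC, color="blue", linestyle="--",
            label="Kernel Size=25 Validation")
    ax.set_xlabel("Epochs")
    ax.set_ylabel("Accuracy")
    ax.set_xticks(range(0, 401, 50))                             # 0, 50, ..., 400
    ax.set_yticks([round(0.3 + 0.1 * i, 1) for i in range(8)])   # 0.3, ..., 1.0
    ax.grid(True, color="lightgray")
    ax.legend(loc="lower left")
    fig.savefig(path)
    plt.close(fig)
    return path
```

Because only six readings per series are used, the result is a coarse polyline rather than the smooth curves in the original image.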

### Detailed Analysis

**Data Series 1: Kernel Size=25 Train (Solid Blue Line)**

* **Trend:** The line shows a classic learning curve. It starts at a low accuracy (approximately 0.28 at epoch 0), rises very steeply until around epoch 50, then the rate of increase slows, and it asymptotically approaches a plateau near 1.0.

* **Key Data Points (Approximate):**

    * Epoch 0: ~0.28

    * Epoch 25: ~0.75

    * Epoch 50: ~0.92

    * Epoch 100: ~0.97

    * Epoch 200: ~0.99

    * Epoch 400: ~0.998 (very close to 1.0)

**Data Series 2: Kernel Size=25 Validation (Dashed Blue Line)**

* **Trend:** This line follows a similar trajectory to the training line but exhibits a slightly different initial behavior. It starts at a marginally higher accuracy than the training set, rises even more sharply in the very early epochs, and then converges to the same plateau as the training accuracy.

* **Key Data Points (Approximate):**

    * Epoch 0: ~0.30

    * Epoch 25: ~0.85

    * Epoch 50: ~0.96

    * Epoch 100: ~0.985

    * Epoch 200: ~0.995

    * Epoch 400: ~0.998 (indistinguishable from the training line)

**Relationship Between Series:**

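* The validation accuracy (dashed line) is at or above the training accuracy (solid line) at every listed reading, with the largest gap (about 0.10) around epoch 25. The gap is most visible during roughly the first 75 epochs.

* After approximately epoch 100, the two lines become nearly superimposed (the approximate readings differ by about 0.015 at epoch 100 and about 0.005 at epoch 200), indicating that the model's accuracy on held-out validation data matches its accuracy on the training data.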
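The relationship between the two series can be checked directly from the approximate readings. The sketch below computes the validation-minus-training gap at each listed epoch; the values are the visual estimates from this description, and the helper name `accuracy_gaps` is hypothetical.

```python
# Approximate readings transcribed from this description (not the original log).
EPOCHS = [0, 25, 50, 100, 200, 400]
TRAIN_ACC = [0.28, 0.75, 0.92, 0.97, 0.99, 0.998]
VAL_ACC = [0.30, 0.85, 0.96, 0.985, 0.995, 0.998]


def accuracy_gaps(epochs, train, val):
    """Return (epoch, validation - training) pairs, rounded to 3 decimals."""
    return [(e, round(v - t, 3)) for e, t, v in zip(epochs, train, val)]


gaps = accuracy_gaps(EPOCHS, TRAIN_ACC, VAL_ACC)
for epoch, gap in gaps:
    print(f"epoch {epoch:>3}: gap = {gap:+.3f}")
```

Since the inputs are read off the chart by eye, differences of a few thousandths are within the reading error and should not be over-interpreted.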

### Key Observations

1. **Rapid Initial Learning:** The most significant gains in accuracy occur within the first 50 epochs for both datasets.

2. **High Final Accuracy:** Both training and validation accuracy converge to a value extremely close to 1.0 (100%), suggesting near-perfect performance on the given task.

3. **No Visible Overfitting:** The validation accuracy does not degrade as training progresses; it tracks the training accuracy closely after the initial phase. This suggests the model is generalizing to the validation set rather than memorizing the training data.

4. **Stable Convergence:** After epoch 200, both lines show minimal fluctuation, indicating the training process has stabilized.
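Observations 1 and 4 can be put in rough numbers. The sketch below, using the approximate readings from this description, computes the share of each curve's total accuracy gain that is reached by epoch 50 and the change between epoch 200 and the final reading. The helper names `gain_fraction` and `late_change` are hypothetical.

```python
# Approximate readings transcribed from this description (not the original log).
EPOCHS = [0, 25, 50, 100, 200, 400]
TRAIN_ACC = [0.28, 0.75, 0.92, 0.97, 0.99, 0.998]
VAL_ACC = [0.30, 0.85, 0.96, 0.985, 0.995, 0.998]


def gain_fraction(epochs, acc, cutoff):
    """Fraction of the total gain (first to last reading) reached by `cutoff`."""
    total = acc[-1] - acc[0]
    return (acc[epochs.index(cutoff)] - acc[0]) / total


def late_change(epochs, acc, start):
    """Accuracy change between the reading at `start` and the final reading."""
    return acc[-1] - acc[epochs.index(start)]


for name, acc in (("train", TRAIN_ACC), ("validation", VAL_ACC)):
    print(f"{name}: {gain_fraction(EPOCHS, acc, 50):.1%} of gain by epoch 50, "
          f"{late_change(EPOCHS, acc, 200):+.3f} after epoch 200")
```

With these readings, roughly 89% of the training gain and 95% of the validation gain fall within the first 50 epochs, and both curves change by less than 0.01 after epoch 200.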

### Interpretation

This chart demonstrates a highly successful model training run. The key takeaways are:

* **Effective Learning:** The model configuration (kernel size 25) appears well-suited to the problem, as evidenced by the rapid and sustained increase in accuracy.

* **Excellent Generalization:** Validation accuracy matches training accuracy closely, and even starts slightly higher, which suggests the training and validation datasets are drawn from the same underlying data distribution. The curves show no sign of overfitting, a common pitfall in machine learning.

* **Sufficient Training Duration:** The plateau in accuracy after ~200 epochs indicates that further training beyond 400 epochs is unlikely to yield significant improvements. The model has reached its performance capacity for this configuration.

* **Points to Verify:** While the results are excellent, the near-identical curves might prompt an investigation into whether the validation set is sufficiently challenging or distinct from the training set. The extremely high final accuracy (approximately 99.8%) could also warrant a check for data leakage or an overly simple task.
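One simple form of the data leakage check mentioned above is to look for validation samples that also appear verbatim in the training set. The sketch below is an illustration under stated assumptions: the chart says nothing about the dataset, so the samples here are toy byte strings, and `fingerprint` and `overlap_fraction` are hypothetical helper names. It detects only exact byte-level duplicates, not near-duplicates.

```python
import hashlib


def fingerprint(sample: bytes) -> str:
    """Hash a sample's raw bytes so large samples can be compared cheaply."""
    return hashlib.sha256(sample).hexdigest()


def overlap_fraction(train_samples, val_samples):
    """Fraction of validation samples whose exact bytes also occur in training."""
    if not val_samples:
        return 0.0
    train_hashes = {fingerprint(s) for s in train_samples}
    hits = sum(1 for s in val_samples if fingerprint(s) in train_hashes)
    return hits / len(val_samples)


# Toy data for illustration only; one of the two validation samples is duplicated.
train = [b"sample-a", b"sample-b", b"sample-c"]
val = [b"sample-c", b"sample-d"]
print(overlap_fraction(train, val))  # 0.5
```

A result well above zero on real data would suggest that the matching curves partly reflect shared samples rather than generalization.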

In summary, the image provides clear, quantitative evidence of a model that learns quickly, generalizes well to the validation set, and reaches near-maximum accuracy on its designated task within about 200 training epochs.