## Technical Diagram: Fourier-Based Convolutional Neural Network Layer

### Overview

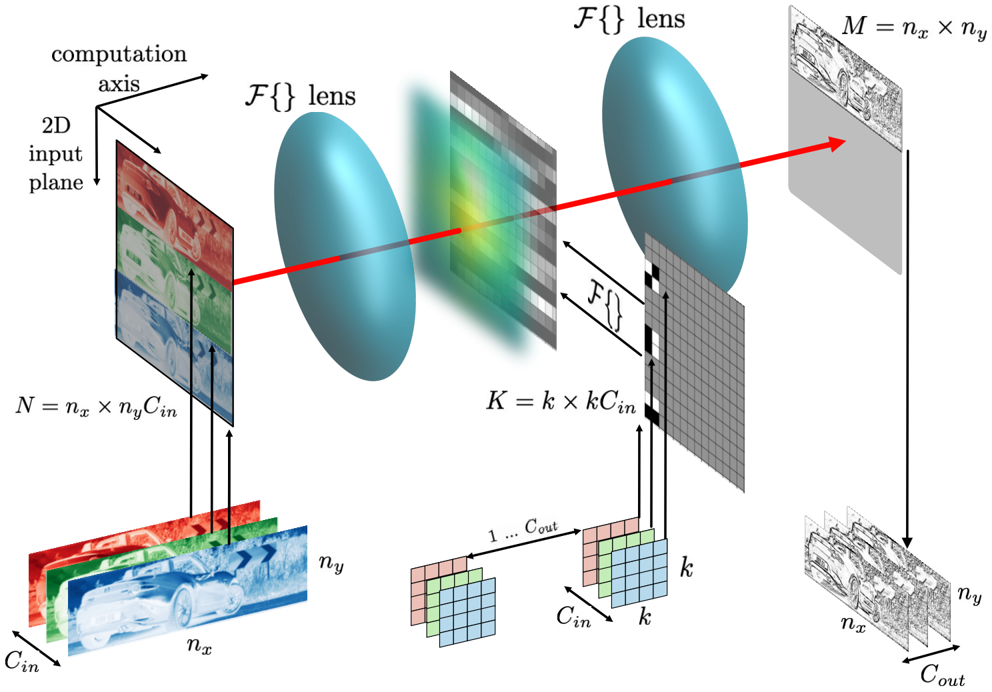

This image is a technical schematic illustrating the forward pass of a convolutional operation implemented in the Fourier domain, likely within a neural network architecture. It depicts the transformation of a multi-channel input image through frequency-domain processing using lenses (representing Fourier transforms) and multiplication with a kernel, resulting in a transformed output feature map. The diagram emphasizes the mathematical relationships and dimensional transformations involved.

### Components/Axes

The diagram is organized into three main spatial regions from left to right, with supporting mathematical notation below.

**1. Left Region (Input Stage):**

* **Label (Top-Left):** "computation axis" with arrows pointing right and down.

* **Label (Top-Left):** "2D input plane".

* **Visual Element:** A stack of three colored 2D image planes (red, green, blue channels) representing an input image.

* **Dimensional Label (Below input stack):** `N = n_x × n_y C_in`, read here as the product `n_x × n_y × C_in`. This is the total number of elements in the input tensor, where `n_x` and `n_y` are the spatial dimensions and `C_in` is the number of input channels.

* **Axis Labels (Bottom-Left):** `C_in` (pointing into the page/depth), `n_x` (horizontal), `n_y` (vertical).

**2. Central Region (Processing Stage):**

* **Primary Visual Elements:** Two large, blue, elliptical shapes labeled `ℱ{} lens`. These represent Fourier Transform operations.

* **Flow:** A red arrow originates from the input plane, passes through the first `ℱ{} lens`, then through a semi-transparent, multi-colored grid (representing the frequency-domain representation of the input), then through a second `ℱ{} lens`, and finally points to the output plane.

* **Kernel/Filter (Below central flow):** A 3D grid structure representing a convolutional kernel.

* **Dimensional Label:** `K = k × k C_in`, read here as the product `k × k × C_in`. This is the number of weights in one kernel: its spatial size (`k × k`) times its depth, which matches the number of input channels (`C_in`).

* **Detailed Kernel View:** A smaller, exploded view shows the kernel's structure with dimensions labeled: `C_in` (depth), `k` (height), `k` (width). An arrow labeled `1 ... C_out` indicates that there are `C_out` such kernels, one per output channel.

* **Operation Label (Between lenses and kernel):** `ℱ{}` with arrows pointing from the kernel to the central frequency-domain grid, indicating the kernel is also transformed into the frequency domain.

**3. Right Region (Output Stage):**

* **Visual Element:** A single, grayscale 2D plane representing the output feature map.

* **Dimensional Label (Top-Right):** `M = n_x × n_y`. This is the number of elements in one output feature map; its spatial dimensions match the input's (`n_x`, `n_y`).

* **Axis Labels (Bottom-Right):** `n_x` (horizontal), `n_y` (vertical), `C_out` (pointing into the page/depth).

* **Final Output Representation:** A stack of `C_out` grayscale feature maps, showing the result of applying the kernel across all output channels.
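
The three dimensional labels can be checked with simple arithmetic. The sketch below assumes the labels `N` and `K` are products that include `C_in`; the function name `layer_sizes` and the example sizes (a 32 × 32 RGB input, 3 × 3 kernels, 16 output channels) are hypothetical and do not come from the diagram.

```python
def layer_sizes(n_x, n_y, c_in, k, c_out):
    """Element counts for the labels N, K and M in the diagram (assumed to be products)."""
    return {
        "N": n_x * n_y * c_in,          # elements in the input tensor
        "K": k * k * c_in,              # weights in one kernel
        "M": n_x * n_y,                 # elements in one output feature map
        "weights_total": k * k * c_in * c_out,   # all C_out kernels together
        "outputs_total": n_x * n_y * c_out,      # all C_out feature maps together
    }


# Hypothetical example sizes, chosen only for illustration.
sizes = layer_sizes(n_x=32, n_y=32, c_in=3, k=3, c_out=16)
print(sizes)
```

With these example sizes, `N` is 3072, `K` is 27 and `M` is 1024.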

### Detailed Analysis

The diagram details a specific computational pathway:

1. A multi-channel (`C_in`) input image of size `n_x` by `n_y` is taken.

2. It undergoes a Fourier Transform (`ℱ{}`), visualized as passing through a lens, converting it to the frequency domain.

3. A convolutional kernel of size `k × k × C_in` is also transformed into the frequency domain (`ℱ{}`).

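4. The frequency-domain representations of the input and kernel are multiplied element-wise (implied by their convergence at the central grid). For a multi-channel input, the per-channel products are summed over `C_in` to give one spectrum per output channel.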

5. The result undergoes an Inverse Fourier Transform (the second `ℱ{} lens`), converting it back to the spatial domain.

6. The final output is a feature map stack with spatial dimensions `n_x` by `n_y` (same as input) and a new depth of `C_out` channels.
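
The six steps above can be sketched in NumPy. This is a minimal illustration under stated assumptions, not the implementation behind the diagram: the function names, the array layouts `(C_in, n_y, n_x)` and `(C_out, C_in, k, k)`, and the example sizes are all hypothetical. The sketch computes a circular (wrap-around) convolution with a flipped kernel, which is what the convolution theorem gives directly; deep learning frameworks usually compute cross-correlation with zero padding instead.

```python
import numpy as np


def fourier_conv_layer(x, w):
    """Circular convolution via FFT.

    x: input of shape (C_in, n_y, n_x); w: kernels of shape (C_out, C_in, k, k).
    Returns an array of shape (C_out, n_y, n_x).
    """
    c_in, n_y, n_x = x.shape
    assert w.shape[1] == c_in, "kernel depth must match the input channels"
    # Step 2: transform every input channel to the frequency domain.
    X = np.fft.fft2(x, axes=(-2, -1))                  # (C_in, n_y, n_x)
    # Step 3: zero-pad each kernel slice to n_y x n_x and transform it.
    W = np.fft.fft2(w, s=(n_y, n_x), axes=(-2, -1))    # (C_out, C_in, n_y, n_x)
    # Step 4: element-wise product, summed over the input channels.
    Y = np.einsum("oiyx,iyx->oyx", W, X)               # (C_out, n_y, n_x)
    # Step 5: inverse transform; the imaginary part is round-off for real inputs.
    return np.fft.ifft2(Y, axes=(-2, -1)).real


def direct_circular_conv(x, w):
    """Reference: the same circular convolution computed in the spatial domain."""
    c_out, c_in, k_y, k_x = w.shape
    y = np.zeros((c_out,) + x.shape[1:])
    for o in range(c_out):
        for i in range(c_in):
            for a in range(k_y):
                for b in range(k_x):
                    # np.roll(x, (a, b))[m, n] == x[m - a, n - b] with wrap-around
                    y[o] += w[o, i, a, b] * np.roll(x[i], shift=(a, b), axis=(0, 1))
    return y


rng = np.random.default_rng(0)
x = rng.standard_normal((3, 8, 8))       # C_in = 3, n_y = n_x = 8
w = rng.standard_normal((4, 3, 3, 3))    # C_out = 4, k = 3
y_fft = fourier_conv_layer(x, w)
y_ref = direct_circular_conv(x, w)
```

Comparing `y_fft` against `y_ref` with `np.allclose` is a quick way to confirm that the frequency-domain route and the spatial route agree.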

The red arrow provides a clear visual flow for a single channel/slice through this process. The dimensional labels (`N`, `K`, `M`) explicitly define the size of the data tensors at key stages.

### Key Observations

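* **Spatial Dimension Preservation:** The output spatial dimensions (`n_x`, `n_y`) are identical to the input's, indicating a "same" convolution. In a numerical implementation this is typically achieved by zero-padding the input and kernel in the spatial domain before the transform and cropping the result afterwards; without padding, the frequency-domain product yields a circular convolution of the same size.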

* **Channel Transformation:** The number of channels changes from `C_in` to `C_out`, set by the number of kernels (the `1 ... C_out` axis, i.e. the fourth dimension of the weight tensor).

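* **Computational Metaphor:** Drawing each Fourier transform as a lens is more than a loose metaphor. In Fourier optics, a thin lens produces at its back focal plane the Fourier transform of the field in its front focal plane, so the diagram may depict, or at least borrow from, an optical implementation of the layer.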

* **Kernel Dual Representation:** The kernel is shown in its spatial form (`k × k × C_in`) and, via the `ℱ{}` arrows, is implied to have a frequency-domain form that is used in the multiplication step.
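
The "same"-size output noted above can be reproduced numerically by padding in the spatial domain. The following single-channel sketch is one common way to do it, not necessarily what the diagram's author intended; the function names and example sizes are hypothetical. Both arrays are zero-padded to `n + k - 1` per axis so the circular convolution equals the linear one, and the central `n_y × n_x` region is then cropped.

```python
import numpy as np


def fft_conv2d_same(x, w):
    """Linear 'same' convolution of a 2D image x with a 2D kernel w via FFT."""
    n_y, n_x = x.shape
    k_y, k_x = w.shape
    # Pad to the full linear-convolution size so nothing wraps around.
    p_y, p_x = n_y + k_y - 1, n_x + k_x - 1
    X = np.fft.fft2(x, s=(p_y, p_x))
    W = np.fft.fft2(w, s=(p_y, p_x))
    full = np.fft.ifft2(X * W).real
    # Crop the central region so the output matches the input size.
    o_y, o_x = (k_y - 1) // 2, (k_x - 1) // 2
    return full[o_y:o_y + n_y, o_x:o_x + n_x]


def direct_conv2d_same(x, w):
    """Reference: accumulate shifted, scaled copies of x, then crop the centre."""
    n_y, n_x = x.shape
    k_y, k_x = w.shape
    full = np.zeros((n_y + k_y - 1, n_x + k_x - 1))
    for a in range(k_y):
        for b in range(k_x):
            full[a:a + n_y, b:b + n_x] += w[a, b] * x
    o_y, o_x = (k_y - 1) // 2, (k_x - 1) // 2
    return full[o_y:o_y + n_y, o_x:o_x + n_x]


rng = np.random.default_rng(1)
x = rng.standard_normal((8, 10))
w = rng.standard_normal((3, 3))
y_fft = fft_conv2d_same(x, w)
y_ref = direct_conv2d_same(x, w)

# Sanity check: a kernel with a single 1 at its centre leaves the image unchanged.
delta = np.zeros((3, 3))
delta[1, 1] = 1.0
y_delta = fft_conv2d_same(x, delta)
```

The delta-kernel check is a useful first test for any implementation: if `y_delta` differs from `x`, the crop offset is wrong.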

### Interpretation

This diagram is a pedagogical illustration of the **Convolution Theorem** applied to deep learning. It demonstrates that convolution in the spatial domain is equivalent to element-wise multiplication in the frequency domain.
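
Written out for one output channel, the relationship takes the form below. The symbols $x_c$ (input channel $c$), $w_{o,c}$ (the slice of kernel $o$ that acts on channel $c$, zero-padded to $n_x \times n_y$), and $y_o$ (output channel $o$) are notation introduced here for illustration and do not appear in the diagram; $\circledast$ denotes circular convolution and $\odot$ element-wise multiplication.

$$
y_o \;=\; \sum_{c=1}^{C_{in}} x_c \circledast w_{o,c}
\;=\; \mathcal{F}^{-1}\!\left\{ \sum_{c=1}^{C_{in}} \mathcal{F}\{x_c\} \odot \mathcal{F}\{w_{o,c}\} \right\},
\qquad o = 1, \dots, C_{out}.
$$

The sum can be taken before the inverse transform because the Fourier transform is linear, so only one inverse transform per output channel is needed.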

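* **What it Suggests:** The primary purpose is to explain the internal mechanics of a Fourier-based convolutional layer, which can be computationally more efficient than direct spatial convolution for large kernels: per input-output channel pair, the direct cost grows as `n_x · n_y · k²`, whereas the FFT-based cost grows as `n_x · n_y · log(n_x · n_y)` and does not depend on `k`. The diagram visually breaks down an abstract mathematical operation into a sequence of tangible steps: transform, multiply, inverse transform.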

* **Relationships:** The elements are causally linked by the red flow arrow. The input and kernel are independent starting points that converge via their frequency-domain representations to produce the output. The `ℱ{} lens` symbols act as the transformative gates between domains.

* **Notable Anomalies/Clarifications:** The diagram simplifies the process. In practice, zero-padding, batching, and exploiting the Hermitian symmetry of the spectra of real-valued inputs are needed but not shown. The "computation axis" label is somewhat abstract but sets the coordinate system for the 2D planes. The color in the central grid likely represents the magnitude or phase of the complex-valued frequency components.
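
The Hermitian symmetry mentioned above can be seen directly in NumPy. This is an illustrative sketch with hypothetical array sizes: for a real-valued input, the spectrum satisfies `X[-u, -v] == conj(X[u, v])`, so `np.fft.rfft2` stores only about half of the coefficients, and the frequency-domain product computed on that half gives the same result as the full complex transform.

```python
import numpy as np

rng = np.random.default_rng(2)
x = rng.standard_normal((8, 8))   # real-valued single-channel input
w = rng.standard_normal((3, 3))   # real-valued kernel

# Full complex spectrum and its index-reflected copy X[-u, -v].
X = np.fft.fft2(x)
X_reflected = np.roll(np.flip(X), shift=(1, 1), axis=(0, 1))
hermitian_ok = np.allclose(X_reflected, np.conj(X))

# Half-spectrum route: only n_x // 2 + 1 columns are stored.
Xr = np.fft.rfft2(x)
Wr = np.fft.rfft2(w, s=x.shape)
y_half = np.fft.irfft2(Xr * Wr, s=x.shape)

# Full-spectrum route for comparison (circular convolution in both cases).
y_full = np.fft.ifft2(X * np.fft.fft2(w, s=x.shape)).real

print(hermitian_ok, Xr.shape, np.allclose(y_half, y_full))
```

Using the half spectrum roughly halves the memory and multiplication count of the element-wise product without changing the output.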

**Language Note:** All text in the image is in English, using standard mathematical notation.