## Composite Visualization: Super GNN Training & Prediction Analysis

### Overview

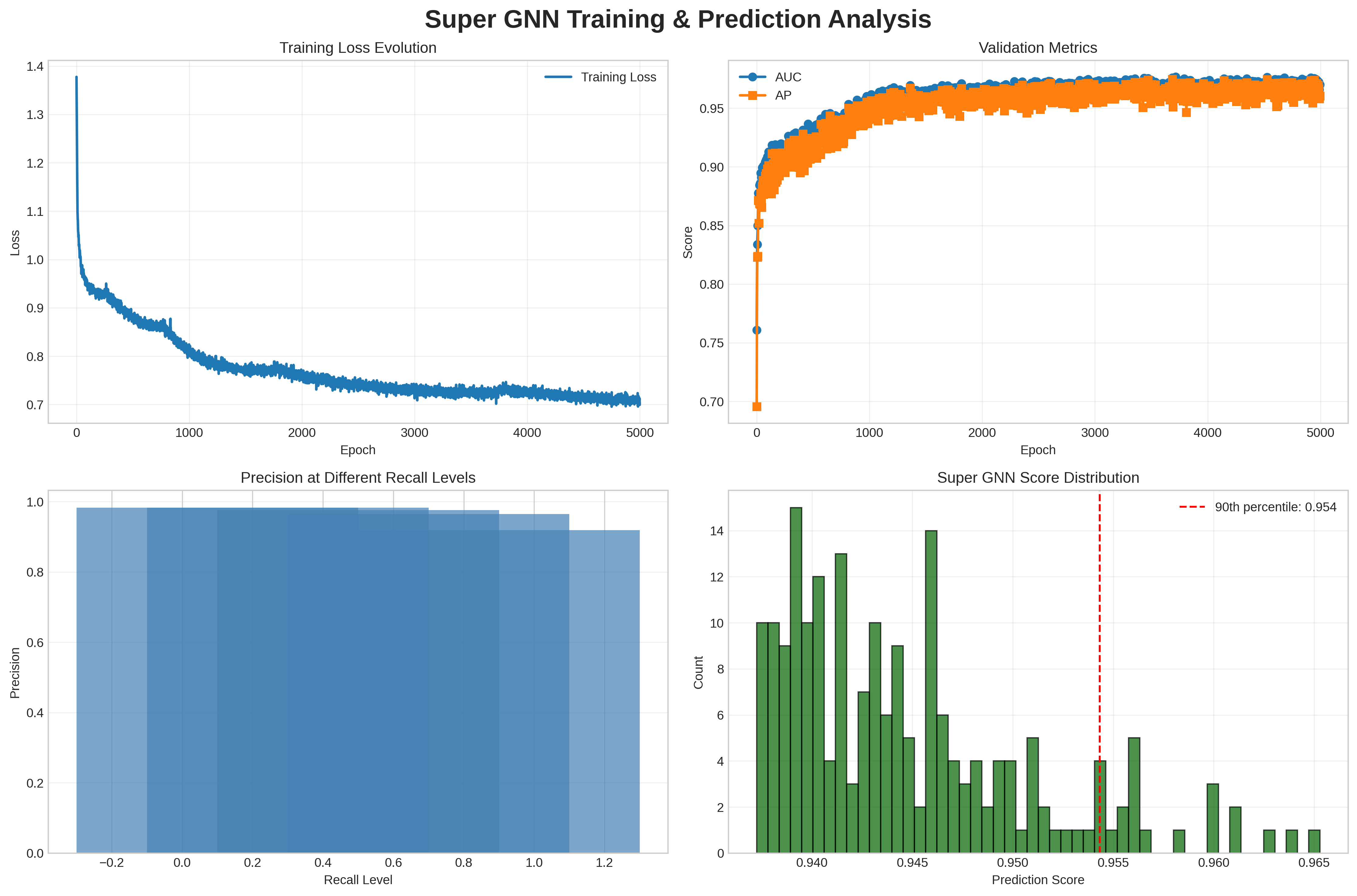

The image contains four subplots arranged in a 2x2 grid, analyzing the training and prediction performance of a Super GNN model. Each subplot provides distinct insights into loss dynamics, validation metrics, precision-recall trade-offs, and score distributions.

---

### Components/Axes

#### Top-Left: Training Loss Evolution

- **Title**: Training Loss Evolution

- **Y-Axis**: Loss (0.7 to 1.4)

- **X-Axis**: Epoch (0 to 5000)

- **Legend**: Blue line labeled "Training Loss"

- **Trend**: Loss starts at ~1.38, drops sharply to ~0.72 by epoch 1000, then gradually declines to ~0.71 by epoch 5000.
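The "sharp drop, then gradual decline" pattern can be located numerically rather than by eye. The following is a minimal sketch, not the method used to produce the figure: `plateau_epoch`, its `window` and `tol` parameters, and the synthetic exponential curve are all assumptions chosen only to resemble the values read off the plot.

```python
import math


def plateau_epoch(losses, window=100, tol=1e-3):
    """Return the first epoch whose loss improves by less than `tol`
    over the next `window` epochs, or None if that never happens."""
    for i in range(len(losses) - window):
        if losses[i] - losses[i + window] < tol:
            return i
    return None


# Synthetic curve (an assumption, not the real training log):
# starts at 1.38 and decays toward 0.71.
losses = [0.71 + 0.67 * math.exp(-epoch / 200) for epoch in range(5001)]

flat_from = plateau_epoch(losses)
print("loss flattens from epoch", flat_from)
```

With real data, `losses` would be the per-epoch training loss, and `window` and `tol` would need tuning to the noise level of the curve.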

#### Top-Right: Validation Metrics

- **Title**: Validation Metrics

- **Y-Axis**: Score (0.7 to 0.95)

- **X-Axis**: Epoch (0 to 5000)

- **Legend**:

  - Blue circles: "AUC" (starts at 0.75, peaks at ~0.95 by epoch 1000)

  - Orange squares: "AP" (starts at 0.77, peaks at ~0.95 by epoch 1000)

- **Trend**: Both metrics rise sharply initially, plateauing near 0.95 with minor fluctuations.
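For readers unfamiliar with the two metrics, here is a rough sketch of how they are commonly computed from binary labels and prediction scores. It assumes "AUC" means area under the ROC curve and "AP" means average precision; the figure does not say which implementation was used. The function names and toy data are hypothetical, and tied scores are not treated specially in `average_precision`.

```python
def roc_auc(labels, scores):
    """ROC AUC as the probability that a random positive is scored
    above a random negative (ties count as half)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def average_precision(labels, scores):
    """Mean of the precision values measured at the rank of each
    positive, with items sorted by descending score."""
    ranked = sorted(zip(scores, labels), key=lambda t: -t[0])
    hits = 0
    total = 0.0
    for rank, (_, y) in enumerate(ranked, start=1):
        if y == 1:
            hits += 1
            total += hits / rank
    if hits == 0:
        raise ValueError("need at least one positive")
    return total / hits


# Toy data (hypothetical, not taken from the figure).
labels = [1, 0, 1, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.2]
auc = roc_auc(labels, scores)
ap = average_precision(labels, scores)
print(round(auc, 3), round(ap, 3))
```

The pairwise loop in `roc_auc` is quadratic and only suitable for small validation sets; a rank-based formulation or a library routine would be the usual choice at scale.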

#### Bottom-Left: Precision at Different Recall Levels

- **Title**: Precision at Different Recall Levels

- **Y-Axis**: Precision (0 to 1)

- **X-Axis**: Recall Level (-0.2 to 1.2)

- **Color Gradient**: Blue (darker = higher precision)

- **Key Data**:

  - Highest precision (~1.0) at recall 0.0

  - Precision decreases as recall increases (e.g., ~0.9 at recall 0.2, ~0.85 at recall 0.4).
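One common way to obtain "precision at a given recall level" is interpolated precision: the best precision achievable at any threshold whose recall is at least the target. The sketch below assumes that definition, which the figure does not confirm; `precision_at_recall` and the toy data are hypothetical.

```python
def precision_at_recall(labels, scores, recall_levels):
    """For each target recall, return the highest precision among all
    score cutoffs whose recall is at least that target."""
    n_pos = sum(labels)
    if n_pos == 0:
        raise ValueError("need at least one positive")
    ranked = sorted(zip(scores, labels), key=lambda t: -t[0])
    points = []  # (recall, precision) after admitting each ranked item
    hits = 0
    for rank, (_, y) in enumerate(ranked, start=1):
        hits += y
        points.append((hits / n_pos, hits / rank))
    return {
        level: max(p for r, p in points if r >= level)
        for level in recall_levels
    }


# Toy data (hypothetical, not taken from the figure).
labels = [1, 0, 1, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.2]
table = precision_at_recall(labels, scores, [0.0, 0.5, 1.0])
for level, precision in table.items():
    print(f"recall >= {level:.1f}: precision {precision:.2f}")
```

Under this definition precision at recall 0.0 is simply the best precision seen anywhere on the curve, which is consistent with the value of ~1.0 reported at that level.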

#### Bottom-Right: Super GNN Score Distribution

- **Title**: Super GNN Score Distribution

- **X-Axis**: Prediction Score (0.94 to 0.965)

- **Y-Axis**: Count (0 to 16)

- **Legend**: Red dashed line at 90th percentile (0.954)

- **Distribution**:

  - Peak count (~14) at ~0.945

  - Counts decrease toward edges (e.g., ~2 at 0.965)

  - 90th percentile marked at 0.954.
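A percentile marker like the dashed line can be computed directly from the raw scores. This is a minimal sketch using linear interpolation between order statistics; whether the figure used this or another percentile convention is not stated, and `percentile` and `top_fraction` are illustrative names.

```python
import math


def percentile(values, q):
    """Percentile q (0 to 100) with linear interpolation between the
    two nearest order statistics."""
    if not values:
        raise ValueError("values must not be empty")
    ordered = sorted(values)
    pos = (len(ordered) - 1) * q / 100.0
    lo = math.floor(pos)
    hi = math.ceil(pos)
    return ordered[lo] + (ordered[hi] - ordered[lo]) * (pos - lo)


def top_fraction(values, q):
    """Return the values at or above the q-th percentile."""
    cutoff = percentile(values, q)
    return [v for v in values if v >= cutoff]


# Simple illustrative input (not the scores from the figure).
sample = list(range(11))
print(percentile(sample, 90), top_fraction(sample, 90))
```

Different percentile conventions can give slightly different cutoffs on small samples, so the convention should be fixed before comparing against a plotted value such as 0.954.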

---

### Key Observations

1. **Training Loss**: Loss starts high (~1.38), drops rapidly to ~0.72 by epoch 1000, and settles at a stable value (~0.71).

2. **Validation Metrics**: Both AUC and AP improve rapidly, stabilizing near 0.95, indicating strong generalization.

3. **Precision-Recall Trade-off**: High precision at low recall, but precision degrades as recall increases.

4. **Score Distribution**: Predictions fall in a narrow band (0.94 to 0.965), peaking near 0.945, with a thinner tail toward higher scores.

---

### Interpretation

- **Training Dynamics**: The sharp initial drop in loss suggests effective early learning, while the plateau indicates model stabilization.

- **Validation Performance**: High and stable AUC/AP scores (both near 0.95) are consistent with a model that is robust on the validation data.

- **Precision-Recall Balance**: The plot highlights a trade-off: precision falls as recall rises, which matters for applications that need both to be high.

- **Score Distribution**: The scores span a narrow range (0.94 to 0.965), suggesting the model assigns similar confidence to most predictions. The 90th percentile (0.954) marks the cutoff above which the top 10% of scores fall.

The analysis reveals a well-trained model with effective generalization, though the precision-recall trade-off warrants further tuning for specific use cases.