## Pie Charts: Distribution of Approaches for CWQ, WebQSP, and GrailQA

### Overview

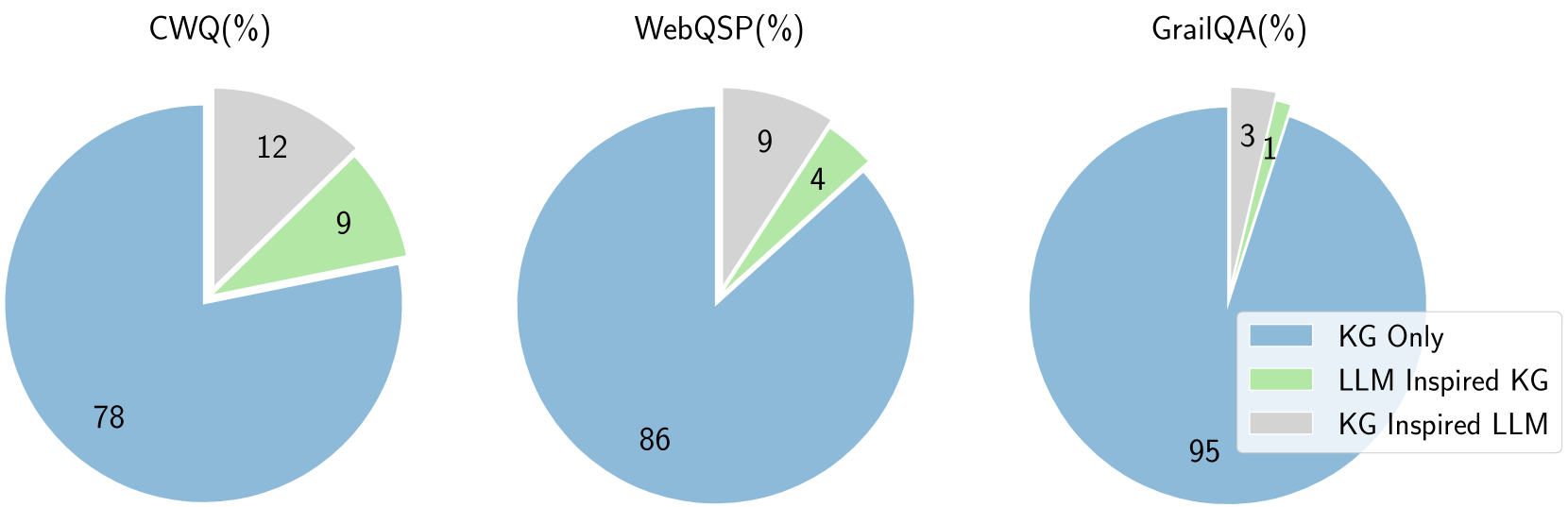

This image displays three pie charts, each showing the percentage distribution of approaches on one of three knowledge-graph question-answering benchmarks: CWQ (ComplexWebQuestions), WebQSP (WebQuestionsSP, the version of WebQuestions annotated with semantic parses), and GrailQA. The approaches are categorized as "KG Only", "LLM Inspired KG", and "KG Inspired LLM".

### Components/Axes

* **Chart Titles:**

    * CWQ(%)

    * WebQSP(%)

    * GrailQA(%)

* **Legend:** Located in the bottom-right quadrant of the image, it defines the color mapping for the categories:

    * Blue: KG Only

    * Light Green: LLM Inspired KG

    * Light Gray: KG Inspired LLM

* **Data Labels:** Numerical percentages are displayed within each slice of the pie charts.

### Detailed Analysis

**1. CWQ (%)**

* **KG Only:** Represented by the largest blue slice, it accounts for **78%**.

* **LLM Inspired KG:** Represented by the light green slice, it accounts for **9%**.

* **KG Inspired LLM:** Represented by the light gray slice, it accounts for **12%**.

* *Sum Check:* 78 + 9 + 12 = 99%. The labels fall 1 percentage point short of 100%, most likely because each slice label is rounded to a whole number.

**2. WebQSP (%)**

* **KG Only:** Represented by the largest blue slice, it accounts for **86%**.

* **LLM Inspired KG:** Represented by the light green slice, it accounts for **4%**.

* **KG Inspired LLM:** Represented by the light gray slice, it accounts for **9%**.

* *Sum Check:* 86 + 4 + 9 = 99%. Similar to CWQ, there is a slight discrepancy.

**3. GrailQA (%)**

* **KG Only:** Represented by the largest blue slice, it accounts for **95%**.

* **LLM Inspired KG:** Represented by the light green slice, it accounts for **1%**.

* **KG Inspired LLM:** Represented by the light gray slice, it accounts for **3%**.

* *Sum Check:* 95 + 1 + 3 = 99%. Again, a slight discrepancy is observed.
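The three sum checks above can be reproduced with a short script. This is a minimal sketch: the percentages are the slice labels read from the figure, while the names `shares`, `totals`, and `gaps` are chosen here for illustration only.

```python
# Slice labels as read from the three pie charts (whole-number percentages).
shares = {
    "CWQ": {"KG Only": 78, "LLM Inspired KG": 9, "KG Inspired LLM": 12},
    "WebQSP": {"KG Only": 86, "LLM Inspired KG": 4, "KG Inspired LLM": 9},
    "GrailQA": {"KG Only": 95, "LLM Inspired KG": 1, "KG Inspired LLM": 3},
}

# Sum of the labels in each chart, and how far each sum falls short of 100.
totals = {name: sum(chart.values()) for name, chart in shares.items()}
gaps = {name: 100 - total for name, total in totals.items()}

for name in shares:
    print(f"{name}: labels sum to {totals[name]}%, {gaps[name]} point(s) short of 100%")
```

Every chart comes out 1 point short, which is consistent with whole-number rounding of each slice rather than a missing category.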

### Key Observations

* **Dominance of "KG Only":** In all three charts, "KG Only" is the largest slice by a wide margin: 78% for CWQ, 86% for WebQSP, and 95% for GrailQA.

* **Low Adoption of Hybrid Approaches:** The "LLM Inspired KG" and "KG Inspired LLM" approaches, which combine knowledge graph (KG) and large language model (LLM) techniques, each stay at or below 12% in every chart.

* **Trend in "KG Only":** The "KG Only" share rises by 8 percentage points from CWQ to WebQSP and by a further 9 points from WebQSP to GrailQA.

* **Trend in Hybrid Approaches:** Conversely, the combined percentage of "LLM Inspired KG" and "KG Inspired LLM" decreases as we move from CWQ (9% + 12% = 21%) to WebQSP (4% + 9% = 13%) to GrailQA (1% + 3% = 4%).

* **GrailQA's Specialization:** GrailQA shows the most pronounced reliance on "KG Only", with hybrid approaches being minimal.
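The two trends in the observations above can be checked numerically. The sketch below uses the slice labels from the figure; the variable names and the helper `strictly_increasing` are illustrative assumptions, not part of the source.

```python
# Slice labels from the figure, listed in the order CWQ, WebQSP, GrailQA.
order = ["CWQ", "WebQSP", "GrailQA"]
kg_only = {"CWQ": 78, "WebQSP": 86, "GrailQA": 95}
llm_inspired_kg = {"CWQ": 9, "WebQSP": 4, "GrailQA": 1}
kg_inspired_llm = {"CWQ": 12, "WebQSP": 9, "GrailQA": 3}


def strictly_increasing(values):
    """Return True if each value is larger than the one before it."""
    return all(a < b for a, b in zip(values, values[1:]))


kg_series = [kg_only[name] for name in order]
# Combined share of the two hybrid categories in each chart.
hybrid_series = [llm_inspired_kg[name] + kg_inspired_llm[name] for name in order]

kg_rises = strictly_increasing(kg_series)
# A series falls strictly if its reverse rises strictly.
hybrid_falls = strictly_increasing(hybrid_series[::-1])

print("KG Only:", kg_series, "rising:", kg_rises)
print("Hybrid combined:", hybrid_series, "falling:", hybrid_falls)
```

With only three data points, this confirms the ordering of the labels but says nothing about statistical significance.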

### Interpretation

The pie charts suggest a strong reliance on knowledge graphs alone for CWQ, WebQSP, and GrailQA, although the figure by itself does not establish that this approach is the most effective. All three are question-answering benchmarks built over the Freebase knowledge graph, so the growing share of "KG Only" from CWQ to GrailQA cannot be explained by GrailQA alone being KG-based. It may instead indicate that purely KG-based methods are favored more heavily when a benchmark's questions align more directly with the structured representation.

The low percentages for "LLM Inspired KG" and "KG Inspired LLM" could imply several things:

1. **Maturity of KG-based methods:** Existing KG-based solutions might be highly optimized and performant for these specific question-answering tasks, making the integration of LLMs less critical or even detrimental if not implemented carefully.

2. **Challenges in integration:** Effectively combining LLMs with KGs might still be an active area of research and development, with practical implementations not yet widespread or superior to standalone KG approaches.

3. **Task specificity:** The nature of these question-answering tasks might inherently favor structured, symbolic reasoning provided by KGs over the more probabilistic and pattern-based reasoning of LLMs, especially when dealing with factual queries.

The observed trend suggests that as the benchmark becomes more focused on structured knowledge retrieval and reasoning (moving towards GrailQA), the reliance on pure KG approaches intensifies, while the share of LLM-inspired methods shrinks. This could indicate that for highly structured domains, LLMs might be more useful for tasks like natural language understanding or generation *around* the KG, rather than for core reasoning or retrieval within it.

Each chart's labels sum to 99% rather than 100%, which is likely due to rounding in the original data or visualization.
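To illustrate how rounding alone can produce a 99% total, the sketch below rounds three shares that sum to 100. The unrounded values are hypothetical, invented for this example; the figure does not report them.

```python
import math

# HYPOTHETICAL unrounded shares, made up for illustration only.
# They are not taken from the figure or its underlying data.
exact = {"KG Only": 78.4, "LLM Inspired KG": 9.3, "KG Inspired LLM": 12.3}

# Round each slice to a whole number, as a chart label would.
rounded = {label: round(value) for label, value in exact.items()}

exact_total = sum(exact.values())
rounded_total = sum(rounded.values())

print("rounded labels:", rounded)
print(f"exact total is about {exact_total:.1f}, rounded labels sum to {rounded_total}")
```

Because every slice here rounds down, the labels lose a full point in total. This shows that rounding is a sufficient explanation for the gap, not that it is the actual cause.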