EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

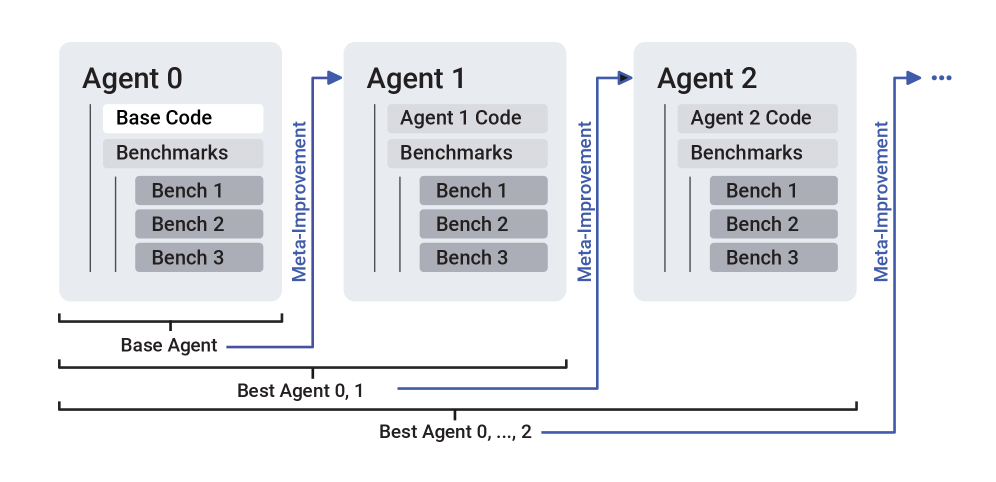

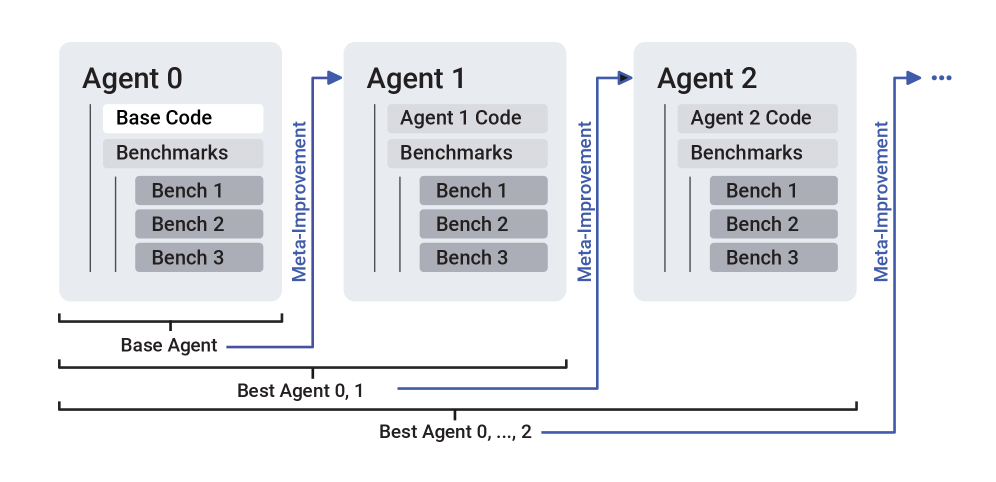

## Diagram: Agent Improvement Process

### Overview

The image is a diagram illustrating an iterative agent improvement process. It shows how a base agent's code and benchmarks are used to create subsequent agents, with each iteration incorporating meta-improvements based on benchmark results.

### Components/Axes

* **Agents:** The diagram depicts Agent 0, Agent 1, and Agent 2, with an ellipsis indicating that the process continues.

* **Agent Code:** Each agent has associated code (e.g., "Base Code" for Agent 0, "Agent 1 Code" for Agent 1).

* **Benchmarks:** Each agent is evaluated using a set of benchmarks.

* **Bench 1, Bench 2, Bench 3:** Specific benchmarks used for evaluation.

* **Meta-Improvement:** A blue arrow indicates the process of meta-improvement, where the results of the benchmarks are used to improve the agent's code in the next iteration.

* **Base Agent:** A horizontal line indicates the scope of the "Base Agent" for Agent 0.

* **Best Agent 0, 1:** A horizontal line indicates the scope of the "Best Agent 0, 1" spanning Agent 0 and Agent 1.

* **Best Agent 0, ..., 2:** A horizontal line indicates the scope of the "Best Agent 0, ..., 2" spanning Agent 0, Agent 1, and Agent 2.

### Detailed Analysis

The diagram shows a sequential process:

1. **Agent 0 (Base Agent):** Starts with "Base Code" and is evaluated using "Benchmarks" including "Bench 1", "Bench 2", and "Bench 3".

2. **Meta-Improvement (Agent 0 to Agent 1):** The results of the benchmarks are used to generate "Agent 1 Code".

3. **Agent 1:** Uses "Agent 1 Code" and is evaluated using the same "Benchmarks".

4. **Meta-Improvement (Agent 1 to Agent 2):** The results of the benchmarks are used to generate "Agent 2 Code".

5. **Agent 2:** Uses "Agent 2 Code" and is evaluated using the same "Benchmarks".

6. **Continuation:** The process continues iteratively, as indicated by the ellipsis.

The "Best Agent" lines indicate that the best agent found so far is retained and used as the basis for further improvement.
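The sequence above can be sketched as a short loop. This is a minimal sketch under stated assumptions, not the method behind the diagram: `Agent`, `evaluate`, `run_loop`, and the `meta_improve` callback are hypothetical names, each benchmark is assumed to map an agent's code to a single number, and ranking agents by the unweighted mean of their scores is an arbitrary choice.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical types: the diagram does not name any concrete API.
Benchmark = Callable[[str], float]                    # agent code -> score
MetaImprove = Callable[[str, Dict[str, float]], str]  # (code, scores) -> new code


@dataclass
class Agent:
    index: int
    code: str
    scores: Dict[str, float] = field(default_factory=dict)

    @property
    def mean_score(self) -> float:
        # Assumes at least one benchmark has been run.
        return sum(self.scores.values()) / len(self.scores)


def evaluate(agent: Agent, benchmarks: Dict[str, Benchmark]) -> Agent:
    """Run every benchmark on the agent's code and record the scores."""
    agent.scores = {name: bench(agent.code) for name, bench in benchmarks.items()}
    return agent


def run_loop(
    base_code: str,
    benchmarks: Dict[str, Benchmark],
    meta_improve: MetaImprove,
    iterations: int,
) -> List[Agent]:
    """Return Agent 0 .. Agent `iterations`, all scored on the same benchmarks."""
    agents = [evaluate(Agent(0, base_code), benchmarks)]
    for i in range(1, iterations + 1):
        # Assumption: the best agent so far is the basis for the next one.
        best = max(agents, key=lambda a: a.mean_score)
        new_code = meta_improve(best.code, best.scores)
        agents.append(evaluate(Agent(i, new_code), benchmarks))
    return agents
```

Calling `run_loop` with three benchmark callables and `iterations=2` would produce the three agents drawn in the diagram; how `meta_improve` actually rewrites the code is left open, as it is in the figure.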

### Key Observations

* The diagram illustrates an iterative improvement process.

* Each new agent's code is derived from an earlier agent's code and that agent's benchmark results.

* The "Best Agent" lines suggest a selection process where the best-performing agent is chosen at each stage.

### Interpretation

The diagram represents an iterative self-improvement process, resembling meta-learning or reinforcement learning, in which an agent's code is repeatedly modified based on benchmark results. The "Meta-Improvement" step is where that modification happens: the code is changed with the aim of performing better on the benchmarks. The "Best Agent" lines suggest a form of selection or inheritance, where the best-performing agent found so far is carried forward to the next iteration. The aim is an agent that performs as well as possible on the given benchmarks.

EXPERT: gemini-2.5-flash-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash

## Diagram: Iterative Agent Improvement Process

### Overview

This image presents a flow diagram illustrating an iterative process for developing and improving "Agents" through a mechanism called "Meta-Improvement." It shows a sequence of agents (Agent 0, Agent 1, Agent 2, and so on) where each agent's code is evaluated against a consistent set of benchmarks, and the results inform the creation or refinement of the subsequent agent. The diagram also highlights the concept of a "Best Agent" that accumulates performance across iterations.

### Components/Axes

The diagram is structured horizontally, depicting a progression from left to right.

* **Agent Blocks (Top-Center):**

    * Three primary blocks are visible: "Agent 0", "Agent 1", and "Agent 2". A "..." symbol to the right of "Agent 2" indicates the continuation of this process for further agents.

    * Each agent block contains two main sub-components:

        * **Code Component:** A rectangular box at the top of each agent block.

            * For "Agent 0", this box is labeled "Base Code" (white background).

            * For "Agent 1", this box is labeled "Agent 1 Code" (light gray background).

            * For "Agent 2", this box is labeled "Agent 2 Code" (light gray background).

        * **Benchmarks Component:** A rectangular box below the code component, labeled "Benchmarks" (light gray background).

            * Nested within the "Benchmarks" component are three smaller, darker gray rectangular boxes, consistently labeled: "Bench 1", "Bench 2", "Bench 3". These are present for Agent 0, Agent 1, and Agent 2.

* **Meta-Improvement Arrows (Blue):**

    * Horizontal blue arrows connect the output of one agent's evaluation to the input of the next agent. Each arrow is labeled "Meta-Improvement" (text oriented vertically, reading bottom-to-top).

    * The first arrow originates from the right side of the "Agent 0" block and points rightwards into the "Agent 1" block.

    * The second arrow originates from the right side of the "Agent 1" block and points rightwards into the "Agent 2" block.

    * The third arrow originates from the right side of the "Agent 2" block and points rightwards towards the "..." symbol, indicating the process continues.

* **Best Agent Brackets (Bottom):**

    * Three horizontal brackets with labels are positioned at the bottom of the diagram, indicating cumulative scope.

    * **Base Agent:** A short black bracket spans horizontally directly beneath the "Agent 0" block, labeled "Base Agent".

    * **Best Agent 0, 1:** A longer black bracket spans horizontally beneath both "Agent 0" and "Agent 1" blocks, labeled "Best Agent 0, 1".

    * **Best Agent 0, ..., 2:** The longest black bracket spans horizontally beneath "Agent 0", "Agent 1", and "Agent 2" blocks, extending further to the right under the "..." symbol. It is labeled "Best Agent 0, ..., 2".

### Detailed Analysis

The diagram illustrates a sequential, iterative development process.

1. **Agent 0** starts with "Base Code" and is evaluated against "Benchmarks" (Bench 1, Bench 2, Bench 3).

2. The results or insights from Agent 0's performance on these benchmarks are fed into a "Meta-Improvement" process.

3. This "Meta-Improvement" process then generates or refines the code for **Agent 1** ("Agent 1 Code"). Agent 1 is subsequently evaluated against the *same* set of "Benchmarks" (Bench 1, Bench 2, Bench 3).

4. The cycle repeats: Agent 1's performance informs the "Meta-Improvement" process, which in turn leads to **Agent 2** ("Agent 2 Code"), also evaluated against the identical "Benchmarks".

5. The "..." indicates that this iterative process of "Meta-Improvement" and agent generation/evaluation continues indefinitely or for a specified number of iterations.

The horizontal brackets at the bottom indicate the scope of "best agent" selection:

* "Base Agent" refers specifically to Agent 0.

* "Best Agent 0, 1" implies a comparison or selection between Agent 0 and Agent 1, likely choosing the one with superior performance.

* "Best Agent 0, ..., 2" suggests a cumulative selection, identifying the best performing agent among Agent 0, Agent 1, Agent 2, and potentially all subsequent agents generated in the process.
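The cumulative scope of the three brackets can be illustrated with a running maximum over per-agent scores. This is a hedged sketch: `running_best` and `bracket_label` are hypothetical helpers, each agent is assumed to be summarized by a single score, and ties are broken in favor of the earlier agent purely for illustration.

```python
from itertools import accumulate
from typing import List, Sequence


def running_best(scores: Sequence[float]) -> List[int]:
    """For each prefix scores[0..k], return the index of the best agent.

    Ties keep the earlier agent, an arbitrary choice made for this sketch.
    """
    def pick(best: int, k: int) -> int:
        return k if scores[k] > scores[best] else best

    # accumulate yields index 0 first, then folds in each later index.
    return list(accumulate(range(len(scores)), pick))


def bracket_label(k: int) -> str:
    """Reproduce the bracket text shown under agents 0..k in the diagram."""
    if k == 0:
        return "Base Agent"
    if k == 1:
        return "Best Agent 0, 1"
    return f"Best Agent 0, ..., {k}"


# Made-up scores for Agent 0, Agent 1, Agent 2.
for k, best in enumerate(running_best([0.4, 0.3, 0.6])):
    print(f"{bracket_label(k)} -> Agent {best}")
```

With these made-up scores, the first two brackets both resolve to Agent 0 and only the third resolves to Agent 2.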

### Key Observations

* **Iterative Refinement:** The core concept is an iterative loop where each new agent is an improvement or modification of the previous one, guided by performance feedback.

* **Consistent Evaluation:** All agents are evaluated using the exact same set of benchmarks, ensuring a fair and comparable assessment of improvement.

* **Meta-Learning/Optimization:** "Meta-Improvement" is the critical mechanism driving the evolution of agents, suggesting an automated or semi-automated process that learns how to improve the agents themselves.

* **Cumulative Best:** The "Best Agent" labels indicate a strategy of retaining the highest-performing agent found so far across all iterations.

### Interpretation

This diagram depicts a common paradigm in artificial intelligence, machine learning, and software engineering, particularly in areas like AutoML, evolutionary computation, or self-improving systems.

The "Base Code" for "Agent 0" represents an initial model, algorithm, or software component. Its performance is measured against a standard suite of "Benchmarks" (Bench 1, Bench 2, Bench 3). The "Meta-Improvement" step is crucial; it signifies a higher-level learning or optimization process. This process analyzes the results from the benchmarks (e.g., identifying weaknesses, strengths, or areas for optimization) and uses this meta-knowledge to generate or modify the code for the next agent. For instance, "Meta-Improvement" could be a hyperparameter optimization algorithm, an architecture search algorithm, or a system that learns to write better code based on past performance.

The continuous loop implies that the system is designed to progressively enhance the agent's capabilities. The "Best Agent 0, ..., 2" bracket suggests that the ultimate goal is not just to create a new agent, but to identify and retain the most effective agent discovered throughout the entire iterative process. This framework allows for automated discovery of better solutions without constant human intervention in the core code generation, relying instead on the "Meta-Improvement" layer to drive progress. The consistency of benchmarks is vital for objectively measuring this progress and ensuring that improvements are genuine and not merely artifacts of changing evaluation criteria.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

## Diagram: Iterative Agent Improvement Process

### Overview

The image depicts a diagram illustrating an iterative process of agent improvement through meta-improvement. It shows a sequence of agents (Agent 0, Agent 1, Agent 2, and continuing as indicated by "..."), each building upon the code and benchmarks of the previous agent. The diagram highlights the concept of "meta-improvement" as the driving force behind the progression.

### Components/Axes

The diagram consists of several key components:

* **Agents:** Represented as rectangular blocks labeled "Agent 0", "Agent 1", "Agent 2", and "...".

* **Code & Benchmarks:** Each agent block contains a code section ("Base Code" for Agent 0, "Agent 1 Code" and "Agent 2 Code" for the later agents) and a "Benchmarks" section holding "Bench 1", "Bench 2", and "Bench 3".

* **Meta-Improvement Arrows:** Blue arrows connecting the agents, labeled "Meta-Improvement", indicating the direction of improvement.

* **Base Agent Label:** A label below Agent 0, reading "Base Agent".

* **Best Agent 0, 1 Label:** A label spanning Agent 0 and Agent 1, reading "Best Agent 0, 1".

* **Best Agent 0, ..., 2 Label:** A label spanning Agent 0, Agent 1, and Agent 2, reading "Best Agent 0, ..., 2".

There are no explicit axes in this diagram. The arrangement is primarily spatial, illustrating a sequential process.

### Detailed Analysis

The diagram shows a progression of agents, starting with Agent 0, which contains "Base Code" and "Benchmarks" along with three specific benchmarks labeled "Bench 1", "Bench 2", and "Bench 3".

Agent 1 builds upon Agent 0, containing "Agent 1 Code" and "Benchmarks", and also includes "Bench 1", "Bench 2", and "Bench 3". The arrow labeled "Meta-Improvement" points from Agent 0 to Agent 1.

Agent 2 continues the progression, containing "Agent 2 Code" and "Benchmarks", and again includes "Bench 1", "Bench 2", and "Bench 3". Another "Meta-Improvement" arrow points from Agent 1 to Agent 2.

The ellipsis ("...") after Agent 2 indicates that this process continues indefinitely.

The labels "Best Agent 0, 1" and "Best Agent 0, ..., 2" suggest that the best performing agent at each stage is selected and used as the basis for the next iteration.

### Key Observations

* The diagram emphasizes an iterative process of improvement.

* Each agent retains the same set of benchmarks ("Bench 1", "Bench 2", "Bench 3"), suggesting these benchmarks are used to evaluate the performance of each agent.

* The "Meta-Improvement" label suggests that the agent's own code is what gets optimized between iterations, although the diagram does not show how.

* The diagram does not provide any quantitative data about the performance of the agents.

### Interpretation

This diagram illustrates a concept of automated agent improvement, likely within a machine learning or artificial intelligence context. The "Base Code" represents the initial starting point, and the "Benchmarks" provide a standardized way to measure performance. The "Meta-Improvement" process suggests an algorithm or mechanism that analyzes the performance of each agent and generates improved code for the next iteration.

The labels "Best Agent 0, 1" and "Best Agent 0, ..., 2" indicate a selection process where the best-performing agent at each stage is chosen to continue the improvement cycle. This is a common technique in evolutionary algorithms and reinforcement learning.

The diagram is conceptual and does not provide specific details about the implementation of the meta-improvement process. It serves as a high-level overview of the iterative agent improvement workflow. The consistent use of the same benchmarks across agents suggests a focus on consistent and comparable evaluation. The diagram implies a continuous cycle of improvement, with each agent building upon the strengths of its predecessors.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## Diagram: Iterative Agent Meta-Improvement Process

### Overview

The image is a technical flowchart illustrating a sequential, iterative process for improving AI agents. It depicts a cycle where an initial "Base Agent" (Agent 0) is evaluated, and through a process labeled "Meta-Improvement," it generates a successor (Agent 1). This process repeats, with each new agent being an improved version of the previous one, based on benchmark performance. The diagram emphasizes cumulative selection, where the "best" agent from all previous iterations is used as the foundation for the next meta-improvement step.

### Components/Axes

The diagram is structured horizontally from left to right, representing progression through time or iterations.

**1. Agent Blocks (Primary Components):**

* **Agent 0 (Leftmost):** Labeled "Agent 0". Contains a vertical stack:

    * "Base Code" (top, white background)

    * "Benchmarks" (middle, light gray background)

    * A sub-list under Benchmarks: "Bench 1", "Bench 2", "Bench 3" (each in darker gray boxes).

* **Agent 1 (Center):** Labeled "Agent 1". Contains a vertical stack:

    * "Agent 1 Code" (top, light gray background)

    * "Benchmarks" (middle, light gray background)

    * A sub-list under Benchmarks: "Bench 1", "Bench 2", "Bench 3" (each in darker gray boxes).

* **Agent 2 (Rightmost):** Labeled "Agent 2". Contains a vertical stack:

    * "Agent 2 Code" (top, light gray background)

    * "Benchmarks" (middle, light gray background)

    * A sub-list under Benchmarks: "Bench 1", "Bench 2", "Bench 3" (each in darker gray boxes).

* **Ellipsis (Far Right):** An arrow points from Agent 2 to an ellipsis ("..."), indicating the process continues indefinitely.

**2. Process Arrows (Meta-Improvement):**

* A blue arrow labeled "Meta-Improvement" (text written vertically) originates from the "Base Agent" bracket and points to the "Agent 1 Code" block.

* A second blue arrow labeled "Meta-Improvement" originates from the "Best Agent 0, 1" bracket and points to the "Agent 2 Code" block.

* A third blue arrow labeled "Meta-Improvement" originates from the "Best Agent 0, ..., 2" bracket and points towards the ellipsis.

**3. Selection Brackets (Bottom):**

* **"Base Agent" Bracket:** A horizontal bracket spans the width of the "Agent 0" block. A line connects its center to the first "Meta-Improvement" arrow.

* **"Best Agent 0, 1" Bracket:** A wider horizontal bracket spans the combined width of "Agent 0" and "Agent 1". A line connects its center to the second "Meta-Improvement" arrow.

* **"Best Agent 0, ..., 2" Bracket:** The widest horizontal bracket spans the combined width of "Agent 0", "Agent 1", and "Agent 2". A line connects its center to the third "Meta-Improvement" arrow.

### Detailed Analysis

* **Agent Structure:** Each agent (0, 1, 2) has an identical internal structure for evaluation: a code block ("Base Code" or "Agent N Code") and a "Benchmarks" block containing three specific benchmarks ("Bench 1", "Bench 2", "Bench 3").

* **Progression of Code:** The code block's label changes from "Base Code" (Agent 0) to "Agent 1 Code" and "Agent 2 Code", indicating the code itself is being modified or replaced in each iteration.

* **Meta-Improvement Flow:** The "Meta-Improvement" process is not applied to the immediately preceding agent alone. The brackets indicate it is applied to the **best agent identified from all previous iterations**.

    * To create Agent 1: Meta-improvement is applied to the "Base Agent" (Agent 0).

    * To create Agent 2: Meta-improvement is applied to the best agent chosen from the pool of Agent 0 and Agent 1 ("Best Agent 0, 1").

    * To create the next agent (after Agent 2): Meta-improvement will be applied to the best agent chosen from the pool of Agent 0, Agent 1, and Agent 2 ("Best Agent 0, ..., 2").

* **Spatial Grounding:** The legend/labels ("Meta-Improvement") are placed vertically alongside the blue arrows that connect the selection brackets (bottom) to the code blocks of the *next* agent (top-right relative to the bracket).

### Key Observations

1. **Iterative and Cumulative:** The process is explicitly iterative, with each cycle producing a new agent. The selection mechanism for the meta-improvement input is cumulative, always considering the entire history of agents.

2. **Consistent Benchmarking:** The same set of three benchmarks ("Bench 1", "Bench 2", "Bench 3") is used to evaluate every agent, providing a consistent performance metric across iterations.

3. **Directional Flow:** The flow is strictly left-to-right (chronological) and bottom-to-top (from selection to application of improvement). The "Meta-Improvement" arrows always point from the selected best agent(s) to the code of the next agent.

4. **Open-Ended Process:** The ellipsis ("...") signifies that this is a potentially infinite loop of self-improvement.

### Interpretation

This diagram models a **recursive self-improvement system for AI agents**. The core idea is that an agent's code can be automatically improved ("Meta-Improvement") based on its performance against a fixed set of benchmarks. Crucially, the system does not simply improve the last agent; it maintains a population (or history) and selects the overall best performer as the starting point for the next improvement cycle. This is a safeguard against regression—if a new agent (e.g., Agent 1) performs worse than a previous one (Agent 0), the system would revert to using Agent 0 as the "Best Agent" for the next meta-improvement step.
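The regression safeguard can be illustrated by comparing two parent-selection policies on the same made-up sequence of score changes. This is a hedged toy model, not something the diagram specifies: `parent_chain`, `parent_best_of_history`, and `simulate` are hypothetical names, and each meta-improvement step is assumed to add a fixed delta to its parent's score.

```python
from typing import Callable, List

SelectParent = Callable[[List[int]], int]


def parent_chain(scores: List[int]) -> int:
    """Always improve the most recent agent."""
    return len(scores) - 1


def parent_best_of_history(scores: List[int]) -> int:
    """Improve the best agent seen so far (the earliest wins ties)."""
    return max(range(len(scores)), key=lambda i: scores[i])


def simulate(base_score: int, deltas: List[int], select_parent: SelectParent) -> List[int]:
    """Toy model: each new agent scores its parent's score plus a delta."""
    scores = [base_score]
    for delta in deltas:
        parent = select_parent(scores)
        scores.append(scores[parent] + delta)
    return scores


# Made-up numbers: the first step regresses by 15, the second gains 20.
print(simulate(50, [-15, 20], parent_chain))            # [50, 35, 55]
print(simulate(50, [-15, 20], parent_best_of_history))  # [50, 35, 70]
```

In this toy run the weaker Agent 1 is never used as a parent under the best-of-history policy, so the second gain is applied to Agent 0 rather than to the regressed agent.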

The process suggests a research or engineering framework where the goal is to autonomously generate increasingly capable agents. The fixed benchmarks act as the objective function guiding the improvement. The "Meta-Improvement" step itself is a black box in this diagram; it represents the algorithm or process (e.g., another AI, a genetic algorithm, program synthesis) that takes an agent's code and its performance data and produces a modified, hopefully better, version of the code. The diagram's primary message is about the **selection and iteration protocol**, not the specific mechanism of improvement.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Flowchart: Agent Development and Benchmarking Process

### Overview

The diagram illustrates an iterative improvement process in which each agent's code is refined through benchmark evaluation and meta-improvement. Three agents (0, 1, 2) are shown with explicit connections, and the "..." indicates that further agents follow.

### Components/Axes

1. **Agents**:

   - Agent 0 (Base Agent)

   - Agent 1

   - Agent 2

   - ... (implied continuation)

2. **Code Components**:

   - Base Code (Agent 0)

   - Agent 1 Code

   - Agent 2 Code

3. **Evaluation Framework**:

   - Benchmarks (3 per agent: Bench 1, Bench 2, Bench 3)

4. **Meta-Improvement Arrows**:

   - Directed from Agent 0 → Agent 1 → Agent 2 → ...

   - Label: "Meta-Improvement"

### Detailed Analysis

- **Agent 0**:

  - Contains "Base Code" (highlighted)

  - Three benchmarks (Bench 1-3)

  - Labeled as "Base Agent"

- **Agent 1**:

  - Contains "Agent 1 Code"

  - Three benchmarks (Bench 1-3)

  - Connected to Agent 0 via "Meta-Improvement" arrow

- **Agent 2**:

  - Contains "Agent 2 Code"

  - Three benchmarks (Bench 1-3)

  - Connected to Agent 1 via "Meta-Improvement" arrow

- **Best Agent Selection**:

  - "Best Agent 0, 1" (bottom-left)

  - "Best Agent 0, ..., 2" (bottom-right)

  - Indicates iterative selection across agents

### Key Observations

1. **Iterative Refinement**: Each agent's code is positioned as an improvement over the previous through meta-improvement cycles.

2. **Benchmark Consistency**: All agents share identical benchmark structures (3 per agent), suggesting standardized evaluation criteria.

3. **Scalability**: The "..." notation implies the system can accommodate additional agents beyond Agent 2.

4. **Hierarchical Selection**: The "Best Agent" labels indicate a comparative evaluation process across agents.

### Interpretation

This diagram represents an evolutionary optimization framework where:

1. **Base Agent (Agent 0)** serves as the initial reference point

2. **Meta-Improvement** arrows suggest knowledge transfer or algorithmic refinement between agents

3. **Benchmarking** acts as the evaluation mechanism for code performance

4. **Best Agent Selection** implies a competitive process where agents are ranked based on benchmark results

The structure suggests a reinforcement learning or genetic algorithm approach where:

- Each new agent incorporates improvements from previous iterations

- Benchmarks provide objective performance metrics

- The "Best Agent" selection represents the best agent found so far across iterations, not a guaranteed global optimum

Notably, the absence of quantitative performance metrics in the diagram leaves the exact nature of "improvement" undefined - it could represent speed, accuracy, resource efficiency, or other measurable criteria. The consistent benchmark structure across agents implies standardized evaluation parameters, while the meta-improvement arrows suggest cumulative knowledge transfer between iterations.
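Because the diagram leaves the measure of "improvement" open, any ranking of agents needs some rule for collapsing the three benchmark results into one number. The sketch below shows one possible rule, a weighted mean; `aggregate` and its `weights` parameter are hypothetical, and the assumption that every benchmark returns a higher-is-better score is not taken from the diagram.

```python
from typing import Mapping, Optional


def aggregate(
    results: Mapping[str, float],
    weights: Optional[Mapping[str, float]] = None,
) -> float:
    """Collapse per-benchmark scores into one number via a weighted mean.

    Assumes higher is better on every benchmark. With no weights given,
    all benchmarks count equally.
    """
    if not results:
        raise ValueError("at least one benchmark result is required")
    if weights is None:
        weights = {name: 1.0 for name in results}
    total_weight = sum(weights[name] for name in results)
    weighted_sum = sum(results[name] * weights[name] for name in results)
    return weighted_sum / total_weight


# Made-up results for one agent on the three benchmarks.
results = {"Bench 1": 1.0, "Bench 2": 0.0, "Bench 3": 0.5}
print(aggregate(results))                                               # 0.5
print(aggregate(results, {"Bench 1": 2.0, "Bench 2": 1.0, "Bench 3": 1.0}))  # 0.625
```

Changing the weights changes which agent counts as "best", which is one reason a fixed, shared benchmark suite matters for comparing iterations.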
