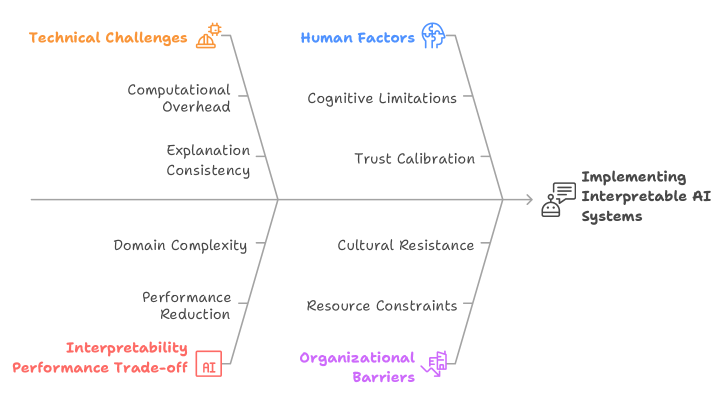

## Fishbone Diagram: Implementing Interpretable AI Systems

### Overview

The image is a fishbone (Ishikawa) diagram of the factors that influence the implementation of interpretable AI systems. It groups these factors into four categories of challenges and barriers: technical challenges, human factors, organizational barriers, and the interpretability-performance trade-off.

### Components/Axes

* **Main Axis:** A horizontal arrow pointing to the right, leading to the effect being analyzed: "Implementing Interpretable AI Systems".

* **Categories:** The main "bones" of the diagram represent the categories of factors:

    * **Technical Challenges:** Located on the top left, marked with an orange hard hat icon.

    * **Human Factors:** Located on the top right, marked with a blue brain icon.

    * **Interpretability-Performance Trade-off:** Located on the bottom left, marked with a red AI icon.

    * **Organizational Barriers:** Located on the bottom right, marked with a purple building icon with downward arrows.

* **Sub-Categories:** Each main category branches into one or more sub-categories:

    * **Technical Challenges:** Computational Overhead, Explanation Consistency, Domain Complexity, Performance Reduction.

    * **Human Factors:** Cognitive Limitations, Trust Calibration, Cultural Resistance, Resource Constraints.

    * **Interpretability-Performance Trade-off:** A single branch that repeats the category label.

    * **Organizational Barriers:** A single branch that repeats the category label.

* **Effect:** "Implementing Interpretable AI Systems" is represented by a speech bubble with a robot icon.

### Content Details

The diagram outlines the following factors:

* **Technical Challenges (Orange):**

    * Computational Overhead: The additional computational resources needed to make AI systems interpretable.

    * Explanation Consistency: The reliability and uniformity of the explanations an AI system provides.

    * Domain Complexity: The difficulty of understanding and modeling complex domains.

    * Performance Reduction: The potential drop in performance when interpretability is prioritized.

* **Human Factors (Blue):**

    * Cognitive Limitations: Limits on how well people can understand and process AI explanations.

    * Trust Calibration: Matching the level of trust people place in an AI system to how reliable the system actually is.

    * Cultural Resistance: Reluctance to adopt and use AI systems because of cultural norms or beliefs.

    * Resource Constraints: Limited resources (e.g., time, expertise) for implementing interpretable AI.

* **Interpretability-Performance Trade-off (Red):**

    * Interpretability-Performance Trade-off: The inherent tension between making AI systems interpretable and maintaining high performance.

* **Organizational Barriers (Purple):**

    * Organizational Barriers: Obstacles within organizations that hinder the adoption of interpretable AI.

### Key Observations

* The diagram presents a structured view of the challenges and barriers to implementing interpretable AI systems.

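* It groups the causes into technical, human, and organizational factors, showing that the challenges are not purely technical.

* It gives the interpretability-performance trade-off a branch of its own, in addition to listing Performance Reduction under Technical Challenges, which underscores how central the trade-off is.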

### Interpretation

The fishbone diagram shows that implementing interpretable AI systems is a multifaceted challenge. It is not only a technical problem; it also involves human understanding, trust, and organizational readiness. The diagram suggests that successful implementation requires addressing all of these factors together rather than in isolation. The interpretability-performance trade-off is a central theme, indicating that efforts to make AI more transparent may cost some performance. Overcoming organizational barriers and accounting for human factors are crucial for the successful adoption of interpretable AI systems.