
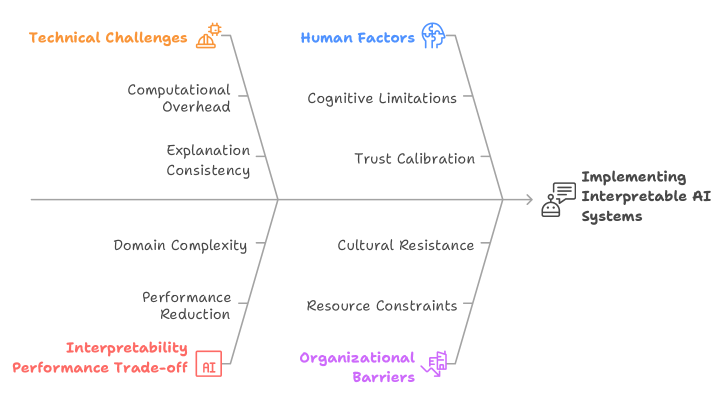

## Diagram: Factors Influencing Interpretable AI Implementation

### Overview

The image is a diagram of the factors that influence the implementation of interpretable AI systems. The factors branch off a central arrow labeled "Implementing Interpretable AI Systems" and fall into two main groups: "Technical Challenges" (left side, orange) and "Human Factors" (right side, blue). Two further labels appear: "Interpretability Performance Trade-off" at the bottom (orange) and "Organizational Barriers" (purple).

### Components

* **Central Arrow:** Points from left to right, labeled "Implementing Interpretable AI Systems". A small icon of a computer chip with lines emanating from it is positioned to the right of the text.

* **Left Branch (Technical Challenges):** Labeled "Technical Challenges" in orange, with a small icon of a gear with sparkles.

    * Computational Overhead

    * Explanation Consistency

    * Domain Complexity

    * Performance Reduction

* **Right Branch (Human Factors):** Labeled "Human Factors" in blue, with a small icon of a human head.

    * Cognitive Limitations

    * Trust Calibration

    * Cultural Resistance

    * Resource Constraints

* **Bottom Label:** "Interpretability Performance Trade-off" in orange, with an AI icon.

* **Organizational Barriers:** Labeled "Organizational Barriers" in purple, with a small icon of a building.
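
For readers who want to reuse the diagram's grouping programmatically (for example, as a review checklist), the hierarchy listed above could be captured in a small data structure. This is only a sketch of one possible encoding: the group and factor labels are transcribed from the description, while the names `FACTORS`, `group_of`, and `checklist` are hypothetical and not part of the original figure.

```python
from typing import Optional

# Factor groups transcribed from the diagram description.
# The names FACTORS, group_of, and checklist are illustrative assumptions.
FACTORS = {
    "Technical Challenges": [
        "Computational Overhead",
        "Explanation Consistency",
        "Domain Complexity",
        "Performance Reduction",
    ],
    "Human Factors": [
        "Cognitive Limitations",
        "Trust Calibration",
        "Cultural Resistance",
        "Resource Constraints",
    ],
    # Labels that appear in the description without listed sub-factors.
    "Interpretability Performance Trade-off": [],
    "Organizational Barriers": [],
}


def group_of(factor: str) -> Optional[str]:
    """Return the group a factor is listed under, or None if it is not listed."""
    for group, items in FACTORS.items():
        if factor in items:
            return group
    return None


def checklist() -> list:
    """Flatten the hierarchy into 'group: factor' lines; bare labels stay as-is."""
    lines = []
    for group, items in FACTORS.items():
        if items:
            lines.extend(f"{group}: {item}" for item in items)
        else:
            lines.append(group)
    return lines
```

Calling `group_of("Trust Calibration")` looks up the branch a factor sits on, and `checklist()` yields one line per factor plus one line for each standalone label.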

### Detailed Analysis

The diagram contains no numerical data; it is a conceptual representation of factors. The "Technical Challenges" and "Human Factors" branches are arranged symmetrically around the central arrow.

* **Technical Challenges:** The factors listed suggest that making AI interpretable can introduce computational costs, inconsistencies in explanations, difficulties with complex domains, and a reduction in performance.

* **Human Factors:** These factors indicate that limits on human cognition, the need to calibrate trust in AI, cultural resistance to change, and resource limitations can all hinder the implementation of interpretable AI.

* **Interpretability Performance Trade-off:** This label suggests that there is an inherent tension between making AI interpretable and maintaining its performance.

* **Organizational Barriers:** This label lists no sub-factors; it indicates that organizational structures and processes can hinder the implementation of interpretable AI.

### Key Observations

The diagram emphasizes that implementing interpretable AI is not solely a technical problem. It requires addressing both technical challenges and human factors. The "Interpretability Performance Trade-off" highlights a key constraint in this field. The inclusion of "Organizational Barriers" suggests that successful implementation requires more than just technical solutions and user acceptance; it also needs organizational support and change management.

### Interpretation

This diagram illustrates a systems-thinking approach to interpretable AI. Rather than focusing only on algorithms and models, it considers the broader context of implementation and suggests that a successful strategy must address all of these factors together. The symmetrical arrangement of "Technical Challenges" and "Human Factors" suggests that the two are given equal weight. The "Interpretability Performance Trade-off" is a critical consideration: designers must balance the need for interpretability against the need for accuracy and efficiency. The inclusion of "Organizational Barriers" is a pragmatic acknowledgement that even the best technical solutions and user interfaces can fail if the organization isn't prepared to adopt them.

The diagram is a high-level overview and gives no specific guidance on how to address these challenges, but it serves as a useful framework for thinking about the complexities of implementing interpretable AI systems.