## Diagram: Implementing Interpretable AI Systems

### Overview

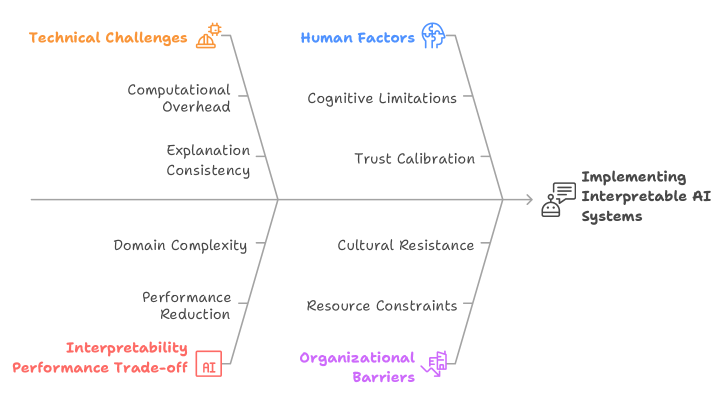

The diagram illustrates the interplay between **Technical Challenges** and **Human Factors** in implementing interpretable AI systems, converging toward an **Interpretability Performance Trade-off**. It emphasizes the complexity of balancing technical feasibility with human-centric considerations.

### Components/Axes

1. **Main Branches**:

   - **Technical Challenges** (orange):
     - Computational Overhead
     - Explanation Consistency
     - Domain Complexity
     - Performance Reduction
   - **Human Factors** (blue):
     - Cognitive Limitations
     - Trust Calibration
     - Cultural Resistance
     - Resource Constraints
   - **Interpretability Performance Trade-off** (red): Central node connecting both branches.
   - **Organizational Barriers** (purple): External factor influencing the trade-off.

2. **Flow**:

   - Technical and human factors feed into the trade-off, which then informs the goal of **Implementing Interpretable AI Systems** (text box with speech bubble icon).

3. **Legend**:

   - Colors map to categories:
     - Orange = Technical Challenges
     - Blue = Human Factors
     - Red = Trade-off
     - Purple = Organizational Barriers
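
The components and flow listed above can be sketched as a small directed graph. This is only an illustrative encoding, not something the diagram itself provides: the node labels and legend colors are taken from the description, while the edge directions, the constant names (`CATEGORIES`, `TRADE_OFF`, `GOAL`), and the helpers `build_edges` and `upstream_of` are assumptions made for the example.

```python
from collections import defaultdict

# Labels copied from the diagram description.
TRADE_OFF = "Interpretability Performance Trade-off"
GOAL = "Implementing Interpretable AI Systems"

# Category -> (legend color, member nodes). Hypothetical structure.
CATEGORIES = {
    "Technical Challenges": ("orange", [
        "Computational Overhead",
        "Explanation Consistency",
        "Domain Complexity",
        "Performance Reduction",
    ]),
    "Human Factors": ("blue", [
        "Cognitive Limitations",
        "Trust Calibration",
        "Cultural Resistance",
        "Resource Constraints",
    ]),
    # Described as an external factor with no listed sub-items.
    "Organizational Barriers": ("purple", []),
}


def build_edges():
    """Build source -> [targets] edges.

    Assumed direction: member -> category -> trade-off -> goal,
    following the prose "Flow" description.
    """
    edges = defaultdict(list)
    for category, (_color, members) in CATEGORIES.items():
        for member in members:
            edges[member].append(category)
        edges[category].append(TRADE_OFF)
    edges[TRADE_OFF].append(GOAL)
    return dict(edges)


def upstream_of(target, edges):
    """Return, sorted, every node that has a path leading to target."""
    parents = defaultdict(set)
    for src, dsts in edges.items():
        for dst in dsts:
            parents[dst].add(src)
    seen, stack = set(), [target]
    while stack:
        node = stack.pop()
        for parent in parents[node]:
            if parent not in seen:
                seen.add(parent)
                stack.append(parent)
    return sorted(seen)


EDGES = build_edges()
```

Calling `upstream_of(TRADE_OFF, EDGES)` lists every factor that feeds the trade-off under these assumed edges, which makes it easy to check that both branches and the organizational barrier converge on the same node.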

### Detailed Analysis

- **Technical Challenges** focus on computational and algorithmic limitations (e.g., "Computational Overhead," "Domain Complexity").

- **Human Factors** address psychological and societal barriers (e.g., "Cognitive Limitations," "Cultural Resistance").

- **Interpretability Performance Trade-off** acts as a bottleneck, highlighting the tension between accuracy and explainability.

- **Organizational Barriers** (purple) are positioned as an external constraint, suggesting institutional or infrastructural challenges.

### Key Observations

- The diagram prioritizes **explanation consistency** and **trust calibration** as critical nodes.

- "Performance Reduction" (technical) and "Resource Constraints" (human) are listed under their respective branches, yet both can also be read as downstream effects of the trade-off.

- No numerical data is present; the diagram is conceptual, emphasizing relationships over quantifiable metrics.

### Interpretation

The diagram underscores that implementing interpretable AI requires navigating **technical trade-offs** (e.g., sacrificing performance for explainability) while addressing **human-centric barriers** (e.g., cultural resistance to AI decisions). The **Interpretability Performance Trade-off** serves as a focal point, illustrating that no single solution satisfies all stakeholders. Organizational barriers further complicate adoption, suggesting that technical and human factors alone are insufficient without systemic support.

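This framework can also be read in Peircean terms. The diagram is the **sign**, specifically an **icon**, because its layout mirrors the structure it depicts. The implementation problem, with its technical and human factors, is the **object**. The reader's resulting understanding of the trade-off is the **interpretant**, which guides action toward interpretable AI systems.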