## Diagram: Entity-Relation Association Architecture

### Overview

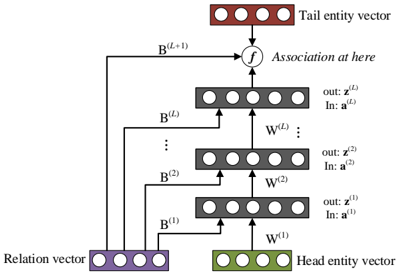

The diagram illustrates a multi-layered neural network architecture for entity-relation association. It depicts the flow of information from a **Head entity vector** and **Relation vector** through sequential processing blocks to produce a **Tail entity vector**. The architecture includes multiple transformation layers (B and W) and a final association function (f).

### Components/Axes

1. **Key Elements**:

- **Head entity vector**: Green block at the bottom-right.

- **Relation vector**: Purple block at the bottom-left.

- **Tail entity vector**: Red block at the top-right.

- **Processing blocks**:

  - **B^(1) to B^(L+1)**: Vertical stack of gray blocks on the left, labeled with superscripts (B^(1), B^(2), ..., B^(L+1)).

  - **W^(1) to W^(L)**: Vertical stack of gray blocks in the center, labeled with superscripts (W^(1), W^(2), ..., W^(L)).

- **Arrows**: Indicate directional flow (e.g., from Head entity vector to B^(1), then upward through B^(2) to B^(L+1)).

- **Function "f"**: Connects B^(L+1) to the Tail entity vector, labeled "Association at here."

2. **Color Coding**:

- **Green**: Head entity vector.

- **Purple**: Relation vector.

- **Red**: Tail entity vector.

- **Gray**: Processing blocks (B and W).

3. **Textual Labels**:

- Inputs (one label per W block): "In: a^(1)" at W^(1), "In: a^(2)" at W^(2), ..., "In: a^(L)" at W^(L).

- Outputs (one label per W block): "out: z^(1)" at W^(1), "out: z^(2)" at W^(2), ..., "out: z^(L)" at W^(L).

- Function: "f" (association step).

### Detailed Analysis

- **Flow Direction**:

1. The **Head entity vector** (green) and **Relation vector** (purple) are combined at B^(1).

2. The output of B^(1) is passed to W^(1), producing "out: z^(1)."

3. The same pattern repeats for each layer i = 2, ..., L: B^(i) processes its input, W^(i) emits "out: z^(i)", and the result moves one level up the stack.

4. At the top layer (B^(L+1)), the output is fed into function "f," which generates the **Tail entity vector** (red).

- **Component Relationships**:

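- The diagram does not define the **B^(i)** blocks. The W, a, z labels follow the usual notation for a feed-forward network, so the B blocks may denote bias terms, though they could also be more general transformation layers.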

- The **W^(i)** blocks appear to be weight matrices or linear transformations.

- Function "f" is the final association step: it maps the output of B^(L+1) to the Tail entity vector.
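
The flow described above can be sketched in a few lines of Python. This is only one possible reading of the diagram, not the authors' implementation: it assumes the head and relation vectors are combined by concatenation, that each B block adds an offset vector and applies `tanh`, that each W block is a matrix multiplication, and that `f` is supplied by the caller. The helper names (`b_block`, `matvec`, `forward`, `init_params`) and all sizes are hypothetical.

```python
import math
import random

def matvec(W, a):
    """Multiply matrix W (a list of rows) by vector a."""
    return [sum(w * x for w, x in zip(row, a)) for row in W]

def b_block(x, b):
    """Assumed B block: shift the input by b, then squash with tanh."""
    return [math.tanh(xi + bi) for xi, bi in zip(x, b)]

def forward(head, relation, Bs, Ws, f):
    """Chain B^(1), W^(1), ..., B^(L), W^(L), B^(L+1), then apply f."""
    if len(Bs) != len(Ws) + 1:
        raise ValueError("expected L+1 B blocks for L W blocks")
    x = head + relation          # assumed combination: concatenation
    for b, W in zip(Bs, Ws):     # zip stops after the first L B blocks
        a = b_block(x, b)        # corresponds to "In: a^(l)"
        x = matvec(W, a)         # corresponds to "out: z^(l)"
    return f(b_block(x, Bs[-1])) # B^(L+1), then the association step f

def init_params(sizes, seed=0):
    """Random illustrative parameters; sizes[i] is the width at B^(i+1)."""
    rng = random.Random(seed)
    Bs = [[rng.uniform(-0.1, 0.1) for _ in range(n)] for n in sizes]
    Ws = [[[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
          for n_in, n_out in zip(sizes, sizes[1:])]
    return Bs, Ws

head = [0.2, -0.1, 0.4]          # toy head entity vector
relation = [0.5, 0.3, -0.2]      # toy relation vector
Bs, Ws = init_params([6, 4, 3])  # L = 2: three B blocks, two W blocks
tail = forward(head, relation, Bs, Ws, f=lambda v: v)  # identity as a stand-in for f
```

With these toy sizes, `tail` is a 3-dimensional vector. Swapping in a different `f` or a different B block changes only the corresponding function, which mirrors how the diagram separates the stacked blocks from the final association step.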

### Key Observations

- The architecture is a stack of layers processed in order from B^(1) to B^(L+1); the diagram does not indicate layer sizes or how the layers differ from one another.

- The **Tail entity vector** depends on the cumulative output of all B and W layers.

- No numerical values or quantitative data are present; the diagram focuses on structural relationships.

### Interpretation

This diagram represents a layered neural architecture for entity-relation prediction. As described, it shows a single chain of stacked blocks, which is closer to a deep feed-forward network than to a sequence-to-sequence or transformer model, although the figure alone does not settle this. The Head and Tail entity vectors likely correspond to subject and object entities in a knowledge graph, while the Relation vector encodes their interaction. The layers B and W progressively transform the inputs, with "f" serving as the final association step, possibly a classifier or regressor. The absence of numerical data suggests this is a conceptual blueprint rather than an empirical analysis.
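
To make the knowledge-graph reading concrete, the sketch below shows one common way a predicted tail vector could be turned into an entity prediction: ranking candidate entity vectors by distance to it. The diagram does not specify this step, so the Euclidean distance, the `rank_tails` helper, and the toy entity names and vectors are all assumptions for illustration.

```python
import math

def euclidean(u, v):
    """Euclidean distance between two equal-length vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))

def rank_tails(predicted, candidates):
    """Return candidate names sorted from closest to farthest from `predicted`."""
    return sorted(candidates, key=lambda name: euclidean(predicted, candidates[name]))

# Hypothetical entity embeddings and a hypothetical predicted tail vector.
candidates = {
    "Paris": [0.9, 0.1],
    "Berlin": [0.1, 0.9],
    "Rome": [0.5, 0.5],
}
predicted = [0.8, 0.2]
ranking = rank_tails(predicted, candidates)  # closest candidate first
```

Here the closest candidate is the first element of `ranking`; a real system might instead use a learned scoring function or a softmax over all entities.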

**Critical Insight**: Stacking the B and W layers lets the model compose several transformations of the head and relation inputs before the association step. The diagram gives no indication of attention or sequence modeling, so any link to models such as BERT or GPT is speculative. The "Association at here" label marks the final function "f" as the point where the Tail entity is determined.