TECHNICAL ASSET FINGERPRINT

f204bd75b5670dc95d913b4d



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

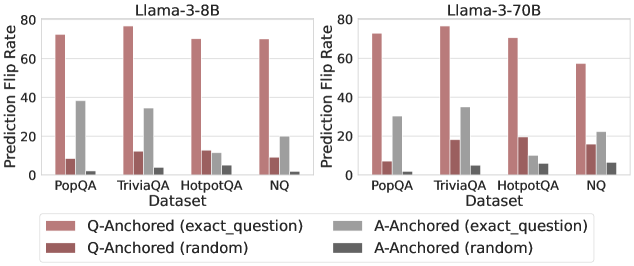

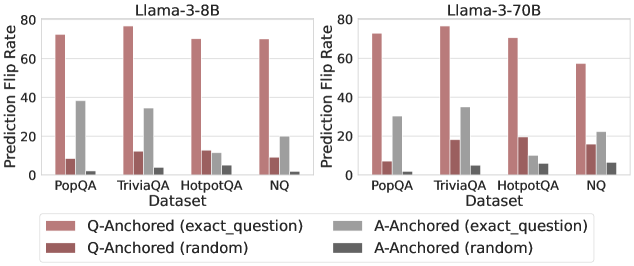

## Bar Chart: Prediction Flip Rate Comparison for Llama-3 Models

### Overview

The image presents two bar charts comparing the prediction flip rates of Llama-3-8B and Llama-3-70B models across different datasets (PopQA, TriviaQA, HotpotQA, and NQ). The charts show the prediction flip rates for question-anchored (Q-Anchored) and answer-anchored (A-Anchored) methods, with both "exact_question" and "random" variations.

### Components/Axes

* **Titles:**
  * Left Chart: Llama-3-8B
  * Right Chart: Llama-3-70B
* **Y-Axis:** Prediction Flip Rate (ranging from 0 to 80)
* **X-Axis:** Dataset (PopQA, TriviaQA, HotpotQA, NQ)
* **Legend:** Located at the bottom of the image.
  * Q-Anchored (exact\_question): Light Brown
  * Q-Anchored (random): Dark Brown
  * A-Anchored (exact\_question): Light Gray
  * A-Anchored (random): Dark Gray

### Detailed Analysis

**Left Chart: Llama-3-8B**

* **PopQA:**
  * Q-Anchored (exact\_question): Approximately 73
  * Q-Anchored (random): Approximately 8
  * A-Anchored (exact\_question): Approximately 38
  * A-Anchored (random): Approximately 1
* **TriviaQA:**
  * Q-Anchored (exact\_question): Approximately 77
  * Q-Anchored (random): Approximately 12
  * A-Anchored (exact\_question): Approximately 34
  * A-Anchored (random): Approximately 3
* **HotpotQA:**
  * Q-Anchored (exact\_question): Approximately 71
  * Q-Anchored (random): Approximately 12
  * A-Anchored (exact\_question): Approximately 11
  * A-Anchored (random): Approximately 5
* **NQ:**
  * Q-Anchored (exact\_question): Approximately 70
  * Q-Anchored (random): Approximately 12
  * A-Anchored (exact\_question): Approximately 20
  * A-Anchored (random): Approximately 5

**Right Chart: Llama-3-70B**

* **PopQA:**
  * Q-Anchored (exact\_question): Approximately 74
  * Q-Anchored (random): Approximately 8
  * A-Anchored (exact\_question): Approximately 22
  * A-Anchored (random): Approximately 1
* **TriviaQA:**
  * Q-Anchored (exact\_question): Approximately 77
  * Q-Anchored (random): Approximately 18
  * A-Anchored (exact\_question): Approximately 34
  * A-Anchored (random): Approximately 2
* **HotpotQA:**
  * Q-Anchored (exact\_question): Approximately 74
  * Q-Anchored (random): Approximately 10
  * A-Anchored (exact\_question): Approximately 10
  * A-Anchored (random): Approximately 3
* **NQ:**
  * Q-Anchored (exact\_question): Approximately 56
  * Q-Anchored (random): Approximately 16
  * A-Anchored (exact\_question): Approximately 22
  * A-Anchored (random): Approximately 6

### Key Observations

* For both models, the Q-Anchored (exact\_question) method consistently shows the highest prediction flip rates across all datasets.

* The Q-Anchored (random) method generally has low prediction flip rates.

* The A-Anchored (exact\_question) method shows moderate prediction flip rates, while A-Anchored (random) has the lowest.

* The Llama-3-70B model exhibits a noticeably lower Q-Anchored (exact\_question) prediction flip rate on the NQ dataset than the Llama-3-8B model (approximately 56 vs 70).

### Interpretation

The data suggests that anchoring the question directly (Q-Anchored, exact\_question) leads to a higher likelihood of prediction flips, indicating potential sensitivity to specific question formulations. Randomizing the question anchoring significantly reduces the flip rate, suggesting that the model relies on specific question structures for its predictions. Answer anchoring shows a lower flip rate than question anchoring, which may imply that the model is more robust to variations in the answer context. Outside NQ, the differences between the 8B and 70B models are small; on NQ, the 70B model's Q-Anchored (exact\_question) flip rate is about 14 points lower (approximately 56 vs 70), potentially indicating improved robustness.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Bar Chart: Prediction Flip Rate for Llama-3 Models

### Overview

This image presents a comparative bar chart illustrating the Prediction Flip Rate for two Llama-3 models (8B and 70B) across four different datasets: PopQA, TriviaQA, HotpotQA, and NQ. The flip rate is measured for both Question-Anchored (Q-Anchored) and Answer-Anchored (A-Anchored) scenarios, with variations based on whether the anchoring is done using the exact question or a random question.

### Components/Axes

* **X-axis:** Dataset (PopQA, TriviaQA, HotpotQA, NQ)
* **Y-axis:** Prediction Flip Rate (ranging from 0 to 80)
* **Models:** Two separate charts, one for Llama-3-8B (left) and one for Llama-3-70B (right).
* **Legend:** Located at the bottom-center of the image.
  * Q-Anchored (exact\_question) - Red
  * Q-Anchored (random) - Dark Red
  * A-Anchored (exact\_question) - Light Gray
  * A-Anchored (random) - Dark Gray

### Detailed Analysis

**Llama-3-8B (Left Chart)**

* **PopQA:**
  * Q-Anchored (exact\_question): Approximately 72.
  * Q-Anchored (random): Approximately 10.
  * A-Anchored (exact\_question): Approximately 32.
  * A-Anchored (random): Approximately 12.
* **TriviaQA:**
  * Q-Anchored (exact\_question): Approximately 76.
  * Q-Anchored (random): Approximately 12.
  * A-Anchored (exact\_question): Approximately 24.
  * A-Anchored (random): Approximately 10.
* **HotpotQA:**
  * Q-Anchored (exact\_question): Approximately 72.
  * Q-Anchored (random): Approximately 16.
  * A-Anchored (exact\_question): Approximately 16.
  * A-Anchored (random): Approximately 8.
* **NQ:**
  * Q-Anchored (exact\_question): Approximately 72.
  * Q-Anchored (random): Approximately 16.
  * A-Anchored (exact\_question): Approximately 16.
  * A-Anchored (random): Approximately 8.

**Llama-3-70B (Right Chart)**

* **PopQA:**
  * Q-Anchored (exact\_question): Approximately 72.
  * Q-Anchored (random): Approximately 24.
  * A-Anchored (exact\_question): Approximately 36.
  * A-Anchored (random): Approximately 16.
* **TriviaQA:**
  * Q-Anchored (exact\_question): Approximately 76.
  * Q-Anchored (random): Approximately 20.
  * A-Anchored (exact\_question): Approximately 28.
  * A-Anchored (random): Approximately 12.
* **HotpotQA:**
  * Q-Anchored (exact\_question): Approximately 72.
  * Q-Anchored (random): Approximately 20.
  * A-Anchored (exact\_question): Approximately 16.
  * A-Anchored (random): Approximately 8.
* **NQ:**
  * Q-Anchored (exact\_question): Approximately 72.
  * Q-Anchored (random): Approximately 20.
  * A-Anchored (exact\_question): Approximately 16.
  * A-Anchored (random): Approximately 8.

**Trends:**

* In both models, Q-Anchored (exact\_question) consistently exhibits the highest prediction flip rate across all datasets.

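* The two random variants show the lowest prediction flip rates. A-Anchored (random) is the lowest bar in every group except Llama-3-8B on PopQA, where Q-Anchored (random) (approximately 10) is slightly below A-Anchored (random) (approximately 12).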

* A-Anchored (exact\_question) generally has a higher flip rate than A-Anchored (random).

* The 70B model shows higher A-Anchored flip rates than the 8B model on PopQA and TriviaQA; on HotpotQA and NQ, the A-Anchored values are the same for both models.

### Key Observations

* The difference between Q-Anchored (exact\_question) and Q-Anchored (random) is substantial, indicating that using the exact question for anchoring significantly impacts prediction flip rate.

* The gap between A-Anchored (exact\_question) and A-Anchored (random) is nearly the same for both models: about 20 points on PopQA, 14 (8B) vs 16 (70B) on TriviaQA, and 8 on HotpotQA and NQ.

* The prediction flip rate is relatively consistent across the datasets for Q-Anchored (exact\_question).
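The gap comparisons above can be checked with a few lines of arithmetic. The sketch below is a minimal Python example that assumes the approximate bar heights listed under Detailed Analysis in this section (visual estimates, not source data) and computes the A-Anchored (exact\_question) minus A-Anchored (random) gap, in points, for each dataset. The names `A_ANCHORED` and `exact_minus_random` are illustrative only.

```python
# Approximate values read off the chart in this section (visual estimates, not source data).
# Tuple order: (A-Anchored exact_question, A-Anchored random)
A_ANCHORED = {
    "Llama-3-8B": {"PopQA": (32, 12), "TriviaQA": (24, 10), "HotpotQA": (16, 8), "NQ": (16, 8)},
    "Llama-3-70B": {"PopQA": (36, 16), "TriviaQA": (28, 12), "HotpotQA": (16, 8), "NQ": (16, 8)},
}


def exact_minus_random(values):
    """Return the exact_question minus random gap, in points, for each dataset."""
    return {ds: exact - rand for ds, (exact, rand) in values.items()}


for model, values in A_ANCHORED.items():
    print(model, exact_minus_random(values))
```

With these estimates the gaps are identical for both models except on TriviaQA (14 vs 16 points).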

### Interpretation

The data suggests that anchoring predictions to the exact question (Q-Anchored (exact\_question)) is a highly effective method for inducing prediction flips, resulting in the highest flip rates across all datasets and models. This indicates that the models are sensitive to the specific wording of the question. The lower flip rates observed with random question anchoring suggest that the models are less susceptible to irrelevant or unrelated information.

The 70B model's higher A-Anchored flip rates on PopQA and TriviaQA may suggest that the larger model is more responsive to answer-related information, although the two models are indistinguishable on HotpotQA and NQ. The consistency of the Q-Anchored (exact\_question) flip rate across datasets implies that this anchoring strategy is robust and generalizable.

The concept of "prediction flip rate" likely refers to the frequency with which the model changes its predicted answer when presented with different anchoring information. This metric is valuable for understanding the model's sensitivity to context and its ability to revise its predictions based on new evidence. The results highlight the importance of carefully considering the anchoring strategy when evaluating and deploying these models.
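One plausible way to compute such a metric is sketched below in Python. It assumes that a flip is any item whose predicted answer differs between a baseline run and an anchored run, compared by exact string match. The function name `prediction_flip_rate` and the example answers are hypothetical, and the paper behind the chart may define the metric differently (for example, by normalizing answers or restricting it to a subset of items).

```python
from typing import Sequence


def prediction_flip_rate(baseline: Sequence[str], perturbed: Sequence[str]) -> float:
    """Percentage of items whose predicted answer changes between two runs.

    Hypothetical helper: assumes one prediction per item, aligned by index,
    and treats any exact-string mismatch as a flip.
    """
    if len(baseline) != len(perturbed):
        raise ValueError("prediction lists must be aligned item by item")
    if not baseline:
        return 0.0
    flips = sum(b != p for b, p in zip(baseline, perturbed))
    return 100.0 * flips / len(baseline)


baseline = ["Paris", "1969", "Einstein", "Nile"]
perturbed = ["Paris", "1970", "Bohr", "Nile"]
print(prediction_flip_rate(baseline, perturbed))  # 50.0
```

Two of the four hypothetical predictions change, so the rate is 50.0, on the same 0 to 80+ percentage scale as the chart's y-axis.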


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED


## Grouped Bar Chart: Prediction Flip Rate by Dataset and Model

### Overview

The image displays two side-by-side grouped bar charts comparing the "Prediction Flip Rate" across four question-answering datasets for two different language models: Llama-3-8B (left panel) and Llama-3-70B (right panel). The charts analyze how different "anchoring" methods affect the stability of model predictions.

### Components/Axes

* **Chart Type:** Two grouped bar charts (panels).
* **Panel Titles:**
  * Left: `Llama-3-8B`
  * Right: `Llama-3-70B`
* **Y-Axis (Both Panels):**
  * **Label:** `Prediction Flip Rate`
  * **Scale:** Linear, from 0 to 80, with major tick marks at 0, 20, 40, 60, 80.
* **X-Axis (Both Panels):**
  * **Label:** `Dataset`
  * **Categories (from left to right):** `PopQA`, `TriviaQA`, `HotpotQA`, `NQ`.
* **Legend (Bottom Center, spanning both panels):**
  * **Position:** Below the x-axis labels.
  * **Categories & Colors (from left to right):**
    1. `Q-Anchored (exact_question)` - Light reddish-brown (salmon) bar.
    2. `Q-Anchored (random)` - Dark red (burgundy) bar.
    3. `A-Anchored (exact_question)` - Light gray bar.
    4. `A-Anchored (random)` - Dark gray bar.

### Detailed Analysis

The analysis is segmented by model panel. Values are approximate visual estimates from the chart.

**Panel 1: Llama-3-8B**

* **PopQA:**
  * Q-Anchored (exact_question): ~72
  * Q-Anchored (random): ~8
  * A-Anchored (exact_question): ~38
  * A-Anchored (random): ~1
* **TriviaQA:**
  * Q-Anchored (exact_question): ~78
  * Q-Anchored (random): ~12
  * A-Anchored (exact_question): ~34
  * A-Anchored (random): ~4
* **HotpotQA:**
  * Q-Anchored (exact_question): ~70
  * Q-Anchored (random): ~12
  * A-Anchored (exact_question): ~12
  * A-Anchored (random): ~6
* **NQ:**
  * Q-Anchored (exact_question): ~70
  * Q-Anchored (random): ~9
  * A-Anchored (exact_question): ~19
  * A-Anchored (random): ~1

**Panel 2: Llama-3-70B**

* **PopQA:**
  * Q-Anchored (exact_question): ~73
  * Q-Anchored (random): ~7
  * A-Anchored (exact_question): ~31
  * A-Anchored (random): ~1
* **TriviaQA:**
  * Q-Anchored (exact_question): ~78
  * Q-Anchored (random): ~17
  * A-Anchored (exact_question): ~35
  * A-Anchored (random): ~5
* **HotpotQA:**
  * Q-Anchored (exact_question): ~70
  * Q-Anchored (random): ~19
  * A-Anchored (exact_question): ~12
  * A-Anchored (random): ~6
* **NQ:**
  * Q-Anchored (exact_question): ~57
  * Q-Anchored (random): ~15
  * A-Anchored (exact_question): ~22
  * A-Anchored (random): ~6

### Key Observations

1. **Dominant Series:** The `Q-Anchored (exact_question)` bar (light reddish-brown) is consistently the tallest across all datasets and both models, indicating the highest prediction flip rate.

2. **Secondary Series:** The `A-Anchored (exact_question)` bar (light gray) is the second tallest in most groups, but significantly lower than its Q-Anchored counterpart. The exception is `HotpotQA`, where it ties `Q-Anchored (random)` for the 8B model (~12 each) and falls below it for the 70B model (~12 vs ~19).

3. **Low Flip Rates:** The `Q-Anchored (random)` (dark red) and `A-Anchored (random)` (dark gray) bars show low flip rates, always below 20 and usually below 10.

4. **Model Comparison (8B vs. 70B):** The overall pattern is similar between models. However, for the `NQ` dataset, the `Q-Anchored (exact_question)` flip rate appears noticeably lower for the 70B model (~57) than for the 8B model (~70). Conversely, the `Q-Anchored (random)` rate for `NQ` is higher in the 70B model (~15 vs ~9).

5. **Dataset Variation:** The `TriviaQA` dataset shows the highest `Q-Anchored (exact_question)` flip rate in both models (~78). The `HotpotQA` dataset shows the largest gap between the `Q-Anchored (exact_question)` and `A-Anchored (exact_question)` methods (~58 points in both models), because its `A-Anchored (exact_question)` rate is the lowest of the four datasets (~12).
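The dataset comparison in item 5 can be reproduced from the estimates in this section. This minimal Python sketch assumes the approximate `Q-Anchored (exact_question)` and `A-Anchored (exact_question)` values listed under Detailed Analysis (visual estimates only) and computes the gap between them per dataset; `ESTIMATES` and `q_minus_a_gaps` are illustrative names.

```python
# Approximate values from the Detailed Analysis above (visual estimates, not source data).
# Tuple order: (Q-Anchored exact_question, A-Anchored exact_question)
ESTIMATES = {
    "Llama-3-8B": {"PopQA": (72, 38), "TriviaQA": (78, 34), "HotpotQA": (70, 12), "NQ": (70, 19)},
    "Llama-3-70B": {"PopQA": (73, 31), "TriviaQA": (78, 35), "HotpotQA": (70, 12), "NQ": (57, 22)},
}


def q_minus_a_gaps(by_dataset):
    """Q-Anchored minus A-Anchored (exact_question) flip rate, in points, per dataset."""
    return {ds: q - a for ds, (q, a) in by_dataset.items()}


for model, by_dataset in ESTIMATES.items():
    gaps = q_minus_a_gaps(by_dataset)
    largest = max(gaps, key=gaps.get)
    smallest = min(gaps, key=gaps.get)
    print(f"{model}: {gaps} | largest gap: {largest} | smallest gap: {smallest}")
```

With these estimates, HotpotQA has the largest gap (58 points) for both models, while the smallest gap falls on PopQA for the 8B model (34 points) and on NQ for the 70B model (35 points).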

### Interpretation

This chart investigates the stability of language model answers when the input prompt is slightly altered ("anchored"). A high "Prediction Flip Rate" means the model frequently changes its answer.

* **Core Finding:** Anchoring a prompt to the **exact question** (`Q-Anchored (exact_question)`) makes model predictions highly unstable, causing them to "flip" their answers over 70% of the time in most cases. This suggests models are very sensitive to minor rephrasings of the same question.

* **Anchoring to Answers:** Anchoring to the exact answer (`A-Anchored (exact_question)`) also causes instability, but to a much lesser degree (~12-38%). This implies that providing the answer in the prompt still perturbs the model, but less than rephrasing the question.

* **Random Anchoring:** Using a random question or answer for anchoring (`random` variants) results in minimal flip rates. This is a crucial control, showing that the high flip rates are not due to randomness in the anchoring process itself, but specifically due to using the *exact* question or answer from the evaluation set.

* **Model Scale:** The larger Llama-3-70B model does not show a universal improvement in stability (lower flip rates). Its behavior is dataset-dependent: it has higher `Q-Anchored (random)` flip rates on `TriviaQA`, `HotpotQA`, and `NQ` (e.g., ~19 vs ~12 on `HotpotQA`), but a lower `Q-Anchored (exact_question)` flip rate on `NQ` (~57 vs ~70). This indicates that simply increasing model size does not automatically resolve sensitivity to prompt phrasing.

* **Practical Implication:** The data strongly suggests that evaluating models with multiple semantically equivalent but differently phrased questions (a common practice) may lead to highly variable results, undermining the reliability of single-point accuracy metrics. The model's "answer" is not a fixed property but is highly contingent on the precise formulation of the query.


EXPERT: nemotron-free VERSION 2

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Bar Chart: Prediction Flip Rate Comparison for Llama-3-8B and Llama-3-70B Models

### Overview

The image compares prediction flip rates across four question-answering datasets (PopQA, TriviaQA, HotpotQA, NQ) for two Llama-3 models (8B and 70B parameters). Four anchoring strategies are visualized: Q-Anchored (exact_question), A-Anchored (exact_question), Q-Anchored (random), and A-Anchored (random). The y-axis represents prediction flip rate (0-80%), while the x-axis categorizes datasets.

### Components/Axes

- **X-Axis (Datasets)**: PopQA, TriviaQA, HotpotQA, NQ (left to right)
- **Y-Axis (Prediction Flip Rate)**: 0-80% in 20% increments
- **Legend (Bottom Center)**:
  - Red: Q-Anchored (exact_question)
  - Gray: A-Anchored (exact_question)
  - Dark Red: Q-Anchored (random)
  - Dark Gray: A-Anchored (random)

### Detailed Analysis

#### Llama-3-8B (Left Chart)

- **PopQA**:
  - Q-Anchored (exact): ~75%
  - A-Anchored (exact): ~38%
  - Q-Anchored (random): ~8%
  - A-Anchored (random): ~2%
- **TriviaQA**:
  - Q-Anchored (exact): ~78%
  - A-Anchored (exact): ~35%
  - Q-Anchored (random): ~10%
  - A-Anchored (random): ~3%
- **HotpotQA**:
  - Q-Anchored (exact): ~70%
  - A-Anchored (exact): ~12%
  - Q-Anchored (random): ~9%
  - A-Anchored (random): ~4%
- **NQ**:
  - Q-Anchored (exact): ~72%
  - A-Anchored (exact): ~20%
  - Q-Anchored (random): ~5%
  - A-Anchored (random): ~1%

#### Llama-3-70B (Right Chart)

- **PopQA**:
  - Q-Anchored (exact): ~75%
  - A-Anchored (exact): ~30%
  - Q-Anchored (random): ~6%
  - A-Anchored (random): ~1%
- **TriviaQA**:
  - Q-Anchored (exact): ~78%
  - A-Anchored (exact): ~35%
  - Q-Anchored (random): ~18%
  - A-Anchored (random): ~4%
- **HotpotQA**:
  - Q-Anchored (exact): ~72%
  - A-Anchored (exact): ~10%
  - Q-Anchored (random): ~19%
  - A-Anchored (random): ~5%
- **NQ**:
  - Q-Anchored (exact): ~58% (↓ 14 percentage points vs 8B)
  - A-Anchored (exact): ~22%
  - Q-Anchored (random): ~15%
  - A-Anchored (random): ~6%

### Key Observations

1. **Q-Anchored (exact_question)** consistently shows the highest flip rates across all datasets and models, i.e., it changes the models' predictions more often than any other anchoring strategy.

2. **Model Size Impact**: In Q-Anchored (exact) flip rates, Llama-3-70B matches Llama-3-8B on PopQA (~75%) and TriviaQA (~78%) and is slightly higher on HotpotQA (~72% vs ~70%); the exception is NQ, where 70B is about 14 percentage points lower.

3. **Random Anchoring**: Both Q and A random anchoring methods show significantly lower flip rates (<20%), indicating poor effectiveness.

4. **A-Anchored (exact_question)** exceeds both random methods in every case except HotpotQA on Llama-3-70B (~10% vs ~19% for Q-Anchored (random)), but lags behind Q-Anchored (exact) by roughly 36-62 percentage points.

5. **NQ Dataset Anomaly**: Llama-3-70B shows a notable drop of about 14 percentage points in Q-Anchored (exact) flip rate compared to 8B, contrary to expectations for larger models.
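Because the y-axis is already a percentage, the NQ change can be stated either as an absolute difference in percentage points or as a relative change, and the two are not the same. The short Python sketch below uses the approximate ~72% (8B) and ~58% (70B) readings from this section; the helper names `point_change` and `relative_change` are illustrative.

```python
def point_change(old: float, new: float) -> float:
    """Absolute change, in percentage points, between two rates given in percent."""
    return new - old


def relative_change(old: float, new: float) -> float:
    """Change relative to the old rate, expressed in percent."""
    return 100.0 * (new - old) / old


# NQ, Q-Anchored (exact): ~72% for Llama-3-8B, ~58% for Llama-3-70B (visual estimates).
old, new = 72.0, 58.0
print(f"{point_change(old, new):+.0f} percentage points")  # -14 percentage points
print(f"{relative_change(old, new):+.1f}% relative")  # -19.4% relative
```

A 14-point drop from ~72% therefore corresponds to roughly a 19% relative decrease.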

### Interpretation

The data demonstrates that:

- **Anchoring Strategy Matters More Than Model Size**: Q-Anchored (exact_question) outperforms all other methods regardless of model size, suggesting it captures critical contextual relationships.

- **Diminishing Returns for Larger Models**: The 70B model's plateau or decline in some cases (e.g., NQ) could reflect overfitting, architectural limitations, or differences in how the larger model handles specific datasets; the chart alone cannot distinguish among these explanations.

- **Random Anchoring Ineffectiveness**: Both Q and A random methods show minimal utility, highlighting the importance of structured anchoring for prediction reliability.

- **Dataset-Specific Behavior**: NQ's anomalous drop in 70B suggests dataset-model compatibility issues, warranting further investigation into dataset characteristics and model training dynamics.

This analysis underscores the critical role of precise anchoring strategies in question-answering systems, with implications for optimizing model architecture and training protocols.
