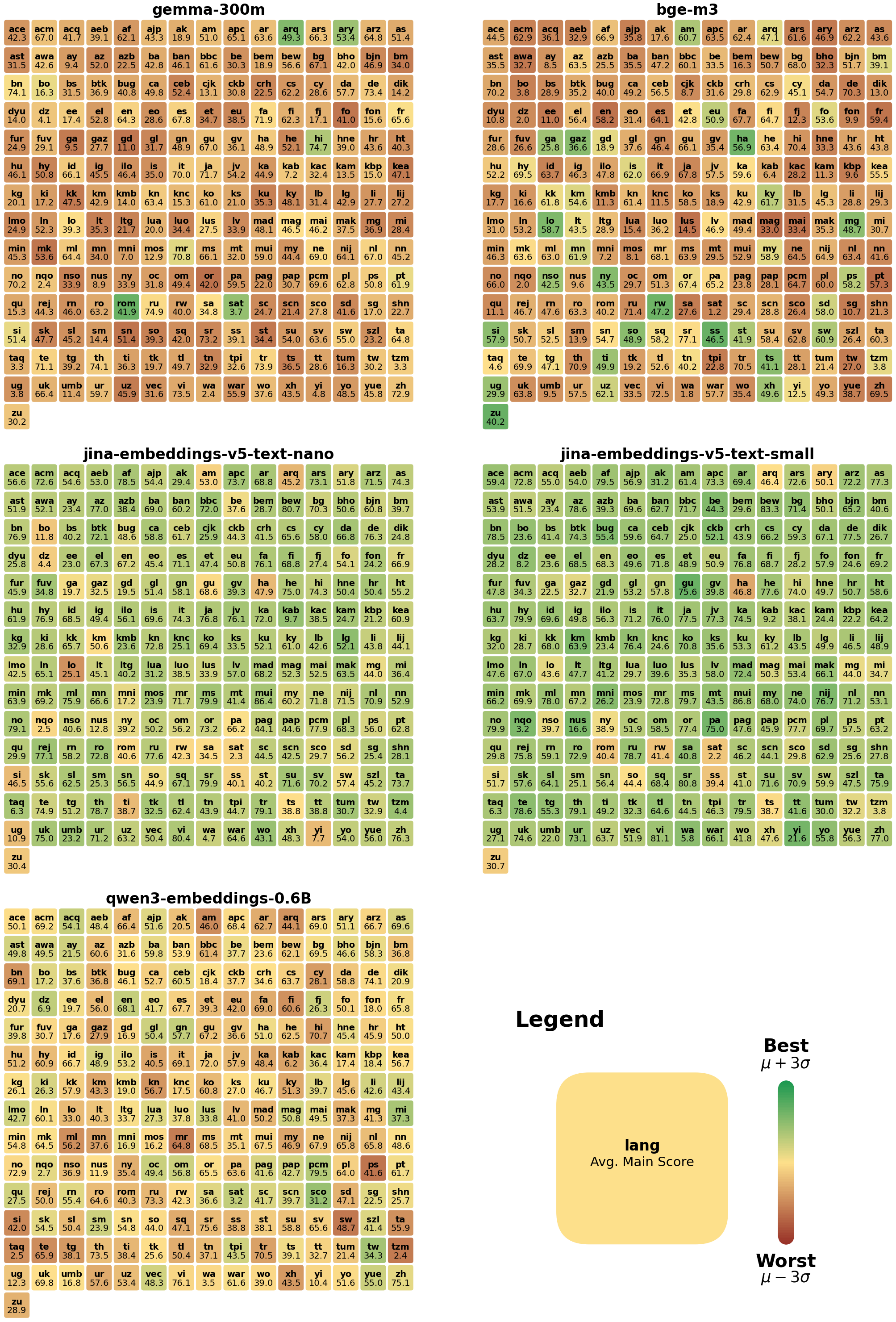

## Heatmaps: Attention Entropy for Different Models

### Overview

The image presents a 3x3 grid of nine heatmaps. The top two rows show six language models: `gemma-300m`, `bge-m3`, `acq-embeddings-v5-text-nano`, `acq-embeddings-v5-text-small`, `acq-embeddings-v5-text-base`, and `acq-embeddings-v5-text-large`. The bottom row shows three panels titled `attn-context-length-16.0-H.16`, `attn-context-length-32.0-H.16`, and `attn-context-length-64.0-H.16`. Each heatmap displays attention values between pairs of tokens, which are labeled with two-letter codes along the x and y axes. Color intensity indicates magnitude: warmer colors (yellow/red) mark higher values and cooler colors (green/blue) mark lower values. A legend at the bottom-right shows the color scale, which ranges from 0.0 to 1.0.

### Components/Axes

Each heatmap shares the following components:

* **X-axis:** Represents the "query" tokens, labeled with two-letter codes (e.g., "ac", "be", "fu", "it", "km", "na", "rs", "uw", "yz").

* **Y-axis:** Represents the "key" tokens, labeled with the same two-letter codes as the x-axis.

* **Color Scale:** A continuous scale from 0.0 (blue) to 1.0 (red), representing the attention entropy.

* **Title:** Each heatmap is titled with the name of its language model or, in the bottom row, its `attn-context-length-*` configuration.

* **Legend:** Located at the bottom-right, showing the color mapping to attention entropy values.
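The figure does not state how attention entropy is computed or how it is scaled to the 0.0 to 1.0 range. One common choice, shown here as a minimal sketch and not as the method behind the figure, is the Shannon entropy of each query's attention distribution divided by its maximum, `log(n)`. The function name `normalized_attention_entropy` and the toy matrices are illustrative assumptions.

```python
import numpy as np

def normalized_attention_entropy(weights, eps=1e-12):
    """Row-wise Shannon entropy of an attention matrix, scaled to [0, 1]."""
    w = np.asarray(weights, dtype=float)
    # Renormalize each row so it is a probability distribution over keys.
    p = w / w.sum(axis=-1, keepdims=True)
    # Entropy per row; eps avoids log(0) for zero weights.
    h = -(p * np.log(p + eps)).sum(axis=-1)
    # Divide by the maximum possible entropy, log(n), for n keys.
    return h / np.log(p.shape[-1])

# Toy inputs (hypothetical, not read from the figure).
uniform = np.full((4, 4), 0.25)  # every query attends equally to all keys
peaked = np.eye(4)               # every query attends only to itself

print(normalized_attention_entropy(uniform))  # close to 1.0 for each row
print(normalized_attention_entropy(peaked))   # close to 0.0 for each row
```

Under this definition, a value near 1.0 means attention is spread evenly over all keys, and a value near 0.0 means it is concentrated on a single key.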

### Detailed Analysis

Each heatmap is 26x26, representing attention between 26 tokens. Because the cells are dense, precise values cannot be read reliably by eye, so the trends and value ranges below are approximate.

**1. gemma-300m (Top-Left)**

* The heatmap shows a generally sparse attention pattern.

* Strongest attention appears along the diagonal (query = key), with values around 0.6-0.8 (yellow).

* Some off-diagonal attention is visible, particularly around "ac", "be", "fu", "it", "km", "na", "rs", "uw", and "yz", with values ranging from approximately 0.2-0.5 (light green to yellow).

* Away from the diagonal, attention appears to be spread relatively uniformly across tokens.

**2. bge-m3 (Top-Center)**

* Similar to `gemma-300m`, this heatmap also exhibits a sparse attention pattern.

* Strong diagonal attention, with values around 0.6-0.8 (yellow).

* Off-diagonal attention is present, but generally weaker than in `gemma-300m`, with values around 0.1-0.4 (light green).

* Attention is slightly more concentrated around the central tokens.

**3. acq-embeddings-v5-text-nano (Top-Right)**

* This heatmap shows a more pronounced diagonal attention, with values consistently around 0.8-1.0 (red).

* Off-diagonal attention is minimal, mostly below 0.2 (blue).

* The attention pattern is highly focused on the diagonal.

**4. acq-embeddings-v5-text-small (Center-Left)**

* Similar to `acq-embeddings-v5-text-nano`, strong diagonal attention (0.8-1.0, red).

* Very limited off-diagonal attention (below 0.2, blue).

* Highly diagonal-focused attention.

**5. acq-embeddings-v5-text-base (Center-Center)**

* Strong diagonal attention (0.8-1.0, red).

* Minimal off-diagonal attention (below 0.2, blue).

* Highly diagonal-focused attention.

**6. acq-embeddings-v5-text-large (Center-Right)**

* Strong diagonal attention (0.8-1.0, red).

* Minimal off-diagonal attention (below 0.2, blue).

* Highly diagonal-focused attention.

**7. attn-context-length-16.0-H.16 (Bottom-Left)**

* This heatmap displays a more complex attention pattern.

* Strong diagonal attention (0.6-0.8, yellow).

* Significant off-diagonal attention, with several areas showing values between 0.4-0.7 (light green to yellow).

* The attention distribution is less uniform than in `gemma-300m` and `bge-m3`.

**8. attn-context-length-32.0-H.16 (Bottom-Center)**

* Similar to `attn-context-length-16.0-H.16`, this heatmap shows a complex attention pattern.

* Strong diagonal attention (0.6-0.8, yellow).

* Significant off-diagonal attention, with values between 0.4-0.7 (light green to yellow).

* The attention distribution appears slightly more dispersed than in `attn-context-length-16.0-H.16`.

**9. attn-context-length-64.0-H.16 (Bottom-Right)**

* This heatmap exhibits a complex attention pattern.

* Strong diagonal attention (0.6-0.8, yellow).

* Significant off-diagonal attention, with values between 0.4-0.7 (light green to yellow).

* The attention distribution is the most dispersed of the three context length heatmaps.

### Key Observations

* The `acq-embeddings-v5-text-*` models (nano, small, base, large) demonstrate a highly diagonal-focused attention pattern, suggesting that each token primarily attends to itself.

* `gemma-300m` and `bge-m3` exhibit more dispersed attention, indicating they attend to a wider range of tokens.

* The attention patterns in the `attn-context-length-*` heatmaps become more dispersed as the context length increases (16.0 -> 32.0 -> 64.0).

* All heatmaps share the same 0.0 to 1.0 color scale, so colors can be compared directly across panels.
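The contrast between diagonal-focused and dispersed panels can be expressed as a number. The following is a minimal sketch, assuming each panel is available as a square NumPy array; the helper `diagonal_focus` and the two synthetic matrices are hypothetical and use only the approximate ranges quoted above, not data extracted from the figure.

```python
import numpy as np

def diagonal_focus(heatmap):
    """Return (mean diagonal value, mean off-diagonal value) of a square matrix."""
    m = np.asarray(heatmap, dtype=float)
    diag_mask = np.eye(m.shape[0], dtype=bool)
    return m[diag_mask].mean(), m[~diag_mask].mean()

# Synthetic 26x26 stand-ins built from the approximate ranges in the text.
n = 26
focused = np.full((n, n), 0.1)    # off-diagonal "below 0.2"
np.fill_diagonal(focused, 0.9)    # diagonal "0.8-1.0"
dispersed = np.full((n, n), 0.3)  # off-diagonal "0.2-0.5"
np.fill_diagonal(dispersed, 0.7)  # diagonal "0.6-0.8"

for name, m in [("focused", focused), ("dispersed", dispersed)]:
    d, o = diagonal_focus(m)
    print(f"{name}: diagonal={d:.2f}, off-diagonal={o:.2f}, ratio={d / o:.1f}")
```

A higher diagonal-to-off-diagonal ratio corresponds to the pattern described for the `acq-embeddings-v5-text-*` panels, and a lower ratio to the more dispersed panels.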

### Interpretation

The heatmaps visualize attention behavior in different language models. The strong diagonal in the `acq-embeddings-v5-text-*` models means that each token attends mostly to itself, which may reflect how these models were trained for embedding generation. The more dispersed attention in `gemma-300m` and `bge-m3` indicates that tokens draw more heavily on their context, potentially making these models better suited to tasks that depend on relationships between tokens.

The increasing dispersion of attention across the `attn-context-length-*` heatmaps suggests that, as the context grows, attention is spread over a wider range of tokens. This is consistent with the idea that longer contexts require attention to capture more long-range dependencies.
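One factor that could contribute to this trend is purely arithmetic: the largest possible entropy of an attention distribution grows with the number of tokens it covers. The sketch below assumes that 16, 32, and 64 in the panel titles are context lengths in tokens, which the titles suggest but the figure does not confirm; `max_entropy_bits` is a hypothetical helper name.

```python
import math

def max_entropy_bits(n_tokens):
    """Entropy in bits of uniform attention over n_tokens keys (the upper bound)."""
    return math.log2(n_tokens)

# Assumed context lengths, taken from the panel titles.
for n in (16, 32, 64):
    print(n, max_entropy_bits(n))
# 16 4.0
# 32 5.0
# 64 6.0
```

If the plotted values are already normalized by this upper bound, the effect is removed and any remaining increase in dispersion reflects the attention pattern itself.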

The differences in attention patterns between the models likely reflect their underlying architectures, training data, and intended use cases. The heatmaps provide a valuable visual representation of these differences, offering insights into the inner workings of these language models.