## Diagram: Three-Round Interaction Prompt Template for Self-Refine

### Overview

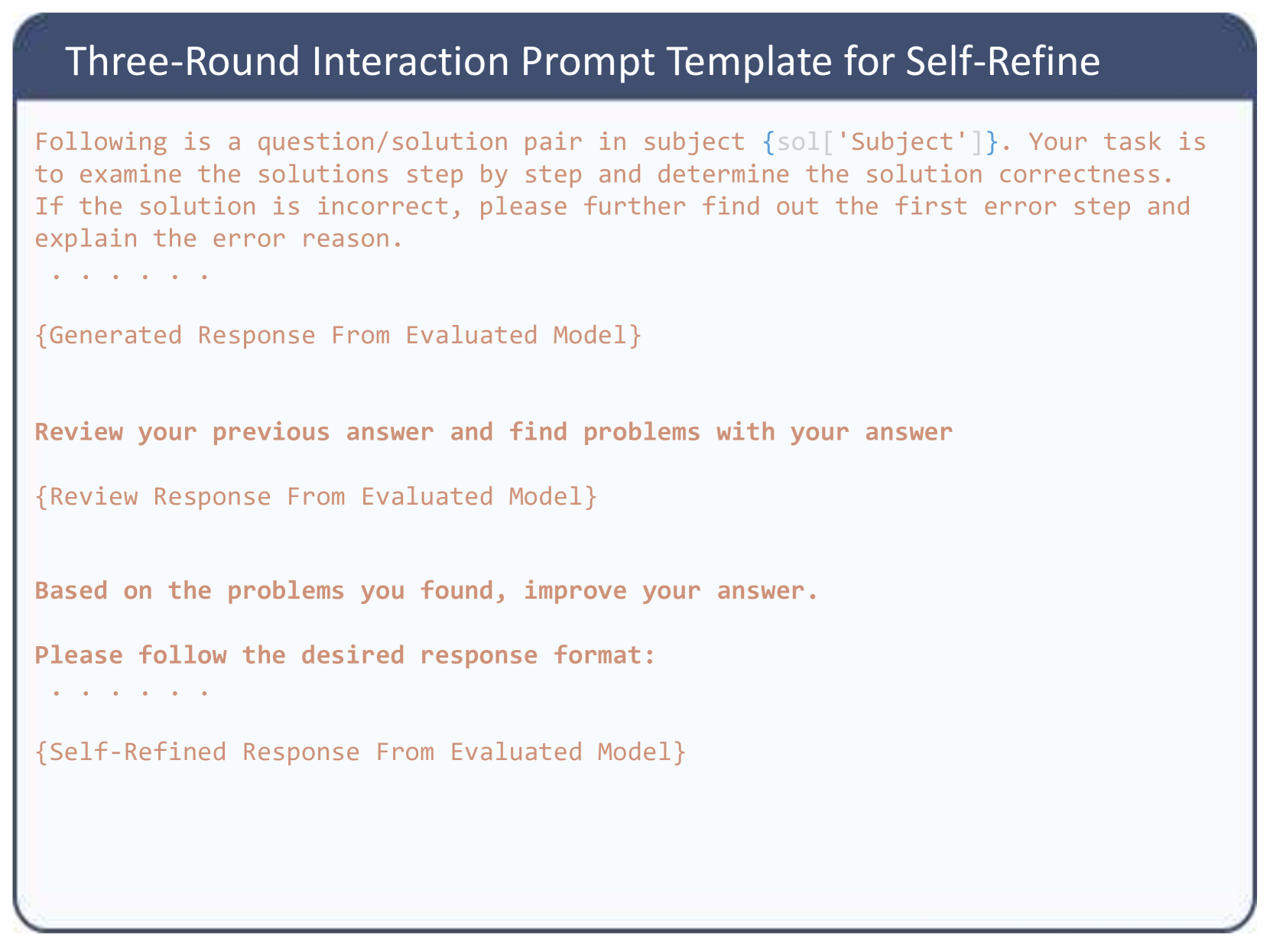

The image displays a structured text template titled "Three-Round Interaction Prompt Template for Self-Refine." It outlines a three-stage process for evaluating and improving a generated response from an AI model. The template is presented as a block of text with placeholders (indicated by curly braces `{}`) for dynamic content. The text is primarily in English, with no other languages present.

### Components/Axes

The template is a single, continuous block of text contained within a bordered box. It has a distinct header and a body structured into three sequential interaction rounds.

**Header (Top Center):**

* **Title:** "Three-Round Interaction Prompt Template for Self-Refine"

**Body (Left-Aligned Text):**

The body consists of instructional text and placeholders. The placeholders are visually distinct, rendered in an orange-brown color (`#d28a6e` approximate), while the instructional text is in a darker gray.

**Text Transcription (Exact):**

```

Following is a question/solution pair in subject {sol['Subject']}. Your task is to examine the solutions step by step and determine the solution correctness. If the solution is incorrect, please further find out the first error step and explain the error reason.

. . . . . .

{Generated Response From Evaluated Model}

Review your previous answer and find problems with your answer

{Review Response From Evaluated Model}

Based on the problems you found, improve your answer.

Please follow the desired response format:

. . . . . .

{Self-Refined Response From Evaluated Model}

```

### Detailed Analysis

The template defines a clear, three-round interaction flow:

1. **Round 1 - Initial Evaluation:**

* **Prompt:** Instructs the model to examine a solution pair for a given subject (`{sol['Subject']}`), check its correctness step-by-step, and identify the first error with an explanation if incorrect.

* **Expected Output:** `{Generated Response From Evaluated Model}`. This is the model's initial analysis.

2. **Round 2 - Self-Review:**

* **Prompt:** Asks the model to review its own previous answer (from Round 1) and identify problems within it.

* **Expected Output:** `{Review Response From Evaluated Model}`. This is the model's critical self-assessment.

3. **Round 3 - Refinement:**

* **Prompt:** Instructs the model to improve its original answer based on the problems found during the self-review. It specifies adherence to a "desired response format."

* **Expected Output:** `{Self-Refined Response From Evaluated Model}`. This is the final, improved output.

The ellipses (`. . . . . .`) appear to be visual separators or placeholders for additional, unspecified content or formatting.

### Key Observations

* **Iterative Structure:** The template enforces a strict iterative loop: Generate -> Critique -> Refine.

* **Self-Correction Focus:** The core mechanism is having the model critique and improve its own output, a technique known as "self-refinement" or "self-critique."

* **Placeholder Design:** The use of `{key}` syntax (e.g., `{sol['Subject']}`) suggests integration with a data structure (likely a Python dictionary) where `sol` is a variable containing problem data.

* **Visual Hierarchy:** The title is prominent in a dark blue header bar. The instructional text is uniform, while the placeholders are highlighted in a contrasting color to denote variable input/output zones.

### Interpretation

This image is not a data chart but a **procedural diagram for an AI interaction protocol**. It visually documents a method to enhance the reliability and accuracy of AI-generated solutions through structured self-evaluation.

* **What it demonstrates:** The template operationalizes a "chain of thought" or "reasoning trace" approach combined with self-criticism. It breaks down the complex task of solution verification into manageable, sequential steps, forcing the model to articulate its reasoning, find flaws, and then correct them.

* **How elements relate:** The three rounds are causally linked. The output of Round 1 becomes the subject of critique in Round 2, and the findings from Round 2 directly inform the improvements made in Round 3. The `{sol['Subject']}` placeholder is the constant input that grounds all three rounds in the same original problem.

* **Purpose and Utility:** This template is likely used in research or development settings to:

1. **Evaluate model capabilities:** Test a model's ability to perform accurate self-assessment.

2. **Improve output quality:** Generate more correct and refined final answers than a single-pass generation.

3. **Create training data:** The triplets (initial response, critique, refined response) can be used to fine-tune models to be better self-refiners.

* **Notable Design Choice:** The explicit instruction to "find out the **first** error step" is significant. It prevents the model from overwhelming the user with a list of all possible errors and instead focuses the correction process on the most critical, foundational mistake, which is often more efficient for learning and debugging.